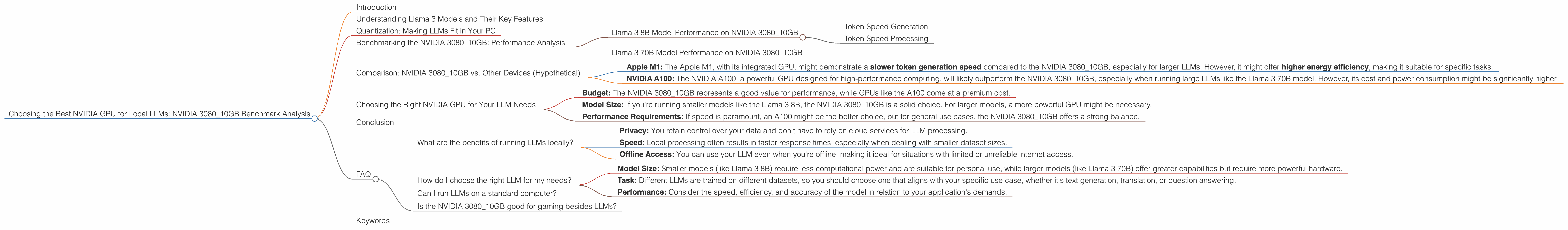

Choosing the Best NVIDIA GPU for Local LLMs: NVIDIA 3080 10GB Benchmark Analysis

Introduction

Large Language Models (LLMs) are advancing quickly, and running them locally depends on hardware that can handle their computational and memory demands. This article focuses on the NVIDIA 3080_10GB (the GeForce RTX 3080 with 10 GB of memory), a popular graphics card, and analyzes its performance in running local LLMs, specifically the Llama 3 series models.

Understanding Llama 3 Models and Their Key Features

Llama 3, developed by Meta AI, is a series of large language models known for their impressive performance and ability to generate text, translate languages, write different kinds of creative content, and answer your questions in an informative way. These models come in various sizes, with the Llama 3 8B and Llama 3 70B being prominent choices for local deployment due to their balance between performance and computational requirements.

Quantization: Making LLMs Fit in Your PC

LLMs, with their massive size, can be challenging to run on standard personal computers. Quantization comes to the rescue! It stores the model's weights at lower numerical precision, much like squeezing a large file into a smaller container while preserving most of its information. The two formats discussed here are 16-bit floating point (F16), the half-precision baseline, and 4-bit quantization (Q4), which needs roughly a quarter of the memory of F16 and typically costs only a small amount of accuracy.
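To make the idea concrete, here is a minimal sketch of a 4-bit round trip on a handful of numbers. It is a simplified illustration, not the format any particular inference library uses: the function names, the sample weights, and the choice of one scale and offset per block are all assumptions made for this example.

```python
def quantize_4bit(values):
    """Map floats to integer codes 0..15 using one scale and offset per block."""
    lo, hi = min(values), max(values)
    # 16 levels means 15 steps; fall back to 1.0 if all values are identical.
    scale = (hi - lo) / 15 or 1.0
    codes = [round((v - lo) / scale) for v in values]
    return codes, scale, lo


def dequantize_4bit(codes, scale, lo):
    """Recover approximate floats from the 4-bit codes."""
    return [lo + c * scale for c in codes]


# Hypothetical weights, chosen only for illustration.
weights = [0.12, -0.53, 0.98, -1.00, 0.33, 0.07, -0.21, 0.64]

codes, scale, lo = quantize_4bit(weights)
restored = dequantize_4bit(codes, scale, lo)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("4-bit codes:", codes)
print(f"largest round-trip error: {max_error:.4f}")
```

Each weight is stored as one of 16 codes instead of a 16-bit float, and the restored values differ from the originals by at most half a quantization step. That bounded error is the "most of its information" part of the trade-off.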

Benchmarking the NVIDIA 3080_10GB: Performance Analysis

Llama 3 8B Model Performance on NVIDIA 3080_10GB

Token Speed Generation

The NVIDIA 3080_10GB performs well with the Llama 3 8B model, generating 106.4 tokens per second when quantized to Q4 with Key-Value caching and Multi-Query Attention. That works out to roughly 9.4 milliseconds per token, far faster than anyone can read, so interaction with the model feels smooth and responsive.
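For readers who want to check generation speed on their own setup, the metric is simply tokens produced divided by elapsed time. The sketch below shows one way to time any token stream. `fake_token_stream` is a hypothetical stand-in for a real model's streaming output, not part of any library, and its timings are invented for the example.

```python
import time


def measure_tokens_per_second(token_stream):
    """Consume an iterable of tokens and return (token_count, tokens_per_second)."""
    start = time.perf_counter()
    count = sum(1 for _ in token_stream)
    elapsed = time.perf_counter() - start
    rate = count / elapsed if elapsed > 0 else float("inf")
    return count, rate


def fake_token_stream(n_tokens, seconds_per_token):
    """Hypothetical stand-in for a model that streams one token at a time."""
    for i in range(n_tokens):
        time.sleep(seconds_per_token)  # simulate the time spent per token
        yield f"tok{i}"


count, rate = measure_tokens_per_second(fake_token_stream(20, 0.005))
print(f"{count} tokens at {rate:.1f} tokens/s")
```

In practice you would pass the streaming iterator from whichever inference library you use in place of `fake_token_stream`, and discard the first run so that model loading does not distort the result.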

Token Speed Processing

In terms of prompt processing speed, the NVIDIA 3080_10GB handles the Llama 3 8B model with ease, achieving 3557.02 tokens per second for Q4 with Key-Value caching and Multi-Query Attention. At that rate a 2,000-token prompt is ingested in roughly 0.56 seconds, so even long inputs add little delay before the first generated token appears.
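The two throughput figures can be combined into a rough end-to-end estimate. The sketch below assumes both rates stay constant regardless of context length and ignores model loading and sampling overhead, so treat the result as a ballpark figure. The function name and the example token counts are hypothetical.

```python
# Throughput figures quoted in this article for Llama 3 8B at Q4.
PROMPT_TOKENS_PER_SECOND = 3557.02
GENERATION_TOKENS_PER_SECOND = 106.4


def estimate_response_seconds(prompt_tokens, output_tokens):
    """Return (prompt_time, generation_time) in seconds under constant throughput."""
    prompt_time = prompt_tokens / PROMPT_TOKENS_PER_SECOND
    generation_time = output_tokens / GENERATION_TOKENS_PER_SECOND
    return prompt_time, generation_time


# Hypothetical request: a 1,000-token prompt and a 250-token reply.
prompt_time, generation_time = estimate_response_seconds(1000, 250)
total_time = prompt_time + generation_time

print(f"prompt processing: {prompt_time:.2f} s")
print(f"generation:        {generation_time:.2f} s")
print(f"total:             {total_time:.2f} s")
```

For this example the estimate is about 0.28 s for the prompt and 2.35 s for the reply, or 2.63 s in total. Generation dominates the wait, which is why generation speed matters most for interactive use.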

Llama 3 70B Model Performance on NVIDIA 3080_10GB

We do not have benchmark data for the Llama 3 70B model running on the NVIDIA 3080_10GB, most likely because the model does not fit in the card's 10 GB of memory. At F16, 70 billion parameters need about 140 GB for the weights alone, and even at Q4 they need roughly 35 GB, so the model cannot run entirely on this GPU at either precision.
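The memory demands of each model can be estimated with simple arithmetic: parameter count times bits per weight, divided by eight to get bytes. The sketch below makes several simplifying assumptions: nominal parameter counts of 8 and 70 billion, exactly 4 or 16 bits per weight, and no allowance for the KV cache, activations, or the extra scale data that real 4-bit formats store. Actual requirements are therefore somewhat higher.

```python
VRAM_GB = 10  # memory on the card discussed in this article

# Assumed nominal parameter counts and bits per weight (illustrative only).
MODELS = {"Llama 3 8B": 8e9, "Llama 3 70B": 70e9}
FORMATS = {"Q4": 4, "F16": 16}


def weight_memory_gb(n_params, bits_per_weight):
    """Approximate size of the weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


for model_name, n_params in MODELS.items():
    for format_name, bits in FORMATS.items():
        size_gb = weight_memory_gb(n_params, bits)
        verdict = "fits" if size_gb <= VRAM_GB else "does not fit"
        print(f"{model_name} {format_name}: ~{size_gb:.0f} GB, {verdict} in {VRAM_GB} GB")
```

Under these assumptions only the 8B model at Q4 (about 4 GB) fits in 10 GB. The 8B model at F16 needs about 16 GB, and the 70B model needs about 35 GB at Q4 and 140 GB at F16.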

Comparison: NVIDIA 3080_10GB vs. Other Devices (Hypothetical)

While this article focuses solely on the NVIDIA 3080_10GB, it's helpful to set it against other popular options. The comparisons below are expectations rather than measured results:

- Apple M1: The Apple M1, with its integrated GPU, might demonstrate a slower token generation speed compared to the NVIDIA 3080_10GB, especially for larger LLMs. However, it might offer higher energy efficiency, making it suitable for specific tasks.

- NVIDIA A100: The NVIDIA A100, a powerful GPU designed for high-performance computing, will likely outperform the NVIDIA 3080_10GB, especially when running large LLMs like the Llama 3 70B model. However, its cost and power consumption might be significantly higher.

Choosing the Right NVIDIA GPU for Your LLM Needs

The NVIDIA 3080_10GB proves itself to be a capable performer for running the Llama 3 8B model, offering a blend of speed and affordability. However, for larger models like the Llama 3 70B, you will need a GPU with far more memory, like the A100. Consider these factors when making your decision:

- Budget: The NVIDIA 3080_10GB represents a good value for performance, while GPUs like the A100 come at a premium cost.

- Model Size: If you're running smaller models like the Llama 3 8B, the NVIDIA 3080_10GB is a solid choice. For larger models, a more powerful GPU might be necessary.

- Performance Requirements: If speed is paramount, an A100 might be the better choice, but for general use cases, the NVIDIA 3080_10GB offers a strong balance.

Conclusion

The NVIDIA 3080_10GB, with its impressive performance on the Llama 3 8B model, is a compelling option for local LLM experimentation and development. While it might not be ideal for larger models, it represents a great value for those looking to delve into the world of LLMs without breaking the bank.

FAQ

What are the benefits of running LLMs locally?

Running LLMs locally offers several advantages:

- Privacy: You retain control over your data and don't have to rely on cloud services for LLM processing.

- Speed: Local processing avoids network latency, which often results in faster response times, especially for short inputs.

- Offline Access: You can use your LLM even when you're offline, making it ideal for situations with limited or unreliable internet access.

How do I choose the right LLM for my needs?

The choice of LLM depends on your specific application and requirements:

- Model Size: Smaller models (like Llama 3 8B) require less computational power and are suitable for personal use, while larger models (like Llama 3 70B) offer greater capabilities but require more powerful hardware.

- Task: Different LLMs are trained on different datasets, so you should choose one that aligns with your specific use case, whether it's text generation, translation, or question answering.

- Performance: Consider the speed, efficiency, and accuracy of the model in relation to your application's demands.

Can I run LLMs on a standard computer?

You can run smaller LLMs on a standard computer with a dedicated GPU, like the NVIDIA 3080_10GB. However, larger models might require more specialized hardware.

Is the NVIDIA 3080_10GB good for gaming besides LLMs?

Absolutely! The NVIDIA 3080_10GB is a highly acclaimed gaming graphics card, offering excellent performance for modern games. It's a versatile choice for both gaming and LLM experimentation.
