Can You Do AI Development on an Apple M3?

Introduction

The allure of local AI development is undeniable. It offers the promise of speed, control, and privacy, but the reality can be a bit more complicated, especially when it comes to the hardware requirements for running these models. The Apple M3 chip, known for its impressive performance, has become a popular choice for developers and tech enthusiasts alike. But can this powerful chip handle the demands of AI development, specifically for large language models (LLMs)?

In this article, we'll take a deep dive into how the Apple M3 chip performs when running Llama 2, a popular LLM. We'll use benchmark data to give a clear picture of what the M3 can achieve and where it might fall short.

So buckle up, grab your coffee, and let's explore the world of AI on the M3!

Apple M3: A Deep Dive

The Apple M3 is a powerful, energy-efficient chip that powers the latest MacBooks and other Apple devices. It features a custom Neural Engine optimized for AI workloads, making it a potentially ideal candidate for running LLMs. But the key is understanding the specific performance metrics, which is what we'll focus on.

Llama 2 on Apple M3: Breaking Down Performance

Let's dive into the core of our investigation: how does the Apple M3 perform with Llama 2, a popular open-source LLM?

Note: We'll use the Llama 2 7B model for this analysis. We don't have data for other models or sizes.

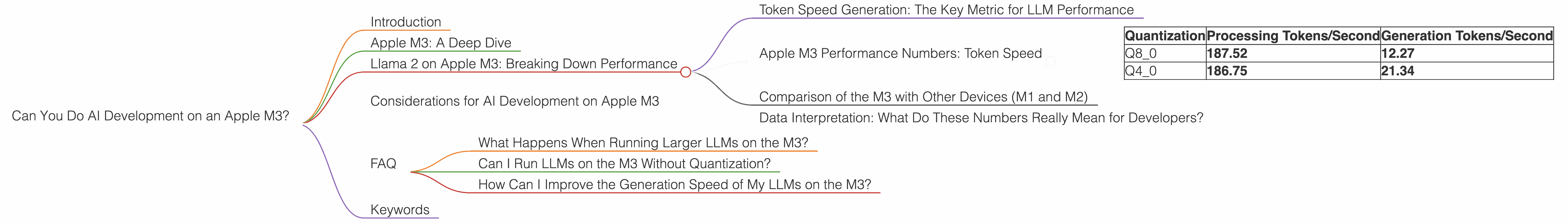

Token Speed Generation: The Key Metric for LLM Performance

When it comes to LLMs, the primary metric we care about is tokens/second. This measures how quickly the model can process and generate text, influencing the fluidity and responsiveness of the AI.
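As a rough illustration of how a tokens/second figure is obtained, the sketch below times a token stream and divides the token count by the elapsed time. The `fake_model` generator is a hypothetical stand-in for a real model and is not how the numbers in this article were measured.

```python
import time
from typing import Callable, Iterable


def tokens_per_second(generate: Callable[[], Iterable[str]]) -> float:
    """Time one run of `generate` and return tokens produced per second."""
    start = time.perf_counter()
    count = sum(1 for _ in generate())  # consume the stream, counting tokens
    elapsed = time.perf_counter() - start
    return count / elapsed


def fake_model() -> Iterable[str]:
    # Hypothetical stand-in for a real model: emits 20 tokens, 5 ms apart.
    for i in range(20):
        time.sleep(0.005)
        yield f"tok{i}"


if __name__ == "__main__":
    print(f"{tokens_per_second(fake_model):.1f} tokens/second")
```

In practice you would replace `fake_model` with a call into whatever inference library you use, and measure prompt processing and generation separately.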

Apple M3 Performance Numbers: Token Speed

The table below shows the token speed for the Llama 2 7B model on the M3 chip for different quantization levels:

| Quantization | Processing (tokens/second) | Generation (tokens/second) |
|---|---|---|
| Q8_0 | 187.52 | 12.27 |
| Q4_0 | 186.75 | 21.34 |

Let's decipher these numbers:

- Q8_0 and Q4_0: These are quantization levels. Quantization is a technique that stores each model weight with fewer bits to shrink the model and speed it up. Q8_0 uses about 8 bits per weight, while Q4_0 uses about 4 bits. Fewer bits mean a smaller model, which typically leads to faster generation.

- Processing: This refers to the speed at which the model can process input text.

- Generation: This refers to the speed at which the model can generate new text.
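To make the size reduction concrete, here is a back-of-the-envelope sketch that estimates the weight size of a 7B model at different bit widths. It assumes a nominal 7 billion parameters and exactly 8 or 4 bits per weight; real quantized files are somewhat larger because they also store scaling factors, so treat the results as approximations.

```python
def approx_weight_size_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in gigabytes (10**9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


N_PARAMS = 7e9  # nominal parameter count for a "7B" model (assumption)

if __name__ == "__main__":
    for name, bits in [("16-bit (unquantized)", 16), ("Q8_0", 8), ("Q4_0", 4)]:
        size = approx_weight_size_gb(N_PARAMS, bits)
        print(f"{name}: ~{size:.1f} GB")
```

Under these assumptions, halving the bits per weight halves the memory the weights occupy.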

Key Takeaways:

- Impressive Processing Speed: The Apple M3 processes input tokens quickly at both quantization levels: 187.52 tokens/second with Q8_0 and 186.75 tokens/second with Q4_0.

- Generation Speed Needs Improvement: The generation speed, while not sluggish, lags well behind the processing speed: 12.27 tokens/second with Q8_0 and 21.34 tokens/second with Q4_0. This means that while the M3 can quickly process input, generating a response takes noticeably longer.
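The gap between the two columns can be quantified directly from the table figures. The short sketch below computes how much faster Q4_0 generates than Q8_0, and how processing speed compares to generation speed at each level. The function names are illustrative only.

```python
# Figures copied from the table above (tokens/second).
BENCH = {
    "Q8_0": {"processing": 187.52, "generation": 12.27},
    "Q4_0": {"processing": 186.75, "generation": 21.34},
}


def generation_speedup(fast: str, slow: str) -> float:
    """How many times faster `fast` generates tokens than `slow`."""
    return BENCH[fast]["generation"] / BENCH[slow]["generation"]


def processing_to_generation_ratio(quant: str) -> float:
    """How many times faster input is processed than output is generated."""
    return BENCH[quant]["processing"] / BENCH[quant]["generation"]


if __name__ == "__main__":
    print(f"Q4_0 vs Q8_0 generation: {generation_speedup('Q4_0', 'Q8_0'):.2f}x")
    for quant in BENCH:
        ratio = processing_to_generation_ratio(quant)
        print(f"{quant}: processing is {ratio:.1f}x faster than generation")
```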

Comparison of the M3 with Other Devices (M1 and M2)

We don't have data for other models or devices.

Data Interpretation: What Do These Numbers Really Mean for Developers?

At 187.52 tokens/second, the Apple M3 with Q8_0 quantization processes about 187 tokens of input every second, or roughly 19 tokens in 100 milliseconds (0.1 seconds). A 500-token prompt is read in under three seconds. That's pretty impressive!

However, while the M3 excels at processing input, its generation speed might be slower than desired for some applications. A 200-token reply takes about 16 seconds with Q8_0 and about 9 seconds with Q4_0, so the time from input to output is not as instantaneous as we'd like.
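To see what these rates mean for a whole request, a simple estimate is prompt tokens divided by processing speed plus output tokens divided by generation speed. The sketch below applies that to the benchmark figures in this article. The 500-token prompt and 200-token reply are illustrative assumptions, and the estimate ignores model load time and other overhead.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Throughput:
    processing_tps: float  # input tokens processed per second
    generation_tps: float  # output tokens generated per second


# Llama 2 7B on the M3, figures from the table in this article.
M3_LLAMA2_7B = {
    "Q8_0": Throughput(processing_tps=187.52, generation_tps=12.27),
    "Q4_0": Throughput(processing_tps=186.75, generation_tps=21.34),
}


def estimated_latency_s(t: Throughput, prompt_tokens: int, output_tokens: int) -> float:
    """Time to read the prompt plus time to generate the reply, in seconds."""
    return prompt_tokens / t.processing_tps + output_tokens / t.generation_tps


if __name__ == "__main__":
    for quant, t in M3_LLAMA2_7B.items():
        total = estimated_latency_s(t, prompt_tokens=500, output_tokens=200)
        print(f"{quant}: ~{total:.1f} s for a 500-token prompt and 200-token reply")
```

Because generation is the slower stage, the length of the reply dominates the total in both cases.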

Considerations for AI Development on Apple M3

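The Apple M3 is a good processor for AI development, offering excellent processing speed at both Q8_0 and Q4_0 quantization. However, generation is much slower than processing. If a smooth user experience matters, Q4_0 is the better starting point, since it generates about 1.7 times faster than Q8_0 in our data.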

FAQ

What Happens When Running Larger LLMs on the M3?

We lack data for larger LLMs on the M3, but generally, larger models require more processing power and can strain the resources available.

Can I Run LLMs on the M3 Without Quantization?

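It is possible, but unquantized weights take far more memory. At 16 bits per weight, a 7B model needs roughly 14 GB for the weights alone, which can exceed what is available on M3 machines with less unified memory. Generation is also typically slower, especially for larger models.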

How Can I Improve the Generation Speed of My LLMs on the M3?

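Start by experimenting with quantization levels. In our data, switching from Q8_0 to Q4_0 raised generation speed from 12.27 to 21.34 tokens/second. Beyond that, optimizing your code, for example by keeping responses short, can also help.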

Keywords

Apple M3, Llama 2, LLM, AI Development, tokens/second, quantization, Q8_0, Q4_0, processing speed, generation speed, performance, benchmarks.