Can You Do AI Development on an Apple M3 Pro?

Introduction

The quest for affordable AI is a hot topic right now, especially with the rise of large language models (LLMs) like Llama 2. These models can generate amazing text, translate languages, write different kinds of creative content, and answer your questions in an informative way. But the question remains: can you run these potent models on your personal computer, especially on a machine with a relatively new chip like the Apple M3 Pro?

This article will explore the feasibility of AI development on the M3 Pro chip, specifically focusing on its ability to run the popular Llama 2 language model. Buckle up, folks, because it's about to get techy and exciting!

M3 Pro Performance: A Deep Dive

The Apple M3 Pro packs a punch with its impressive specifications, but the real question is how it handles the demanding tasks of AI development. Let's break down the numbers and see how this chip measures up:

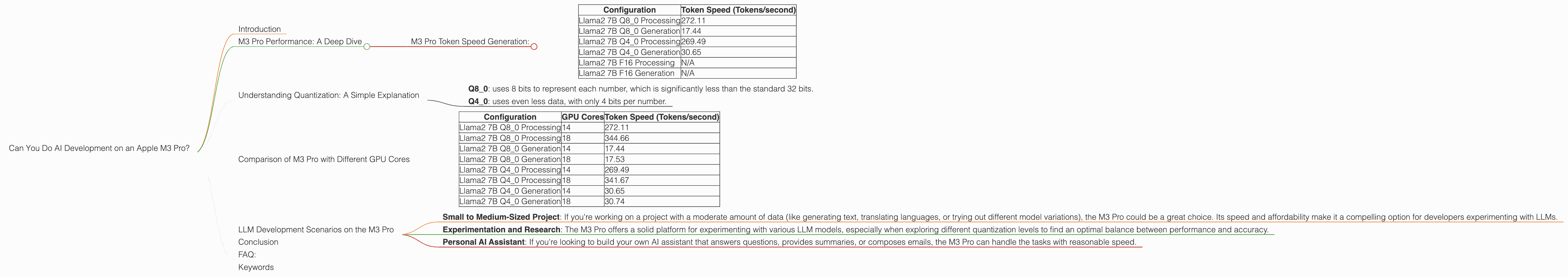

M3 Pro Token Speed:

The M3 Pro handles text at an impressive rate, measured in tokens per second. This is crucial for running LLMs because it determines how quickly the model can read the input it's given and produce a response.

Here's a table summarizing the token speed for different Llama 2 7B configurations on the M3 Pro with a 14-core GPU:

| Configuration | Token Speed (tokens/second) |
|---|---|
| Llama 2 7B Q8_0 Processing | 272.11 |
| Llama 2 7B Q8_0 Generation | 17.44 |
| Llama 2 7B Q4_0 Processing | 269.49 |
| Llama 2 7B Q4_0 Generation | 30.65 |
| Llama 2 7B F16 Processing | N/A |
| Llama 2 7B F16 Generation | N/A |

Note: Data for Llama 2 7B F16 configurations are unavailable for the M3 Pro.

Let's decode these numbers:

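- Token speed: Tokens are the "building blocks" of text that an LLM reads and writes, usually a word or part of a word. Token speed is the number of tokens the M3 Pro handles per second; higher numbers mean the M3 Pro is faster.

- Processing vs. generation: Processing (often called prompt processing) is how fast the model reads and analyzes the input text. Generation is how fast the model writes its own text based on that input, one token at a time. It's like the difference between reading a book and writing a story. Processing is much faster because the prompt's tokens can be handled in parallel, while each generated token depends on the ones before it.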
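To see what these numbers mean for a single request, here is a rough Python sketch. It assumes a hypothetical request with a 1,000-token prompt and a 250-token answer, uses the 14-core figures from the table, and treats the speeds as constant. In practice speeds can vary with context length, and model loading time is ignored.

```python
# Llama 2 7B token speeds (tokens/second) on the 14-core M3 Pro, from the table above.
SPEEDS = {
    "Q8_0": {"processing": 272.11, "generation": 17.44},
    "Q4_0": {"processing": 269.49, "generation": 30.65},
}


def estimate_seconds(quant: str, prompt_tokens: int, output_tokens: int) -> float:
    """Rough time for one request: read the whole prompt, then generate the reply.

    Assumes constant speeds and ignores model loading time.
    """
    speed = SPEEDS[quant]
    return prompt_tokens / speed["processing"] + output_tokens / speed["generation"]


# Hypothetical request: a 1,000-token prompt and a 250-token answer.
for quant in SPEEDS:
    total = estimate_seconds(quant, prompt_tokens=1000, output_tokens=250)
    print(f"{quant}: about {total:.1f} s")
```

Under these assumptions the estimate is about 18.0 seconds for Q8_0 and 11.9 seconds for Q4_0, and most of that time goes to generation rather than processing.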

Conclusion: The M3 Pro processes Llama 2 7B prompts quickly in both the Q8_0 and Q4_0 quantization formats, at roughly 270 tokens per second, which makes it suitable for AI development. Generation is much slower, about 17 tokens per second for Q8_0 and 31 for Q4_0, so generation, particularly with Q8_0, is the bottleneck. A likely reason is that producing each token requires reading the model's weights from memory, and Q8_0 weights take up nearly twice as much space as Q4_0 weights.
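One way to sanity-check the memory explanation is to compare the speed gap with the size gap. The sketch below assumes llama.cpp's block layouts, in which Q8_0 and Q4_0 both store one 16-bit scale per block of 32 weights, and uses the 14-core generation speeds from the table.

```python
# Llama 2 7B generation speeds (tokens/second) on the 14-core M3 Pro, from the table above.
gen_q8_0 = 17.44
gen_q4_0 = 30.65

# Assumed llama.cpp block layouts: each block holds 32 weights plus one 16-bit scale.
bits_q8_0 = (32 * 8 + 16) / 32  # 8.5 bits per weight
bits_q4_0 = (32 * 4 + 16) / 32  # 4.5 bits per weight

speed_ratio = gen_q4_0 / gen_q8_0  # how much faster Q4_0 generates
size_ratio = bits_q8_0 / bits_q4_0  # how much more weight data Q8_0 reads per token

print(f"Q4_0 generates {speed_ratio:.2f}x faster than Q8_0")
print(f"Q8_0 weights are {size_ratio:.2f}x larger than Q4_0 weights")
```

Q4_0 generates about 1.76 times faster while Q8_0 weights are about 1.89 times larger. The two ratios are close, which fits (but does not prove) the idea that generation is limited by reading weights from memory.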

Understanding Quantization: A Simple Explanation

Quantization is a technique that reduces the memory footprint of an LLM, making it more efficient and potentially faster. It works by using fewer bits to store each of the model's weights (the numbers the model learned during training).

Imagine you have a detailed map with every minute detail. Quantization is like simplifying that map by removing the unnecessary details, making it smaller and easier to handle.

Here are the key takeaways for quantization:

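- Q8_0: stores each weight in 8 bits (plus one shared scale factor per block of 32 weights), compared with 16 bits per weight in F16 and 32 bits in full-precision F32.

- Q4_0: uses even less data, with only 4 bits per weight (again plus a shared scale factor per block).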

The smaller the number of bits, the less memory is needed. This allows the model to run more efficiently on devices with limited memory, like your M3 Pro.
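The memory savings are easy to estimate. This Python sketch takes "7B" literally as 7 billion parameters (the real Llama 2 7B count is slightly lower) and counts only the weights, ignoring the context cache and runtime overhead. The per-format bit counts assume llama.cpp's block layouts for Q8_0 and Q4_0.

```python
# "7B" taken literally; the real Llama 2 7B parameter count is slightly lower.
NOMINAL_PARAMS = 7e9

# Approximate storage per weight, assuming llama.cpp's block layouts for Q8_0/Q4_0.
BITS_PER_WEIGHT = {
    "F32": 32.0,
    "F16": 16.0,
    "Q8_0": 8.5,  # 8-bit values plus a 16-bit scale shared by each block of 32
    "Q4_0": 4.5,  # 4-bit values plus a 16-bit scale shared by each block of 32
}


def weights_gb(params: float, bits_per_weight: float) -> float:
    """Size of the weights alone, in gigabytes (1 GB = 10**9 bytes)."""
    return params * bits_per_weight / 8 / 1e9


for fmt, bits in BITS_PER_WEIGHT.items():
    print(f"{fmt:>4}: about {weights_gb(NOMINAL_PARAMS, bits):.1f} GB")
```

This gives about 28 GB for F32, 14 GB for F16, 7.4 GB for Q8_0, and 3.9 GB for Q4_0.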

However, remember: quantization can sometimes reduce accuracy. Just as the smaller map loses some detail, a quantized model stores its weights less precisely.
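To make the accuracy trade-off concrete, the sketch below quantizes a block of 32 made-up weights with a simplified symmetric scheme: one shared scale per block, with each weight rounded to an integer code. With 8 bits this follows the same basic idea as Q8_0. The real Q4_0 uses a slightly different 4-bit range, and llama.cpp stores the scale in 16 bits, so treat the 4-bit case as an approximation.

```python
import random


def quantize_block(block, bits):
    """Simplified symmetric quantization: one shared scale, integer codes in [-qmax, qmax]."""
    qmax = 2 ** (bits - 1) - 1  # 127 for 8 bits, 7 for 4 bits
    amax = max(abs(x) for x in block)
    scale = amax / qmax if amax > 0 else 0.0
    # Python's round() sends exact halves to the nearest even integer;
    # C's roundf() rounds them away from zero. The difference is negligible here.
    codes = [round(x / scale) if scale else 0 for x in block]
    return scale, codes


def dequantize_block(scale, codes):
    """Map integer codes back to approximate weights."""
    return [scale * c for c in codes]


def max_error(block, bits):
    """Largest absolute difference between original and round-tripped weights."""
    scale, codes = quantize_block(block, bits)
    restored = dequantize_block(scale, codes)
    return max(abs(a - b) for a, b in zip(block, restored))


random.seed(0)
# Hypothetical block of 32 small weights centered on zero.
weights = [random.gauss(0.0, 0.02) for _ in range(32)]

for bits in (8, 4):
    print(f"{bits}-bit: max round-trip error {max_error(weights, bits):.6f}")
```

The 4-bit error comes out much larger than the 8-bit one, because the step between representable values is about 18 times wider (the scale divides by 7 instead of 127).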

Comparison of M3 Pro with Different GPU Cores

The M3 Pro comes in two GPU configurations, with 14 or 18 cores. Let's compare them to see how the number of GPU cores affects token speed.

| Configuration | GPU Cores | Token Speed (tokens/second) |
|---|---|---|
| Llama 2 7B Q8_0 Processing | 14 | 272.11 |
| Llama 2 7B Q8_0 Processing | 18 | 344.66 |
| Llama 2 7B Q8_0 Generation | 14 | 17.44 |
| Llama 2 7B Q8_0 Generation | 18 | 17.53 |
| Llama 2 7B Q4_0 Processing | 14 | 269.49 |
| Llama 2 7B Q4_0 Processing | 18 | 341.67 |
| Llama 2 7B Q4_0 Generation | 14 | 30.65 |
| Llama 2 7B Q4_0 Generation | 18 | 30.74 |

Notice:

- Processing: The M3 Pro with 18 GPU cores offers a significant boost, almost 27% faster than the 14-core version for both the Llama 2 7B Q8_0 and Q4_0 configurations. That is close to the roughly 29% increase in core count.

- Generation: The increase is negligible. The extra cores improve generation speed by less than 1% for both the Llama 2 7B Q8_0 and Q4_0 configurations.

This suggests that while more GPU cores help with processing, they matter little for generation, at least for Llama 2 7B in Q8_0 and Q4_0 format, likely because generation is limited mainly by memory bandwidth rather than GPU compute.
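The percentages above can be reproduced from the table with a few lines of Python. The "efficiency" figure is an illustrative metric introduced here, not a standard benchmark term: it compares each speedup with the roughly 28.6% increase in GPU cores.

```python
# Llama 2 7B token speeds (tokens/second) from the 14- vs 18-core table above.
RESULTS = {
    ("Q8_0", "processing"): (272.11, 344.66),
    ("Q8_0", "generation"): (17.44, 17.53),
    ("Q4_0", "processing"): (269.49, 341.67),
    ("Q4_0", "generation"): (30.65, 30.74),
}

core_gain = 18 / 14 - 1  # the 18-core GPU has about 28.6% more cores


def gain(speed_14: float, speed_18: float) -> float:
    """Relative speedup of the 18-core result over the 14-core result."""
    return speed_18 / speed_14 - 1


print(f"GPU cores: +{core_gain:.1%}")
for (quant, phase), (speed_14, speed_18) in RESULTS.items():
    g = gain(speed_14, speed_18)
    # Efficiency: how much of the extra core count shows up as extra speed.
    print(f"{quant} {phase:<10}: +{g:.1%} (efficiency {g / core_gain:.0%})")
```

Processing turns about 93 to 94% of the extra cores into extra speed, while generation recovers only 1 to 2%.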

LLM Development Scenarios on the M3 Pro

The M3 Pro, with its impressive processing capabilities, can be a valuable tool for LLM development, especially when considering the cost-effectiveness of this chip. Here's a breakdown of potential scenarios:

- Small to Medium-Sized Projects: If you're working on moderately demanding tasks (like generating text, translating languages, or trying out different model variations), the M3 Pro could be a great choice. Its speed and affordability make it a compelling option for developers experimenting with LLMs.

- Experimentation and Research: The M3 Pro offers a solid platform for experimenting with various LLM models, especially when exploring different quantization levels to find an optimal balance between performance and accuracy.

- Personal AI Assistant: If you're looking to build your own AI assistant that answers questions, provides summaries, or composes emails, the M3 Pro can handle the tasks with reasonable speed.

Remember: The M3 Pro is not necessarily a top-of-the-line solution for high-demand applications like real-time translation services or production-level language generation systems.

Conclusion

The Apple M3 Pro, particularly the 18-core GPU option, is a capable device for AI development, especially for smaller projects or experimentation with LLMs. Its fast prompt processing makes it a viable option for most casual developers looking to explore the world of LLMs. While it might not be suitable for the highest-demand applications, the M3 Pro offers a good balance between performance, cost, and accessibility for individuals and businesses venturing into the exciting realm of AI.

FAQ:

Q: What does "quantization" mean in the context of LLMs?

A: Quantization is a technique that reduces the amount of memory needed to store an LLM. It works by storing the model's weights using fewer bits (like 8 or 4 bits instead of 16 or 32 bits). This reduces the file size and allows models to run on devices with limited memory, at some cost in accuracy.

Q: Is the M3 Pro good for building a large-scale AI application?

A: While the M3 Pro is a capable piece of hardware, it might not be the ideal choice for building a large-scale AI application that requires complex and demanding tasks. However, it can be used for smaller projects and for experimentation with different models and configurations.

Q: What are the limitations of the M3 Pro for AI development?

A: The M3 Pro offers a good level of performance for its price point, but it might struggle with very large models or applications requiring high-performance computing. Its generation speed (about 17 to 31 tokens per second for Llama 2 7B) is also far below its processing speed. Finally, the benchmarks in this article lack data for some configurations, such as Llama 2 7B F16.

Q: Can I run the Llama 2 13B model on the M3 Pro?

A: The data in this article focuses on the Llama 2 7B model. The M3 Pro might be able to run the Llama 2 13B model, but with nearly twice as many parameters it needs roughly twice the memory and will likely generate text more slowly. This article has no benchmarks to show how much slower.

Keywords

Apple M3 Pro, AI Development, M3 Pro performance, LLM, LLMs, Llama 2, Token Speed, Quantization, Q8_0, Q4_0, GPU Cores, AI Assistant, Personal AI, GPU, Processing, Generation, AI models, local AI models.