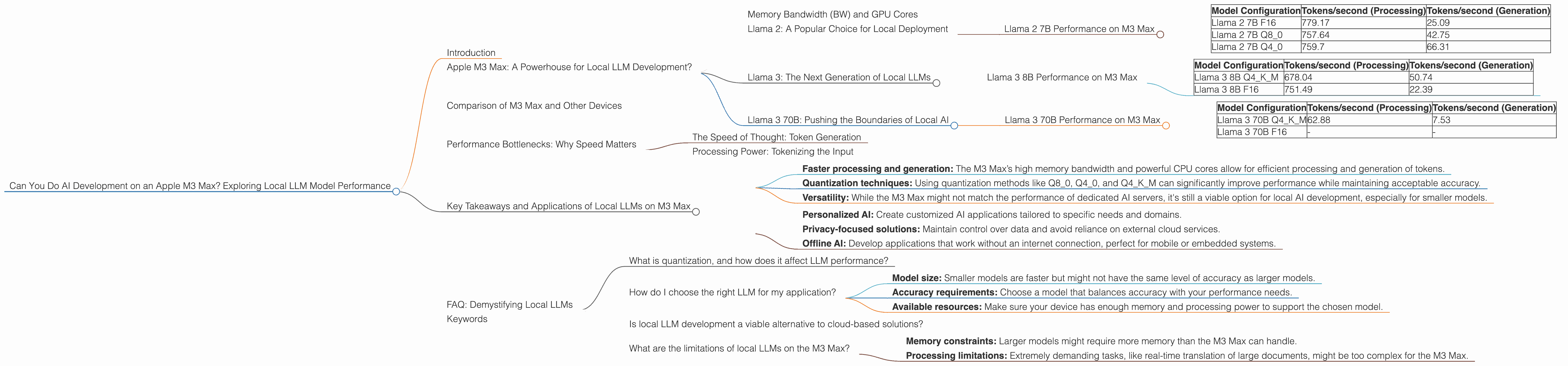

Can You Do AI Development on an Apple M3 Max?

Introduction

The world of Large Language Models (LLMs) is buzzing with excitement, and it's not just about ChatGPT and Bard anymore. Local LLMs are gaining traction, offering a powerful alternative for developers and enthusiasts who crave control and privacy. But can you really harness the power of these models on an Apple M3 Max, a machine known for its incredible performance? Let's dive in and explore the world of local LLM development on Apple silicon.

Imagine building your own AI application, or maybe just running a powerful chatbot on your own computer. With local LLMs, you're not reliant on cloud-based services, giving you complete control over your data. The Apple M3 Max, with its 40-core GPU and powerful CPU, seems like a perfect candidate for this task. But how do these models actually perform on this powerful silicon?

This article will examine the performance of two model families, Llama 2 and Llama 3, on the Apple M3 Max. We'll delve into the speed of prompt processing and token generation, exploring various quantization methods and their impact. Buckle up, because we're about to unleash the power of local AI on Apple M3 Max!

Apple M3 Max: A Powerhouse for Local LLM Development?

The Apple M3 Max is a beast of a chip, boasting 40 GPU cores and impressive memory bandwidth. It's designed to tackle demanding tasks, and AI development is no exception. But how does this translate into real-world performance with local LLMs? Let's explore the numbers.

Memory Bandwidth (BW) and GPU Cores

One aspect of the Apple M3 Max that's crucial for LLM performance is its memory bandwidth. Remember, LLMs are data-hungry beasts, constantly munching on information to create their outputs. The M3 Max boasts a magnificent 400 GB/s bandwidth, which translates to lightning-fast data transfer speeds. This is a key ingredient for ensuring that the model can quickly access the information it needs.

Imagine trying to cook a complex recipe without all the ingredients readily available. You'd be constantly scrambling for this and that, slowing down the entire process. Similarly, a slow memory bandwidth can lead to bottlenecks in LLM processing, hindering overall performance.

The Apple M3 Max's 40 GPU cores provide massive parallel processing capabilities, allowing the model to perform multiple operations concurrently. This is like having multiple chefs working together to prepare the dish, each focusing on a specific task. This parallelism significantly enhances the model's speed and efficiency, especially when dealing with intricate tasks like generating coherent text.
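To see why bandwidth matters so much, here is a minimal back-of-the-envelope sketch. It assumes that generating one token requires reading every model weight from memory once, so bandwidth divided by model size gives a rough ceiling on generation speed. The function name and the bits-per-weight values are illustrative assumptions, not measured figures.

```python
def max_generation_tps(bandwidth_gb_s: float, params_billions: float, bits_per_weight: float) -> float:
    """Rough ceiling on tokens/second when generation is limited by memory bandwidth.

    Assumes every weight is read from memory once per generated token.
    """
    # billions of parameters * bytes per parameter = gigabytes of weights
    weights_gb = params_billions * bits_per_weight / 8
    return bandwidth_gb_s / weights_gb


BANDWIDTH_GB_S = 400.0  # M3 Max figure quoted above

# Bits per weight are approximations (assumed), including per-block scale overhead.
for label, bits in [("F16", 16.0), ("8-bit", 8.5), ("4-bit", 4.5)]:
    ceiling = max_generation_tps(BANDWIDTH_GB_S, 7.0, bits)
    print(f"7B {label}: at most ~{ceiling:.0f} tokens/second")
```

Real measurements will land below these ceilings, since the estimate ignores compute time, the context cache, and other overhead. It still explains why smaller, quantized models generate text faster on the same chip.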

Llama 2: A Popular Choice for Local Deployment

Llama 2, developed by Meta, is one of the most popular open-source LLMs. It's known for its impressive performance and accessibility, making it a great candidate for local deployment.

Llama 2 7B Performance on M3 Max

The Apple M3 Max demonstrates remarkable speed when working with the Llama 2 7B model. Let's take a closer look at the performance metrics:

| Model Configuration | Tokens/second (Processing) | Tokens/second (Generation) |
|---|---|---|
| Llama 2 7B F16 | 779.17 | 25.09 |
| Llama 2 7B Q8_0 | 757.64 | 42.75 |
| Llama 2 7B Q4_0 | 759.70 | 66.31 |

Note: F16 refers to the float16 (half-precision floating-point) format, while Q8_0 and Q4_0 are 8-bit and 4-bit quantization formats.

Quantization is like simplifying a recipe by using fewer ingredients. It reduces the size of the LLM model without sacrificing too much accuracy. This makes the model more efficient and can significantly improve performance, especially on resource-constrained devices.

Q8_0 and Q4_0 are different quantization methods, each using fewer bits to represent the model's weights. This reduction in precision can lead to some minor accuracy loss, but it can significantly improve computational efficiency.
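The idea of "fewer bits" can be sketched in a few lines. The example below is a simplified illustration of symmetric block quantization: a block of weights is stored as small integers plus one shared scale. It is not the exact storage format used by any particular tool, and the function names and sample weights are hypothetical.

```python
def quantize_block(values, bits=8):
    """Quantize one block of floats to signed integers with a single shared scale."""
    qmax = 2 ** (bits - 1) - 1              # 127 for 8 bits, 7 for 4 bits
    amax = max(abs(v) for v in values)      # largest magnitude in the block
    scale = amax / qmax if amax > 0 else 1.0
    quants = [round(v / scale) for v in values]
    return scale, quants


def dequantize_block(scale, quants):
    """Recover approximate floats from the integers and the shared scale."""
    return [q * scale for q in quants]


def max_error(values, bits):
    """Largest absolute difference between original and restored values."""
    scale, quants = quantize_block(values, bits)
    restored = dequantize_block(scale, quants)
    return max(abs(a - b) for a, b in zip(values, restored))


weights = [0.12, -0.53, 0.98, -0.07, 0.33, -0.91, 0.45, 0.02]  # made-up sample block
for bits in (8, 4):
    print(f"{bits}-bit: max rounding error {max_error(weights, bits):.4f}")
```

Running it shows the trade-off directly: the 4-bit version needs half the storage of the 8-bit version, but its rounding error is noticeably larger.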

As you can see, the M3 Max excels at prompt processing for Llama 2 7B, reaching 779.17 tokens per second with F16. Quantization barely changes processing speed, but it lifts generation from 25.09 tokens per second with F16 to 66.31 with Q4_0.
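To put numbers on how quantization changes the results in the table above, the short sketch below computes each configuration's speed relative to F16. The figures are copied from the table; the dictionary layout and function name are just one illustrative way to organize them.

```python
# Llama 2 7B results on the M3 Max, in tokens/second, copied from the table above.
results = {
    "F16": {"processing": 779.17, "generation": 25.09},
    "Q8_0": {"processing": 757.64, "generation": 42.75},
    "Q4_0": {"processing": 759.70, "generation": 66.31},
}


def speedup_vs_baseline(results, metric, baseline="F16"):
    """Return each configuration's speed as a multiple of the baseline's speed."""
    base = results[baseline][metric]
    return {name: row[metric] / base for name, row in results.items()}


for metric in ("processing", "generation"):
    for name, ratio in speedup_vs_baseline(results, metric).items():
        print(f"{metric:<10} {name:<5} {ratio:.2f}x")
```

The ratios make the pattern easy to see: processing speed stays within a few percent of F16, while generation speeds up by roughly 1.7x with Q8_0 and 2.6x with Q4_0.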

Llama 3: The Next Generation of Local LLMs

Llama 3, the successor to Llama 2, is another promising open-source LLM. Built on the foundations of Llama 2, it's a powerful model often preferred by developers looking for state-of-the-art performance.

Llama 3 8B Performance on M3 Max

Let's see how the Apple M3 Max performs with the Llama 3 8B model:

| Model Configuration | Tokens/second (Processing) | Tokens/second (Generation) |
|---|---|---|
| Llama 3 8B Q4_K_M | 678.04 | 50.74 |
| Llama 3 8B F16 | 751.49 | 22.39 |

The M3 Max's performance with the Llama 3 8B model is impressive, showcasing its ability to handle larger models with grace. It's clear that the M3 Max is not just a toy; it’s a serious contender for local AI development.

Q4_K_M is a 4-bit quantization variant that mixes precision levels across different parts of the model. This keeps quality closer to the original while still shrinking the model's footprint considerably.

Llama 3 70B: Pushing the Boundaries of Local AI

Now let's test the limits of the Apple M3 Max with the Llama 3 70B model, a significantly larger LLM. This is like throwing a really heavy recipe at the chef, seeing if they can handle it.

Llama 3 70B Performance on M3 Max

| Model Configuration | Tokens/second (Processing) | Tokens/second (Generation) |
|---|---|---|
| Llama 3 70B Q4_K_M | 62.88 | 7.53 |
| Llama 3 70B F16 | - | - |

Note: We don't have data for Llama 3 70B F16 on the M3 Max. At 16 bits per weight, a 70B model needs roughly 140 GB for the weights alone, which is likely more than the machine's unified memory can hold.
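A quick way to sanity-check whether a model can fit is to estimate the size of its weights. The sketch below does that arithmetic. The memory sizes, the headroom reserved for the system and context cache, and the roughly 4.8 bits per weight used for the 4-bit variant are all assumptions for illustration, not figures from the benchmark data.

```python
def weights_gb(params_billions: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in gigabytes."""
    return params_billions * bits_per_weight / 8


def fits(params_billions: float, bits_per_weight: float,
         memory_gb: float, headroom_gb: float = 8.0) -> bool:
    """True if the weights plus some headroom fit in the given memory."""
    return weights_gb(params_billions, bits_per_weight) + headroom_gb <= memory_gb


# Assumed unified memory configurations, in GB (check your own machine).
for memory_gb in (48, 64, 128):
    f16_ok = fits(70, 16, memory_gb)
    q4_ok = fits(70, 4.8, memory_gb)   # ~4.8 bits/weight is an assumed average
    print(f"{memory_gb} GB: 70B F16 fits={f16_ok}, 70B 4-bit fits={q4_ok}")
```

Under these assumptions, the F16 weights (about 140 GB) do not fit in any of the listed configurations, while the 4-bit version (about 42 GB) fits once there is comfortably more than that available.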

The M3 Max still performs admirably with the Llama 3 70B model, but the performance is significantly lower compared to the smaller models. This is because the larger model requires more resources, which can strain the M3 Max's processing capabilities.

However, it's important to remember that the performance of the M3 Max with the Llama 3 70B model is still impressive, especially when considering its relatively small footprint compared to dedicated AI servers.

Comparison of M3 Max and Other Devices

While the M3 Max shines in local LLM performance, it's essential to understand its position in the broader landscape. How does its performance measure up against other devices commonly used for AI development?

Note: The data here covers only the M3 Max, so this article does not make head-to-head comparisons with other devices.

Performance Bottlenecks: Why Speed Matters

Imagine trying to write a novel while your internet connection keeps disconnecting. The flow is disrupted, and the creative process suffers. Similarly, LLM performance bottlenecks can hinder AI development.

The Speed of Thought: Token Generation

Token generation is the critical step where an LLM translates its internal understanding into human-readable text. It’s like the chef arranging the ingredients on the plate, creating a beautiful and delicious dish.

A high token generation speed means faster response times, making the model feel more responsive and natural in interactions. This is crucial for applications like chatbots, where users expect prompt and engaging dialogue.

Processing Power: Tokenizing the Input

Before generating output, the LLM needs to process the input text: the prompt is broken down into individual tokens, and all of them are run through the model to build up context. This is like the chef reading the recipe and understanding the ingredients needed.

A faster processing speed allows the LLM to quickly analyze the context of the input and make accurate predictions. This is crucial for tasks that require complex reasoning, like translation or summarization.
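The two speeds combine into the wait a user actually experiences. The sketch below estimates response time as prompt tokens divided by processing speed plus output tokens divided by generation speed. The speeds come from the tables in this article; the 500-token prompt and 300-token answer are hypothetical sizes chosen only for illustration.

```python
def response_seconds(prompt_tokens: int, output_tokens: int,
                     processing_tps: float, generation_tps: float) -> float:
    """Estimated time to read the prompt and then write the full answer."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


# (processing tokens/second, generation tokens/second) from the tables above.
measured = {
    "Llama 2 7B Q4_0": (759.70, 66.31),
    "Llama 3 8B Q4_K_M": (678.04, 50.74),
    "Llama 3 70B Q4_K_M": (62.88, 7.53),
}

PROMPT_TOKENS = 500   # hypothetical prompt length
OUTPUT_TOKENS = 300   # hypothetical answer length

for name, (pp, tg) in measured.items():
    seconds = response_seconds(PROMPT_TOKENS, OUTPUT_TOKENS, pp, tg)
    print(f"{name}: ~{seconds:.1f} s")
```

For a chat-sized exchange like this one, generation dominates the total: the smaller models answer in a few seconds, while the 70B model takes most of a minute.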

Key Takeaways and Applications of Local LLMs on M3 Max

The Apple M3 Max is a powerful platform for local LLM development. It can handle a wide range of models, including the popular Llama 2 and Llama 3 families. While larger models push the limits of its processing power, its performance remains impressive.

Here are some key takeaways:

- Faster processing and generation: The M3 Max’s high memory bandwidth and 40 GPU cores allow for efficient processing and generation of tokens.

- Quantization techniques: Using quantization methods like Q8_0, Q4_0, and Q4_K_M can significantly improve generation speed while maintaining acceptable accuracy.

- Versatility: While the M3 Max might not match the performance of dedicated AI servers, it's still a viable option for local AI development, especially for smaller models.

Local LLMs on the Apple M3 Max open up a world of possibilities for developers:

- Personalized AI: Create customized AI applications tailored to specific needs and domains.

- Privacy-focused solutions: Maintain control over data and avoid reliance on external cloud services.

- Offline AI: Develop applications that work without an internet connection, perfect for mobile or embedded systems.

FAQ: Demystifying Local LLMs

What is quantization, and how does it affect LLM performance?

Think of quantization as simplifying a recipe. It reduces the number of ingredients, making it faster and easier to cook without sacrificing too much flavor.

Similarly, LLM quantization represents the model's information using fewer bits, decreasing its size and potentially improving performance. It might lead to a slight decrease in accuracy, but it's a trade-off worth considering for smaller devices or faster processing.

How do I choose the right LLM for my application?

It depends on your specific needs. Consider these factors:

- Model size: Smaller models are faster but might not have the same level of accuracy as larger models.

- Accuracy requirements: Choose a model that balances accuracy with your performance needs.

- Available resources: Make sure your device has enough memory and processing power to support the chosen model.
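One simple way to apply these factors is to start from a speed requirement and filter the measured configurations. The sketch below uses the generation speeds reported in this article; the 20 tokens/second threshold is a hypothetical target, and the function name is illustrative.

```python
# Generation speed in tokens/second on the M3 Max, from the tables in this article.
generation_tps = {
    "Llama 2 7B F16": 25.09,
    "Llama 2 7B Q8_0": 42.75,
    "Llama 2 7B Q4_0": 66.31,
    "Llama 3 8B F16": 22.39,
    "Llama 3 8B Q4_K_M": 50.74,
    "Llama 3 70B Q4_K_M": 7.53,
}


def fast_enough(speeds: dict, min_tps: float) -> list:
    """Return configurations meeting the minimum speed, fastest first."""
    chosen = [name for name, tps in speeds.items() if tps >= min_tps]
    return sorted(chosen, key=lambda name: speeds[name], reverse=True)


MIN_TPS = 20.0  # hypothetical requirement for an interactive chatbot
for name in fast_enough(generation_tps, MIN_TPS):
    print(f"{name}: {generation_tps[name]} tokens/second")
```

Speed is only one axis, so a shortlist like this is a starting point: accuracy and memory needs still have to be weighed for the specific application.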

Is local LLM development a viable alternative to cloud-based solutions?

It can be! Local LLMs offer advantages like privacy, control, and the ability to work offline. However, cloud-based solutions are often more powerful and can handle larger models, especially for resource-intensive applications.

What are the limitations of local LLMs on the M3 Max?

While the M3 Max is a strong competitor for local AI development, it's not without limitations:

- Memory constraints: Larger models might require more memory than the M3 Max can handle.

- Processing limitations: Extremely demanding tasks, like real-time translation of large documents, might be too complex for the M3 Max.

Keywords

Apple M3 Max, local LLM, Llama 2, Llama 3, AI development, token processing, token generation, quantization, performance, GPU, memory bandwidth, F16, Q8_0, Q4_0, Q4_K_M, offline AI, privacy, personalized AI, performance bottlenecks.