Can You Do AI Development on an Apple M2 Ultra?

Introduction

The world of AI is buzzing with excitement, and at the heart of this revolution are large language models (LLMs). These AI systems can generate text, translate languages, write creative content, and answer questions. However, running LLMs on your own computer is resource-intensive: the model's weights must fit in memory and be read quickly for every token it produces. That's where powerful CPUs and GPUs come in, and Apple's M2 Ultra chip stands out as a potential game-changer for local AI development.

Think of the M2 Ultra as a supercharged brain for your computer. It's designed to handle demanding tasks like video editing, 3D rendering, and yes, even AI development. But can it truly handle the heavyweight demands of LLMs? Let's dive into the details and see what the numbers tell us!

Apple M2 Ultra: A Powerful Chip for AI?

The Apple M2 Ultra chip packs a punch with a 24-core CPU, a GPU with either 60 or 76 cores, up to 192GB of unified memory, and 800GB/s of memory bandwidth. Because the CPU and GPU share that memory, the GPU can hold far larger models than the video memory of most consumer graphics cards allows. But how does this translate to real-world performance with different LLMs? Let's compare several models on the M2 Ultra and see how it holds up.

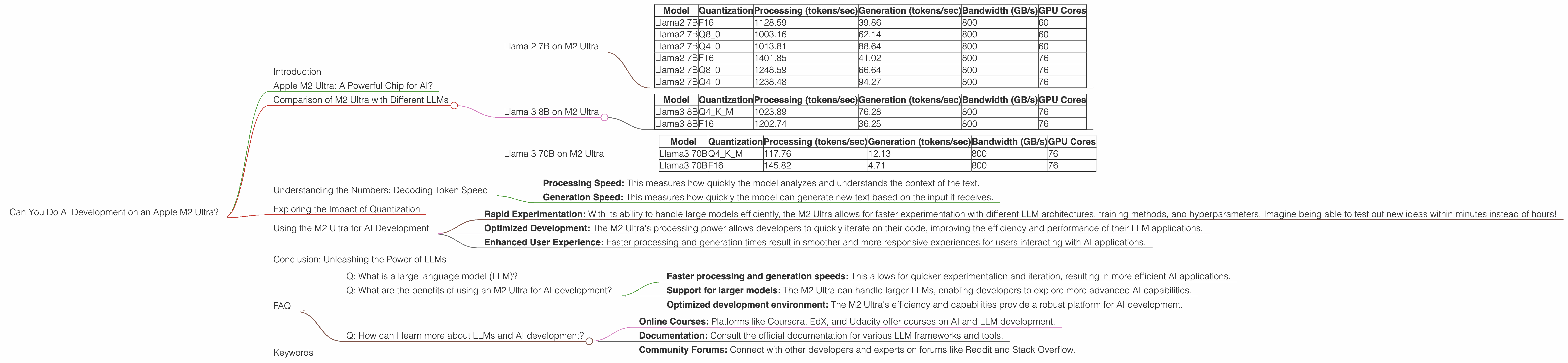

Comparison of M2 Ultra with Different LLMs

Here's a breakdown of the M2 Ultra's performance with Llama 2 and Llama 3 models. For each model, we'll look at different quantization levels: F16 (16-bit floating point), Q8_0 (8-bit integers), Q4_0 (4-bit integers), and, for Llama 3, Q4_K_M (a 4-bit format that keeps some tensors at higher precision). Quantization is a technique that reduces the size of an LLM by storing its weights with fewer bits, which lowers memory use and can make the model run faster.

Think of it like this: imagine you're trying to store a mountain of books in a small apartment. You'd look for ways to compress them, such as using smaller fonts or removing unnecessary pictures. Quantization does something similar for a model: it stores each weight with fewer bits so the whole model takes up less space.
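To make the idea concrete, here is a minimal Python sketch of symmetric 8-bit block quantization, loosely modeled on the Q8_0 format: each block of 32 weights is stored as 8-bit integers plus one shared scale factor. The block size and the 16-bit scale mirror how llama.cpp lays out Q8_0; the function names are hypothetical, and this is an illustration of the technique, not that library's code.

```python
import random

BLOCK_SIZE = 32  # weights per block, as in llama.cpp's Q8_0 layout


def quantize_block(weights):
    """Symmetric 8-bit quantization: one float scale plus int8 values per block."""
    amax = max(abs(w) for w in weights)
    scale = amax / 127 if amax > 0 else 1.0
    # Round each weight to the nearest multiple of `scale`, clamped to the int8 range.
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return scale, q


def dequantize_block(scale, q):
    """Approximate the original weights from the integers and the shared scale."""
    return [scale * v for v in q]


random.seed(0)
block = [random.uniform(-1.0, 1.0) for _ in range(BLOCK_SIZE)]
scale, q = quantize_block(block)
restored = dequantize_block(scale, q)
max_error = max(abs(a - b) for a, b in zip(block, restored))

# Storage cost if the scale is kept as a 16-bit float (as Q8_0 does):
# 32 one-byte integers + 2 bytes of scale = 34 bytes, i.e. 8.5 bits per weight.
bits_per_weight = (BLOCK_SIZE * 8 + 16) / BLOCK_SIZE
print(f"max round-trip error: {max_error:.5f}, bits per weight: {bits_per_weight}")
```

Each restored weight differs from the original by at most half the scale, and the block costs 8.5 bits per weight instead of 16. Dropping to 4 bits shrinks the model further but makes the rounding steps coarser, which is where the accuracy trade-off comes from.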

Llama 2 7B on M2 Ultra

| Model | Quantization | Processing (tokens/sec) | Generation (tokens/sec) | Bandwidth (GB/s) | GPU Cores |
|---|---|---|---|---|---|
| Llama 2 7B | F16 | 1128.59 | 39.86 | 800 | 60 |
| Llama 2 7B | Q8_0 | 1003.16 | 62.14 | 800 | 60 |
| Llama 2 7B | Q4_0 | 1013.81 | 88.64 | 800 | 60 |
| Llama 2 7B | F16 | 1401.85 | 41.02 | 800 | 76 |
| Llama 2 7B | Q8_0 | 1248.59 | 66.64 | 800 | 76 |
| Llama 2 7B | Q4_0 | 1238.48 | 94.27 | 800 | 76 |
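This table lets us separate two effects: more GPU cores and lower-bit quantization. The short Python sketch below uses only the numbers in the table to compute how much each one changes the two speeds.

```python
# Llama 2 7B results from the table: (quantization, GPU cores) -> (processing, generation) in tokens/sec.
results = {
    ("F16", 60): (1128.59, 39.86),
    ("Q8_0", 60): (1003.16, 62.14),
    ("Q4_0", 60): (1013.81, 88.64),
    ("F16", 76): (1401.85, 41.02),
    ("Q8_0", 76): (1248.59, 66.64),
    ("Q4_0", 76): (1238.48, 94.27),
}

# Effect of going from 60 to 76 GPU cores, per quantization level.
core_speedup = {}
for quant in ("F16", "Q8_0", "Q4_0"):
    pp60, tg60 = results[(quant, 60)]
    pp76, tg76 = results[(quant, 76)]
    core_speedup[quant] = (pp76 / pp60, tg76 / tg60)
    print(f"{quant}: 76 vs 60 cores -> processing x{pp76 / pp60:.2f}, generation x{tg76 / tg60:.2f}")

# Effect of going from F16 to Q4_0 on generation speed, per GPU variant.
quant_speedup = {}
for cores in (60, 76):
    quant_speedup[cores] = results[("Q4_0", cores)][1] / results[("F16", cores)][1]
    print(f"{cores} cores: Q4_0 generates x{quant_speedup[cores]:.2f} faster than F16")
```

With these numbers, the extra 16 GPU cores speed up processing by roughly 22 to 24% but generation by only about 3 to 7%, while switching from F16 to Q4_0 makes generation about 2.2 to 2.3 times faster. That pattern suggests processing is limited mostly by GPU compute, and generation mostly by how much data has to be read per token.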

Llama 3 8B on M2 Ultra

| Model | Quantization | Processing (tokens/sec) | Generation (tokens/sec) | Bandwidth (GB/s) | GPU Cores |
|---|---|---|---|---|---|
| Llama 3 8B | Q4_K_M | 1023.89 | 76.28 | 800 | 76 |
| Llama 3 8B | F16 | 1202.74 | 36.25 | 800 | 76 |

Llama 3 70B on M2 Ultra

| Model | Quantization | Processing (tokens/sec) | Generation (tokens/sec) | Bandwidth (GB/s) | GPU Cores |
|---|---|---|---|---|---|
| Llama 3 70B | Q4_K_M | 117.76 | 12.13 | 800 | 76 |
| Llama 3 70B | F16 | 145.82 | 4.71 | 800 | 76 |
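A quick way to judge how the M2 Ultra copes with the jump from 8B to 70B is to compare the drop in generation speed with the growth in model size. This Python sketch uses the Llama 3 numbers from the two tables and the nominal parameter counts in the model names (actual counts differ slightly).

```python
# Llama 3 generation speeds (tokens/sec, 76-core GPU) from the tables.
generation = {
    ("8B", "Q4_K_M"): 76.28,
    ("8B", "F16"): 36.25,
    ("70B", "Q4_K_M"): 12.13,
    ("70B", "F16"): 4.71,
}

size_ratio = 70 / 8  # nominal parameter-count ratio taken from the model names

slowdown = {}
for quant in ("Q4_K_M", "F16"):
    slowdown[quant] = generation[("8B", quant)] / generation[("70B", quant)]
    print(f"{quant}: 70B generates x{slowdown[quant]:.1f} slower than 8B "
          f"(nominal size ratio x{size_ratio:.2f})")
```

Generation slows by about 6.3x at Q4_K_M and 7.7x at F16, somewhat less than the 8.75x growth in nominal size. The 70B model runs, but it is noticeably slower to respond.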

Key Takeaways:

- M2 Ultra excels with Llama 2 7B: The M2 Ultra delivers strong performance with Llama 2 7B, processing prompts at more than 1,000 tokens/sec in every configuration and generating up to 94 tokens/sec with Q4_0 quantization on the 76-core GPU.

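- Llama 3 runs at both 8B and 70B: The M2 Ultra can run both Llama 3 sizes, but generation slows sharply for the larger model, from 76 tokens/sec (Q4_K_M) and 36 tokens/sec (F16) at 8B to about 12 and under 5 tokens/sec at 70B. Running the 70B model at F16 also needs a high-memory configuration, because its weights alone take roughly 140GB.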

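- Quantization matters for generation: Lower-bit quantization speeds up token generation substantially; for Llama 2 7B, Q4_0 generates text about 2.2 to 2.3 times faster than F16. Prompt processing does not benefit the same way and is actually fastest at F16.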

Understanding the Numbers: Decoding Token Speed

Token speed refers to how many tokens (whole words or pieces of words) the model can process or generate per second. Imagine a machine churning out words: the higher the token speed, the faster it churns them out!

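- Processing Speed: This measures how quickly the model reads in the input prompt. All prompt tokens can be handled in parallel, which is why this number is much higher than the generation speed.

- Generation Speed: This measures how quickly the model produces new tokens in response. Tokens are generated one at a time, each depending on the ones before it, so this is the speed you notice while watching a reply appear.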
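The two speeds combine into a rough response-time estimate: the prompt is processed first, then the reply is generated token by token. The Python sketch below assumes the benchmark speeds hold for the request size, which they may not at long context lengths; the function name and the request sizes are hypothetical.

```python
def estimate_latency(prompt_tokens, output_tokens, processing_tps, generation_tps):
    """Rough wall-clock time in seconds: prompt processing plus token-by-token generation.

    Ignores model loading, sampling overhead, and slowdowns at long context lengths.
    """
    prefill_seconds = prompt_tokens / processing_tps
    generation_seconds = output_tokens / generation_tps
    return prefill_seconds + generation_seconds


# Hypothetical request: a 1,000-token prompt and a 250-token reply,
# using the Llama 2 7B Q4_0 numbers for the 76-core GPU.
seconds = estimate_latency(1000, 250, processing_tps=1238.48, generation_tps=94.27)
print(f"estimated time: {seconds:.2f} s")
```

For this request the estimate comes to about 3.5 seconds, roughly three quarters of which is spent generating the reply. That is why generation speed usually dominates how responsive a local model feels.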

Exploring the Impact of Quantization

Quantization condenses the information stored in an LLM by representing each weight with fewer bits, which makes the model smaller and, for text generation, faster. It's like compressing a video file so it takes less space and loads faster. While quantization can reduce the model's accuracy, the gains in speed and memory often outweigh the slight loss in accuracy.
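To see why smaller weights translate into faster generation, here is a back-of-the-envelope Python sketch. It assumes roughly 6.74 billion parameters for Llama 2 7B, the per-block storage costs of llama.cpp's Q8_0 (8.5 bits per weight) and Q4_0 (4.5 bits per weight) formats, and that every weight is read once per generated token. It ignores the KV cache, on-chip caches, and compute limits, so the results are rough upper bounds, not predictions.

```python
# Approximate storage cost per weight, including per-block scales (assumed llama.cpp layouts).
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}

PARAMS = 6.74e9        # approximate parameter count of Llama 2 7B (assumption)
BANDWIDTH_GBPS = 800   # M2 Ultra memory bandwidth, as listed in the tables

estimates = {}
for quant, bpw in BITS_PER_WEIGHT.items():
    size_gb = PARAMS * bpw / 8 / 1e9
    # If each generated token must stream all weights from memory once,
    # bandwidth divided by model size bounds the generation speed.
    ceiling_tps = BANDWIDTH_GBPS / size_gb
    estimates[quant] = (size_gb, ceiling_tps)
    print(f"{quant}: ~{size_gb:.1f} GB of weights, generation ceiling ~{ceiling_tps:.0f} tokens/sec")
```

The measured Llama 2 7B generation speeds on the 76-core GPU (41.02, 66.64, and 94.27 tokens/sec) stay below these ceilings of roughly 59, 112, and 211 tokens/sec but rise in the same order. That fits the idea that generation is limited largely by memory bandwidth, and that quantization helps by shrinking the number of bytes read per token.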

Using the M2 Ultra for AI Development

The M2 Ultra's performance with various LLMs opens up exciting possibilities for AI development. Here's how it can benefit developers:

- Rapid Experimentation: Because it can run models as large as 70B parameters locally, the M2 Ultra makes it quick to compare different models, quantization levels, and prompts. Imagine being able to test out new ideas within minutes instead of hours!

- Optimized Development: The M2 Ultra's processing power allows developers to quickly iterate on their code, improving the efficiency and performance of their LLM applications.

- Enhanced User Experience: Faster processing and generation times result in smoother and more responsive experiences for users interacting with AI applications.

Conclusion: Unleashing the Power of LLMs

The Apple M2 Ultra demonstrates remarkable potential for AI development, especially when it comes to running and experimenting with LLMs. Its large pool of high-bandwidth unified memory lets it run models from Llama 2 7B up to Llama 3 70B, making it a suitable platform for developers who want to work with LLMs locally.

FAQ

Q: What is a large language model (LLM)?

A: An LLM is a type of artificial intelligence that is trained on massive amounts of text data. It can generate text, translate languages, and answer your questions in a comprehensive and informative way. Think of it like a very advanced AI chatbot.

Q: What are the benefits of using an M2 Ultra for AI development?

A: The M2 Ultra offers several advantages for AI developers:

- Faster processing and generation speeds: These allow for quicker experimentation and iteration when building AI applications.

- Support for larger models: With up to 192GB of unified memory, the M2 Ultra can run models as large as Llama 3 70B, enabling developers to explore more advanced AI capabilities.

- Optimized development environment: The M2 Ultra's efficiency and capabilities provide a robust platform for AI development.

Q: How can I learn more about LLMs and AI development?

A: There are plenty of resources available online to help you get started:

- Online Courses: Platforms like Coursera, edX, and Udacity offer courses on AI and LLM development.

- Documentation: Consult the official documentation for various LLM frameworks and tools.

- Community Forums: Connect with other developers and experts on forums like Reddit and Stack Overflow.

Keywords

LLM, AI, Apple M2 Ultra, Llama 2, Llama 3, Token Speed, Processing Speed, Generation Speed, Quantization, F16, Q8_0, Q4_0, Q4_K_M, AI Development, GPU, CPU, Bandwidth, GPU Cores, Performance Comparison