Can You Do AI Development on an Apple M2 Pro?

Introduction

The world of artificial intelligence (AI) is buzzing with excitement, and large language models (LLMs) are at the heart of it all. These powerful tools are capable of generating human-quality text, translating languages, writing different kinds of creative content, and answering your questions in an informative way. But can you unleash the full potential of these AI models on a humble Apple M2 Pro chip? Buckle up, because we're about to dive into the fascinating world of AI development with Apple silicon.

The Apple M2 Pro: A Powerful Chip for AI Development

The Apple M2 Pro chip is a powerhouse designed for demanding tasks, including video editing, gaming, and yes, even AI development. Its GPU (16 or 19 cores) shares up to 32 GB of unified memory with the CPU at 200 GB/s, so model weights don't have to be copied to a separate graphics card. That combination is what lets the M2 Pro run LLMs at usable speeds.

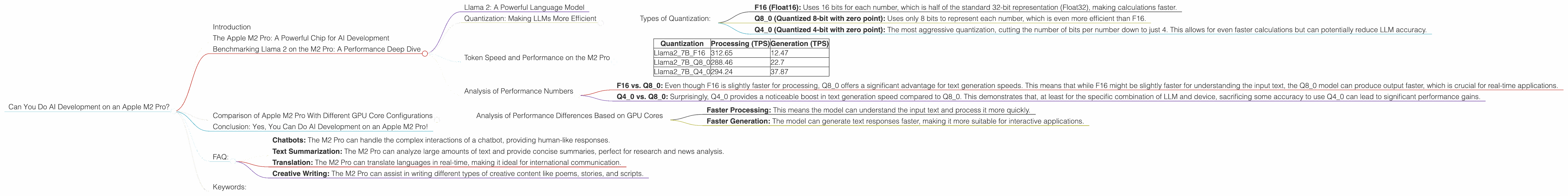

Benchmarking Llama 2 on the M2 Pro: A Performance Deep Dive

To understand the M2 Pro's capabilities for AI development, we'll be focusing on a specific LLM: Llama 2. This model has become a popular choice for researchers and developers, and its performance on the M2 Pro provides a good representation of the chip's AI prowess.

Llama 2: A Powerful Language Model

Llama 2 is a family of language models released by Meta in three sizes: 7B, 13B, and 70B parameters. In this analysis we focus on the 7B variant, which is the smallest and the fastest to run.

Quantization: Making LLMs More Efficient

One of the key techniques used to optimize the performance of LLMs on devices like the M2 Pro is quantization. It stores the model's "weights" at lower numeric precision so they take up less memory, without losing too much accuracy. Think of it like compressing a large image file without sacrificing too much detail.

Types of Quantization:

- F16 (Float16): Uses 16 bits for each number, half of the standard 32-bit representation (Float32). This halves the memory the weights need and serves as the unquantized baseline here.

- Q8_0 (8-bit quantization): Stores each weight as an 8-bit integer, with one shared scale factor per small block of weights. In the GGML/llama.cpp naming scheme these labels come from, the _0 suffix means scale only, with no zero point or offset. The result is roughly half the size of F16.

- Q4_0 (4-bit quantization): The most aggressive of the three, using the same scale-only scheme with just 4 bits per weight. This makes the model smaller and faster at generating text, but can reduce accuracy.
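To make the idea concrete, here is a minimal sketch of scale-only block quantization in plain Python. It is a simplified illustration, not the exact GGML implementation: the block size of 32, the function names, and the random test weights are all assumptions made for this example.

```python
import random

BLOCK_SIZE = 32  # assumed block length for this illustration


def quantize_block(values, bits):
    """Symmetric quantization: one float scale per block, one signed integer per value."""
    qmax = 2 ** (bits - 1) - 1  # 127 for 8 bits, 7 for 4 bits
    amax = max(abs(v) for v in values)
    scale = amax / qmax if amax > 0 else 1.0
    quants = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return scale, quants


def dequantize_block(scale, quants):
    """Recover approximate float values from the scale and the integers."""
    return [scale * q for q in quants]


def mean_abs_error(values, bits):
    """Average absolute difference between original and round-tripped values."""
    scale, quants = quantize_block(values, bits)
    restored = dequantize_block(scale, quants)
    return sum(abs(a - b) for a, b in zip(values, restored)) / len(values)


random.seed(0)
# Hypothetical weights: small Gaussian values, chosen only for demonstration.
weights = [random.gauss(0.0, 0.02) for _ in range(BLOCK_SIZE)]
err8 = mean_abs_error(weights, 8)
err4 = mean_abs_error(weights, 4)
print(f"8-bit mean abs error: {err8:.6f}")
print(f"4-bit mean abs error: {err4:.6f}")
```

Running it shows the trade-off described above: the 4-bit version has far fewer integer levels to work with, so its round-trip error is noticeably larger than the 8-bit version's.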

Token Speed and Performance on the M2 Pro

The table below shows the tokens per second (TPS) achieved by Llama 2 7B at different quantization levels on an Apple M2 Pro with 16 GPU cores. Processing is the speed at which the model reads the prompt, and Generation is the speed at which it produces new tokens.

| Model and quantization | Processing (TPS) | Generation (TPS) |
|---|---|---|
| Llama 2 7B F16 | 312.65 | 12.47 |
| Llama 2 7B Q8_0 | 288.46 | 22.7 |
| Llama 2 7B Q4_0 | 294.24 | 37.87 |

Analysis of Performance Numbers

Looking at the data, the following observations emerge:

- F16 vs. Q8_0: F16 is slightly faster for prompt processing (312.65 vs. 288.46 TPS), but Q8_0 generates text about 1.8 times faster (22.7 vs. 12.47 TPS). So while F16 reads the input a little quicker, Q8_0 produces output much faster, which is what matters for real-time applications.

- Q4_0 vs. Q8_0: Q4_0 raises generation speed by a further 67% or so (37.87 vs. 22.7 TPS), about three times the F16 rate. A likely explanation is that generation is limited by how fast the weights can be read from memory, so fewer bits per weight means more tokens per second. For this combination of model and device, giving up some accuracy for Q4_0 buys a large speedup.
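The comparison can be reproduced with a few lines of Python. This is a rough sketch using the 16-core numbers from the table above. The 512-token prompt and 256-token response are hypothetical request sizes chosen only for illustration, and the estimate ignores model load time and other overhead.

```python
# Tokens per second from the 16-core table: (prompt processing, generation)
tps = {
    "F16": (312.65, 12.47),
    "Q8_0": (288.46, 22.70),
    "Q4_0": (294.24, 37.87),
}


def response_seconds(fmt, prompt_tokens, output_tokens):
    """Estimated time to read the prompt and then generate the output."""
    processing, generation = tps[fmt]
    return prompt_tokens / processing + output_tokens / generation


base_generation = tps["F16"][1]
for fmt, (_, generation) in tps.items():
    speedup = generation / base_generation
    total = response_seconds(fmt, prompt_tokens=512, output_tokens=256)
    print(f"{fmt}: {speedup:.2f}x generation vs F16, {total:.1f} s for the example request")
```

Because generation is so much slower than processing, the generation column dominates the total time for any response longer than a few tokens.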

Comparison of Apple M2 Pro With Different GPU Core Configurations

The M2 Pro comes with either 16 or 19 GPU cores, and the core count affects performance, mostly for prompt processing. The table below compares the two configurations.

| GPU Cores | F16 Processing (TPS) | F16 Generation (TPS) | Q8_0 Processing (TPS) | Q8_0 Generation (TPS) | Q4_0 Processing (TPS) | Q4_0 Generation (TPS) |
|---|---|---|---|---|---|---|
| 16 | 312.65 | 12.47 | 288.46 | 22.7 | 294.24 | 37.87 |
| 19 | 384.38 | 13.06 | 344.5 | 23.01 | 341.19 | 38.86 |

Analysis of Performance Differences Based on GPU Cores

The 19-core M2 Pro outperforms the 16-core configuration, but the gain is uneven:

- Faster Processing: Prompt processing is roughly 16% to 23% faster, in line with the 19% increase in GPU cores. The model reads the input text more quickly.

- Slightly Faster Generation: Generation improves by only about 1% to 5%, which suggests it is limited by memory bandwidth rather than GPU compute. For interactive applications, the two configurations will feel similar.
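To see how unevenly the extra cores pay off, the percentage gains can be computed directly from the comparison table. The sketch below uses only the numbers reported above; the dictionary layout and function name are just one convenient way to organize them.

```python
# (processing, generation) tokens per second from the GPU core comparison table
results = {
    16: {"F16": (312.65, 12.47), "Q8_0": (288.46, 22.70), "Q4_0": (294.24, 37.87)},
    19: {"F16": (384.38, 13.06), "Q8_0": (344.50, 23.01), "Q4_0": (341.19, 38.86)},
}


def percent_gain(fmt, column):
    """Relative improvement of the 19-core chip over the 16-core chip, in percent."""
    slow = results[16][fmt][column]
    fast = results[19][fmt][column]
    return (fast / slow - 1.0) * 100.0


core_gain = (19 / 16 - 1.0) * 100.0
print(f"GPU cores: +{core_gain:.1f}%")
for fmt in results[16]:
    processing = percent_gain(fmt, 0)
    generation = percent_gain(fmt, 1)
    print(f"{fmt}: processing +{processing:.1f}%, generation +{generation:.1f}%")
```

If your workload is mostly long prompts with short answers, the 19-core chip helps. If it is mostly long generated answers, the difference is small.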

Conclusion: Yes, You Can Do AI Development on an Apple M2 Pro!

The Apple M2 Pro clearly demonstrates its capabilities for local LLM work. With prompt processing around 300 tokens per second and generation of up to roughly 38 tokens per second on Llama 2 7B, it is comfortable for running and prototyping with quantized models. Note that these benchmarks measure inference only, so they say nothing about training speed. Keep in mind that many factors influence LLM performance, including the model size, the specific task being performed, and the desired level of accuracy.

FAQ:

Q: How does the M2 Pro compare to other devices for AI development?

A: That's a complex question, and the answer depends on the specific LLM and the desired level of accuracy. If you're working with a 7B model, the M2 Pro is a strong choice, especially with a Q4_0-quantized model, which generated about 38 tokens per second in these tests. However, if you're working with larger models like 70B, you will need hardware with more memory, such as one or more dedicated GPUs.

Q: Is the M2 Pro suitable for everyone working with LLMs?

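A: Not necessarily. The M2 Pro performs well with small models such as the 7B variant tested here. It becomes a tighter fit for 13B models, which this article did not benchmark, and it is not a realistic choice for 70B models, whose weights alone exceed the chip's maximum of 32 GB of unified memory even at 4-bit quantization. It may also fall short if you need the accuracy of unquantized weights on anything larger than 7B.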
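A quick back-of-the-envelope check shows why model size matters so much. The sketch below estimates the size of the weights alone, under a few assumptions that are not from the benchmarks above: nominal parameter counts, 8.5 and 4.5 bits per weight for Q8_0 and Q4_0 (the GGML block layout of 32 weights plus one 16-bit scale), and the 16 GB and 32 GB memory options of the M2 Pro. Real usage is higher because of the context cache and the operating system.

```python
# Approximate bits stored per weight. The 8.5 and 4.5 figures assume the
# GGML block layout (32 weights plus one 16-bit scale per block).
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}

# Nominal parameter counts; the real models differ slightly.
MODELS = {"7B": 7e9, "13B": 13e9, "70B": 70e9}

MEMORY_OPTIONS_GB = (16, 32)  # unified memory sizes offered on the M2 Pro


def weights_gb(params, bits_per_weight):
    """Size of the weights alone, in decimal gigabytes."""
    return params * bits_per_weight / 8 / 1e9


for name, params in MODELS.items():
    for fmt, bits in BITS_PER_WEIGHT.items():
        size = weights_gb(params, bits)
        fits = [f"{m} GB" for m in MEMORY_OPTIONS_GB if size < m]
        if fits:
            verdict = "smaller than " + " and ".join(fits)
        else:
            verdict = f"larger than {max(MEMORY_OPTIONS_GB)} GB"
        print(f"Llama 2 {name} {fmt}: ~{size:.1f} GB of weights, {verdict}")
```

Being smaller than the memory size is a necessary condition, not a guarantee: leave several gigabytes of headroom before assuming a model will actually run well.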

Q: What are some real-world applications of LLMs on the M2 Pro?

A: The M2 Pro can power applications like:

- Chatbots: The M2 Pro can handle the complex interactions of a chatbot, providing human-like responses.

- Text Summarization: The M2 Pro can analyze large amounts of text and provide concise summaries, perfect for research and news analysis.

- Translation: The M2 Pro can translate languages in real-time, making it ideal for international communication.

- Creative Writing: The M2 Pro can assist in writing different types of creative content like poems, stories, and scripts.

Keywords:

Apple M2 Pro, AI Development, LLM, Large Language Model, Llama 2, Quantization, F16, Q8_0, Q4_0, Tokens per Second (TPS), GPU Cores, Performance, Benchmarking, Chatbots, Text Summarization, Translation, Creative Writing.