Can You Do AI Development on an Apple M2 Max?

Introduction: Unleashing the Power of Local AI Development

Local AI development is becoming increasingly popular, enabling developers to train and deploy sophisticated models without relying on cloud resources. The Apple M2 Max, with its powerful GPU and impressive memory bandwidth, is a promising candidate for handling the demanding workloads of large language model (LLM) development. But can it really cut it? Can you run and train LLMs on the M2 Max and, even more importantly, achieve performance that's worthy of your time and resources? This article dives deep into the world of LLMs and Apple Silicon, exploring how the M2 Max performs on different LLM configurations.

Comparing Apple M2 Max Performance for Different LLM Models

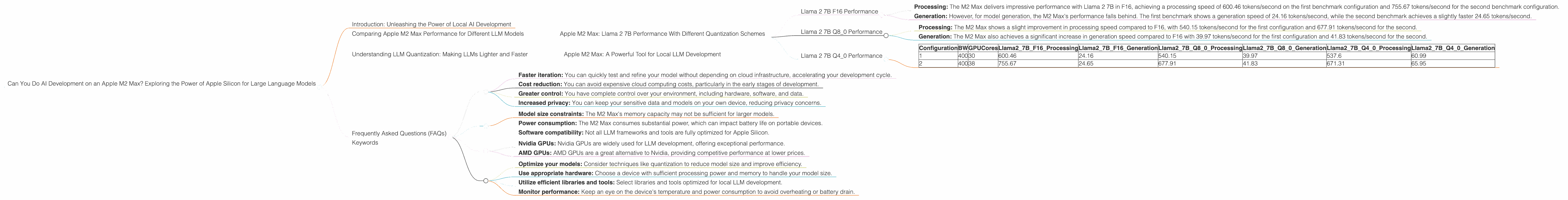

This section analyzes the performance of the Apple M2 Max on Llama 2 7B. Each subsection covers one quantization scheme and reports both processing and generation speeds.

Apple M2 Max: Llama 2 7B Performance With Different Quantization Schemes

The M2 Max is a powerful chip, but its performance can vary significantly depending on the model size and the quantization scheme used. Let's examine the M2 Max's performance on Llama 2 7B using different quantization methods: F16 (half precision), Q8_0 (8-bit quantization), and Q4_0 (4-bit quantization).

Llama 2 7B F16 Performance

F16 (half precision) is a popular choice for LLM development as it strikes a good balance between performance and accuracy. Let's see how the M2 Max handles this:

- Processing: The M2 Max delivers impressive performance with Llama 2 7B in F16, achieving a processing speed of 600.46 tokens/second on the first benchmark configuration (30 GPU cores) and 755.67 tokens/second on the second (38 GPU cores).

- Generation: Token generation, however, is much slower. The first configuration reaches 24.16 tokens/second, while the second is only slightly faster at 24.65 tokens/second.

Llama 2 7B Q8_0 Performance

Q8_0, an 8-bit quantization scheme, further reduces memory footprint and can potentially improve performance. Let's dive into the M2 Max's performance with Q8_0:

- Processing: The M2 Max is slightly slower at processing than in F16, with 540.15 tokens/second for the first configuration and 677.91 tokens/second for the second, a drop of roughly 10%.

- Generation: The M2 Max also achieves a significant increase in generation speed compared to F16 with 39.97 tokens/second for the first configuration and 41.83 tokens/second for the second.

Llama 2 7B Q4_0 Performance

Q4_0, a more aggressive 4-bit quantization scheme, comes with even smaller memory footprints but may impact accuracy. Let's see if the M2 Max can handle it:

- Processing: In Q4_0, the M2 Max achieves a processing speed of 537.6 tokens/second in the first configuration and 671.31 tokens/second in the second configuration.

- Generation: The M2 Max delivers a substantial increase in generation speed with 60.99 tokens/second for the first configuration and 65.95 tokens/second for the second configuration.

Table: Llama 2 7B Performance on Apple M2 Max

| Configuration | Memory bandwidth (GB/s) | GPU cores | F16 processing (tokens/s) | F16 generation (tokens/s) | Q8_0 processing (tokens/s) | Q8_0 generation (tokens/s) | Q4_0 processing (tokens/s) | Q4_0 generation (tokens/s) |
|---|---|---|---|---|---|---|---|---|
| 1 | 400 | 30 | 600.46 | 24.16 | 540.15 | 39.97 | 537.6 | 60.99 |
| 2 | 400 | 38 | 755.67 | 24.65 | 677.91 | 41.83 | 671.31 | 65.95 |
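To make the table easier to compare, here is a small sketch that expresses each quantized result as a multiple of the F16 result. The numbers are copied from the table; the dictionary layout and the `relative_to_f16` helper are illustrative names, not part of any benchmark tool.

```python
# Benchmark figures copied from the table above (tokens/second).
RESULTS = {
    "30-core": {
        "F16": {"processing": 600.46, "generation": 24.16},
        "Q8_0": {"processing": 540.15, "generation": 39.97},
        "Q4_0": {"processing": 537.6, "generation": 60.99},
    },
    "38-core": {
        "F16": {"processing": 755.67, "generation": 24.65},
        "Q8_0": {"processing": 677.91, "generation": 41.83},
        "Q4_0": {"processing": 671.31, "generation": 65.95},
    },
}


def relative_to_f16(config: str, quant: str, phase: str) -> float:
    """Return the throughput of `quant` divided by the F16 throughput."""
    row = RESULTS[config]
    return row[quant][phase] / row["F16"][phase]


if __name__ == "__main__":
    for config in RESULTS:
        for quant in ("Q8_0", "Q4_0"):
            proc = relative_to_f16(config, quant, "processing")
            gen = relative_to_f16(config, quant, "generation")
            print(f"{config} {quant}: processing x{proc:.2f}, generation x{gen:.2f}")
```

On the 30-core configuration, for example, Q4_0 generation comes out at about 2.5 times the F16 speed, while Q4_0 processing is about 0.9 times the F16 speed.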

Interpretation:

- The Apple M2 Max demonstrates strong processing capabilities with Llama 2 7B, achieving between roughly 540 and 755 tokens/second across the quantization schemes tested.

- Generation is far slower than processing and depends heavily on quantization: about 24 tokens/second in F16, about 40 in Q8_0, and 61 to 66 in Q4_0. For interactive use, Q4_0 is the most comfortable of the three.
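One possible explanation for the gap between processing and generation is a common rule of thumb: when generating one token at a time, the hardware has to read roughly all of the model's weights from memory for every token, so memory bandwidth sets a ceiling. The sketch below applies that rule to the 400 bandwidth figure from the table, treated as GB/s. The parameter count (about 6.74 billion) and the bits-per-weight values (8.5 and 4.5, assuming each block of 32 weights also stores a 16-bit scale) are assumptions, not figures from the benchmark.

```python
BANDWIDTH_GB_S = 400.0  # bandwidth figure from the table, assumed to be GB/s
N_PARAMS = 6.74e9       # assumed parameter count for Llama 2 7B

# Assumed bits per weight for each format (quantized blocks include a scale).
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}

# Measured generation speeds for the 38-core configuration (tokens/second).
MEASURED = {"F16": 24.65, "Q8_0": 41.83, "Q4_0": 65.95}


def generation_ceiling(quant: str) -> float:
    """Upper bound on tokens/second if every token reads all weights once."""
    model_bytes = N_PARAMS * BITS_PER_WEIGHT[quant] / 8
    return BANDWIDTH_GB_S * 1e9 / model_bytes


if __name__ == "__main__":
    for quant, measured in MEASURED.items():
        ceiling = generation_ceiling(quant)
        share = measured / ceiling
        print(f"{quant}: ceiling {ceiling:.1f} tok/s, measured {measured} ({share:.0%})")
```

Under these assumptions the F16 ceiling is just under 30 tokens/second, which is in line with the measured 24 to 25 tokens/second and with the observation that generation speed barely changes between the 30-core and 38-core configurations.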

Key Takeaways:

- The M2 Max can handle Llama 2 7B with remarkable processing performance.

- Quantization affects the two phases differently: processing speed drops by roughly 10% when moving from F16 to Q8_0 or Q4_0, while generation speed rises substantially. Developers can therefore trade some accuracy for faster generation depending on their needs.

- The M2 Max is a viable option for local LLM development with Llama 2 7B, offering a good balance between speed and accuracy.

Understanding LLM Quantization: Making LLMs Lighter and Faster

Imagine your LLM is a giant, complex recipe book. The instructions are written in a precise language, but the book is HUGE! It takes forever to find the right recipe and make copies of it. Quantization helps make the book smaller and faster to use:

- Lower precision: Instead of using the full recipe (full-precision number), you only use a simplified version (low-precision number). You might lose some detail, but the book is much smaller and easier to handle.

- Smaller memory: The smaller recipe book takes up less storage space, meaning you can fit more books on your bookshelf!

- Faster access: You can find recipes and make copies faster with a smaller book, saving you time.

LLM quantization works similarly. By using reduced-precision numbers, the model becomes smaller and faster, offering a trade-off between accuracy and speed. Different formats, such as F16, Q8_0, and Q4_0, sit at different points on that trade-off.
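To make the idea concrete, here is a minimal sketch of block-wise 8-bit quantization: a block of weights is stored as small integers plus one shared scale factor. It is a simplified illustration, not the exact format used in the benchmarks; the block size of 32 and the function names are assumptions chosen for the example.

```python
import random

BLOCK_SIZE = 32  # weights per block; an assumption for this sketch


def quantize_block(block):
    """Map floats to signed 8-bit integers plus one shared scale."""
    max_abs = max(abs(x) for x in block)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    return scale, [round(x / scale) for x in block]


def dequantize_block(scale, quants):
    """Recover approximate floats from the integers and the scale."""
    return [q * scale for q in quants]


if __name__ == "__main__":
    random.seed(0)
    weights = [random.gauss(0.0, 0.02) for _ in range(BLOCK_SIZE)]
    scale, quants = quantize_block(weights)
    restored = dequantize_block(scale, quants)
    worst = max(abs(a - b) for a, b in zip(weights, restored))
    print(f"scale={scale:.6f}, worst absolute error={worst:.6f}")
```

Each restored weight differs from the original by at most half the scale, which is the "lost detail" from the recipe-book analogy. Using fewer bits per weight makes the model smaller but increases that rounding error.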

Apple M2 Max: A Powerful Tool for Local LLM Development

The M2 Max proves its worth as a capable platform for local LLM development, delivering strong results with Llama 2 7B across the F16, Q8_0, and Q4_0 formats. Its combination of a powerful GPU, high memory bandwidth, and efficient architecture makes it a compelling choice for developers seeking to experiment and iterate on LLMs without relying on cloud resources.

Frequently Asked Questions (FAQs)

1. What are the benefits of developing LLMs locally?

Developing LLMs locally offers several benefits:

- Faster iteration: You can quickly test and refine your model without depending on cloud infrastructure, accelerating your development cycle.

- Cost reduction: You can avoid expensive cloud computing costs, particularly in the early stages of development.

- Greater control: You have complete control over your environment, including hardware, software, and data.

- Increased privacy: You can keep your sensitive data and models on your own device, reducing privacy concerns.

2. What are the limitations of using the Apple M2 Max for LLM development?

While the M2 Max is excellent, certain limitations exist:

- Model size constraints: The M2 Max's memory capacity may not be sufficient for larger models.

- Power consumption: Sustained LLM workloads keep the GPU busy, which can noticeably shorten battery life on portable devices.

- Software compatibility: Not all LLM frameworks and tools are fully optimized for Apple Silicon.
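For the model size constraint, a rough back-of-the-envelope check can help before downloading anything. The sketch below estimates the size of the weights alone (ignoring context cache and runtime overhead) and compares it with a memory budget. The bits-per-weight values, the nominal parameter counts, the 32 GB machine, and the 75% headroom factor are all illustrative assumptions.

```python
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}  # assumed values


def weight_size_gb(n_params: float, quant: str) -> float:
    """Approximate size of the weights alone, in gigabytes (10**9 bytes)."""
    return n_params * BITS_PER_WEIGHT[quant] / 8 / 1e9


def fits(n_params: float, quant: str, memory_gb: float, headroom: float = 0.75) -> bool:
    """True if the weights fit within a fraction of the machine's memory."""
    return weight_size_gb(n_params, quant) <= memory_gb * headroom


if __name__ == "__main__":
    memory_gb = 32.0  # hypothetical machine configuration
    for name, n_params in [("7B", 7e9), ("13B", 13e9), ("70B", 70e9)]:
        for quant in BITS_PER_WEIGHT:
            size = weight_size_gb(n_params, quant)
            verdict = "fits" if fits(n_params, quant, memory_gb) else "too large"
            print(f"{name} {quant}: {size:.1f} GB -> {verdict}")
```

With these assumptions, a 7B model fits comfortably in every format, while a 70B model exceeds the budget even at 4 bits per weight.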

3. What other devices are suitable for local LLM development?

Besides the Apple M2 Max, other devices are well-suited for local LLM development:

- Nvidia GPUs: Nvidia GPUs are widely used for LLM development, offering exceptional performance.

- AMD GPUs: AMD GPUs are a great alternative to Nvidia, providing competitive performance at lower prices.

4. What are the best practices for local LLM development?

Here are some best practices for local LLM development:

- Optimize your models: Consider techniques like quantization to reduce model size and improve efficiency.

- Use appropriate hardware: Choose a device with sufficient processing power and memory to handle your model size.

- Utilize efficient libraries and tools: Select libraries and tools optimized for local LLM development.

- Monitor performance: Keep an eye on the device's temperature and power consumption to avoid overheating or battery drain.
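One simple way to monitor performance without special tooling is to measure tokens per second yourself and watch whether the number drops during long sessions, which may hint at thermal throttling. The sketch below times a generation call; `fake_generate` is a hypothetical stand-in that you would replace with a call into whatever framework you use.

```python
import time
from typing import Callable, List


def tokens_per_second(generate: Callable[[int], List[str]], n_tokens: int) -> float:
    """Time one call to `generate` and return produced tokens per second."""
    start = time.perf_counter()
    tokens = generate(n_tokens)
    elapsed = time.perf_counter() - start
    return len(tokens) / elapsed


def fake_generate(n_tokens: int) -> List[str]:
    """Hypothetical stand-in for a real model call; sleeps 1 ms per token."""
    out = []
    for i in range(n_tokens):
        time.sleep(0.001)
        out.append(f"tok{i}")
    return out


if __name__ == "__main__":
    rate = tokens_per_second(fake_generate, 50)
    print(f"{rate:.1f} tokens/second")
```

Running the measurement at regular intervals and logging the result gives a simple trend line to compare against the benchmark figures in this article.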

Keywords

Apple M2 Max, LLM, AI development, Llama 2 7B, quantization, local development, F16, Q8_0, Q4_0, GPU, memory bandwidth, performance, processing speed, generation speed, tokens/second, FAQs, best practices, Nvidia, AMD, cloud computing