Can You Do AI Development on an Apple M1?

Introduction: The Rise of Local LLMs and the Apple M1's Potential

The world of artificial intelligence (AI) is experiencing a revolutionary shift with the advent of Large Language Models (LLMs). These AI systems, trained on massive datasets, can generate human-like text, translate languages, write different kinds of creative content, and answer your questions in an informative way. Previously, running LLMs required powerful cloud computing infrastructure, which put them out of reach for many developers. However, the landscape is changing with the emergence of "local LLMs": models that can run directly on your computer.

This article explores the capabilities of the Apple M1 chip, a powerful processor found in many Macs and iPads, for AI and LLM development. We'll analyze the M1's performance with different LLMs, focusing on its ability to run them locally. Joining us on this journey of discovery will be the Llama 2 and Llama 3 families of LLMs! Prepare to witness the Apple M1 put its processing power to the test!

Apple M1: A Powerful Chip for AI Development

The Apple M1 chip, released in 2020, is a significant leap forward in mobile computing. It boasts an impressive combination of CPU, GPU, and Neural Engine, making it a formidable contender for AI development. But how does it stack up against the demands of powerful LLMs like the Llama 2 and Llama 3? Let's dive into the details.

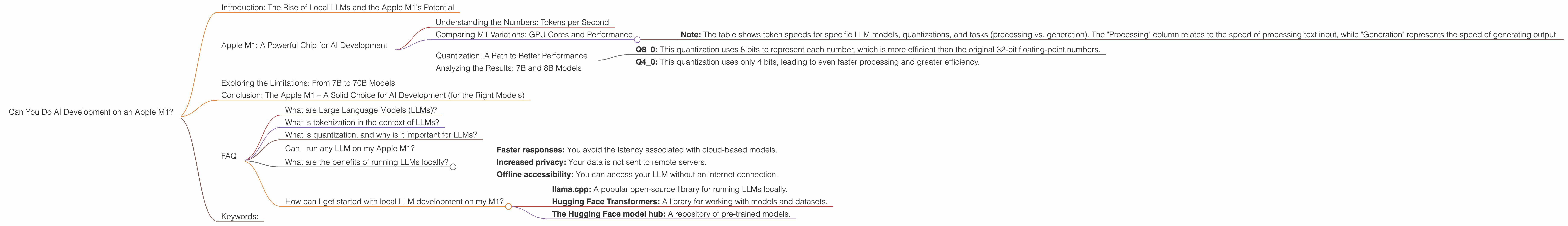

Understanding the Numbers: Tokens per Second

To understand the performance of the Apple M1 in running LLMs, we need to look at its "tokens per second" (TPS) – essentially, the speed at which it can process text data. This is a crucial metric because LLMs process language in the form of tokens, which are like individual units of meaning in text. The higher the TPS, the faster the model can process information and generate responses.
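As a rough illustration of what a TPS figure means in practice, the sketch below converts a token count and a throughput into a wait time. The helper name `seconds_for` and the workload sizes (a 500-token prompt and a 200-token reply) are assumptions made for the example; the two throughput values are the Llama 2 7B Q4_0 figures reported in this article for the 7-core M1.

```python
def seconds_for(n_tokens: int, tokens_per_second: float) -> float:
    """Time needed to handle n_tokens at a given throughput."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return n_tokens / tokens_per_second


# Hypothetical workload: a 500-token prompt and a 200-token reply.
prompt_tokens, reply_tokens = 500, 200

# Throughputs reported in this article (Llama 2 7B Q4_0, 7-core M1).
processing_tps, generation_tps = 107.81, 14.19

prompt_time = seconds_for(prompt_tokens, processing_tps)
reply_time = seconds_for(reply_tokens, generation_tps)
print(f"prompt: {prompt_time:.1f} s, reply: {reply_time:.1f} s")
```

Under these assumptions, reading the prompt takes under five seconds, while writing the reply takes about fourteen, so the generation figure is usually the one you feel when chatting with a model.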

Comparing M1 Variations: GPU Cores and Performance

The Apple M1 comes in two GPU configurations, with either 7 or 8 GPU cores. This affects the chip's performance, particularly for tasks involving parallel processing like LLM inference. Let's take a look at the performance of the M1 with 7 and 8 GPU cores:

| Apple M1 Configuration | GPU Cores | Llama 2 7B Q8_0 TPS (Processing) | Llama 2 7B Q8_0 TPS (Generation) | Llama 2 7B Q4_0 TPS (Processing) | Llama 2 7B Q4_0 TPS (Generation) | Llama 3 8B Q4_K_M TPS (Processing) | Llama 3 8B Q4_K_M TPS (Generation) |
|---|---|---|---|---|---|---|---|
| M1 (7 GPU Cores) | 7 | 108.21 | 7.92 | 107.81 | 14.19 | 87.26 | 9.72 |
| M1 (8 GPU Cores) | 8 | 117.25 | 7.91 | 117.96 | 14.15 | Not Available | Not Available |

- Note: The table shows token speeds for specific LLM models, quantizations, and tasks (processing vs. generation). The "Processing" column relates to the speed of processing text input, while "Generation" represents the speed of generating output.

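As you can see, the M1 with 8 GPU cores processes prompts roughly 8 to 9 percent faster than the 7-core version on Llama 2 7B, while generation speed is essentially unchanged (7.91 vs. 7.92 and 14.15 vs. 14.19 tokens per second). This suggests that the extra GPU core helps with the highly parallel work of processing the input, whereas generation appears to be limited by something other than GPU core count, most likely memory bandwidth.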
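The comparison between the two configurations can be checked directly from the table. The sketch below computes the relative change from the 7-core to the 8-core M1 for each Llama 2 7B column; the helper name `percent_change` is introduced here for illustration, and the Llama 3 columns are left out because no 8-core figures are available.

```python
# Tokens per second from the table: (7-core M1, 8-core M1).
results = {
    "Llama 2 7B Q8_0 processing": (108.21, 117.25),
    "Llama 2 7B Q8_0 generation": (7.92, 7.91),
    "Llama 2 7B Q4_0 processing": (107.81, 117.96),
    "Llama 2 7B Q4_0 generation": (14.19, 14.15),
}


def percent_change(old: float, new: float) -> float:
    """Relative change from old to new, in percent."""
    return (new - old) / old * 100.0


changes = {name: percent_change(a, b) for name, (a, b) in results.items()}
for name, change in changes.items():
    print(f"{name}: {change:+.1f}%")
```

Processing speed changes by roughly +8 to +9 percent, while generation speed moves by only a fraction of a percent.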

Quantization: A Path to Better Performance

Quantization is a technique used to optimize LLMs for faster performance and reduced memory usage. Think of it as using smaller numbers to represent the same information, which makes the model "lighter" and faster. In the table above, we see Llama 2 running with two quantization levels: Q8_0 and Q4_0.

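- Q8_0: This quantization stores each weight in about 8 bits, which takes less memory than the 16-bit or 32-bit floating-point numbers that models are usually trained and distributed in.

- Q4_0: This quantization uses only about 4 bits per weight, roughly halving memory use again. In the table above, Q4_0 generation is nearly twice as fast as Q8_0 (about 14 vs. 8 tokens per second), while processing speed is about the same.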
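To make the idea of "smaller numbers" concrete, here is a minimal sketch of symmetric block quantization: a block of weights is stored as small integers plus one shared scale factor. This is a simplified illustration, not the exact storage format llama.cpp uses for Q8_0 or Q4_0; the function names and the example weights are made up for the demonstration.

```python
def quantize_block(values, bits):
    """Quantize one block of floats to signed `bits`-bit integers plus a scale."""
    qmax = 2 ** (bits - 1) - 1  # 127 for 8 bits, 7 for 4 bits
    largest = max(abs(v) for v in values)
    scale = largest / qmax if largest > 0 else 1.0
    # Round to the nearest integer step and clamp to the representable range.
    quants = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return scale, quants


def dequantize_block(scale, quants):
    """Recover approximate floats from the integers and the shared scale."""
    return [q * scale for q in quants]


def max_error(values, bits):
    """Largest absolute difference between original and restored values."""
    scale, quants = quantize_block(values, bits)
    restored = dequantize_block(scale, quants)
    return max(abs(a - b) for a, b in zip(values, restored))


# Made-up weights, purely for illustration.
weights = [0.12, -0.83, 0.40, 0.05, -0.27, 0.66, -0.01, 0.91]
err8 = max_error(weights, 8)
err4 = max_error(weights, 4)
print(f"8-bit max error: {err8:.4f}")
print(f"4-bit max error: {err4:.4f}")
```

The 4-bit version needs half as many bits per weight but reconstructs the weights less precisely, which is the trade-off behind choosing between quantization levels.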

Analyzing the Results: 7B and 8B Models

The table shows that the Apple M1 handles the Llama 2 7B and Llama 3 8B models comfortably: processing runs at roughly 87 to 118 tokens per second and generation at roughly 8 to 14 tokens per second, depending on the model and quantization. The M1 can run these models locally, making it a viable option for developers looking to experiment with AI applications.

However, it's worth noting that we don't have data for Llama 2 7B at F16, for Llama 3 70B, or for larger variants on the M1. These models require significantly more memory and processing power and may not run smoothly on the M1 chip.

Exploring the Limitations: From 7B to 70B Models

While the M1 excels with 7B and 8B models, larger LLMs present a different challenge. The Llama 3 70B model, for instance, requires far more computational horsepower to run efficiently. This limitation is due partly to the M1's GPU, which is not as powerful as the dedicated GPUs found in gaming desktops or high-end workstations, and partly to its unified memory, which tops out at 16 GB.

As an analogy, think of the M1's GPU as a smaller engine compared to the powerful engines in bigger vehicles. Smaller engines work well for regular driving, but they may struggle with demanding tasks like towing heavy loads.
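To put rough numbers on the gap between 7B and 70B models, the sketch below estimates how much memory the weights alone would need. It assumes storage is simply parameter count times bits per weight, and it ignores quantization scales, the context cache, and runtime overhead, so real requirements are higher. The function name `weight_gb` and the nominal parameter counts are illustrative.

```python
def weight_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


# Nominal parameter counts, used only for a rough estimate.
models = {"7B": 7e9, "8B": 8e9, "70B": 70e9}

for name, n_params in models.items():
    for bits in (16, 8, 4):
        print(f"{name} at {bits}-bit: ~{weight_gb(n_params, bits):.1f} GB")
```

By this estimate, a 7B model needs about 3.5 GB at 4 bits and about 7 GB at 8 bits, while a 70B model needs about 35 GB even at 4 bits, which is more than the 16 GB maximum of unified memory available on the M1.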

Conclusion: The Apple M1 – A Solid Choice for AI Development (for the Right Models)

The Apple M1 chip offers a compelling platform for local AI development, particularly when working with smaller LLMs like Llama 2 7B and Llama 3 8B. Its CPU, GPU, and Neural Engine, together with quantization, allow these models to run efficiently and respond relatively quickly.

Larger models often require more computational resources, exceeding the capabilities of the M1. However, the M1 remains a potent tool for developers exploring the exciting world of local LLMs. With advancements in AI chip technology, we can expect even more powerful processors that empower developers to run even larger models locally in the future.

FAQ

What are Large Language Models (LLMs)?

LLMs are AI systems trained on massive datasets of text and code. They can generate human-like text, translate languages, write different kinds of creative content, and answer your questions in an informative way.

What is tokenization in the context of LLMs?

Tokenization is the process of breaking down text into smaller units called tokens. Think of it as converting a sentence into individual words or punctuation marks, although in practice LLM tokenizers often split words into smaller subword pieces. LLMs use tokenization to process text data effectively.
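The words-and-punctuation picture can be sketched in a few lines. This toy tokenizer is only an illustration of the idea: real LLM tokenizers use learned subword vocabularies and will produce different tokens and counts. The function name `toy_tokenize` is made up for the example.

```python
import re


def toy_tokenize(text: str) -> list[str]:
    """Split text into runs of word characters and single punctuation marks."""
    return re.findall(r"\w+|[^\w\s]", text)


tokens = toy_tokenize("Can the M1 run Llama 3?")
print(tokens)       # ['Can', 'the', 'M1', 'run', 'Llama', '3', '?']
print(len(tokens))  # 7
```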

What is quantization, and why is it important for LLMs?

Quantization is a technique for reducing the size of LLMs by using smaller numbers to represent the same information. This results in faster processing and less memory usage, making LLMs more efficient to run locally.

Can I run any LLM on my Apple M1?

Not all LLMs can run smoothly on the Apple M1, especially larger models like Llama 3 70B. The M1's GPU and its limited unified memory (up to 16 GB) may not be enough to handle the size and the calculations these models require.

What are the benefits of running LLMs locally?

Running LLMs locally offers several advantages:

- No network latency: You avoid the round trip to a cloud-based model, although the generation speed itself depends on your hardware.

- Increased privacy: Your data is not sent to remote servers.

- Offline accessibility: You can access your LLM without an internet connection.

How can I get started with local LLM development on my M1?

There are various resources available to help you get started with local LLM development on your M1:

- llama.cpp: A popular open-source library for running LLMs locally.

- Hugging Face Transformers: A library for working with models and datasets.

- The Hugging Face model hub: A repository of pre-trained models.

Keywords:

Apple M1, LLM, Large Language Model, Llama 2, Llama 3, Token Speed, Quantization, Local AI Development, GPU Cores, Inference, AI Chip, Performance, 7B model, 8B model, 70B model, Processing, Generation.