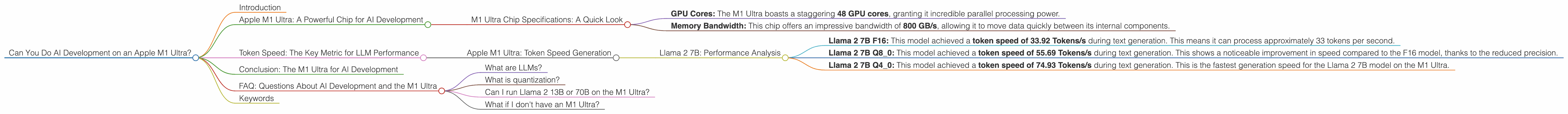

Can You Do AI Development on an Apple M1 Ultra?

Introduction

The world of AI is changing rapidly. Large Language Models (LLMs) like Llama 2 are becoming increasingly powerful, allowing us to do amazing things with language, code, and even images. But these models also require significant computing power. This is where the Apple M1 Ultra comes in.

This article looks at the M1 Ultra chip and how it performs with Llama 2 7B. We'll compare text generation speed across three quantization levels (F16, Q8_0, and Q4_0) and weigh the chip's strengths and limitations for AI development.

Apple M1 Ultra: A Powerful Chip for AI Development

The Apple M1 Ultra is a powerful chip designed for high-performance computing and AI applications. Its impressive architecture combines a massive number of cores and high memory bandwidth, making it a potent contender for tackling demanding AI tasks.

M1 Ultra Chip Specifications: A Quick Look

- GPU Cores: The M1 Ultra configuration covered here has 48 GPU cores (Apple also sells a 64-core version), giving it substantial parallel processing power.

- Memory Bandwidth: This chip offers 800 GB/s of memory bandwidth, which governs how quickly model weights can be read from its unified memory during inference.

These specifications position the M1 Ultra as a potential powerhouse for AI workloads. But how does it actually perform when we throw LLMs at it? Let's dive into the numbers.

Token Speed: The Key Metric for LLM Performance

Understanding how fast an LLM can process tokens is crucial. Tokens are the fundamental building blocks of text, representing individual words or parts of words. The faster a chip can process tokens, the faster it can understand and generate text, translate languages, and perform other AI tasks.

Apple M1 Ultra: Token Generation Speed

To measure the performance of the Apple M1 Ultra with different LLMs, we'll focus on tokens per second (Tokens/s) during text generation. A higher rate means quicker responses from the model.

Llama 2 7B: Performance Analysis

The data provided shows that the M1 Ultra can achieve impressive token speeds when running Llama 2 7B models. Let's break down the performance for different quantization levels:

- Llama 2 7B F16: This model achieved a token speed of 33.92 Tokens/s during text generation. This means it can generate approximately 34 tokens per second.

- Llama 2 7B Q8_0: This model achieved a token speed of 55.69 Tokens/s during text generation. That is roughly 1.6 times the F16 speed, thanks to the reduced precision of the weights.

- Llama 2 7B Q4_0: This model achieved a token speed of 74.93 Tokens/s during text generation. This is the fastest generation speed for the Llama 2 7B model on the M1 Ultra.
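To put the three figures side by side, here is a minimal Python sketch that computes each format's speed relative to F16 and the time a response would take. The speeds are the ones listed above; the 500-token response length and the function names are assumptions chosen only for illustration.

```python
# Text-generation speeds listed above, in tokens per second.
GENERATION_SPEED = {
    "F16": 33.92,
    "Q8_0": 55.69,
    "Q4_0": 74.93,
}


def speedup_over(baseline: str, speeds: dict[str, float]) -> dict[str, float]:
    """Return each format's speed as a multiple of the baseline format's speed."""
    base = speeds[baseline]
    return {name: tps / base for name, tps in speeds.items()}


def seconds_for(tokens: int, tokens_per_second: float) -> float:
    """Time needed to generate `tokens` tokens at a steady rate."""
    return tokens / tokens_per_second


if __name__ == "__main__":
    RESPONSE_TOKENS = 500  # hypothetical response length, for illustration only
    ratios = speedup_over("F16", GENERATION_SPEED)
    for name, tps in GENERATION_SPEED.items():
        wait = seconds_for(RESPONSE_TOKENS, tps)
        print(f"{name:5s} {tps:6.2f} tok/s  {ratios[name]:.2f}x F16  "
              f"{wait:5.1f} s per {RESPONSE_TOKENS} tokens")
```

On these numbers, Q8_0 comes out at about 1.64 times the F16 speed and Q4_0 at about 2.21 times. The calculation ignores prompt processing time, so it is a rough guide to generation latency only.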

Important: The data used here does not include results for other Llama 2 models (like 13B, 70B) on the M1 Ultra.

Quantization: A Simple Explanation

Think of quantization like compressing a video file. By using less precise numbers to represent the model's data, we make the file smaller and faster to process. This comes with the trade-off of slightly reduced accuracy.
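The trade-off can be seen in a small Python sketch. This is a simplified block-wise scheme (one scale per block of 32 values plus small integers), not the exact Q8_0 or Q4_0 storage layout; the block size, the function names, and the randomly generated "weights" are all assumptions made for illustration.

```python
import random

BLOCK_SIZE = 32  # assumed block size for this sketch


def quantize_block(values, bits):
    """Symmetric quantization: one float scale per block plus small integers."""
    qmax = 2 ** (bits - 1) - 1  # 127 for 8 bits, 7 for 4 bits
    peak = max(abs(v) for v in values)
    scale = peak / qmax if peak > 0 else 1.0
    quants = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return scale, quants


def dequantize_block(scale, quants):
    """Recover approximate floats from the scale and the stored integers."""
    return [scale * q for q in quants]


def mean_abs_error(values, bits):
    """Average round-trip error when quantizing `values` block by block."""
    total = 0.0
    for start in range(0, len(values), BLOCK_SIZE):
        block = values[start:start + BLOCK_SIZE]
        scale, quants = quantize_block(block, bits)
        restored = dequantize_block(scale, quants)
        total += sum(abs(a - b) for a, b in zip(block, restored))
    return total / len(values)


if __name__ == "__main__":
    rng = random.Random(0)
    weights = [rng.gauss(0.0, 0.02) for _ in range(4096)]  # made-up values
    for bits in (8, 4):
        err = mean_abs_error(weights, bits)
        print(f"{bits}-bit: mean absolute error = {err:.6f}")
```

Running it shows the 4-bit round trip losing more detail than the 8-bit one, which is the accuracy cost paid for the smaller, faster model.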

Conclusion: The M1 Ultra for AI Development

Based on the available data, the Apple M1 Ultra has the potential to be a powerful tool for AI development, especially for smaller LLMs like Llama 2 7B. Its GPU cores and high memory bandwidth provide the necessary horsepower to achieve impressive token speeds.

However, it's essential to acknowledge the lack of data for larger models like Llama 2 13B and 70B. The performance of the M1 Ultra with these models remains unknown.

FAQ: Questions About AI Development and the M1 Ultra

What are LLMs?

LLMs, or Large Language Models, are artificial intelligence models trained on massive amounts of text data. They can understand and generate human-like text, translate languages, write different kinds of creative content, and answer your questions in an informative way.

What is quantization?

Quantization is a technique that shrinks an LLM by storing its weights as less precise numbers, for example 8-bit or 4-bit values instead of 16-bit floats. The smaller model needs less memory and runs faster, at the cost of slightly reduced accuracy.

Can I run Llama 2 13B or 70B on the M1 Ultra?

We don't have data for the M1 Ultra running these larger models. It's possible that they might be too resource-intensive for this chip. Further research and testing are needed.
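While speed data is missing, a rough estimate of how much memory the weights alone would need is easy to sketch. The Python snippet below assumes nominal parameter counts taken from the model names, and assumes Q8_0 and Q4_0 use the llama.cpp block layout (32 weights plus one 16-bit scale per block, so 8.5 and 4.5 bits per weight). It is an estimate, not a measurement.

```python
# Approximate bits stored per weight. F16 is 16 bits by definition.
# The Q8_0 and Q4_0 values assume 32 weights per block plus one 16-bit scale.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}

# Nominal parameter counts read off the model names (real counts differ slightly).
NOMINAL_PARAMS = {"7B": 7e9, "13B": 13e9, "70B": 70e9}


def weight_gigabytes(params: float, bits_per_weight: float) -> float:
    """Size of the weights alone, in decimal gigabytes."""
    return params * bits_per_weight / 8 / 1e9


if __name__ == "__main__":
    for model, params in NOMINAL_PARAMS.items():
        sizes = ", ".join(
            f"{fmt} {weight_gigabytes(params, bits):6.1f} GB"
            for fmt, bits in BITS_PER_WEIGHT.items()
        )
        print(f"Llama 2 {model:3s}: {sizes}")
```

Compare the results with the unified memory installed in your machine, and leave headroom: the figures exclude the context cache, activations, and everything else the system is running.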

What if I don't have an M1 Ultra?

There are various other devices that are capable of running LLMs. You can explore the performance of different GPUs and CPUs for a better understanding of the options available to you.

Keywords

Apple M1 Ultra, AI development, LLM models, Llama 2, token speed, quantization, F16, Q8_0, Q4_0, performance, GPU cores, memory bandwidth, AI workload.