Can You Do AI Development on an Apple M1 Max?

Introduction

The world of AI is buzzing! Large language models (LLMs) like ChatGPT and Bard are changing the way we interact with technology, and the excitement for this new era is palpable. But what if you want to dive deeper and experiment with LLMs yourself? Do you need a powerhouse desktop with a hefty price tag? Well, we're here to explore if the Apple M1 Max, a chip known for its impressive performance, can be a viable option for AI development with LLMs.

The Apple M1 Max: A Force to Be Reckoned With

The Apple M1 Max chip is a technological marvel, packing a punch in a small package. It boasts a powerful GPU and a blazing-fast unified memory architecture, making it a popular choice for creative professionals and gamers. But can it handle the demanding world of AI development?

Apple M1 Max Performance for LLM Models

Let's cut to the chase: the Apple M1 Max can definitely handle some hefty AI workloads, including running smaller LLMs like the Llama 2 7B and Llama 3 8B models. The real question is how well. We'll dive into specifics with some real-world numbers, comparing different quantization formats for the Llama 2 7B and Llama 3 8B models.

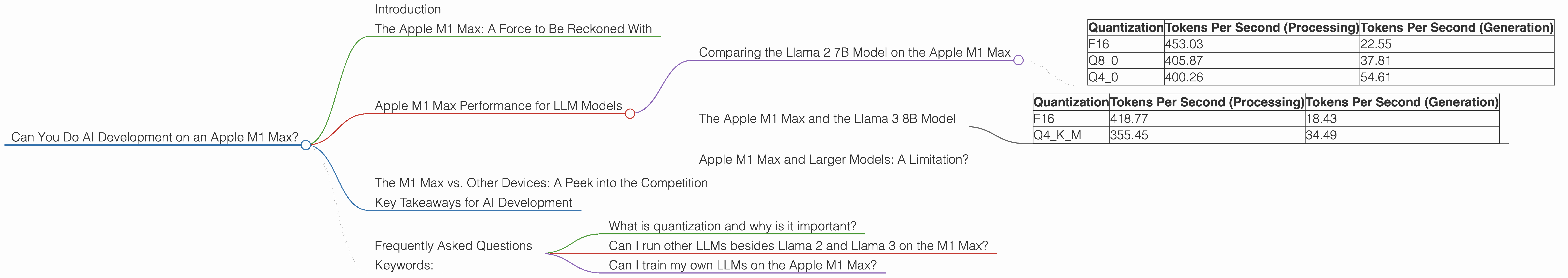

Comparing the Llama 2 7B Model on the Apple M1 Max

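Let's start with the Llama 2 7B model, a popular choice for those dipping their toes into the LLM world. For this model, we look at performance at three precision levels: F16 (unquantized 16-bit floats), Q8_0 (8-bit), and Q4_0 (4-bit). Quantization is a technique that reduces the size of a model while preserving most of its accuracy. "Processing" is the speed at which the model reads the prompt, and "Generation" is the speed at which it produces new tokens.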

| Quantization | Tokens Per Second (Processing) | Tokens Per Second (Generation) |
|---|---|---|
| F16 | 453.03 | 22.55 |
| Q8_0 | 405.87 | 37.81 |
| Q4_0 | 400.26 | 54.61 |

Key Observations:

- F16 yields the fastest processing speed, clocking in at 453.03 tokens per second, so it reads the prompt the quickest. However, its generation speed is the slowest: 22.55 tokens per second, or roughly 44 milliseconds per generated token, which slows the overall conversation flow.

- Q8_0 and Q4_0 have slower processing speeds than F16 but compensate with significantly faster generation speeds. Q4_0 offers the biggest boost, with 54.61 tokens per second, roughly 2.4 times the F16 rate. Think of it like this: Q4_0 gives up a little prompt-reading speed in exchange for much quicker replies.
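To make the comparison concrete, the short Python sketch below expresses each format's speed as a multiple of the F16 baseline. The numbers are copied from the Llama 2 7B table above; the function name `relative_to_f16` is just an illustrative choice.

```python
# Figures copied from the Llama 2 7B table (tokens per second).
LLAMA2_7B = {
    "F16": {"processing": 453.03, "generation": 22.55},
    "Q8_0": {"processing": 405.87, "generation": 37.81},
    "Q4_0": {"processing": 400.26, "generation": 54.61},
}


def relative_to_f16(results, metric):
    """Return each format's speed as a multiple of the F16 speed."""
    baseline = results["F16"][metric]
    return {name: round(row[metric] / baseline, 2) for name, row in results.items()}


generation_ratio = relative_to_f16(LLAMA2_7B, "generation")
processing_ratio = relative_to_f16(LLAMA2_7B, "processing")

print("generation vs F16:", generation_ratio)
print("processing vs F16:", processing_ratio)
```

Running it shows the trade-off at a glance: the quantized formats lose roughly a tenth of the processing speed while gaining well over half again (Q8_0) or more than double (Q4_0) the generation speed.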

The Apple M1 Max and the Llama 3 8B Model

Now, let's move on to the Llama 3 8B model, a newer and more capable LLM than the Llama 2 7B. Because it is also slightly larger, it runs somewhat slower on the M1 Max than Llama 2 7B does at the same F16 precision, in both processing and generation:

| Quantization | Tokens Per Second (Processing) | Tokens Per Second (Generation) |
|---|---|---|
| F16 | 418.77 | 18.43 |
| Q4_K_M | 355.45 | 34.49 |

Key Observations:

- The F16 format provides the fastest processing speed at 418.77 tokens per second, but its generation speed is a modest 18.43 tokens per second.

- The Q4_K_M quantization is significantly faster in terms of generation, reaching 34.49 tokens per second compared to F16's 18.43, nearly 1.9 times as fast. This means it can generate responses much quicker, making interactions feel smoother.
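What do these rates mean for a real exchange? A rough estimate is to add the time spent reading the prompt to the time spent generating the reply. The sketch below does that for the F16 and 4-bit rows of the Llama 3 8B table. The 512-token prompt and 256-token reply are made-up example sizes, and the model ignores load time and other overhead, so treat the result as a ballpark figure only.

```python
# Rates copied from the Llama 3 8B table (tokens per second).
LLAMA3_8B = {
    "F16": {"processing": 418.77, "generation": 18.43},
    "Q4_K_M": {"processing": 355.45, "generation": 34.49},
}


def response_time_seconds(prompt_tokens, output_tokens, rates):
    """Estimate latency as prompt-reading time plus token-generation time."""
    reading = prompt_tokens / rates["processing"]
    writing = output_tokens / rates["generation"]
    return reading + writing


# Hypothetical workload: a 512-token prompt and a 256-token reply.
PROMPT_TOKENS = 512
OUTPUT_TOKENS = 256

estimates = {
    name: response_time_seconds(PROMPT_TOKENS, OUTPUT_TOKENS, rates)
    for name, rates in LLAMA3_8B.items()
}

for name, seconds in estimates.items():
    print(f"{name}: about {seconds:.1f} s")
```

For this example workload, generation dominates the total, which is why the 4-bit model finishes in a little over half the time despite its slower prompt processing.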

Apple M1 Max and Larger Models: A Limitation?

So, the Apple M1 Max holds its own with the Llama 2 7B and Llama 3 8B models. But what about larger models like the Llama 3 70B? Unfortunately, data for this model running on the Apple M1 Max is not currently available. The M1 Max would likely struggle with the memory requirements of such a massive model: at F16, 70 billion parameters need roughly 140 GB for the weights alone, far more than the 64 GB of unified memory the M1 Max tops out at. This suggests that if you want to work with larger, state-of-the-art models like the Llama 3 70B, you might need to consider a more powerful machine with more memory or dedicated GPU acceleration.
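A back-of-the-envelope calculation shows why memory is the sticking point. The sketch below estimates the size of the weights alone from the parameter count and the bits stored per weight. The bits-per-weight values are assumptions based on the block layout of the llama.cpp formats (Q8_0 and Q4_0 store one 16-bit scale per 32 weights, giving 8.5 and 4.5 bits per weight), and the parameter counts are the nominal "7B" and "70B" figures rather than exact counts. The estimate leaves out the context cache and runtime overhead, so real usage is higher.

```python
# Assumed storage cost per weight, in bits (weights only).
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}

# Nominal parameter counts; the real models differ slightly.
MODELS = {"7B": 7e9, "70B": 70e9}


def weight_size_gb(parameters, fmt):
    """Approximate size of the model weights in gigabytes (10^9 bytes)."""
    return parameters * BITS_PER_WEIGHT[fmt] / 8 / 1e9


sizes = {
    (model, fmt): weight_size_gb(params, fmt)
    for model, params in MODELS.items()
    for fmt in BITS_PER_WEIGHT
}

for (model, fmt), gigabytes in sizes.items():
    print(f"{model} {fmt}: ~{gigabytes:.1f} GB")
```

Under these assumptions a 70B model needs about 140 GB at F16 and still close to 40 GB at 4 bits, so only the 64 GB configuration of the M1 Max could plausibly hold even the heavily quantized version, and the system cannot hand all of its memory to the model.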

The M1 Max vs. Other Devices: A Peek into the Competition

While this article focuses on the Apple M1 Max, it's worth mentioning that other devices also offer compelling performance for AI development. Devices like the Nvidia RTX 4090 and AMD Radeon RX 7900 XT, boasting high-end GPUs, often deliver incredible performance with a variety of LLM models. However, comparing these devices in detail falls outside the scope of this article.

Key Takeaways for AI Development

Remember, the ideal device for AI development depends on your specific needs. For experimenting with smaller LLMs like Llama 2 7B and Llama 3 8B, the Apple M1 Max is a strong contender offering good performance, especially if you use quantized models for faster generation speeds. However, if you plan to work with larger models or need the absolute bleeding-edge performance, you may want to consider more powerful hardware like the aforementioned Nvidia RTX 4090 or AMD Radeon RX 7900 XT.

Frequently Asked Questions

What is quantization and why is it important?

Quantization is a technique used to reduce the size of a model while preserving most of its accuracy. Think of it like a lossy compression method for AI models. It works by converting the model's parameters from high-precision floating-point numbers to smaller, more lightweight formats such as 8-bit or 4-bit integers. Smaller models mean less memory usage and faster token generation, making them well suited to devices with limited resources.
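To illustrate the idea, here is a minimal sketch of one simple scheme: each block of weights is stored as small integers plus a single shared scale factor. It is loosely in the spirit of an 8-bit format like Q8_0 but is a simplified illustration, not the actual llama.cpp implementation; the function names and the sample weights are made up for the example.

```python
def quantize_block(values):
    """Map floats to integer codes in [-127, 127] plus one shared scale."""
    largest = max(abs(v) for v in values)
    # Guard against an all-zero block, where any scale would do.
    scale = largest / 127 if largest else 1.0
    codes = [round(v / scale) for v in values]
    return scale, codes


def dequantize_block(scale, codes):
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]


# Made-up sample weights for one small block.
weights = [0.12, -0.5, 0.33, 0.9, -0.07, 0.0, 0.41, -0.88]

scale, codes = quantize_block(weights)
restored = dequantize_block(scale, codes)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("codes:", codes)
print(f"scale: {scale:.6f}")
print(f"largest round-trip error: {max_error:.6f}")
```

Each weight now fits in one byte instead of two or four, and the round-trip error is bounded by half the scale. Using fewer bits shrinks the model further but makes that error larger, which is the size-versus-accuracy trade-off behind the different formats.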

Can I run other LLMs besides Llama 2 and Llama 3 on the M1 Max?

Yes, you can experiment with other LLMs. However, performance can vary depending on model size and the specific hardware specifications of your Mac, especially the amount of unified memory. For example, the M1 Max may struggle with extremely large models, such as those with 70 billion parameters or more.

Can I train my own LLMs on the Apple M1 Max?

While the M1 Max can handle inference tasks, training LLMs is generally far more resource-intensive and may require specialized hardware like GPUs with high memory capacity. Training or fine-tuning on an Apple M1 Max could be achievable for smaller models with careful optimization, but training a large LLM from scratch is not realistic on this hardware.

Keywords:

Apple M1 Max, LLM, AI Development, Llama 2 7B, Llama 3 8B, Llama 3 70B, F16, Q8_0, Q4_0, Q4_K_M, Quantization, GPU, Token Speed, Generation Speed, Inference, Training, AI, Machine Learning, GPU Acceleration, Performance, Comparison