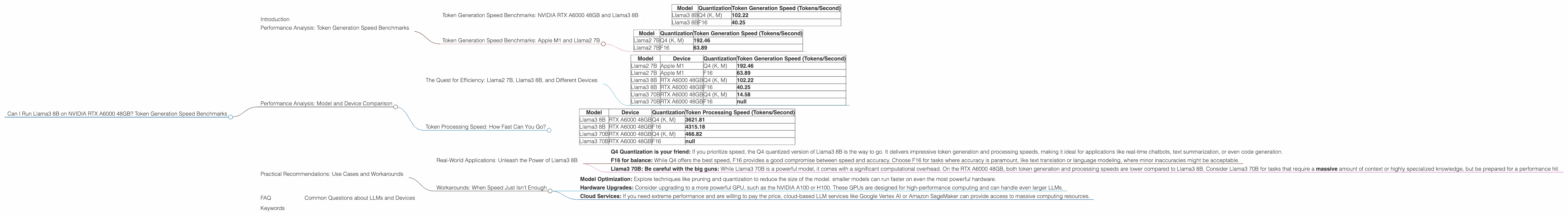

Can I Run Llama3 8B on NVIDIA RTX A6000 48GB? Token Generation Speed Benchmarks

Introduction

So, you've got your hands on a beefy NVIDIA RTX A6000 48GB GPU, and you're itching to unleash the power of Llama 3. But can this graphics powerhouse handle the demands of a large language model like Llama3 8B? Let's dive deep into the performance of this combo and see if it's a match made in AI heaven.

This article will explore the token generation speeds of Llama3 8B, specifically the 4-bit quantized (Q4) and 16-bit floating-point (F16) versions, running on the NVIDIA RTX A6000 48GB. We'll also delve into model and device comparisons to give you a broader perspective.

Performance Analysis: Token Generation Speed Benchmarks

Token Generation Speed Benchmarks: NVIDIA RTX A6000 48GB and Llama3 8B

Let's get down to brass tacks. How fast can the RTX A6000 48GB churn out tokens with Llama3 8B?

| Model | Quantization | Token Generation Speed (Tokens/Second) |
|---|---|---|
| Llama3 8B | Q4_K_M | 102.22 |
| Llama3 8B | F16 | 40.25 |

Wow, that's fast! The RTX A6000 48GB can generate over 100 tokens per second with the Q4 quantized version of Llama3 8B. This means you can get impressive speeds for your text generation tasks. The performance dips down to around 40 tokens per second with the F16 version, but that's still pretty darn quick.
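To make those speeds more tangible, here is a minimal sketch that converts them into wait times. The two speeds come from the table above; the 500-token response length is an assumption chosen only for illustration, and the helper name `seconds_to_generate` is hypothetical.

```python
# Generation speeds (tokens/second) copied from the table above.
GENERATION_TPS = {"Q4": 102.22, "F16": 40.25}

# Assumed response length, for illustration only.
RESPONSE_TOKENS = 500


def seconds_to_generate(num_tokens: int, tokens_per_second: float) -> float:
    """Time to emit num_tokens at a steady generation speed."""
    return num_tokens / tokens_per_second


for name, tps in GENERATION_TPS.items():
    wait = seconds_to_generate(RESPONSE_TOKENS, tps)
    print(f"{name}: about {wait:.1f} s for {RESPONSE_TOKENS} tokens")

# Ratio of the two speeds, i.e. how much faster Q4 generates than F16.
speedup = GENERATION_TPS["Q4"] / GENERATION_TPS["F16"]
print(f"Q4 generates about {speedup:.2f}x faster than F16")
```

Real responses vary in length and speed is rarely perfectly steady, so treat the output as a ballpark figure rather than a guarantee.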

Token Generation Speed Benchmarks: Apple M1 and Llama2 7B

Let's add a little context by comparing our NVIDIA RTX A6000 to an entirely different beast: the Apple M1. The following numbers were recorded for the Llama2 7B model (not the 8B!).

| Model | Quantization | Token Generation Speed (Tokens/Second) |
|---|---|---|
| Llama2 7B | Q4_K_M | 192.46 |
| Llama2 7B | F16 | 63.89 |

Hold your horses, what's this? An Apple M1 chip is generating tokens faster than the mighty RTX A6000? It seems that the M1 does have impressive performance with Llama2 7B, particularly with the Q4 version.

But wait, there's a twist! These numbers are specific to Llama2 7B on the M1 and Llama3 8B on the RTX A6000 48GB. The models are different, the devices are different, and the optimizations and architectures may favor one setup over the other, so this is not an apples-to-apples comparison. The table alone cannot tell you which device is faster for the same model.

Performance Analysis: Model and Device Comparison

The Quest for Efficiency: Llama2 7B, Llama3 8B, and Different Devices

We've taken a quick glance at the numbers for the RTX A6000 48GB and Apple M1. But what about the performance of different LLM models on specific devices? Let's compare some of the available data to see the bigger picture.

| Model | Device | Quantization | Token Generation Speed (Tokens/Second) |
|---|---|---|---|
| Llama2 7B | Apple M1 | Q4_K_M | 192.46 |
| Llama2 7B | Apple M1 | F16 | 63.89 |
| Llama3 8B | RTX A6000 48GB | Q4_K_M | 102.22 |
| Llama3 8B | RTX A6000 48GB | F16 | 40.25 |
| Llama3 70B | RTX A6000 48GB | Q4_K_M | 14.58 |
| Llama3 70B | RTX A6000 48GB | F16 | not reported |

Here's what we learn:

- Smaller models are faster: On the RTX A6000, Llama3 8B generates 102.22 tokens per second with Q4 quantization, while Llama3 70B manages only 14.58. The Llama2 7B figures on the Apple M1 are higher still, but that comparison mixes a different model with a different device, so it says little about model size on its own.

- Quantization is a game-changer: The Q4 quantized models of Llama2 and Llama3 generate tokens roughly 2.5 to 3 times faster than their F16 counterparts on both devices. This is a testament to the power of quantization for reducing model size and improving generation speed. It's like putting your LLM on a diet.
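The "diet" can be put into rough numbers. The sketch below estimates the memory taken by the weights alone for each model and format. The parameter counts are rounded, and the 4.8 bits per weight used for Q4 is an assumed average rather than a measured value; the estimate also ignores the KV cache and runtime overhead. It offers one plausible reason, not confirmed by the source, for the missing Llama3 70B F16 result.

```python
VRAM_GB = 48  # memory on the RTX A6000

# Rounded parameter counts (assumption: 8e9 and 70e9).
MODELS = {"Llama3 8B": 8e9, "Llama3 70B": 70e9}

# Average bits per weight. 16 for F16 is exact; 4.8 for Q4 is an assumed
# rough average, not a figure taken from the benchmarks.
FORMATS = {"F16": 16.0, "Q4": 4.8}


def weight_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in GB (1 GB = 1e9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


for model, params in MODELS.items():
    for fmt, bits in FORMATS.items():
        size = weight_memory_gb(params, bits)
        verdict = "fits" if size < VRAM_GB else "does not fit"
        print(f"{model} {fmt}: ~{size:.1f} GB of weights, {verdict} in {VRAM_GB} GB")
```

Under these assumptions, only the 70B model at F16 exceeds the card's memory, while the Q4 version of the same model fits with a few gigabytes to spare.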

But wait, there's more! The numbers above only reflect token generation speed, that is, how fast the model writes its output. For a complete picture, we also need to consider how quickly it reads the input prompt before it starts generating.

Token Processing Speed: How Fast Can You Go?

Let's take another look at the data, this time focusing on the token processing speed:

| Model | Device | Quantization | Token Processing Speed (Tokens/Second) |
|---|---|---|---|
| Llama3 8B | RTX A6000 48GB | Q4_K_M | 3621.81 |
| Llama3 8B | RTX A6000 48GB | F16 | 4315.18 |
| Llama3 70B | RTX A6000 48GB | Q4_K_M | 466.82 |
| Llama3 70B | RTX A6000 48GB | F16 | not reported |

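Whoa, that's a big jump! The RTX A6000 48GB processes prompt tokens far faster than it generates new ones, especially for Llama3 8B: 3621.81 versus 102.22 tokens per second with Q4. That gap is expected, since the tokens of a prompt can be evaluated in parallel while output tokens are produced one at a time. Note also that F16 is faster than Q4 here (4315.18 versus 3621.81 tokens per second), the reverse of the generation results.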

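What's the takeaway? Both speeds matter, but for different workloads. Token processing speed determines how long you wait before the first output token appears, which dominates when prompts are long, such as when summarizing a document. Token generation speed determines how long the rest of the response takes, which dominates for short prompts and long answers. A fast processing stage does not make up for slow generation.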
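One rough way to combine the two speeds is to add the time spent reading the prompt to the time spent writing the answer. The sketch below does that for Llama3 8B using the figures from the tables above. The prompt and output lengths are assumptions for illustration, the function name `response_latency_s` is hypothetical, and the model ignores overheads such as model loading and sampling.

```python
# (processing tokens/s, generation tokens/s) for Llama3 8B on the RTX A6000,
# copied from the tables above.
SPEEDS = {
    "Q4": (3621.81, 102.22),
    "F16": (4315.18, 40.25),
}


def response_latency_s(prompt_tokens, output_tokens, processing_tps, generation_tps):
    """Return (prompt time, generation time) in seconds for one request."""
    prompt_time = prompt_tokens / processing_tps
    generation_time = output_tokens / generation_tps
    return prompt_time, generation_time


# Assumed workload: a 2000-token prompt answered with 300 tokens.
PROMPT_TOKENS, OUTPUT_TOKENS = 2000, 300

for name, (pp, tg) in SPEEDS.items():
    prompt_time, generation_time = response_latency_s(PROMPT_TOKENS, OUTPUT_TOKENS, pp, tg)
    total = prompt_time + generation_time
    print(f"{name}: prompt {prompt_time:.2f} s + generation {generation_time:.2f} s = {total:.2f} s")
```

For this workload the generation term is several times larger than the prompt term in both formats, which is why F16's faster prompt processing does not offset its slower generation.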

Practical Recommendations: Use Cases and Workarounds

Real-World Applications: Unleash the Power of Llama3 8B

Now that we've crunched the numbers, let's explore how this data can guide your practical use of Llama3 8B on the RTX A6000 48GB.

- Q4 Quantization is your friend: If you prioritize speed, the Q4 quantized version of Llama3 8B is the way to go. It delivers the fastest token generation in these benchmarks (102.22 tokens per second) along with prompt processing in the thousands of tokens per second, making it ideal for applications like real-time chatbots, text summarization, or even code generation.

- F16 when accuracy matters most: F16 keeps the weights unquantized at 16 bits, so it avoids the small accuracy loss that quantization can introduce, at the cost of slower generation (40.25 versus 102.22 tokens per second) and a larger memory footprint. Choose F16 for tasks where even minor inaccuracies are a problem, like text translation.

- Llama3 70B: Be careful with the big guns: While Llama3 70B is a powerful model, it comes with a significant computational overhead. On the RTX A6000 48GB, Q4 token generation drops to 14.58 tokens per second and processing to 466.82 tokens per second, both far below Llama3 8B, and no F16 result is reported. Consider Llama3 70B for tasks where the smaller model's answers are not good enough, such as those needing highly specialized knowledge, but be prepared for a performance hit.
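The recommendations above can be turned into a simple filter: pick a minimum generation speed for your application and see which configurations clear it. The speeds below come from the benchmark tables; the 20 tokens per second threshold is an assumption standing in for "comfortable for interactive chat", and `configs_meeting` is a hypothetical helper name.

```python
# (model, quantization, generation tokens/s) on the RTX A6000 48GB,
# copied from the tables above.
BENCHMARKS = [
    ("Llama3 8B", "Q4", 102.22),
    ("Llama3 8B", "F16", 40.25),
    ("Llama3 70B", "Q4", 14.58),
]


def configs_meeting(min_tps):
    """Return the benchmarked configurations that generate at least min_tps."""
    return [(model, quant, tps) for model, quant, tps in BENCHMARKS if tps >= min_tps]


# Assumed threshold for an interactive chatbot; adjust to your own needs.
MIN_TPS = 20

for model, quant, tps in configs_meeting(MIN_TPS):
    print(f"{model} {quant}: {tps} tokens/s")
```

With this threshold both Llama3 8B variants qualify and Llama3 70B does not; a batch job that can tolerate slower output could set the threshold much lower.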

Workarounds: When Speed Just Isn't Enough

What if you need even faster token generation than what the RTX A6000 48GB can deliver? Here are some workarounds that can help you push the boundaries:

- Model Optimization: Explore techniques like pruning and more aggressive quantization to reduce the size of the model. Smaller models run faster on even the most powerful hardware.

- Hardware Upgrades: Consider upgrading to a more powerful GPU, such as the NVIDIA A100 or H100. These GPUs are designed for high-performance computing and can handle even larger LLMs.

- Cloud Services: If you need extreme performance and are willing to pay the price, cloud-based LLM services like Google Vertex AI or Amazon SageMaker can provide access to massive computing resources.

FAQ

Common Questions about LLMs and Devices

Q1: What is Quantization?

A1: Imagine you have a photo with millions of colors, but you only have a limited number of paint colors to reproduce it. Quantization is like reducing the number of colors in your photo to make it smaller and easier to store and process. In LLMs, quantization reduces the size of the model by representing its weights with less precision. This makes the model faster and more efficient, but it can slightly affect accuracy.
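To see the idea in code, here is a minimal sketch of 4-bit quantization using a single shared scale and round-to-nearest. This is a deliberately simple scheme chosen for illustration; it is not the method used by the Q4 models benchmarked above, and the sample weights are made up.

```python
def quantize_4bit(weights):
    """Map floats to integer codes in [-7, 7] using one shared scale."""
    # Scale so the largest magnitude maps to 7; fall back to 1.0 if all zeros.
    scale = max(abs(w) for w in weights) / 7 or 1.0
    codes = [round(w / scale) for w in weights]
    return codes, scale


def dequantize(codes, scale):
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]


# Made-up example weights.
weights = [0.12, -0.53, 0.91, -0.07, 0.33]

codes, scale = quantize_4bit(weights)
restored = dequantize(codes, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("codes:   ", codes)
print("restored:", [round(r, 2) for r in restored])
print("largest rounding error:", round(max_error, 3))
```

Each weight is now stored as a small integer plus one shared scale, which is where the size saving comes from; the rounding error is the "slight effect on accuracy" mentioned above.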

Q2: What's the difference between Token Generation and Token Processing?

A2: Think of it as reading versus writing. Token processing (often called prompt processing) is the model reading your input: the tokens of a prompt can be evaluated in parallel, which is why it runs at thousands of tokens per second in the tables above. Token generation is the model writing its reply one token at a time, with each new token depending on the ones before it, so it is much slower.

Q3: What if I don't have an RTX A6000 48GB?

A3: Don't despair! You can still explore LLMs on different devices, just be mindful of the performance limitations. Consider GPUs like the NVIDIA RTX 3090 or even the powerful GPUs offered by cloud service providers. Keep in mind that for larger LLMs, you might need a powerful GPU, or cloud computing might be your best bet.
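If you run on different hardware, the most reliable numbers are the ones you measure yourself. The sketch below shows the basic timing pattern. The `generate` callable is a hypothetical stand-in for whatever inference library you use, and `fake_generate` only simulates work by sleeping, so the printed figure says nothing about any real model.

```python
import time


def measure_tokens_per_second(generate, num_tokens):
    """Time one call to generate(num_tokens) and return tokens per second.

    generate is any callable that produces tokens and returns how many
    it actually produced (hypothetical interface, for illustration).
    """
    start = time.perf_counter()
    produced = generate(num_tokens)
    elapsed = time.perf_counter() - start
    return produced / elapsed


def fake_generate(num_tokens):
    """Stand-in for a real model call: sleeps about 1 ms per token."""
    for _ in range(num_tokens):
        time.sleep(0.001)
    return num_tokens


tps = measure_tokens_per_second(fake_generate, 50)
print(f"measured: {tps:.0f} tokens/s")
```

When swapping in a real model, run the measurement several times and discard the first run, since one-off costs such as loading weights can distort a single timing.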

Keywords

NVIDIA RTX A6000 48GB, Llama3 8B, Llama2 7B, Token Generation Speed, Token Processing Speed, Quantization, Q4, F16, Model Optimization, Hardware Upgrades, Cloud Services, LLM Performance, GPU Benchmarks.