Can I Run Llama3 8B on NVIDIA RTX 5000 Ada 32GB? Token Generation Speed Benchmarks

Introduction

You've got your hands on a powerful NVIDIA RTX 5000 Ada 32GB and you're itching to run a large language model (LLM) like Llama 3. The question is, will your hardware handle the task? We're going to dive into the fascinating world of local LLM performance, focusing specifically on the token generation speeds of Llama3 8B on the RTX 5000 Ada.

Think of token generation as the LLM's typing speed. The faster it generates tokens, the quicker it can churn out text, translate languages, summarize articles, or even write code.

This article will be your guide to understanding the performance of Llama3 8B on the RTX 5000 Ada 32GB. We'll break down the results, compare different configurations, and offer practical recommendations for using your awesome GPU to its full potential.

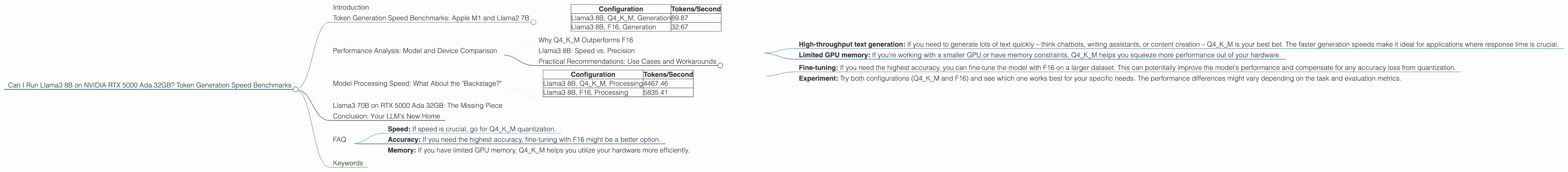

Token Generation Speed Benchmarks: NVIDIA RTX 5000 Ada 32GB and Llama3 8B

Our first stop is the token generation speed, aka how many tokens your LLM can produce per second. Let's cut to the chase:

| Configuration | Tokens/Second |
|---|---|
| Llama3 8B, Q4KM, Generation | 89.87 |
| Llama3 8B, F16, Generation | 32.67 |

What does this mean?

- Q4KM: This refers to the quantization of the model (Q4_K_M in llama.cpp's naming), which stores most weights in roughly 4 bits each. It's like compressing the model to make it smaller and run faster on your hardware.

- F16: This is half-precision floating point, a way to represent numbers that uses 16 bits each instead of the 32 bits of standard single precision.

- Generation: This is the process of generating new text.

Here's the TL;DR: Llama3 8B with Q4KM quantization is significantly faster than using F16. It generates about 2.75 times as many tokens per second (89.87 vs. 32.67).
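To make the gap concrete, here is a minimal sketch that turns the two generation speeds from the table into a speedup ratio and a wait time. The function names and the 500-token reply length are illustrative assumptions, not part of the benchmark.

```python
# Generation speeds (tokens/second) taken from the table above.
GENERATION_TPS = {"Q4KM": 89.87, "F16": 32.67}


def speedup(fast: float, slow: float) -> float:
    """How many times faster `fast` is than `slow`."""
    return fast / slow


def seconds_to_generate(n_tokens: int, tokens_per_second: float) -> float:
    """Time needed to emit n_tokens at a steady rate."""
    return n_tokens / tokens_per_second


if __name__ == "__main__":
    ratio = speedup(GENERATION_TPS["Q4KM"], GENERATION_TPS["F16"])
    print(f"Q4KM is {ratio:.2f}x faster than F16")
    # 500 tokens is an arbitrary example reply length.
    for name, tps in GENERATION_TPS.items():
        print(f"{name}: {seconds_to_generate(500, tps):.1f} s for a 500-token reply")
```

For a 500-token reply, that works out to roughly 5.6 seconds with Q4KM versus about 15.3 seconds with F16.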

Performance Analysis: Model and Device Comparison

Why Q4KM Outperforms F16

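The reason Q4KM reigns supreme is that it uses a much smaller model. Generating each token typically requires reading essentially all of the model's weights from GPU memory, so the fewer bytes those weights occupy, the less data has to be moved per token, resulting in a speed boost.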

Think of it like this: If you're trying to quickly sort through a pile of papers, it's naturally going to be faster if the pile is smaller. Quantization essentially shrinks the "pile of papers" that the LLM needs to work with.
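The "smaller pile" idea can be turned into a back-of-the-envelope estimate: if every generated token requires reading all the weights once, memory bandwidth divided by model size gives a rough ceiling on tokens per second. This is a sketch under stated assumptions, not a measurement. The parameter count, the 576 GB/s bandwidth figure, and the 4.85 bits-per-weight average for Q4KM are all approximations that are not taken from the benchmark.

```python
# Rough ceiling on generation speed if decoding is limited purely by memory bandwidth:
# every new token requires reading (roughly) every weight once.

PARAMS = 8.03e9          # assumption: approximate Llama3 8B parameter count
BANDWIDTH_GBPS = 576.0   # assumption: spec-sheet memory bandwidth of the GPU, in GB/s
BITS_PER_WEIGHT = {
    "F16": 16.0,
    "Q4KM": 4.85,        # assumption: approximate average for this quantization
}


def weight_size_gb(params: float, bits_per_weight: float) -> float:
    """Size of the weights alone, in gigabytes."""
    return params * bits_per_weight / 8 / 1e9


def max_tokens_per_second(params: float, bits_per_weight: float, bandwidth_gbps: float) -> float:
    """Bandwidth-bound ceiling: how many times per second the weights can be read."""
    return bandwidth_gbps / weight_size_gb(params, bits_per_weight)


if __name__ == "__main__":
    for name, bits in BITS_PER_WEIGHT.items():
        size = weight_size_gb(PARAMS, bits)
        ceiling = max_tokens_per_second(PARAMS, bits, BANDWIDTH_GBPS)
        print(f"{name}: ~{size:.1f} GB of weights, ceiling ~{ceiling:.0f} tokens/s")
```

With these assumed figures, the ceiling lands above both measured speeds (32.67 and 89.87 tokens/second), which is what you would expect if generation is mostly bandwidth-bound.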

Llama3 8B: Speed vs. Precision

Now, you might be thinking, "Okay, Q4KM is faster, but does it impact the quality of the output?" It's a valid question!

Generally, Q4KM can result in a slight drop in accuracy compared to F16. However, the difference is often subtle and might not be noticeable for many applications.

Think of it like this: The difference between Q4KM and F16 is similar to the difference between a high-resolution picture and a slightly compressed version. Sure, the compressed version might not be as sharp, but it's perfectly acceptable for everyday use.
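To see where that small loss of sharpness comes from, here is a toy sketch of 4-bit quantization on one block of made-up weights. It is a deliberately simplified symmetric scheme, not the actual Q4KM format, and the function names and random weights are purely illustrative.

```python
import random


def quantize_block(values, bits=4):
    """Symmetric quantization of one block: one float scale plus small integer codes."""
    qmax = 2 ** (bits - 1) - 1                       # 7 for signed 4-bit codes
    scale = max(abs(v) for v in values) / qmax or 1.0  # fall back to 1.0 for an all-zero block
    codes = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return scale, codes


def dequantize_block(scale, codes):
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]


def mean_abs_error(a, b):
    """Average absolute difference between two equal-length lists."""
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)


if __name__ == "__main__":
    random.seed(0)
    weights = [random.gauss(0.0, 0.02) for _ in range(32)]  # one block of fake weights
    scale, codes = quantize_block(weights)
    restored = dequantize_block(scale, codes)
    print("codes:", codes)
    print(f"mean absolute error: {mean_abs_error(weights, restored):.6f}")
```

Each restored weight is off by at most half a quantization step, which is the "slightly compressed picture" effect in numeric form.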

Practical Recommendations: Use Cases and Workarounds

Use Cases

- High-throughput text generation: If you need to generate lots of text quickly – think chatbots, writing assistants, or content creation – Q4KM is your best bet. The faster generation speeds make it ideal for applications where response time is crucial.

- Limited GPU memory: If you're working with a smaller GPU or have memory constraints, Q4KM helps you squeeze more performance out of your hardware.

Workarounds

- Fine-tuning: If you need the highest accuracy, you can fine-tune the F16 model on a dataset for your specific task. This can potentially improve the model's performance and compensate for any accuracy loss from quantization.

- Experiment: Try both configurations (Q4KM and F16) and see which one works best for your specific needs. The performance differences might vary depending on the task and evaluation metrics.
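If you want to run that experiment yourself, a small timing harness is enough to compare configurations. The sketch below assumes your inference library can stream tokens as a Python iterable. `measure_tokens_per_second` and `fake_generate` are hypothetical names, and `fake_generate` is only a stand-in for a real model call.

```python
import time
from typing import Callable, Iterable


def measure_tokens_per_second(generate: Callable[[], Iterable[object]]) -> float:
    """Time one generation run and return tokens emitted per second."""
    start = time.perf_counter()
    n_tokens = sum(1 for _ in generate())   # consume the stream, counting tokens
    elapsed = time.perf_counter() - start
    return n_tokens / elapsed if elapsed > 0 else float("inf")


def fake_generate(n_tokens: int = 50, delay_s: float = 0.001):
    """Stand-in for a real model: yields dummy tokens with a fixed delay."""
    for i in range(n_tokens):
        time.sleep(delay_s)
        yield f"tok{i}"


if __name__ == "__main__":
    tps = measure_tokens_per_second(lambda: fake_generate(50, 0.001))
    print(f"measured: {tps:.0f} tokens/s")
```

Swap `fake_generate` for a function that streams tokens from each configuration, run it a few times with the same prompt, and compare the averages.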

Model Processing Speed: What About the "Backstage?"

While token generation is the main event when it comes to LLMs, there's another important factor to consider: model processing speed, often called prompt processing. This refers to how quickly the LLM reads the input text before generating any output.

| Configuration | Tokens/Second |
|---|---|
| Llama3 8B, Q4KM, Processing | 4467.46 |
| Llama3 8B, F16, Processing | 5835.41 |

Whoa, hold on! We see a surprising trend here: F16 processing is faster than Q4KM processing, even though F16 weights are larger. A likely explanation is that prompt processing handles many tokens in one batch, so it is limited by compute rather than by memory traffic. F16 weights can be used directly, while quantized weights first have to be unpacked (dequantized), and that extra work costs time.

Think of it like this: A zipped file is quicker to carry around, but you have to unzip it before you can read it. When carrying dominates (generating tokens one at a time), the smaller file wins. When reading dominates (processing a whole prompt in one batch), the unzipping step becomes the bottleneck.

Practical Implications:

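- If you're dealing with very long inputs and only need short outputs (for example, pulling one answer out of a long document), F16's faster prompt processing might make it the better choice despite its slower generation speed.

- For typical chat-style workloads, where the output is a meaningful fraction of the input length, the overall speed is dominated by generation speed, and Q4KM comes out ahead.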
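A quick way to check which configuration wins for a given workload is to add prompt time and generation time together. The sketch below uses only the four speeds from the tables in this article. The function names and the example workloads are illustrative assumptions, and the model ignores load time and other overheads.

```python
# Speeds (tokens/second) from the two tables in this article.
SPEEDS = {
    "Q4KM": {"prompt": 4467.46, "generation": 89.87},
    "F16": {"prompt": 5835.41, "generation": 32.67},
}


def total_seconds(config: str, prompt_tokens: int, output_tokens: int) -> float:
    """Simple latency model: time to read the prompt plus time to generate the reply."""
    s = SPEEDS[config]
    return prompt_tokens / s["prompt"] + output_tokens / s["generation"]


def break_even_prompt_to_output_ratio() -> float:
    """Prompt/output token ratio above which F16 finishes sooner than Q4KM."""
    q, f = SPEEDS["Q4KM"], SPEEDS["F16"]
    gen_penalty = 1 / f["generation"] - 1 / q["generation"]  # extra seconds per output token on F16
    prompt_gain = 1 / q["prompt"] - 1 / f["prompt"]          # seconds saved per prompt token on F16
    return gen_penalty / prompt_gain


if __name__ == "__main__":
    # Example workloads (prompt tokens, output tokens) chosen for illustration only.
    for prompt, output in [(200, 300), (4000, 200), (8000, 10)]:
        times = {c: total_seconds(c, prompt, output) for c in SPEEDS}
        print(prompt, output, {c: round(t, 2) for c, t in times.items()})
    print(f"break-even ratio: {break_even_prompt_to_output_ratio():.0f}")
```

Under this simple model, F16 only pulls ahead when the prompt is several hundred times longer than the output, so Q4KM is the faster choice for most interactive use.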

Llama3 70B on RTX 5000 Ada 32GB: The Missing Piece

Unfortunately, we don't have data for Llama3 70B on this specific GPU configuration. This is because the benchmarks for this particular setup are not publicly available at present.

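This doesn't mean you can't run Llama3 70B on the RTX 5000 Ada 32GB, but expect a tight fit: 70 billion weights at 4 bits each come to about 35 GB before any cache or runtime overhead, which already exceeds 32 GB of VRAM. In practice that likely means a more aggressive quantization or offloading part of the model to the CPU, and we don't have performance figures for either just yet.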
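Whether a model of this size can fit comes down to simple arithmetic on weight sizes. Here is a minimal sketch, assuming a round 70 billion parameters and a hypothetical 2 GB allowance for the KV cache and runtime buffers. Real usage depends on context length and the inference software.

```python
VRAM_GB = 32.0
PARAMS_70B = 70e9  # assumption: round figure for Llama3 70B


def weight_size_gb(params: float, bits_per_weight: float) -> float:
    """Size of the weights alone, in gigabytes."""
    return params * bits_per_weight / 8 / 1e9


def fits_in_vram(params: float, bits_per_weight: float, vram_gb: float,
                 overhead_gb: float = 2.0) -> bool:
    """True if weights plus a fixed overhead fit entirely in GPU memory.

    overhead_gb is a hypothetical allowance for KV cache and runtime buffers.
    """
    return weight_size_gb(params, bits_per_weight) + overhead_gb <= vram_gb


if __name__ == "__main__":
    for bits in (16.0, 8.0, 4.0, 3.0, 2.0):
        size = weight_size_gb(PARAMS_70B, bits)
        verdict = "fits" if fits_in_vram(PARAMS_70B, bits, VRAM_GB) else "does not fit"
        print(f"{bits:>4.0f} bits/weight: ~{size:.1f} GB -> {verdict} in {VRAM_GB:.0f} GB")
```

Under these assumptions, 4 bits per weight is already over budget, while roughly 3 bits per weight or fewer would leave some headroom.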

Conclusion: Your LLM's New Home

You've got a powerhouse GPU, and now you have the insights to select the right LLM configuration for your needs. Whether you're focused on speed, accuracy, or finding the perfect balance between the two, you're now equipped to make informed decisions.

Remember, the world of LLMs is constantly evolving, with new benchmarks and model releases coming out all the time. Stay tuned for future updates and keep exploring the exciting possibilities of running LLMs locally!

FAQ

Q: How do I choose the right LLM configuration for my needs?

A: It depends on your priorities:

- Speed: If speed is crucial, go for Q4KM quantization.

- Accuracy: If you need the highest accuracy, F16, optionally with fine-tuning, might be a better option.

- Memory: If you have limited GPU memory, Q4KM helps you utilize your hardware more efficiently.

Q: Can I run Llama3 70B on the RTX 5000 Ada 32GB?

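A: We don't have data for Llama3 70B on this specific GPU. It may be possible, but at 4 bits per weight the model is about 35 GB, more than the card's 32 GB of VRAM, so it would likely need a more aggressive quantization or partial CPU offloading. Further research and experimentation may reveal its performance capabilities.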

Q: What is quantization?

A: Quantization is a technique used to reduce the size of a model by representing its weights (the numerical parameters that define the model) using a smaller number of bits. This makes the model take up less memory and allows it to run faster on hardware with limited resources.

Q: What are tokens?

A: Tokens are the basic building blocks of text. They can be individual words, punctuation marks, or even sub-word units like prefixes or suffixes. LLMs process text by breaking it down into tokens and then generating tokens as output.

Keywords

LLM, Llama3, Llama3 8B, NVIDIA RTX 5000 Ada 32GB, token generation speed, quantization, Q4KM, F16, performance benchmarks, model processing speed, use cases, workarounds, GPU, machine learning, natural language processing, AI, deep learning