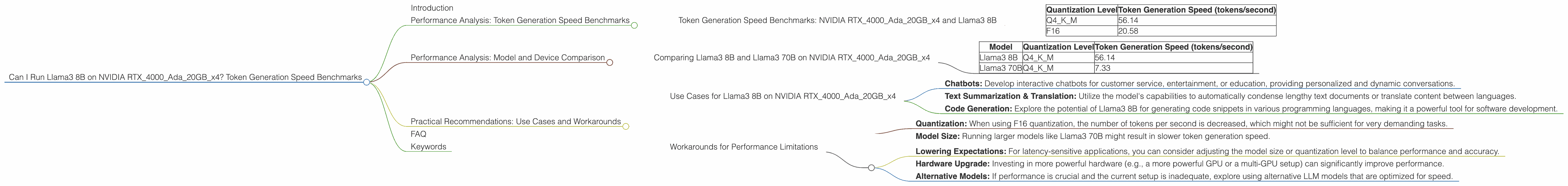

Can I Run Llama3 8B on NVIDIA RTX 4000 Ada 20GB x4? Token Generation Speed Benchmarks

Introduction

The world of large language models (LLMs) is evolving rapidly, and with it the demand for hardware powerful enough to run these models locally. LLMs are large neural networks that require significant computational resources, especially memory. This article examines the performance of Llama3 8B, one of the most popular open-source LLMs, on a specific hardware configuration: four NVIDIA RTX 4000 Ada GPUs with 20 GB of memory each (NVIDIA RTX 4000 Ada 20GB x4). We'll look at token generation speed benchmarks and compare performance across model quantization levels.

Imagine being able to run a "mini" version of a powerful language model like ChatGPT on your own machine! No reliance on internet connectivity and no waiting for responses from remote servers: everything happens right there, on your computer. That's the promise of local LLMs, and this article is your guide to how they perform on one specific hardware setup. Let's dive in!

Performance Analysis: Token Generation Speed Benchmarks

Token Generation Speed Benchmarks: NVIDIA RTX 4000 Ada 20GB x4 and Llama3 8B

Token generation speed measures how quickly the model produces output text, in tokens per second. This is crucial for real-time applications like chatbots, where responses need to feel quick and natural. Here is how Llama3 8B performs on the NVIDIA RTX 4000 Ada 20GB x4 setup:

| Quantization Level | Token Generation Speed (tokens/second) |
|---|---|
| Q4_K_M | 56.14 |
| F16 | 20.58 |
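To make these rates more tangible, the short sketch below converts the measured speeds into time per token and into the time needed for a reply. The 500-token reply length is an arbitrary example chosen for illustration, and the calculation covers generation only, not prompt processing.

```python
# Measured generation speeds from the table above (tokens/second).
BENCHMARKS = {"Q4_K_M": 56.14, "F16": 20.58}


def ms_per_token(tokens_per_second: float) -> float:
    """Average time to emit one token, in milliseconds."""
    return 1000.0 / tokens_per_second


def seconds_for_reply(tokens_per_second: float, reply_tokens: int) -> float:
    """Generation time for a reply of `reply_tokens` tokens (prompt processing excluded)."""
    return reply_tokens / tokens_per_second


if __name__ == "__main__":
    for quant, tps in BENCHMARKS.items():
        print(f"{quant}: {ms_per_token(tps):.1f} ms/token, "
              f"{seconds_for_reply(tps, 500):.1f} s for a 500-token reply")
    speedup = BENCHMARKS["Q4_K_M"] / BENCHMARKS["F16"]
    print(f"Q4_K_M speedup over F16: {speedup:.2f}x")
```

With these numbers, Q4_K_M works out to roughly 17.8 ms per token (about 8.9 s for 500 tokens), while F16 needs roughly 48.6 ms per token (about 24.3 s).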

Key Takeaways:

- Quantization Matters: Performance depends heavily on the quantization level. Q4_K_M, which compresses the model's weights to roughly 4 bits each, generates tokens about 2.7 times faster than F16 (16-bit half precision): 56.14 vs. 20.58 tokens/second.

- High Performance: Even at F16, which keeps the weights unquantized at half precision, the NVIDIA RTX 4000 Ada 20GB x4 generates about 20 tokens per second, a respectable speed. This suggests the hardware is well suited to running LLMs of this size locally.
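One common way to reason about why smaller weights generate faster: single-stream token generation is usually memory-bandwidth bound, because every new token requires reading all of the model's weights once. The sketch below turns that into a rough speed ceiling. Several inputs are assumptions, not part of the benchmark: about 8.03 billion parameters for Llama3 8B, about 360 GB/s of memory bandwidth per RTX 4000 Ada (the spec-sheet figure), about 4.85 bits per weight on average for Q4_K_M, and a layer-by-layer split across the four GPUs so that only one GPU reads weights at any moment.

```python
# Back-of-envelope ceiling for single-stream token generation, assuming decoding is
# memory-bandwidth bound: each generated token streams every weight from VRAM once.
# ASSUMPTIONS (not measured in this article): parameter count, per-GPU bandwidth,
# average bits/weight for Q4_K_M, and a layer split where one GPU reads at a time.
PARAMS = 8.03e9                 # approximate Llama3 8B parameter count
BANDWIDTH_BYTES_PER_S = 360e9   # assumed RTX 4000 Ada memory bandwidth
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.85}
MEASURED_TPS = {"F16": 20.58, "Q4_K_M": 56.14}  # from the benchmark table


def weight_bytes(params: float, bits_per_weight: float) -> float:
    """Total size of the weights in bytes."""
    return params * bits_per_weight / 8


def max_tokens_per_second(params: float, bits_per_weight: float, bandwidth: float) -> float:
    """Upper bound on tokens/second if reading the weights were the only cost."""
    return bandwidth / weight_bytes(params, bits_per_weight)


if __name__ == "__main__":
    for quant, bits in BITS_PER_WEIGHT.items():
        ceiling = max_tokens_per_second(PARAMS, bits, BANDWIDTH_BYTES_PER_S)
        print(f"{quant}: weights ~{weight_bytes(PARAMS, bits) / 1e9:.1f} GB, "
              f"ceiling ~{ceiling:.0f} tok/s, measured {MEASURED_TPS[quant]} tok/s")
```

Under these assumptions the ceilings come out at roughly 22 tokens/second for F16 and 74 for Q4_K_M. Both measured speeds sit below their ceilings, which is consistent with a memory-bound picture; the larger gap for Q4_K_M is possibly due to fixed per-token overheads weighing more when the weights are small. The model ignores KV-cache reads, so the ceilings are optimistic.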

Performance Analysis: Model and Device Comparison

Comparing Llama3 8B and Llama3 70B on NVIDIA RTX 4000 Ada 20GB x4

It's interesting to compare the performance of Llama3 8B with its larger sibling, Llama3 70B, on the same hardware and at the same quantization level. This shows how scaling up the model size affects generation speed.

| Model | Quantization Level | Token Generation Speed (tokens/second) |
|---|---|---|
| Llama3 8B | Q4_K_M | 56.14 |
| Llama3 70B | Q4_K_M | 7.33 |

Key Takeaways:

- Size Matters: The larger Llama3 70B model, despite its stronger capabilities, generates tokens about 7.7 times more slowly than Llama3 8B (7.33 vs. 56.14 tokens/second). This is a common trend in LLMs: increasing the model size usually comes at the cost of speed.

- Trade-offs: The choice between using a larger or smaller model depends on your application's requirements. If speed is paramount, a smaller model might be the better choice. For tasks requiring extensive knowledge and complex reasoning, a larger model might be necessary.
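A quick way to put the size comparison in perspective is to set the slowdown next to the growth in parameter count. The speeds below are the Q4_K_M measurements from the table above; the parameter counts (about 8.03 billion and 70.6 billion) are approximate figures assumed for this sketch.

```python
# Compare how much slower Llama3 70B is with how much larger it is.
# Parameter counts are ASSUMED approximations; speeds are the Q4_K_M measurements.
PARAMS = {"Llama3 8B": 8.03e9, "Llama3 70B": 70.6e9}
Q4_K_M_TPS = {"Llama3 8B": 56.14, "Llama3 70B": 7.33}

size_ratio = PARAMS["Llama3 70B"] / PARAMS["Llama3 8B"]      # how much bigger
slowdown = Q4_K_M_TPS["Llama3 8B"] / Q4_K_M_TPS["Llama3 70B"]  # how much slower

if __name__ == "__main__":
    print(f"70B has {size_ratio:.1f}x the parameters and generates {slowdown:.1f}x more slowly")
```

The 70B model has roughly 8.8 times the parameters and runs roughly 7.7 times more slowly, so speed drops approximately in proportion to model size, which is what you would expect if generation is dominated by reading the weights.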

Practical Recommendations: Use Cases and Workarounds

Use Cases for Llama3 8B on NVIDIA RTX 4000 Ada 20GB x4

The combination of Llama3 8B and the NVIDIA RTX 4000 Ada 20GB x4 presents exciting possibilities for running LLMs locally. Here are some potential use cases:

- Chatbots: Develop interactive chatbots for customer service, entertainment, or education, providing personalized and dynamic conversations.

- Text Summarization & Translation: Utilize the model's capabilities to automatically condense lengthy text documents or translate content between languages.

- Code Generation: Explore the potential of Llama3 8B for generating code snippets in various programming languages, which could make it a useful aid for software development.

Workarounds for Performance Limitations

While Llama3 8B performs well on the NVIDIA RTX 4000 Ada 20GB x4, you may run into performance limitations in some situations:

- Quantization: Running the model at F16 cuts generation speed to about 20.58 tokens/second, less than half the Q4_K_M rate, which might not be sufficient for very demanding tasks.

- Model Size: Larger models like Llama3 70B generate tokens much more slowly (7.33 tokens/second at Q4_K_M).

Here are some workarounds for handling these limitations:

- Adjusting the Trade-off: For latency-sensitive applications, consider a smaller model or a lower-precision quantization level, trading some output quality for speed.

- Hardware Upgrade: Investing in more capable hardware, such as GPUs with higher memory bandwidth or more VRAM, can improve performance.

- Alternative Models: If performance is crucial and the current setup is inadequate, consider alternative models that are optimized for speed.
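The size-versus-precision trade-off behind these workarounds can be made concrete with a small helper that picks, from the configurations measured in this article, the highest-quality one that still meets a speed target. The ranking it uses (bigger model first, then higher precision) is a hypothetical heuristic assumed for this sketch, not something the benchmarks measure.

```python
from typing import Optional, Tuple

# Measured results from this article: (model, quantization, tokens/second).
MEASUREMENTS = [
    ("Llama3 8B", "F16", 20.58),
    ("Llama3 8B", "Q4_K_M", 56.14),
    ("Llama3 70B", "Q4_K_M", 7.33),
]

# Hypothetical quality ranking: a bigger model beats a smaller one,
# and within a model, higher precision beats lower precision.
MODEL_RANK = {"Llama3 8B": 0, "Llama3 70B": 1}
PRECISION_RANK = {"Q4_K_M": 0, "F16": 1}


def pick_config(min_tokens_per_second: float) -> Optional[Tuple[str, str, float]]:
    """Return the best-ranked measured config meeting the speed target, or None."""
    candidates = [m for m in MEASUREMENTS if m[2] >= min_tokens_per_second]
    if not candidates:
        return None
    return max(candidates, key=lambda m: (MODEL_RANK[m[0]], PRECISION_RANK[m[1]]))


if __name__ == "__main__":
    for target in (5, 15, 30, 100):
        print(target, "tok/s ->", pick_config(target))
```

For example, a 5 tokens/second target still allows Llama3 70B, a 15 tokens/second target selects Llama3 8B at F16, a 30 tokens/second target requires Llama3 8B at Q4_K_M, and no measured configuration reaches 100 tokens/second.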

FAQ

Q: What is quantization?

A: Quantization is a technique that stores a neural network's weights as lower-precision numbers, reducing its memory footprint. Because token generation is largely limited by how fast the weights can be read from memory, smaller weights also mean faster generation. Think of it like compressing a large image file: you lose some detail but gain a much smaller file.
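To illustrate the idea, the sketch below applies a simple symmetric "absmax" 4-bit quantization to one small block of weights: each block stores one floating-point scale plus a 4-bit integer per weight. This is a deliberately simplified scheme chosen for illustration; it is not the actual Q4_K_M format, which uses a more elaborate block structure.

```python
from typing import List, Tuple


def quantize_block_4bit(weights: List[float]) -> Tuple[float, List[int]]:
    """Symmetric absmax quantization of one block to signed 4-bit integers.

    The scale maps the largest |weight| to 7, so values land in [-7, 7];
    the clamp to [-8, 7] (the signed 4-bit range) is only a safety net.
    """
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0  # avoid dividing by zero
    q = [max(-8, min(7, round(w / scale))) for w in weights]
    return scale, q


def dequantize_block(scale: float, q: List[int]) -> List[float]:
    """Recover approximate weights from the scale and the 4-bit integers."""
    return [scale * v for v in q]


if __name__ == "__main__":
    weights = [0.12, -0.5, 0.33, 0.07, -0.21, 0.49, -0.02, 0.3]
    scale, q = quantize_block_4bit(weights)
    restored = dequantize_block(scale, q)
    max_err = max(abs(a - b) for a, b in zip(weights, restored))
    print("ints:", q)
    print("max reconstruction error:", max_err, "(at most scale / 2 =", scale / 2, ")")
```

Like lossy image compression, the reconstructed weights are close but not identical: rounding keeps each error within half a quantization step (scale / 2), while storage drops from 16 bits to 4 bits per weight plus one scale per block.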

Q: Why is token generation speed important?

A: You can think of tokens as Lego bricks for language. LLMs break text down into these tokens to process it, and they produce output one token at a time. The faster the model can generate tokens, the faster it can respond to prompts and produce new text.
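If you want to measure generation speed on your own setup, the basic calculation is the number of generated tokens divided by elapsed wall-clock time. The sketch below times any token stream; `fake_generator` is a hypothetical stand-in for a real model's streaming output, used only so the example runs without a model.

```python
import time
from typing import Callable, Iterable, Iterator


def tokens_per_second(generate: Callable[[], Iterable[str]]) -> float:
    """Time a token stream and return generated tokens per wall-clock second."""
    start = time.perf_counter()
    count = sum(1 for _ in generate())  # consume the stream, counting tokens
    elapsed = time.perf_counter() - start
    if count == 0:
        return 0.0
    return count / elapsed


def fake_generator(n_tokens: int = 50, delay_s: float = 0.01) -> Iterator[str]:
    """Hypothetical stand-in for a model: yields one token every `delay_s` seconds."""
    for i in range(n_tokens):
        time.sleep(delay_s)
        yield f"tok{i}"


if __name__ == "__main__":
    rate = tokens_per_second(lambda: fake_generator(50, 0.01))
    print(f"{rate:.1f} tokens/second")  # a little under 100 for this fake stream
```

Because the timer starts before the first token, a real model's measurement would also include prompt processing; benchmark tools often report prompt processing and generation speed separately for that reason.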

Q: What are the limitations of running LLMs locally?

A: Local LLMs are limited by the available hardware. If your GPUs lack enough memory or speed, the model might run slowly or fail to load at all. Also, training a large LLM locally is very resource-intensive and often impractical.
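A rough first check is whether a model's weights fit in the combined VRAM of your GPUs. The sketch below assumes the weights dominate memory use and reserves a flat, hypothetical overhead per GPU for the KV cache, activations, and runtime buffers; the parameter counts and the ~4.85 bits/weight figure for Q4_K_M are approximations assumed for illustration.

```python
# Rough check of whether a model's weights fit in the GPUs' combined VRAM.
# ASSUMPTIONS: weights dominate memory use; `overhead_per_gpu_gb` is a hypothetical
# flat reserve for KV cache, activations and runtime buffers; bits/weight are approximate.


def weight_gb(params: float, bits_per_weight: float) -> float:
    """Size of the weights in gigabytes (1 GB = 1e9 bytes)."""
    return params * bits_per_weight / 8 / 1e9


def fits(params: float, bits_per_weight: float, num_gpus: int = 4,
         vram_per_gpu_gb: float = 20.0, overhead_per_gpu_gb: float = 1.5) -> bool:
    """True if the weights fit in the usable VRAM summed across all GPUs."""
    usable_gb = num_gpus * (vram_per_gpu_gb - overhead_per_gpu_gb)
    return weight_gb(params, bits_per_weight) <= usable_gb


if __name__ == "__main__":
    print("Llama3 8B F16:", fits(8.03e9, 16.0))               # ~16 GB of weights
    print("Llama3 70B Q4_K_M:", fits(70.6e9, 4.85))           # ~43 GB of weights
    print("Llama3 70B F16:", fits(70.6e9, 16.0))              # ~141 GB of weights
    print("Llama3 70B Q4_K_M, 1 GPU:", fits(70.6e9, 4.85, num_gpus=1))
```

Under these assumptions, Llama3 8B fits comfortably even at F16 and Llama3 70B fits across four 20 GB cards at Q4_K_M, but not at F16 and not on a single card.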

Q: Are there any other software options for running LLMs locally?

A: Yes, there are other software options available, such as:

- llama.cpp: A C/C++ library for running LLMs, including Llama models, on your CPU or GPU.
- GPT-NeoX: EleutherAI's library for training and running large GPT-style language models on GPUs.
- Hugging Face Transformers: A popular library for working with all kinds of NLP models, including LLMs. It provides access to many pre-trained models and tools for fine-tuning them.

Keywords

Llama3 8B, NVIDIA RTX 4000 Ada 20GB x4, Token Generation Speed, Quantization, Local LLMs, Large Language Models, Performance Benchmarks, NLP, AI, Open Source, GPU, Deep Learning, LLMs on Desktop, Model Size, Practical Recommendations, Chatbots, Text Summarization, Code Generation, Conversational AI, GPU Performance, Hardware Limitations