Can I Run Llama3 8B on NVIDIA RTX 4000 Ada 20GB? Token Generation Speed Benchmarks

Introduction: Diving Deep into Local LLMs with the NVIDIA RTX 4000 Ada 20GB

Local Large Language Models (LLMs) are revolutionizing the way we interact with technology. These powerful AI models can generate text, translate languages, write different kinds of creative content, and answer your questions in an informative way. But running these models locally requires some serious hardware muscle. Today's article focuses on the NVIDIA RTX 4000 Ada 20GB, a popular GPU, and its ability to handle the Llama3 8B model. We'll dive into the token generation benchmarks, explore the impact of quantization, and give you practical recommendations for making the most of this combination.

Performance Analysis: Token Generation Speed Benchmarks - NVIDIA RTX 4000 Ada 20GB and Llama3 8B

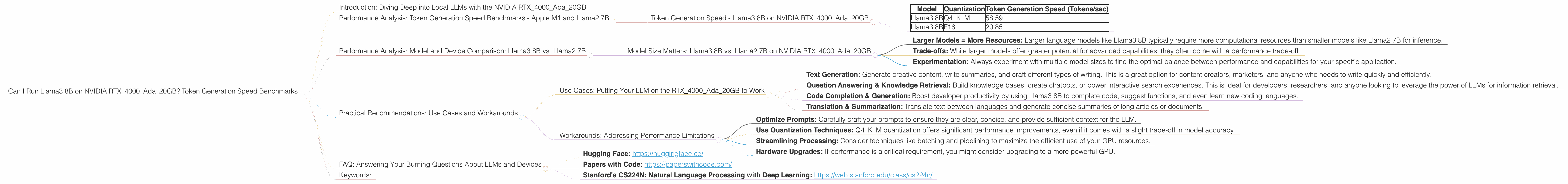

Token Generation Speed - Llama3 8B on NVIDIA RTX 4000 Ada 20GB

Let's kick things off with the token generation speed, which is a key metric for measuring LLM performance. It represents the number of tokens the model can generate per second. Here's how the NVIDIA RTX 4000 Ada 20GB performs with Llama3 8B:

| Model | Quantization | Token Generation Speed (Tokens/sec) |
|---|---|---|
| Llama3 8B | Q4_K_M | 58.59 |
| Llama3 8B | F16 | 20.85 |

Key Observations:

- Quantization Matters: As expected, quantizing the Llama3 8B model significantly improves generation speed. Q4_K_M, which stores the model's weights at roughly 4 bits each, generates tokens about 2.8x faster than the unquantized F16 (16-bit) version (58.59 vs. 20.85 tokens/sec).

- Balanced Performance: The NVIDIA RTX 4000 Ada 20GB delivers respectable token generation speeds for Llama3 8B, especially with Q4_K_M quantization. These speeds can vary with the specific prompt, context size, and other factors.
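To put the two figures in perspective, here is a small sketch of the arithmetic. The speeds come from the table above; the 500-token reply length is a hypothetical value chosen only for illustration, and the calculation assumes a steady generation rate with no prompt-processing time.

```python
# Figures copied from the benchmark table above (tokens per second).
SPEEDS_TOK_PER_S = {"Q4_K_M": 58.59, "F16": 20.85}


def speedup(fast: float, slow: float) -> float:
    """Return how many times faster `fast` is than `slow`."""
    return fast / slow


def seconds_for(tokens: int, tok_per_s: float) -> float:
    """Return the time needed to generate `tokens` at a steady rate."""
    return tokens / tok_per_s


ratio = speedup(SPEEDS_TOK_PER_S["Q4_K_M"], SPEEDS_TOK_PER_S["F16"])
print(f"Q4_K_M vs F16 speedup: {ratio:.2f}x")

REPLY_TOKENS = 500  # hypothetical reply length, for illustration only
for name, rate in SPEEDS_TOK_PER_S.items():
    wait = seconds_for(REPLY_TOKENS, rate)
    print(f"{name}: {wait:.1f} s for {REPLY_TOKENS} tokens")
```

Under these assumptions, a 500-token reply takes roughly 8.5 seconds with Q4_K_M and roughly 24 seconds with F16.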

Performance Analysis: Model and Device Comparison: Llama3 8B vs. Llama2 7B

Model Size Matters: Llama3 8B vs. Llama2 7B on NVIDIA RTX 4000 Ada 20GB

To get a better understanding of the RTX 4000 Ada 20GB's capabilities, let's compare Llama3 8B with Llama2 7B. We don't have direct performance data for Llama2 7B on this GPU, so the points below are general observations about model size rather than measured results.

General Observations:

- Larger Models = More Resources: Larger language models like Llama3 8B typically require more computational resources than smaller models like Llama2 7B for inference.

- Trade-offs: While larger models offer greater potential for advanced capabilities, they often generate tokens more slowly and need more memory on the same hardware.

- Experimentation: Always experiment with multiple model sizes to find the optimal balance between performance and capabilities for your specific application.

Practical Recommendations: Use Cases and Workarounds

Use Cases: Putting Your LLM on the RTX 4000 Ada 20GB to Work

Here are some exciting use cases where the NVIDIA RTX 4000 Ada 20GB paired with Llama3 8B could shine:

- Text Generation: Generate creative content, write summaries, and craft different types of writing. This is a great option for content creators, marketers, and anyone who needs to write quickly and efficiently.

- Question Answering & Knowledge Retrieval: Build knowledge bases, create chatbots, or power interactive search experiences. This is ideal for developers, researchers, and anyone looking to leverage the power of LLMs for information retrieval.

- Code Completion & Generation: Boost developer productivity by using Llama3 8B to complete code, suggest functions, and even help you learn new programming languages.

- Translation & Summarization: Translate text between languages and generate concise summaries of long articles or documents.

Workarounds: Addressing Performance Limitations

While the RTX 4000 Ada 20GB delivers solid performance for Llama3 8B, there are some workarounds for potential bottlenecks:

- Optimize Prompts: Carefully craft your prompts to ensure they are clear, concise, and provide sufficient context for the LLM.

- Use Quantization Techniques: Q4_K_M quantization offers significant performance improvements (58.59 vs. 20.85 tokens/sec for F16 in the benchmarks above), even if it comes with a slight trade-off in model accuracy.

- Streamline Processing: Consider techniques like batching and pipelining to maximize the efficient use of your GPU resources.

- Hardware Upgrades: If performance is a critical requirement, you might consider upgrading to a more powerful GPU.
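The workarounds above are easier to evaluate if you measure tokens per second on your own setup before and after each change. The following is a minimal sketch of such a timing harness, not a definitive benchmark. `fake_generate` is a hypothetical stand-in that only sleeps and yields placeholder tokens; you would replace it with a function that streams tokens from whatever inference library you actually use.

```python
import time
from typing import Callable, Iterable, Iterator


def measure_tokens_per_second(
    generate: Callable[[str, int], Iterable[str]],
    prompt: str,
    max_tokens: int,
    clock: Callable[[], float] = time.perf_counter,
) -> float:
    """Time one generation call and return tokens produced per second."""
    start = clock()
    produced = sum(1 for _ in generate(prompt, max_tokens))
    elapsed = clock() - start
    if elapsed <= 0:
        raise ValueError("elapsed time must be positive")
    return produced / elapsed


def fake_generate(prompt: str, max_tokens: int) -> Iterator[str]:
    """Hypothetical stand-in for a real model: yields placeholder tokens."""
    for i in range(max_tokens):
        time.sleep(0.001)  # pretend each token takes about 1 ms
        yield f"tok{i}"


rate = measure_tokens_per_second(fake_generate, "Hello", 20)
print(f"{rate:.1f} tokens/sec (stub generator, not a real model)")
```

Timing the whole call includes prompt processing, so for a fair comparison keep the prompt and `max_tokens` identical across runs and average several measurements.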

FAQ: Answering Your Burning Questions About LLMs and Devices

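Q: What's the difference between the F16 and Q4_K_M formats?

A: The two formats store the model's weights at different precisions. Fewer bits per weight means less data to read from GPU memory for each generated token, which is typically what makes inference faster.

- F16 (Half-Precision Floating Point): Stores each weight as a 16-bit floating-point number. Strictly speaking, this is not quantization; it is the unquantized baseline in these benchmarks.

- Q4_K_M: A 4-bit "k-quant" format used by llama.cpp. Weights are quantized in blocks that each carry their own scaling factors, and the "M" denotes the medium variant, which keeps some tensors at higher precision to limit accuracy loss.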
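To make the idea of 4-bit weights concrete, here is a toy sketch of blockwise quantization. It is a deliberate simplification and not the actual Q4_K_M layout: the block size, the min/scale scheme, and the function names are all assumptions made for illustration.

```python
import math
from typing import List, Tuple

BLOCK_SIZE = 32  # assumed block length for this toy example
LEVELS = 15      # 4 bits give integer codes 0..15


def quantize_block(weights: List[float]) -> Tuple[float, float, List[int]]:
    """Map floats to 4-bit codes plus a per-block scale and minimum."""
    lo, hi = min(weights), max(weights)
    scale = (hi - lo) / LEVELS if hi > lo else 1.0
    codes = [round((w - lo) / scale) for w in weights]
    return scale, lo, codes


def dequantize_block(scale: float, lo: float, codes: List[int]) -> List[float]:
    """Reconstruct approximate floats from the 4-bit codes."""
    return [lo + scale * c for c in codes]


# Made-up weights, just to exercise the round trip.
block = [math.sin(i) for i in range(BLOCK_SIZE)]
scale, lo, codes = quantize_block(block)
restored = dequantize_block(scale, lo, codes)
max_error = max(abs(a - b) for a, b in zip(block, restored))
print(f"scale={scale:.4f}, max round-trip error={max_error:.4f}")
```

Each weight is reduced to one of 16 codes, so the reconstruction error per weight is bounded by half the block's scale. That bounded error is the accuracy cost traded for a much smaller model.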

Q: How does the size of an LLM affect performance on a given GPU?

A: Larger LLMs require more computational resources, which can impact performance. A larger model may need more memory and processing power to run smoothly.
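A rough way to check whether a model fits on a given GPU is to estimate the memory taken by its weights: parameter count times bits per weight, divided by 8. The sketch below applies that to this article's setup. The parameter count (about 8.03 billion) and the Q4_K_M average of about 4.8 bits per weight are assumptions, so check the actual model file size for exact figures. The estimate covers weights only and ignores the KV cache, activations, and framework overhead.

```python
PARAMS = 8.03e9   # assumed parameter count for Llama3 8B
VRAM_GB = 20.0    # memory on the RTX 4000 Ada 20GB, in decimal gigabytes

# Average bits per weight; the Q4_K_M figure is an approximation.
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.8}


def weight_gb(params: float, bits_per_weight: float) -> float:
    """Return the approximate size of the weights in decimal gigabytes."""
    return params * bits_per_weight / 8 / 1e9


for name, bits in BITS_PER_WEIGHT.items():
    size = weight_gb(PARAMS, bits)
    headroom = VRAM_GB - size
    print(f"{name}: ~{size:.1f} GB of weights, ~{headroom:.1f} GB left over")
```

With these assumptions, the F16 weights come to about 16 GB, which fits in 20 GB but leaves limited room for long contexts, while the Q4_K_M weights come to about 5 GB.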

Q: What other GPUs are good for running LLMs locally?

A: The NVIDIA RTX 4000 Ada 20GB is a good choice for running LLMs locally, but other options exist. For example, you could consider the RTX 4080, RTX 4090, or even a GPU from AMD's Radeon RX 7000 series.

Q: What are some good resources for learning more about LLMs?

A: Here are a few resources that can get you started:

- Hugging Face: https://huggingface.co/

- Papers with Code: https://paperswithcode.com/

- Stanford's CS224N: Natural Language Processing with Deep Learning: https://web.stanford.edu/class/cs224n/

Keywords:

Large Language Models, Llama3, Llama2, NVIDIA RTX 4000 Ada 20GB, GPU, Token Generation Speed, Quantization, Q4_K_M, F16, Performance Benchmarks, Local LLM, AI, Machine Learning, Natural Language Processing, NLP, Inference, Text Generation, Question Answering, Code Completion, Translation, Summarization, Model Size, Hardware Requirements