Can I Run Llama3 8B on NVIDIA L40S 48GB? Token Generation Speed Benchmarks

Introduction

The world of large language models (LLMs) is rapidly evolving, with new models and advancements emerging at breakneck speed. One of the most exciting developments is the ability to run these models locally, on your own hardware. This opens up a world of possibilities, from personal AI assistants to creative text generation and even running your own LLM-powered applications. But before you dive headfirst into local model deployment, you need to understand the performance characteristics of different models and hardware configurations.

This article focuses on the NVIDIA L40S_48GB GPU, a popular choice for demanding AI workloads and a potential powerhouse for local LLM deployments. We'll delve into the performance of the Llama3 8B model on this GPU, comparing different quantization levels and exploring its token generation speed. We'll also examine the practical implications of these benchmarks for developers and provide recommendations for use cases and potential workarounds.

Performance Analysis: Token Generation Speed Benchmarks

Let's cut to the chase: how fast can the L40S_48GB GPU generate tokens with the Llama3 8B model? To answer this, we'll analyze the token generation speed benchmarks for different quantization levels. Quantization is a technique used to reduce the model's size and memory footprint, often at the cost of slight accuracy reduction.

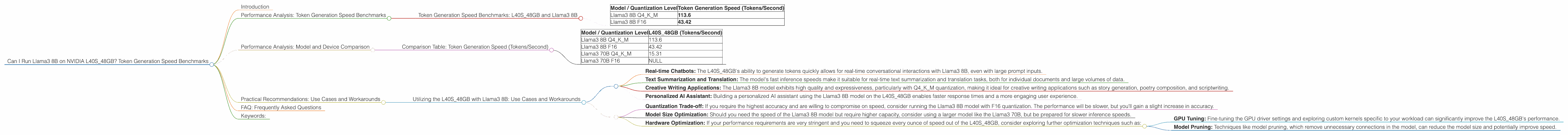

Token Generation Speed Benchmarks: L40S_48GB and Llama3 8B

| Model / Quantization Level | Token Generation Speed (Tokens/Second) |
|---|---|
| Llama3 8B Q4_K_M | 113.6 |
| Llama3 8B F16 | 43.42 |

Key Observation: Llama3 8B on the L40S_48GB GPU shows a large performance difference between the two formats. Q4_K_M, a llama.cpp "k-quant" format that stores most weights in 4-bit blocks with shared scaling factors (keeping a few tensors at higher precision), generates about 2.6 times as many tokens per second as F16, the unquantized 16-bit half-precision format (113.6 vs 43.42 tokens/second).

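This difference is expected. When generating one token at a time, the GPU must read essentially all of the model's weights from memory for every token, so speed is limited mainly by memory bandwidth rather than raw compute. Q4_K_M weights take up roughly a third of the space of F16 weights, so each token requires moving far less data through the memory bus, and generation runs correspondingly faster.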

Analogous Example: Think of the GPU's memory bus as a highway with a fixed speed limit, and each generated token as a trip that must haul every model weight across it. F16 weights are roughly three times the size of Q4_K_M weights, so every trip needs about three times as many truckloads. The highway is the same in both cases; the Q4_K_M load is simply lighter, so each trip finishes sooner.
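Because single-stream token generation is usually memory-bandwidth bound (each new token streams essentially all weights from VRAM once), a rough speed ceiling is memory bandwidth divided by the size of the weights. The sketch below compares that ceiling with the measured results in the benchmark table above. It assumes the L40S's published memory bandwidth of 864 GB/s, about 8.03 billion parameters for Llama3 8B, and an average of about 4.9 bits per weight for Q4_K_M (an approximation that varies by GGUF file). It ignores the KV cache and compute overhead, so real speeds should land below the ceiling.

```python
# Rough upper bound on single-stream decode speed, assuming decoding is
# memory-bandwidth bound (every generated token reads all weights once).

BANDWIDTH_GB_S = 864.0   # assumed: NVIDIA's published L40S memory bandwidth
PARAMS_8B = 8.03e9       # assumed: approximate Llama3 8B parameter count

# Assumed average bits per weight; the Q4_K_M figure is approximate.
BITS_PER_WEIGHT = {"Q4_K_M": 4.9, "F16": 16.0}

# Measured results from the benchmark table (tokens/second).
MEASURED_TPS = {"Q4_K_M": 113.6, "F16": 43.42}


def weight_bytes(params: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in bytes."""
    return params * bits_per_weight / 8


def decode_ceiling_tps(params: float, bits_per_weight: float,
                       bandwidth_gb_s: float = BANDWIDTH_GB_S) -> float:
    """Tokens/second if each token had to stream every weight exactly once."""
    return bandwidth_gb_s * 1e9 / weight_bytes(params, bits_per_weight)


for quant, bits in BITS_PER_WEIGHT.items():
    ceiling = decode_ceiling_tps(PARAMS_8B, bits)
    efficiency = MEASURED_TPS[quant] / ceiling
    print(f"{quant:7s} ceiling ~{ceiling:6.1f} tok/s, "
          f"measured {MEASURED_TPS[quant]:6.2f} ({efficiency:.0%} of ceiling)")
```

Under these assumptions the F16 ceiling is about 54 tokens/second (measured 43.42, roughly 81% of it) and the Q4_K_M ceiling about 176 tokens/second (measured 113.6, roughly 65%). The size ratio of about 3.3 times accounts for most of the observed 2.6 times speedup; the remaining gap is plausibly dequantization work and other per-token overheads that a bandwidth-only model ignores.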

Performance Analysis: Model Size Comparison

While the focus is on the L40S_48GB and Llama3 8B, it helps to compare it briefly with the larger Llama3 70B model on the same GPU. Understanding this landscape is crucial when deciding on the right hardware and model combination for your specific use case.

Important Note: This comparison covers only the benchmark results available for the L40S_48GB; no other GPUs are included.

Comparison Table: Token Generation Speed (Tokens/Second)

| Model / Quantization Level | L40S_48GB (Tokens/Second) |
|---|---|
| Llama3 8B Q4_K_M | 113.6 |
| Llama3 8B F16 | 43.42 |
| Llama3 70B Q4_K_M | 15.31 |
| Llama3 70B F16 | N/A (no result) |

Key Observations:

- Model Size Matters: At Q4_K_M, Llama3 8B generates about 7.4 times faster than Llama3 70B (113.6 vs 15.31 tokens/second). This is expected: a smaller model has far fewer weights to read from memory for each generated token.

- Quantization Plays a Key Role: For Llama3 8B, Q4_K_M is about 2.6 times faster than F16. For Llama3 70B, quantization is what makes the model fit on this GPU at all.

- Data Availability: There is no F16 result for Llama3 70B on the L40S_48GB. This is expected: at 16 bits per weight, 70 billion parameters need roughly 140 GB for the weights alone, far more than the card's 48 GB, so this configuration cannot run on a single L40S_48GB.
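The missing Llama3 70B F16 result follows from simple arithmetic: weight memory is roughly the parameter count times the bytes per weight. The sketch below checks each configuration against 48 GB. It assumes about 8.03 billion and 70.6 billion parameters for the two models and about 4.9 bits per weight on average for Q4_K_M (approximate figures, not values from the benchmark data). It counts weights only; the KV cache, activations and runtime buffers need extra headroom, so "fits" here is a necessary condition, not a guarantee.

```python
# Weights-only memory estimate: does each configuration fit in 48 GB of VRAM?
# Ignores KV cache, activations and runtime buffers, which need extra room.

VRAM_GB = 48.0  # L40S_48GB capacity, treated as 48e9 bytes for simplicity

# Assumed approximate parameter counts and average bits per weight.
PARAMS = {"Llama3 8B": 8.03e9, "Llama3 70B": 70.6e9}
BITS_PER_WEIGHT = {"Q4_K_M": 4.9, "F16": 16.0}


def weights_gb(params: float, bits_per_weight: float) -> float:
    """Approximate weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return params * bits_per_weight / 8 / 1e9


def fits(params: float, bits_per_weight: float, vram_gb: float = VRAM_GB) -> bool:
    """True if the weights alone fit in VRAM (necessary, not sufficient)."""
    return weights_gb(params, bits_per_weight) < vram_gb


for model, n in PARAMS.items():
    for quant, bits in BITS_PER_WEIGHT.items():
        size = weights_gb(n, bits)
        verdict = "fits" if fits(n, bits) else "does NOT fit"
        print(f"{model:10s} {quant:7s} ~{size:6.1f} GB -> {verdict}")
```

Under these assumptions Llama3 70B at F16 needs about 141 GB for weights alone, nearly three times the card's capacity, while at Q4_K_M it needs about 43 GB and fits with only a few gigabytes left for the KV cache.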

Practical Recommendations: Use Cases and Workarounds

Now that we've explored the performance data, let's translate these insights into practical recommendations for developers seeking to utilize the L40S_48GB with the Llama3 8B model.

Utilizing the L40S_48GB with Llama3 8B: Use Cases and Workarounds

Use Cases:

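- Real-time Chatbots: At 113.6 tokens/second (Q4_K_M), Llama3 8B on the L40S_48GB streams text much faster than people read, which suits real-time conversational interfaces. Note that these benchmarks measure generation speed only; the time spent processing long prompts is not covered here.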

- Text Summarization and Translation: The model's fast inference speeds make it suitable for real-time text summarization and translation tasks, both for individual documents and large volumes of data.

- Creative Writing Applications: Llama3 8B produces fluent, expressive text, and Q4_K_M keeps it fast enough for interactive drafting of stories, poetry and scripts. Quantization does not improve output quality, so if you notice degradation, F16 is the higher-fidelity option.

- Personalized AI Assistant: Building a personalized AI assistant using the Llama3 8B model on the L40S_48GB enables faster response times and a more engaging user experience.

Workarounds:

- Quantization Trade-off: If you need the highest output fidelity and can accept lower speed, run Llama3 8B with F16 weights. Expect about 43 tokens/second instead of about 114, in exchange for a small gain in accuracy.

- Model Size Trade-off: If Llama3 8B's output quality is not sufficient for your task, Llama3 70B at Q4_K_M also fits on the L40S_48GB, but expect roughly 15 tokens/second, about 7 times slower.

- Hardware Optimization: If your performance requirements are very stringent and you need to squeeze every ounce of speed out of the L40S_48GB, consider further optimization techniques such as:

  - GPU Tuning: Keeping drivers and the inference runtime up to date, and profiling or writing custom kernels for your specific workload, may improve performance on the L40S_48GB.

  - Model Pruning: Pruning removes less important weights or structures from the model, which can reduce its size and potentially improve speed, usually at some cost in quality.
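Magnitude pruning is one common form of the pruning idea above: the weights with the smallest absolute values are set to zero. The pure-Python sketch below prunes a fixed fraction of a toy weight matrix and is illustrative only. Pruning a real Llama3 checkpoint typically needs calibration or retraining to limit quality loss, and zeroed weights only translate into faster generation if the runtime uses sparsity-aware kernels or the pruning removes whole rows, heads or layers.

```python
# Toy unstructured magnitude pruning: zero out the smallest-magnitude weights.

from typing import List

Matrix = List[List[float]]


def magnitude_prune(weights: Matrix, sparsity: float) -> Matrix:
    """Return a copy of `weights` with the `sparsity` fraction of entries that
    have the smallest absolute values set to 0.0 (ties broken by position)."""
    if not 0.0 <= sparsity <= 1.0:
        raise ValueError("sparsity must be between 0 and 1")
    flat = [(abs(w), r, c) for r, row in enumerate(weights) for c, w in enumerate(row)]
    n_prune = int(len(flat) * sparsity)
    # Positions of the n_prune smallest-magnitude entries.
    to_zero = {(r, c) for _, r, c in sorted(flat)[:n_prune]}
    return [[0.0 if (r, c) in to_zero else w for c, w in enumerate(row)]
            for r, row in enumerate(weights)]


def sparsity_of(weights: Matrix) -> float:
    """Fraction of entries that are exactly zero."""
    total = sum(len(row) for row in weights)
    zeros = sum(1 for row in weights for w in row if w == 0.0)
    return zeros / total


if __name__ == "__main__":
    w = [[0.8, -0.05, 0.3], [-0.6, 0.01, -0.2]]
    pruned = magnitude_prune(w, 0.5)
    print(pruned)             # [[0.8, 0.0, 0.3], [-0.6, 0.0, 0.0]]
    print(sparsity_of(pruned))
```

Sorting by absolute value keeps the largest weights regardless of sign; in practice, pruning is applied per layer and the sparsity level is chosen by measuring quality on held-out data.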

FAQ: Frequently Asked Questions

Let's address some common questions regarding LLMs and device performance:

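1. What is the difference between Q4_K_M quantization and F16?

Quantization is a technique used to reduce the size and memory footprint of LLMs. Q4_K_M is a 4-bit "k-quant" format from llama.cpp: weights are grouped into blocks that share scaling factors, most tensors are stored at about 4.5 bits per weight, and a few sensitive tensors are kept at higher (6-bit) precision. This cuts memory use to roughly a third of F16 and speeds up token generation, at the cost of a small loss in accuracy. F16 stores every weight as a 16-bit half-precision floating-point number; it is essentially the unquantized model and offers the higher fidelity of the two, at roughly three times the memory of Q4_K_M.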
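To make block quantization concrete, here is a simplified symmetric 4-bit block quantizer: each block of weights shares one floating-point scale, and each weight is stored as a small integer code. It is closer in spirit to llama.cpp's simpler Q4_0 format than to Q4_K_M, which adds super-blocks, per-sub-block scales and minimums, and mixed precision across tensors, so treat it as an illustration of where the small precision loss comes from, not as the actual Q4_K_M algorithm. The block size of 32 and the 16-bit scale are assumptions of this sketch.

```python
# Simplified symmetric 4-bit block quantization (illustrative, not Q4_K_M).

from typing import List, Tuple

BLOCK_SIZE = 32  # assumed block size for this sketch
Block = Tuple[float, List[int]]


def quantize_block(values: List[float]) -> Block:
    """Map one block of floats to a shared scale and integer codes in [-7, 7]."""
    amax = max(abs(v) for v in values)
    scale = amax / 7 if amax > 0 else 1.0
    codes = [max(-7, min(7, round(v / scale))) for v in values]
    return scale, codes


def dequantize_block(scale: float, codes: List[int]) -> List[float]:
    """Reconstruct approximate floats from the scale and integer codes."""
    return [scale * q for q in codes]


def quantize(values: List[float], block_size: int = BLOCK_SIZE) -> List[Block]:
    """Quantize a flat list of weights block by block."""
    return [quantize_block(values[i:i + block_size])
            for i in range(0, len(values), block_size)]


def dequantize(blocks: List[Block]) -> List[float]:
    """Concatenate the reconstructed values of every block."""
    out: List[float] = []
    for scale, codes in blocks:
        out.extend(dequantize_block(scale, codes))
    return out


def bits_per_weight(block_size: int = BLOCK_SIZE, scale_bits: int = 16) -> float:
    """Storage cost: 4-bit codes plus one scale per block (16-bit scale assumed)."""
    return (block_size * 4 + scale_bits) / block_size


if __name__ == "__main__":
    weights = [0.12, -0.5, 0.33, 0.07] * 8   # 32 toy weights = one block
    restored = dequantize(quantize(weights))
    max_err = max(abs(a - b) for a, b in zip(weights, restored))
    print(f"max error {max_err:.4f}, {bits_per_weight()} bits per weight")
```

Each reconstructed weight is off by at most half a quantization step, and the per-block scale adds a small storage overhead, which is why 4-bit formats end up slightly above 4 bits per weight.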

2. How does token generation speed impact my LLM application?

Token generation speed directly affects the responsiveness and user experience of your LLM application. Faster token generation leads to quicker response times and a more fluid interaction with the model.
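One way to translate tokens/second into user-facing latency is to divide a typical response length by the generation speed. The sketch below uses the L40S_48GB results from the tables above and assumes a 300-token reply (a hypothetical length chosen for illustration). It ignores prompt-processing time and network overhead, so it measures only the generation phase.

```python
# Generation-phase latency for a reply of a given length, using the
# L40S_48GB results from the benchmark tables. Prompt processing is excluded.

MEASURED_TPS = {
    "Llama3 8B Q4_K_M": 113.6,
    "Llama3 8B F16": 43.42,
    "Llama3 70B Q4_K_M": 15.31,
}

REPLY_TOKENS = 300  # hypothetical reply length


def generation_seconds(tokens: int, tokens_per_second: float) -> float:
    """Time to generate `tokens` new tokens at a constant decode rate."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return tokens / tokens_per_second


for config, tps in MEASURED_TPS.items():
    print(f"{config:18s} {generation_seconds(REPLY_TOKENS, tps):5.1f} s")
```

Under these assumptions a 300-token reply takes about 2.6 s with Llama3 8B Q4_K_M, about 6.9 s at F16 and about 19.6 s with Llama3 70B Q4_K_M. If the application streams output token by token, users can start reading before generation finishes, so total time matters most for long outputs and batch jobs.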

3. Can I run other LLMs on the L40S_48GB?

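The L40S_48GB is a powerful GPU capable of handling a wide range of LLMs. You can explore running other open-weight models, such as Llama 2, on this GPU (GPT-3's weights are not publicly released, so it cannot be run locally). Performance will vary significantly with each model's size, architecture and quantization level; as a rule of thumb, the weights must fit within the 48 GB of VRAM with room left for the KV cache.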

Keywords:

LLM, Llama3, Llama3 8B, Llama3 70B, NVIDIA L40S_48GB, Token Generation Speed, Quantization, Q4_K_M, F16, Local Model Deployment, AI, Deep Learning, NLP, GPU, Performance Benchmarks, Use Cases, Workarounds