Can I Run Llama3 8B on NVIDIA A40 48GB? Token Generation Speed Benchmarks

Introduction

The world of Large Language Models (LLMs) is exploding, and with it, the quest for faster and more efficient ways to run them locally. Whether you're a developer building AI-powered applications or a tech enthusiast exploring the frontiers of language processing, knowing how different LLMs perform on specific hardware is crucial.

In this deep dive, we'll focus on the NVIDIA A40 48GB GPU and its capabilities for running Llama3 8B, a powerful, open-source LLM that's making waves in the AI community. We'll examine token generation speed benchmarks, delve into different quantization techniques, and provide practical recommendations for choosing the right setup for your specific needs.

Performance Analysis: Token Generation Speed Benchmarks

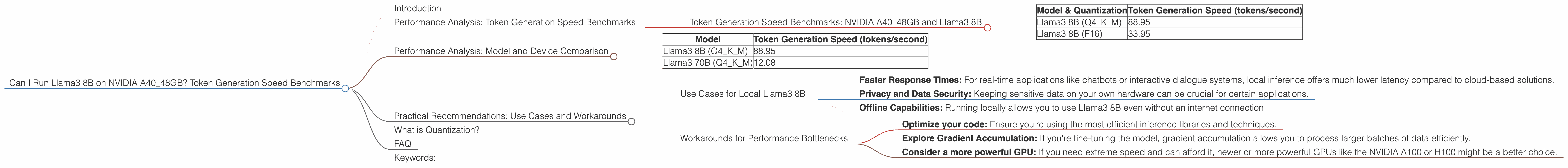

Token Generation Speed Benchmarks: NVIDIA A40 48GB and Llama3 8B

The following table shows the token generation speed, measured in tokens per second, for Llama3 8B running on the NVIDIA A40 48GB with different quantization strategies:

| Model & Quantization | Token Generation Speed (tokens/second) |
|---|---|
| Llama3 8B (Q4_K_M) | 88.95 |
| Llama3 8B (F16) | 33.95 |

Key Takeaways:

- Quantization Matters: The quantization format has a large impact on performance. Q4_K_M (llama.cpp's 4-bit "k-quant" format, medium variant) generates tokens about 2.6 times faster than F16 (16-bit half-precision floating point): 88.95 vs. 33.95 tokens per second.

- A40 48GB's Power: The A40 48GB handles Llama3 8B comfortably, generating roughly 89 tokens per second with Q4_K_M and about 34 tokens per second even at full F16 precision.
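Why is Q4_K_M so much faster? A common rule of thumb is that single-stream token generation is limited by memory bandwidth: every weight has to be read from GPU memory once per generated token, so the ceiling is roughly bandwidth divided by model size. The sketch below applies that rule, assuming the A40's published 696 GB/s memory bandwidth, about 8.03 billion parameters for Llama3 8B, and an approximate average of 4.9 bits per weight for Q4_K_M (an assumption, since the format mixes several quantization types). It ignores KV-cache reads and runtime overhead, so real speeds should land below the ceiling.

```python
# Rough upper bound on single-stream decode speed, assuming token generation
# is memory-bandwidth bound (all weights are read once per generated token).
A40_BANDWIDTH_GBPS = 696.0   # NVIDIA A40 memory bandwidth, GB/s
LLAMA3_8B_PARAMS = 8.03e9    # approximate parameter count

# Approximate average bits per weight (assumption for Q4_K_M)
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.9}

# Measured results from the benchmark table above
MEASURED_TOKENS_PER_S = {"F16": 33.95, "Q4_K_M": 88.95}


def weight_bytes(params: float, bits_per_weight: float) -> float:
    """Bytes needed to store the model weights."""
    return params * bits_per_weight / 8


def decode_ceiling(bandwidth_gbps: float, model_bytes: float) -> float:
    """Tokens per second if each token requires streaming all weights once."""
    return bandwidth_gbps * 1e9 / model_bytes


if __name__ == "__main__":
    for fmt, bits in BITS_PER_WEIGHT.items():
        size = weight_bytes(LLAMA3_8B_PARAMS, bits)
        ceiling = decode_ceiling(A40_BANDWIDTH_GBPS, size)
        measured = MEASURED_TOKENS_PER_S[fmt]
        print(f"{fmt:7s} weights ~{size / 1e9:5.1f} GB, "
              f"ceiling ~{ceiling:5.1f} tok/s, measured {measured} tok/s "
              f"({measured / ceiling:.0%} of ceiling)")
```

Under these assumptions the F16 ceiling is about 43 tokens per second (the measured 33.95 is roughly 78% of it) and the Q4_K_M ceiling is about 141 tokens per second (the measured 88.95 is roughly 63%). Shrinking the weights raises the ceiling almost in proportion, which is consistent with the speedup in the table.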

Performance Analysis: Model and Device Comparison

How does Llama3 8B compare with a larger model on the same GPU? There are no F16 results for Llama3 70B on the A40 48GB, which is expected: at 16 bits per weight, its roughly 70 billion parameters need about 140 GB for the weights alone, far more than the card's 48 GB. Q4_K_M results are available for both models:

| Model | Token Generation Speed (tokens/second) |
|---|---|
| Llama3 8B (Q4_K_M) | 88.95 |
| Llama3 70B (Q4_K_M) | 12.08 |

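This data shows that the larger Llama3 70B can still run on the A40 48GB when quantized to Q4_K_M, but it generates tokens about 7.4 times more slowly (12.08 vs. 88.95 tokens per second). With nearly nine times as many parameters, it has far more weight data to read from GPU memory for every token it produces.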
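Whether a model runs at all on a 48 GB card comes down to memory capacity. A back-of-the-envelope estimate multiplies the parameter count by the bits stored per weight. The sketch below assumes about 8.03 billion and 70.6 billion parameters, an average of roughly 4.9 bits per weight for Q4_K_M (an approximation), and a hypothetical 2 GB of headroom for the KV cache and runtime buffers; the function names are illustrative only.

```python
# Rough check: do the model weights fit in GPU memory?
# Parameter counts and average bits per weight are approximations (assumptions),
# and the default headroom for KV cache and runtime buffers is hypothetical.
PARAMS = {"Llama3 8B": 8.03e9, "Llama3 70B": 70.6e9}
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.9}
A40_VRAM_GB = 48.0


def weights_gb(params: float, bits_per_weight: float) -> float:
    """Approximate size of the weights in (decimal) gigabytes."""
    return params * bits_per_weight / 8 / 1e9


def fits_in_vram(params: float, bits_per_weight: float,
                 vram_gb: float, headroom_gb: float = 2.0) -> bool:
    """True if the weights plus a fixed headroom fit in the given VRAM."""
    return weights_gb(params, bits_per_weight) + headroom_gb <= vram_gb


if __name__ == "__main__":
    for model, params in PARAMS.items():
        for fmt, bits in BITS_PER_WEIGHT.items():
            size = weights_gb(params, bits)
            ok = fits_in_vram(params, bits, A40_VRAM_GB)
            verdict = "fits" if ok else "does not fit"
            print(f"{model:10s} {fmt:7s} ~{size:6.1f} GB -> {verdict} "
                  f"in {A40_VRAM_GB:.0f} GB")
```

By this estimate, Llama3 70B needs about 141 GB in F16 but only about 43 GB in Q4_K_M, which is why the 4-bit build is the one that runs on the A40 48GB. Long contexts enlarge the KV cache, so the headroom value should grow accordingly.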

Practical Recommendations: Use Cases and Workarounds

Use Cases for Local Llama3 8B

Running Llama3 8B locally gives you several advantages, especially when you need:

- Faster Response Times: For real-time applications like chatbots or interactive dialogue systems, local inference avoids network round trips and shared-service queues, which can reduce latency compared to cloud-based solutions.

- Privacy and Data Security: Keeping sensitive data on your own hardware can be crucial for certain applications.

- Offline Capabilities: Running locally allows you to use Llama3 8B even without an internet connection.

Workarounds for Performance Bottlenecks

If you find that the performance of Llama3 8B on your A40 48GB is still not meeting your requirements, here are some options:

- Optimize your code: Use an efficient inference library and make sure the entire model is loaded onto the GPU; any layers that fall back to the CPU slow generation considerably.

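- Explore Gradient Accumulation: This applies only to fine-tuning, not to token generation speed. Gradient accumulation sums gradients over several small micro-batches before each optimizer step, letting you train with a larger effective batch size than fits in GPU memory at once.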

- Consider a more powerful GPU: If you need more speed and can afford it, GPUs such as the NVIDIA A100 or H100 offer substantially higher memory bandwidth, which directly benefits token generation.
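Gradient accumulation only matters when training or fine-tuning. The minimal sketch below, in plain Python with a hypothetical one-parameter model y = w * x and mean-squared-error loss, shows the bookkeeping: gradients from equal-sized micro-batches are averaged before a single parameter update, giving the same gradient as processing the whole batch at once. Real fine-tuning frameworks provide their own mechanisms for this; the function names here are illustrative only.

```python
# Minimal gradient accumulation sketch for a hypothetical 1-parameter model
# y = w * x with mean-squared-error loss.


def grad_mse(w, xs, ys):
    """Gradient d/dw of mean((w*x - y)^2) over one batch."""
    n = len(xs)
    return sum(2 * (w * x - y) * x for x, y in zip(xs, ys)) / n


def accumulated_step(w, xs, ys, micro_batch_size, lr):
    """One optimizer step using gradients accumulated over micro-batches."""
    grad_sum = 0.0
    num_micro = 0
    for start in range(0, len(xs), micro_batch_size):
        mx = xs[start:start + micro_batch_size]
        my = ys[start:start + micro_batch_size]
        # Only one micro-batch needs to be processed at a time.
        grad_sum += grad_mse(w, mx, my)
        num_micro += 1
    # Averaging equals the full-batch gradient when micro-batches are equal-sized.
    grad = grad_sum / num_micro
    return w - lr * grad, grad


if __name__ == "__main__":
    xs = [1.0, 2.0, 3.0, 4.0]
    ys = [2.0, 4.0, 6.0, 8.0]
    new_w, grad = accumulated_step(0.0, xs, ys, micro_batch_size=2, lr=0.01)
    print(grad, grad_mse(0.0, xs, ys), new_w)
```

Here the two micro-batch gradients (-10 and -50) average to -30, the same as the full-batch gradient, so a single step with a learning rate of 0.01 moves w from 0 to 0.3. The memory saving comes from never holding all four examples' activations at once.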

What is Quantization?

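Quantization means storing each model weight with fewer bits. An F16 model keeps every weight as a 16-bit floating-point number; a 4-bit format such as Q4_K_M instead stores small integers together with a scaling factor shared by each block of weights, a bit like rounding every number in a huge table to fewer digits and noting a common multiplier for each row. This shrinks the model to roughly a third of its F16 size, and because token generation is largely limited by how quickly weights can be read from GPU memory, a smaller model also generates tokens faster. The trade-off is some loss of precision, which can slightly reduce output quality.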
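To make the idea concrete, here is a simplified sketch of block-wise 4-bit quantization in Python: each block of weights becomes small signed integers plus one floating-point scale. It rests on simplifying assumptions (symmetric absmax scaling, a hypothetical default block size of 32) and is not llama.cpp's actual Q4_K_M layout, which is more elaborate; treat it as the general principle only.

```python
# Simplified block-wise 4-bit quantization (symmetric, absmax scaling).
# NOT the real Q4_K_M layout; it only illustrates "small integers + per-block scale".


def quantize_block(values, bits=4):
    """Map a block of floats to signed integer codes plus one scale."""
    qmax = 2 ** (bits - 1) - 1          # 7 for 4 bits: codes in [-7, 7]
    absmax = max(abs(v) for v in values)
    scale = absmax / qmax if absmax > 0 else 1.0
    codes = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return scale, codes


def dequantize_block(scale, codes):
    """Reconstruct approximate floats from codes and the block scale."""
    return [c * scale for c in codes]


def quantize(values, block_size=32, bits=4):
    """Split values into blocks and quantize each block independently."""
    return [quantize_block(values[i:i + block_size], bits)
            for i in range(0, len(values), block_size)]


def dequantize(blocks):
    """Concatenate the dequantized blocks back into one list."""
    out = []
    for scale, codes in blocks:
        out.extend(dequantize_block(scale, codes))
    return out


if __name__ == "__main__":
    weights = [0.12, -0.5, 0.33, 0.7, -0.05, 0.0, 0.21, -0.68]
    blocks = quantize(weights, block_size=4)
    print(blocks)
    print(dequantize(blocks))
```

Each reconstructed value is off by at most half its block's scale. If the scale is stored as a 16-bit float, a 32-value block costs 32 × 4 + 16 = 144 bits, or 4.5 bits per weight instead of 16, which is why 4-bit formats average slightly more than 4 bits per weight.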

FAQ

Q: Is Llama3 8B the best model for my use case?

A: The best model depends on your specific needs. Consider factors like the complexity of the task, desired accuracy, and available resources.

Q: Can I run Llama3 8B on a smaller GPU?

A: It might be possible, but performance will depend on the GPU's memory capacity and processing power. The weights alone take about 16 GB in F16 but only about 5 GB in Q4_K_M, so on GPUs with less memory you will often need a 4-bit quantized version or a smaller model.

Q: What about other LLMs?

A: This article focused on Llama3 8B, but numerous other LLMs are out there. It's important to research the performance of different models on your desired hardware before making a decision.

Keywords:

Local LLMs, Llama3 8B, NVIDIA A40 48GB, GPU, Token Generation Speed, Quantization, Q4_K_M, F16, Performance, Benchmark, Inference, AI, Machine Learning, Deep Learning, Open Source, Development