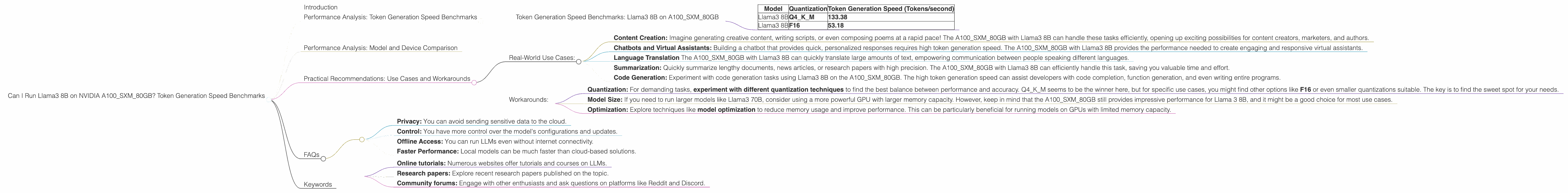

Can I Run Llama3 8B on NVIDIA A100 SXM 80GB? Token Generation Speed Benchmarks

Introduction

The world of Large Language Models (LLMs) is buzzing with excitement, and for good reason! These incredible AI systems are capable of understanding and generating human-like text, revolutionizing fields like natural language processing, content creation, and even coding. But a crucial question arises: how do these powerful LLMs perform in real-world scenarios, especially when running locally on specific hardware?

This deep dive focuses on the NVIDIA A100 SXM 80GB GPU and how well it handles the Llama3 8B model. We'll explore the token generation speed using llama.cpp, a popular open-source library for running LLMs locally. Imagine Llama3 8B as a super-smart parrot, and token generation speed as the number of tokens (roughly, word pieces) it can produce per second. The faster the token generation, the faster Llama3 8B can churn out text, translate languages, or answer your questions.

Buckle up, because we're diving deep into the numbers and analyzing the performance of Llama3 8B on the A100 SXM 80GB, comparing different quantization methods, and exploring practical use cases.
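To make "tokens per second" concrete, here is a minimal sketch of how such a figure can be measured: time a generation call and divide the number of tokens produced by the elapsed time. The `generate` callable and the `fake_generate` stand-in are hypothetical placeholders, not part of llama.cpp; in practice you would wrap whichever llama.cpp binding or command you actually use.

```python
import time
from typing import Callable


def tokens_per_second(
    generate: Callable[[str, int], int], prompt: str, max_tokens: int
) -> float:
    """Time one generation call and return generated tokens per second.

    `generate` is a hypothetical callable that runs the model and returns
    the number of tokens it actually produced.
    """
    start = time.perf_counter()
    n_generated = generate(prompt, max_tokens)
    elapsed = time.perf_counter() - start
    return n_generated / elapsed


def fake_generate(prompt: str, max_tokens: int) -> int:
    """Stand-in for a real model so the sketch runs without a GPU."""
    time.sleep(0.05)  # pretend the model took 50 ms
    return max_tokens


rate = tokens_per_second(fake_generate, "Hello", 10)
print(f"{rate:.1f} tokens/s")
```

Timing only the generation call, and counting only generated tokens, keeps prompt processing out of the figure; real benchmarks usually report the two phases separately.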

Performance Analysis: Token Generation Speed Benchmarks

Token Generation Speed Benchmarks: Llama3 8B on A100 SXM 80GB

Let's get down to the nitty-gritty and see what numbers we're dealing with.

| Model | Quantization | Token Generation Speed (tokens/second) |
|---|---|---|
| Llama3 8B | Q4_K_M | 133.38 |
| Llama3 8B | F16 | 53.18 |

What do these numbers tell us?

- The Llama3 8B model with Q4_K_M quantization achieves a remarkable 133.38 tokens per second on the A100 SXM 80GB. That's like having a super-fast typist who can produce over 130 tokens every second! (A token is usually a word or a piece of a word, so the count in whole words is somewhat lower.)

- With F16 (16-bit floating point, which is the unquantized half-precision format rather than a quantization), the token generation speed drops significantly to 53.18 tokens per second. This is still a respectable speed, but the Q4_K_M version is about 2.5 times faster.
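The arithmetic behind that comparison can be checked in a few lines. This is only a sketch using the two figures from the table; the 500-token response length is an assumed example, not a measured workload.

```python
# Figures from the benchmark table (tokens/second).
q4_k_m_tps = 133.38
f16_tps = 53.18

# How many times faster the quantized model generates tokens.
speedup = q4_k_m_tps / f16_tps


def seconds_to_generate(n_tokens: int, tps: float) -> float:
    """Time needed to generate n_tokens at a steady tokens-per-second rate."""
    return n_tokens / tps


response_tokens = 500  # assumed response length, for illustration only

print(f"speedup: {speedup:.2f}x")
print(f"Q4_K_M: {seconds_to_generate(response_tokens, q4_k_m_tps):.1f} s")
print(f"F16:    {seconds_to_generate(response_tokens, f16_tps):.1f} s")
```

At these rates, a 500-token answer takes about 3.7 seconds with Q4_K_M versus about 9.4 seconds with F16.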

Quantization, the Secret Sauce:

Quantization is a technique that shrinks an LLM by storing its weights with fewer bits, without sacrificing too much accuracy. Imagine compressing a high-resolution photo without losing too much detail. In this case, Q4_K_M quantization gives a huge performance advantage, allowing the A100 SXM 80GB to churn through tokens at an impressive rate. This is mainly because the smaller data representation means far fewer bytes have to be read from GPU memory for each generated token.

Think of this as a sprinter carrying a lighter weight - they can run much faster! Quantization does the same for LLMs, making them move and process information much quicker!
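To illustrate the idea, here is a toy sketch of 4-bit block quantization: a group of weights shares one floating-point scale, and each weight is stored as a small integer. This is a generic symmetric scheme chosen for clarity, not llama.cpp's actual Q4_K_M layout, and the function names and sample weights are made up for the example.

```python
from typing import List, Tuple


def quantize_block(weights: List[float]) -> Tuple[float, List[int]]:
    """Map floats to signed 4-bit integers in [-8, 7] with one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    q = [max(-8, min(7, round(w / scale))) for w in weights]
    return scale, q


def dequantize_block(scale: float, q: List[int]) -> List[float]:
    """Recover approximate floats from the scale and the 4-bit integers."""
    return [scale * v for v in q]


# Made-up weights for one block.
block = [0.12, -0.53, 0.07, 0.91, -0.33, 0.48, -0.02, 0.26]

scale, q = quantize_block(block)
restored = dequantize_block(scale, q)
max_error = max(abs(a - b) for a, b in zip(block, restored))

print("quantized:", q)
print(f"largest rounding error: {max_error:.3f}")
```

Each weight now needs 4 bits instead of 16, plus a share of the per-block scale, and the rounding error stays within half a scale step. That small loss of detail is the "compression" in the photo analogy.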

Performance Analysis: Model and Device Comparison

Unfortunately, we lack benchmarks for other Llama models and devices, so a direct comparison isn't possible here. However, the results we have for the A100 SXM 80GB and Llama3 8B show a powerful combination.

Practical Recommendations: Use Cases and Workarounds

Real-World Use Cases:

- Content Creation: Imagine generating creative content, writing scripts, or even composing poems at a rapid pace! The A100 SXM 80GB with Llama3 8B can handle these tasks efficiently, opening up exciting possibilities for content creators, marketers, and authors.

- Chatbots and Virtual Assistants: Building a chatbot that provides quick, personalized responses requires high token generation speed. The A100 SXM 80GB with Llama3 8B provides the performance needed to create engaging and responsive virtual assistants.

- Language Translation: The A100 SXM 80GB with Llama3 8B can quickly translate large amounts of text, empowering communication between people speaking different languages.

- Summarization: Quickly summarize lengthy documents, news articles, or research papers. The A100 SXM 80GB with Llama3 8B can efficiently handle this task, saving you valuable time and effort.

- Code Generation: Experiment with code generation tasks using Llama3 8B on the A100 SXM 80GB. The high token generation speed can assist developers with code completion, function generation, and even writing entire programs.

Workarounds:

- Quantization: For demanding tasks, experiment with different quantization techniques to find the best balance between performance and accuracy. Q4_K_M is the clear winner on speed here, but for specific use cases you might prefer unquantized F16 when accuracy matters most, or even smaller quantizations when memory is tight. The key is to find the sweet spot for your needs.

- Model Size: If you need to run larger models like Llama3 70B, check the memory requirements first. At F16 (2 bytes per parameter) the weights of a 70B model alone come to roughly 140 GB, more than this GPU's 80 GB, so you would need quantization, multiple GPUs, or a GPU with larger memory capacity. Keep in mind that the A100 SXM 80GB still provides impressive performance for Llama3 8B, and it might be a good choice for most use cases.

- Optimization: Explore techniques like model optimization to reduce memory usage and improve performance. This can be particularly beneficial for running models on GPUs with limited memory capacity.
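A rough back-of-the-envelope check can tell you whether a model's weights are likely to fit before you download anything. The sketch below makes several assumptions: nominal parameter counts of 8 and 70 billion, an effective 4.5 bits per weight for a 4-bit scheme (real formats vary), and an arbitrary 20% of memory kept free for the KV cache and activations. The function names are made up for the example.

```python
def weights_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in gigabytes (10**9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


def fits(
    n_params: float,
    bits_per_weight: float,
    gpu_gb: float = 80.0,    # A100 SXM 80GB
    usable: float = 0.8,     # assumed share left after KV cache, activations
) -> bool:
    """Rough check: do the weights fit in the usable share of GPU memory?"""
    return weights_gb(n_params, bits_per_weight) <= gpu_gb * usable


# Nominal parameter counts and assumed bits per weight.
for name, n_params in [("Llama3 8B", 8e9), ("Llama3 70B", 70e9)]:
    for fmt, bits in [("F16", 16.0), ("4-bit (assumed 4.5 bpw)", 4.5)]:
        size = weights_gb(n_params, bits)
        verdict = "fits" if fits(n_params, bits) else "does not fit"
        print(f"{name} {fmt}: ~{size:.1f} GB, {verdict}")
```

Under these assumptions, Llama3 8B fits comfortably in either format, while Llama3 70B only fits on a single 80 GB card once it is quantized. Treat the result as a first filter; actual usage depends on context length and runtime overhead.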

FAQs

Q: How do I choose the right LLM model for my specific needs?

A: The choice of the LLM model depends on the task at hand and your hardware capabilities. For example, if you need a model for complex tasks like code generation, a larger model like Llama3 70B might be necessary. However, if you're working on tasks like simple chatbots or content generation, a smaller model like Llama3 8B might be sufficient.

Q: What are the benefits of using a local LLM model instead of cloud-based solutions?

A: Local LLM models offer several benefits, including:

- Privacy: You can avoid sending sensitive data to the cloud.

- Control: You have more control over the model's configurations and updates.

- Offline Access: You can run LLMs even without internet connectivity.

- Lower Latency: Local models avoid the network round trip to a cloud service, so responses can start sooner, although overall speed still depends on your hardware.

Q: Can I use the A100 SXM 80GB to run other LLMs?

A: The A100 SXM 80GB is a powerful GPU that can handle various LLMs, but it's important to check each model's resource requirements against the GPU's 80 GB of memory. For example, it may be able to handle larger models like Llama3 70B with the right optimization techniques and quantization levels.

Q: Can I run LLMs on my own computer?

A: Yes, you can run LLMs on your own computer, but you'll need a powerful GPU with sufficient memory capacity. Consider your hardware capabilities and the requirements of the LLM model you want to use.

Q: What are some good resources for learning more about LLMs?

A: There are various resources available to learn more about LLMs, including:

- Online tutorials: Numerous websites offer tutorials and courses on LLMs.

- Research papers: Explore recent research papers published on the topic.

- Community forums: Engage with other enthusiasts and ask questions on platforms like Reddit and Discord.

Keywords

NVIDIA A100 SXM 80GB, Llama3 8B, LLM, Large Language Model, Token Generation Speed, Quantization, Q4_K_M, F16, Performance, Benchmarks, Content Creation, Chatbots, Virtual Assistants, Language Translation, Summarization, Code Generation, Use Cases, Workarounds, GPU, Hardware, Optimization, Local Models, Cloud-Based Solutions.