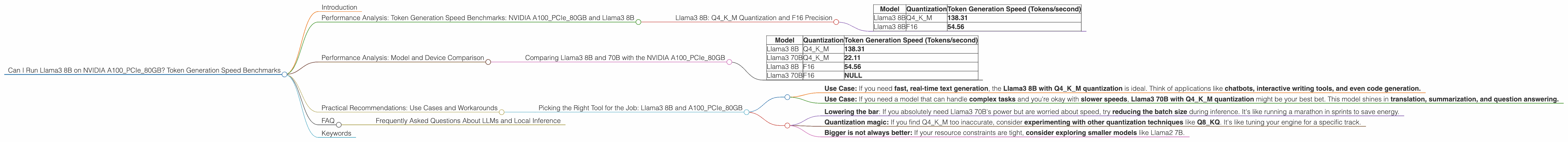

Can I Run Llama3 8B on NVIDIA A100 PCIe 80GB? Token Generation Speed Benchmarks

Introduction

The world of Large Language Models (LLMs) is exploding, with new models released almost daily. One of the most popular and talked-about models is Llama3, developed by Meta. But can you run it on your local machine, and if so, how fast is it?

This article dives deep into the performance of Llama3 8B on an NVIDIA A100 PCIe 80GB GPU. We'll analyze token generation speed benchmarks, compare performance across models, and provide practical recommendations for use cases. So, buckle up, and let's get geeky!

Performance Analysis: Token Generation Speed Benchmarks: NVIDIA A100 PCIe 80GB and Llama3 8B

Llama3 8B: Q4_K_M Quantization and F16 Precision

One of the most exciting aspects of LLMs is their ability to generate text. But how fast can they generate it? This is where token generation speed comes in.
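To make the metric concrete, here is a minimal sketch of how tokens per second can be measured. The `generate` callable and the `fake_generate` stand-in are hypothetical names used for illustration; they are not the harness behind the numbers in this article. Note that timing a whole call also includes prompt processing, so it slightly understates pure generation speed.

```python
import time
from typing import Callable


def measure_tokens_per_second(generate: Callable[[str, int], int],
                              prompt: str,
                              max_tokens: int) -> float:
    """Time one generation call and return generated tokens per second.

    `generate` is a hypothetical callable that runs a model and returns
    the number of tokens it actually produced.
    """
    start = time.perf_counter()
    produced = generate(prompt, max_tokens)
    elapsed = time.perf_counter() - start
    if elapsed <= 0:
        raise ValueError("elapsed time must be positive")
    return produced / elapsed


def fake_generate(prompt: str, max_tokens: int) -> int:
    """Stand-in for a real model: pretends each token takes about 1 ms."""
    time.sleep(0.001 * max_tokens)
    return max_tokens


if __name__ == "__main__":
    speed = measure_tokens_per_second(fake_generate, "Hello", 50)
    print(f"{speed:.1f} tokens/second")
```

Swapping `fake_generate` for a function that calls a real model gives a rough benchmark. Averaging several runs makes the number more stable.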

We looked at the performance of Llama3 8B on the A100 PCIe 80GB GPU, focusing on two precision settings:

- Q4_K_M: This quantization technique stores weights using roughly 4 bits each, which significantly reduces the memory footprint and speeds up inference.

- F16: This is the unquantized baseline, storing each weight as a 16-bit floating-point number.
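To show what "storing weights in about 4 bits" means, here is a toy round-to-nearest quantizer. It is a simplified illustration only: the real Q4_K_M format in llama.cpp uses a more elaborate block-wise scheme, and the function names and sample weights below are made up for this sketch.

```python
from typing import List, Tuple


def quantize_block(weights: List[float], bits: int = 4) -> Tuple[List[int], float]:
    """Map floats to small signed integers that share one scale factor."""
    qmax = 2 ** (bits - 1) - 1              # 7 for 4 bits
    largest = max(abs(w) for w in weights)
    scale = largest / qmax if largest > 0 else 1.0
    # Round each weight to the nearest integer step and clamp to the valid range.
    codes = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return codes, scale


def dequantize_block(codes: List[int], scale: float) -> List[float]:
    """Recover approximate float weights from the integer codes."""
    return [c * scale for c in codes]


if __name__ == "__main__":
    original = [0.12, -0.5, 0.33, 0.07]     # made-up sample weights
    codes, scale = quantize_block(original)
    restored = dequantize_block(codes, scale)
    worst = max(abs(a - b) for a, b in zip(original, restored))
    print("codes:", codes)
    print("worst rounding error:", round(worst, 4))
```

Each weight now needs only a small integer plus a shared scale, which is where the memory saving comes from. The rounding error is the accuracy cost.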

Here's how the A100 PCIe 80GB performed with Llama3 8B:

| Model | Quantization | Token Generation Speed (tokens/second) |
|---|---|---|
| Llama3 8B | Q4_K_M | 138.31 |
| Llama3 8B | F16 | 54.56 |

Analysis:

- Q4_K_M is the clear winner at 138.31 tokens/second, about 2.5 times the 54.56 tokens/second measured with F16.

- Think of it like this: Q4_K_M is like a car that sheds weight to go faster. The engine (the GPU) is the same, but there is far less data to move for every token.

- Quantization is like compression: It shrinks the model size and increases speed but can slightly compromise accuracy.
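The gap is easy to quantify from the two measured speeds. The short sketch below computes the speedup and the time spent per token. The dictionary keys name the 4-bit and F16 rows of the table, and the helper names are illustrative.

```python
# Measured token generation speeds for Llama3 8B (tokens/second).
SPEEDS = {"Q4_K_M": 138.31, "F16": 54.56}


def speedup(fast_tps: float, slow_tps: float) -> float:
    """How many times faster the first configuration generates tokens."""
    return fast_tps / slow_tps


def ms_per_token(tokens_per_second: float) -> float:
    """Average time spent on one token, in milliseconds."""
    return 1000.0 / tokens_per_second


if __name__ == "__main__":
    ratio = speedup(SPEEDS["Q4_K_M"], SPEEDS["F16"])
    print(f"Q4_K_M is {ratio:.2f}x faster than F16")
    for name, tps in SPEEDS.items():
        print(f"{name}: {ms_per_token(tps):.1f} ms per token")
```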

Performance Analysis: Model and Device Comparison

Comparing Llama3 8B and 70B with the NVIDIA A100 PCIe 80GB

Let's see how Llama3 8B compares to its bigger brother, Llama3 70B, on the same A100 PCIe 80GB GPU.

| Model | Quantization | Token Generation Speed (tokens/second) |
|---|---|---|
| Llama3 8B | Q4_K_M | 138.31 |
| Llama3 70B | Q4_K_M | 22.11 |
| Llama3 8B | F16 | 54.56 |
| Llama3 70B | F16 | N/A |

Analysis:

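- Llama3 8B is significantly faster than Llama3 70B: 138.31 versus 22.11 tokens/second with Q4_K_M, roughly 6 times faster.

- Bigger models are like heavier cars: They have more power but are also slower.

- We don't have an F16 result for Llama3 70B on the A100 PCIe 80GB, so the two models cannot be compared at that precision. A likely reason is memory: at 2 bytes per parameter, 70 billion F16 weights need about 140 GB, well over the card's 80 GB.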
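To sanity-check which configurations can fit on the card at all, here is a rough back-of-the-envelope estimate of weight memory. It rests on assumptions: parameter counts are rounded to 8 and 70 billion, 4.8 bits per weight is an assumed average for Q4_K_M, and the KV cache and activations are ignored, so real usage is higher.

```python
GPU_MEMORY_GB = 80.0  # A100 PCIe 80GB


def weight_memory_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in GB (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_weight / 8.0


def fits_on_gpu(params_billion: float, bits_per_weight: float,
                gpu_memory_gb: float = GPU_MEMORY_GB) -> bool:
    """True if the weights alone are smaller than the GPU memory."""
    return weight_memory_gb(params_billion, bits_per_weight) < gpu_memory_gb


if __name__ == "__main__":
    # (label, parameters in billions, assumed bits per weight)
    configs = [
        ("Llama3 8B F16", 8, 16),
        ("Llama3 8B Q4_K_M", 8, 4.8),
        ("Llama3 70B F16", 70, 16),
        ("Llama3 70B Q4_K_M", 70, 4.8),
    ]
    for label, params, bits in configs:
        size = weight_memory_gb(params, bits)
        verdict = "fits" if fits_on_gpu(params, bits) else "does not fit"
        print(f"{label}: about {size:.0f} GB, {verdict} in {GPU_MEMORY_GB:.0f} GB")
```

Under these assumptions, only the 70B model at F16 exceeds the card's memory, which is consistent with the missing entry in the table.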

Practical Recommendations: Use Cases and Workarounds

Picking the Right Tool for the Job: Llama3 8B and A100 PCIe 80GB

Now that we've seen the performance data, let's talk about how to leverage it for real-world use cases:

- Use Case: If you need fast, real-time text generation, Llama3 8B with Q4_K_M quantization is the best fit of the configurations tested. Think of applications like chatbots, interactive writing tools, and even code generation.

- Use Case: If you need a model that can handle complex tasks and you're okay with slower speeds, Llama3 70B with Q4_K_M quantization might be your best bet. This model shines in translation, summarization, and question answering.
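One way to turn these speeds into a decision is to ask how long a typical reply would take. The sketch below filters the measured configurations by a latency budget. The 300-token reply length and 6-second budget are hypothetical values chosen for illustration, so substitute your own.

```python
from typing import Dict, List, Tuple

# Measured speeds from this article (tokens/second).
SPEEDS_TPS: Dict[Tuple[str, str], float] = {
    ("Llama3 8B", "Q4_K_M"): 138.31,
    ("Llama3 8B", "F16"): 54.56,
    ("Llama3 70B", "Q4_K_M"): 22.11,
}


def reply_seconds(reply_tokens: int, tokens_per_second: float) -> float:
    """Time to generate a reply of the given length, ignoring prompt processing."""
    return reply_tokens / tokens_per_second


def configs_within_budget(reply_tokens: int,
                          budget_seconds: float) -> List[Tuple[str, str]]:
    """Return the configurations that finish a reply within the budget."""
    return [
        config
        for config, tps in SPEEDS_TPS.items()
        if reply_seconds(reply_tokens, tps) <= budget_seconds
    ]


if __name__ == "__main__":
    for config, tps in SPEEDS_TPS.items():
        print(config, f"{reply_seconds(300, tps):.1f} s for a 300-token reply")
    print("Within 6 s:", configs_within_budget(300, 6.0))
```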

What if your requirements don't fit neatly into the above? Here's how to navigate the challenges:

- Lowering the bar: If you absolutely need Llama3 70B's power but are worried about speed, try reducing the batch size during inference. A smaller batch lowers memory use and the latency of each individual request, at the cost of total throughput.

- Quantization magic: If you find Q4_K_M too inaccurate, consider experimenting with a higher-precision quantization such as Q8_0, which typically trades some speed and memory for better accuracy. It's like tuning your engine for a specific track.

- Bigger is not always better: If your resource constraints are tight, consider exploring smaller models like Llama2 7B.

FAQ

Frequently Asked Questions About LLMs and Local Inference

Q: What are LLMs?

A: Large Language Models (LLMs) are powerful artificial intelligence models trained on massive amounts of text data. They can generate text, translate languages, write different kinds of creative content, and answer your questions in an informative way.

Q: How do I run an LLM on my local machine?

A: You can use libraries like llama.cpp and transformers (from Hugging Face) to load and run LLMs locally. These libraries can leverage your CPU or GPU for faster inference.
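As a starting point, here is a minimal sketch using the `llama-cpp-python` bindings for llama.cpp. It assumes the package is installed with GPU support and that you have already downloaded a GGUF model file. The file path in the comment and the helper names are hypothetical.

```python
from typing import Any, Dict


def build_llama_kwargs(model_path: str, use_gpu: bool = True,
                       context_tokens: int = 2048) -> Dict[str, Any]:
    """Collect constructor arguments for llama_cpp.Llama."""
    return {
        "model_path": model_path,
        # -1 asks llama-cpp-python to offload all layers to the GPU; 0 keeps them on the CPU.
        "n_gpu_layers": -1 if use_gpu else 0,
        "n_ctx": context_tokens,
    }


def generate_text(model_path: str, prompt: str, max_tokens: int = 64) -> str:
    """Load the model and return the generated continuation of `prompt`."""
    # Imported lazily so this file loads even without the package installed.
    from llama_cpp import Llama

    llm = Llama(**build_llama_kwargs(model_path))
    result = llm(prompt, max_tokens=max_tokens)
    return result["choices"][0]["text"]


# Example call (hypothetical path, point it at a GGUF file you have):
# print(generate_text("models/llama3-8b.Q4_K_M.gguf",
#                     "Explain quantization in one sentence."))
```

Loading the model is the slow part, so a real application would create the `Llama` object once and reuse it across prompts.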

Q: What if my GPU is not fast enough?

A: Cloud-based LLMs like GPT-3 and LaMDA can handle more demanding tasks, although these services usually charge for usage.

Q: Will local LLMs replace cloud-based LLMs?

A: It's unlikely that local LLMs will completely replace cloud-based LLMs. Both options have their strengths and weaknesses. Local LLMs offer greater control and privacy but might be less powerful than cloud-based LLMs. The future of LLMs will likely be a hybrid approach, leveraging both local and cloud-based resources.

Keywords

llama3, LLM, local, GPU, NVIDIA, A100 PCIe 80GB, token generation speed, benchmarks, performance, quantization, Q4_K_M, F16, Llama3 8B, Llama3 70B, use cases, practical recommendations, inference, deep dive, geek, developer, AI, chatbot, writing tool, code generation, translation, summarization, question answering, resources, cloud-based, llama.cpp, transformers, GPT-3, LaMDA, hybrid.