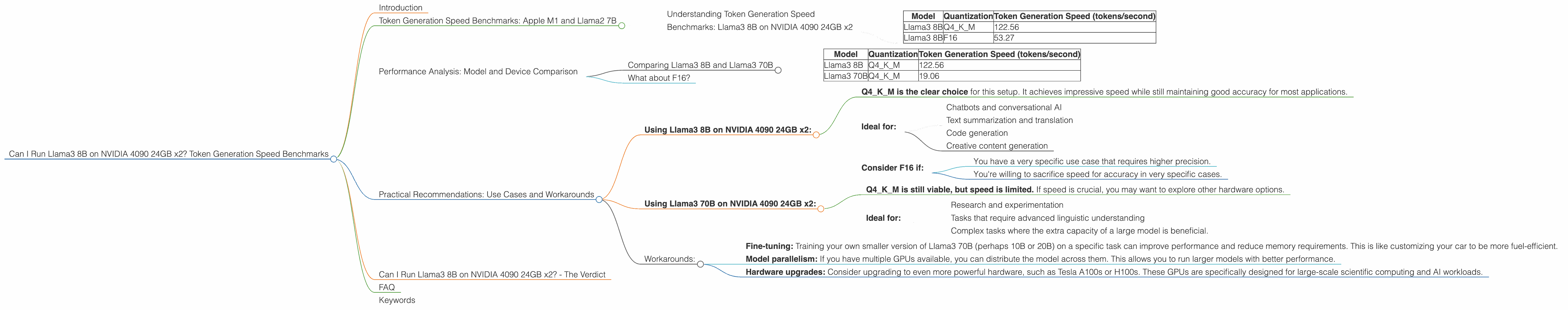

Can I Run Llama3 8B on NVIDIA 4090 24GB x2? Token Generation Speed Benchmarks

Introduction

The world of Large Language Models (LLMs) is exploding, and with it, the demand for powerful hardware to run them locally. If you're a developer or tech enthusiast, you might be wondering if your hardware is up to the task. This article delves into the performance of the Llama3 8B model on a dual NVIDIA 4090 24GB setup, a beastly configuration with 48 GB of combined VRAM. We'll analyze token generation speed benchmarks and give you recommendations on how to get the most out of this setup. Buckle up, it's about to get geeky!

Token Generation Speed Benchmarks: NVIDIA 4090 24GB x2 and Llama3 8B

Understanding Token Generation Speed

Think of token generation speed as the "words per minute" of your LLM. It is measured in tokens per second, where a token is a word or a piece of a word. The higher the speed, the faster your model can generate responses.
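As a rough illustration of how a tokens-per-second figure is obtained, the sketch below counts tokens from a stream and divides by the elapsed time. The `fake_generator` function is a hypothetical stand-in for a real model, not part of any library, and the benchmark numbers in this article were not produced with this code.

```python
import time
from typing import Callable, Iterable


def tokens_per_second(
    token_stream: Iterable[str],
    clock: Callable[[], float] = time.perf_counter,
) -> float:
    """Consume a stream of generated tokens and return tokens / elapsed seconds."""
    start = clock()
    count = 0
    for _ in token_stream:
        count += 1
    elapsed = clock() - start
    if elapsed <= 0:
        raise ValueError("elapsed time must be positive")
    return count / elapsed


def fake_generator(n_tokens: int, delay_s: float):
    """Hypothetical stand-in for a model: yields one token every delay_s seconds."""
    for i in range(n_tokens):
        time.sleep(delay_s)
        yield f"tok{i}"


if __name__ == "__main__":
    # 20 tokens at roughly 10 ms each; the result depends on the machine.
    speed = tokens_per_second(fake_generator(20, 0.01))
    print(f"{speed:.1f} tokens/second")
```

The `clock` parameter is only there so the timing source can be swapped out, for example to make the function deterministic in a test.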

Benchmarks: Llama3 8B on NVIDIA 4090 24GB x2

| Model | Quantization | Token Generation Speed (tokens/second) |
|---|---|---|
| Llama3 8B | Q4KM | 122.56 |
| Llama3 8B | F16 | 53.27 |

Quantization Explained:

- Q4KM: This means the model has been compressed using a technique called quantization. Imagine it like a digital diet for your LLM. Q4KM (written Q4_K_M in llama.cpp) is a 4-bit "k-quant" scheme: most weights are stored at roughly 4 bits each, and the M ("medium") variant keeps some of the more sensitive tensors at higher precision. Quantization reduces the memory footprint and usually increases generation speed, at a small cost in accuracy.

- F16: This means the model is using a more traditional 16-bit floating-point encoding. It's less compressed than Q4KM but may offer better accuracy in some cases.
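To make the memory difference concrete, here is a back-of-the-envelope estimate of the weight size for each format. The parameter count (about 8 billion) and the average of about 4.8 bits per weight for Q4KM are assumptions for illustration; real files differ slightly, and the estimate ignores the KV cache and other runtime overhead.

```python
def weight_size_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in gigabytes (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


# Assumed values, not measurements:
PARAMS_8B = 8.0e9        # approximate parameter count of Llama3 8B
F16_BITS = 16            # 16-bit floating point
Q4KM_BITS = 4.8          # rough average; some tensors stay at higher precision

f16_gb = weight_size_gb(PARAMS_8B, F16_BITS)
q4km_gb = weight_size_gb(PARAMS_8B, Q4KM_BITS)

if __name__ == "__main__":
    print(f"F16 weights:  ~{f16_gb:.1f} GB")
    print(f"Q4KM weights: ~{q4km_gb:.1f} GB")
    print(f"Size ratio:   ~{f16_gb / q4km_gb:.1f}x")
```

Under these assumptions the quantized weights are roughly a third of the F16 size, which is why quantization matters so much on consumer GPUs.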

Key Observations:

- The Q4KM quantized Llama3 8B model generates tokens about 2.3x faster than the F16 version (122.56 vs. 53.27 tokens/second). Generating each token requires reading the model weights from GPU memory, so a smaller quantized model means less data to move per token and faster inference.

- At 122.56 tokens per second, the Q4KM version is incredibly fast. To put this in perspective, that's about 7,350 tokens per minute, or very roughly 5,500 English words per minute at a rule-of-thumb 0.75 words per token, far faster than a human could ever type!
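The arithmetic behind these comparisons is simple enough to check yourself. The sketch below uses the two benchmark figures from the table; the 0.75 words-per-token ratio is a common rule of thumb for English text and is an assumption here, not something the benchmark measured.

```python
Q4KM_TPS = 122.56        # tokens/second, from the benchmark table
F16_TPS = 53.27          # tokens/second, from the benchmark table
WORDS_PER_TOKEN = 0.75   # assumption: rough average for English text


def per_minute(tokens_per_second: float) -> float:
    """Convert tokens/second to tokens/minute."""
    return tokens_per_second * 60


def approx_words_per_minute(
    tokens_per_second: float, words_per_token: float = WORDS_PER_TOKEN
) -> float:
    """Estimate words/minute from tokens/second using an assumed ratio."""
    return per_minute(tokens_per_second) * words_per_token


def speedup(fast_tps: float, slow_tps: float) -> float:
    """How many times faster the first configuration is than the second."""
    return fast_tps / slow_tps


if __name__ == "__main__":
    print(f"Tokens per minute (Q4KM): {per_minute(Q4KM_TPS):.0f}")
    print(f"Approx. words per minute: {approx_words_per_minute(Q4KM_TPS):.0f}")
    print(f"Q4KM vs. F16 speedup:     {speedup(Q4KM_TPS, F16_TPS):.2f}x")
```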

Performance Analysis: Model and Device Comparison

Comparing Llama3 8B and Llama3 70B

Why Compare with Llama3 70B?

The Llama3 70B model is significantly larger and more complex than the 8B version. It's like comparing a compact car to a semi-truck. Larger models often offer more advanced capabilities but require more resources to run.

Data:

| Model | Quantization | Token Generation Speed (tokens/second) |
|---|---|---|
| Llama3 8B | Q4KM | 122.56 |
| Llama3 70B | Q4KM | 19.06 |

Key Observations:

- The Llama3 70B model, despite using the same quantization scheme, runs about 6.4x slower than the 8B version (19.06 vs. 122.56 tokens/second). This is primarily due to its size: with roughly nine times as many parameters, far more weight data has to be read and computed for every generated token. Think of it like trying to drive a semi-truck through a narrow street compared to a compact car.
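One way to sanity-check these numbers is a memory-bandwidth estimate: if every generated token requires reading all the weights once, speed cannot exceed bandwidth divided by model size. The sketch below assumes about 1008 GB/s of memory bandwidth per RTX 4090 and approximate Q4KM weight sizes of 4.9 GB (8B) and 42.5 GB (70B). It also assumes the layers are split across the two GPUs so that, for a single request, the cards work one after another rather than in parallel. These are illustrative assumptions, not details confirmed by the benchmark.

```python
BANDWIDTH_GB_S = 1008.0   # assumed memory bandwidth of one RTX 4090

# Assumed approximate Q4KM weight sizes in GB (not measured here).
WEIGHTS_GB = {"Llama3 8B": 4.9, "Llama3 70B": 42.5}

# Measured tokens/second from the table above.
MEASURED_TPS = {"Llama3 8B": 122.56, "Llama3 70B": 19.06}


def bandwidth_bound_tps(weight_gb: float, bandwidth_gb_s: float) -> float:
    """Upper bound on tokens/second if each token reads all weights once."""
    return bandwidth_gb_s / weight_gb


if __name__ == "__main__":
    for name, size_gb in WEIGHTS_GB.items():
        bound = bandwidth_bound_tps(size_gb, BANDWIDTH_GB_S)
        share = MEASURED_TPS[name] / bound
        print(f"{name}: bound ~{bound:.0f} tok/s, "
              f"measured {MEASURED_TPS[name]} tok/s ({share:.0%} of bound)")
```

Under these assumptions both measured speeds land below the bound and in the same ballpark, which is consistent with model size being the main driver of the gap.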

What about F16?

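Unfortunately, we don't have data for the F16 version of the Llama3 70B model on this device, and it is unlikely to be practical here. At 2 bytes per weight, 70 billion parameters need about 140 GB for the weights alone, far more than the 48 GB of combined VRAM on two 4090s. The model would have to be partly offloaded to system RAM, which would make it much slower than the Q4KM version.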

Practical Recommendations: Use Cases and Workarounds

Using Llama3 8B on NVIDIA 4090 24GB x2:

- Q4KM is the clear choice for this setup. It achieves impressive speed while still maintaining good accuracy for most applications.

- Ideal for:
  - Chatbots and conversational AI
  - Text summarization and translation
  - Code generation
  - Creative content generation

- Consider F16 if:
  - You have a very specific use case that requires higher precision.
  - You're willing to sacrifice speed for accuracy in very specific cases.

Using Llama3 70B on NVIDIA 4090 24GB x2:

- Q4KM is still viable, but speed is limited. If speed is crucial, you may want to explore other hardware options.

- Ideal for:
  - Research and experimentation
  - Tasks that require advanced linguistic understanding
  - Complex tasks where the extra capacity of a large model is beneficial.

Workarounds:

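- Fine-tuning: Instead of running Llama3 70B, fine-tune a smaller model such as Llama3 8B on your specific task. This can narrow the quality gap for that task while keeping the smaller model's speed and memory requirements. This is like customizing your car to be more fuel-efficient.

- Model parallelism: If you have multiple GPUs available, you can distribute the model's layers across them. This lets you run models that don't fit in a single card's memory, which is what makes Llama3 70B Q4KM usable on two 24GB cards. It mainly adds capacity; it typically does not make a single response generate faster.

- Hardware upgrades: Consider upgrading to data-center GPUs such as the NVIDIA A100 or H100. These are designed for large-scale AI and scientific computing workloads and offer more memory per card (80 GB variants are available) and higher memory bandwidth.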
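To illustrate the model parallelism idea, here is a minimal sketch of how layers might be divided between GPUs in proportion to their memory. The `split_layers` helper is hypothetical and written for this article; real inference frameworks have their own splitting logic. The figure of 80 transformer layers for Llama3 70B is used as the example input.

```python
def split_layers(n_layers: int, gpu_memory_gb: list[float]) -> list[range]:
    """Assign contiguous blocks of layers to GPUs in proportion to memory.

    Hypothetical helper for illustration; returns one range of layer
    indices per GPU, covering 0..n_layers-1 without gaps or overlap.
    """
    total = sum(gpu_memory_gb)
    if total <= 0:
        raise ValueError("total GPU memory must be positive")
    assignments = []
    start = 0
    cumulative = 0.0
    for mem in gpu_memory_gb:
        cumulative += mem
        # Boundary is placed at this GPU's cumulative share of all memory.
        end = round(n_layers * cumulative / total)
        assignments.append(range(start, end))
        start = end
    return assignments


if __name__ == "__main__":
    # Two 24 GB cards and an 80-layer model.
    for gpu, layers in enumerate(split_layers(80, [24.0, 24.0])):
        print(f"GPU {gpu}: layers {layers.start}-{layers.stop - 1}")
```

With two identical cards the split is simply half the layers each; unequal memory sizes would shift the boundary toward the larger card.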

Can I Run Llama3 8B on NVIDIA 4090 24GB x2? - The Verdict

The answer is a resounding yes! You can definitely run Llama3 8B on a dual NVIDIA 4090 24GB setup and get excellent performance. Choosing Q4KM quantization will unlock the fastest speeds for most use cases. While running Llama3 70B is possible, you'll likely experience slower speeds.

FAQ

Q: What if I only have one NVIDIA 4090 24GB?

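A: You'd still be able to run Llama3 8B. The model fits on a single 24GB card in both Q4KM and F16 (about 16 GB of weights at F16), so you can likely expect speeds in the same range as the dual-GPU numbers above. The second GPU matters mostly for models too large for one card, such as Llama3 70B.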

Q: What about other LLMs?

A: This article focused on Llama3 models. If you're curious about other LLMs, it's best to consult benchmarks and performance data for your specific model and device.

Q: How can I make my LLM run even faster?

A: Besides the options mentioned above, consider these tips:

- Optimize your code: Use GPU-accelerated frameworks such as PyTorch or TensorFlow, and confirm that the model and its inputs actually run on the GPU rather than falling back to the CPU.

- Use a suitable batch size: Experiment with different batch sizes to find the best balance between throughput and memory usage.
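The batch size experiment can be sketched as a small sweep: try each candidate, skip the ones that would not fit in memory, and keep the one with the highest throughput. Everything below is hypothetical. `toy_throughput` and `toy_memory_gb` are made-up stand-ins for a real benchmark run and a real memory measurement, and the 24 GB limit simply mirrors one 4090.

```python
from typing import Callable, Iterable, Optional, Tuple


def pick_batch_size(
    candidates: Iterable[int],
    measure_tps: Callable[[int], float],
    memory_gb: Callable[[int], float],
    memory_limit_gb: float,
) -> Optional[Tuple[int, float]]:
    """Return (batch_size, tokens_per_second) for the fastest candidate that fits."""
    best = None
    for batch_size in candidates:
        if memory_gb(batch_size) > memory_limit_gb:
            continue  # would not fit in VRAM, skip it
        tps = measure_tps(batch_size)
        if best is None or tps > best[1]:
            best = (batch_size, tps)
    return best


def toy_throughput(batch_size: int) -> float:
    """Made-up curve: throughput grows with batch size, then saturates."""
    return 100.0 * batch_size / (1.0 + 0.1 * batch_size)


def toy_memory_gb(batch_size: int) -> float:
    """Made-up model: fixed weight cost plus a per-sequence cost."""
    return 6.0 + 0.5 * batch_size


if __name__ == "__main__":
    result = pick_batch_size([1, 2, 4, 8, 16, 32, 64], toy_throughput,
                             toy_memory_gb, memory_limit_gb=24.0)
    print(result)
```

In a real setup you would replace the two toy functions with an actual timed run and a reading of GPU memory usage, and keep in mind that larger batches raise total throughput rather than the speed of any single response.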

Keywords

Llama3, LLM, Large Language Model, NVIDIA 4090, GPU, Tokens per Second, Token Generation Speed, Quantization, Q4KM, F16, Performance, Benchmarks, Inference, LLM Models, Deep Dive, Device, Local, Generation Speed, Speed, Accuracy, Model Size, Use Cases, Workarounds, Fine-tuning, Model Parallelism, Hardware Upgrades, NVIDIA A100, H100.