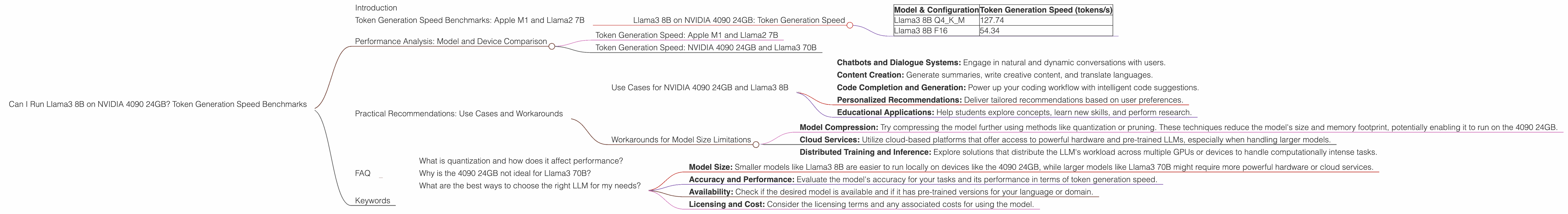

Can I Run Llama3 8B on NVIDIA 4090 24GB? Token Generation Speed Benchmarks

Introduction

Are you a developer or a tech enthusiast eager to explore the potential of large language models (LLMs) locally? Do you dream of running these powerful AI brains on your own hardware, without relying on cloud services? If so, the NVIDIA GeForce RTX 4090 24GB, a beast of a graphics card, might just be your new best friend.

This article delves into the world of local LLM performance, focusing on the mighty Llama3 8B model and its compatibility with the NVIDIA 4090 24GB. We'll be dissecting its performance in terms of token generation speed, comparing different configurations, and offering practical recommendations for use cases. By the end, you'll have a clearer picture of what's possible and what limitations you might encounter.

Token Generation Speed Benchmarks: NVIDIA 4090 24GB and Llama3 8B

Let's start with the heart of the matter: token generation speed. This metric measures how quickly an LLM produces output text, one token at a time. A token is a word or word fragment, much like the pieces you get when you break a sentence into words. Higher token generation speed translates to faster responses and a more enjoyable user experience.

We'll be focusing on the NVIDIA 4090 24GB and the Llama3 8B model in different configurations. The results are presented in tokens per second (tokens/s), which represents how many tokens the model generates every second.
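To make the metric concrete, here is a minimal sketch of how tokens/s can be measured: count the tokens a generator yields and divide by the elapsed wall-clock time. The `fake_generate` function is a hypothetical stand-in for a model's streaming output, not a real inference API, and the function names are chosen only for illustration.

```python
import time
from typing import Callable, Iterable, Tuple


def measure_tokens_per_second(generate: Callable[[], Iterable[str]]) -> Tuple[int, float]:
    """Count the tokens yielded by `generate` and divide by wall-clock time."""
    start = time.perf_counter()
    count = sum(1 for _ in generate())
    elapsed = time.perf_counter() - start
    return count, count / elapsed


def fake_generate():
    """Hypothetical stand-in for a model that streams tokens."""
    for word in "the quick brown fox jumps over the lazy dog".split():
        time.sleep(0.001)  # pretend each token takes about 1 ms to produce
        yield word


count, rate = measure_tokens_per_second(fake_generate)
print(f"{count} tokens at {rate:.1f} tokens/s")
```

With a real model, the same timing would wrap the actual generation call; the exact rate printed here depends on the machine and has no bearing on the benchmark figures in this article.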

Llama3 8B on NVIDIA 4090 24GB: Token Generation Speed

The table below showcases the measured token generation speed for the Llama3 8B model running on the NVIDIA 4090 24GB.

| Model & Configuration | Token Generation Speed (tokens/s) |
|---|---|
| Llama3 8B Q4KM | 127.74 |
| Llama3 8B F16 | 54.34 |

As the data shows, Llama3 8B in the Q4KM configuration (written Q4_K_M in llama.cpp's GGUF naming: a 4-bit "k-quant" in its medium-size variant) generates tokens about 2.35 times faster than the F16 (16-bit floating point) configuration. The difference comes from quantization, which shrinks the weights to roughly a third of their F16 size. Generating each token requires reading the weights from GPU memory, so a smaller model means less data to move per token, while accuracy stays close to the original.
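The two figures in the table can be turned into quantities that are easier to relate to everyday use. The sketch below derives the speedup, the time per token, and the wait for a reply of a given length. The 500-token reply length is an assumption picked for illustration, and the helper names are hypothetical.

```python
# Measured token generation speeds from the table (tokens/s).
speeds = {
    "Llama3 8B 4-bit": 127.74,
    "Llama3 8B F16": 54.34,
}


def ms_per_token(tokens_per_second: float) -> float:
    """Average time spent generating one token, in milliseconds."""
    return 1000.0 / tokens_per_second


def seconds_for_reply(tokens_per_second: float, reply_tokens: int) -> float:
    """Time to generate a reply of `reply_tokens` tokens, in seconds."""
    return reply_tokens / tokens_per_second


REPLY_TOKENS = 500  # assumed reply length, for illustration only

speedup = speeds["Llama3 8B 4-bit"] / speeds["Llama3 8B F16"]
print(f"4-bit speedup over F16: {speedup:.2f}x")

for name, tps in speeds.items():
    print(
        f"{name}: {ms_per_token(tps):.2f} ms/token, "
        f"{seconds_for_reply(tps, REPLY_TOKENS):.1f} s for {REPLY_TOKENS} tokens"
    )
```

Under that assumption, the quantized model finishes a 500-token reply in roughly 4 seconds, against roughly 9 seconds for F16.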

Performance Analysis: Model and Device Comparison

Comparing these figures to other LLMs and devices is essential to understand where the NVIDIA 4090 24GB and Llama3 8B stand in the landscape.

Token Generation Speed: Apple M1 and Llama2 7B

As another comparison point, the Llama2 7B model achieves roughly 100 tokens/s on the Apple M1 in its quantized form. The two models differ, so the comparison is only indicative, but the NVIDIA 4090 24GB reaches 127.74 tokens/s with the larger Llama3 8B in its 4-bit form. This suggests that the 4090 offers a performance advantage for models in this size class, making it a more suitable choice for local deployments.

Token Generation Speed: NVIDIA 4090 24GB and Llama3 70B

Unfortunately, no data is available for the performance of Llama3 70B on the NVIDIA 4090 24GB. The jump in model size is considerable: with nearly nine times as many parameters as Llama3 8B, its weights alone exceed the card's 24 GB of memory even at 4-bit precision. Larger models like Llama3 70B call for more capable hardware, such as a dedicated AI accelerator or multiple GPUs working in tandem.
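A rough back-of-the-envelope check shows why model size matters here. The sketch below estimates weight memory as parameters × bits per weight ÷ 8. It assumes nominal parameter counts (8 billion and 70 billion) and nominal bit widths; real quantized formats store extra scaling data, and inference also needs memory for the KV cache and activations, so actual requirements are somewhat higher.

```python
VRAM_GB = 24  # memory on the NVIDIA 4090 24GB


def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate memory for the weights alone, in gigabytes (10^9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


# Nominal parameter counts and bit widths (assumptions for illustration).
models = {"Llama3 8B": 8e9, "Llama3 70B": 70e9}
precisions = {"F16": 16, "4-bit": 4}

for model, n_params in models.items():
    for precision, bits in precisions.items():
        gb = weight_memory_gb(n_params, bits)
        verdict = "fits" if gb < VRAM_GB else "does not fit"
        print(f"{model} {precision}: ~{gb:.0f} GB of weights, {verdict} in {VRAM_GB} GB")
```

By this estimate both Llama3 8B configurations fit on the card, while Llama3 70B does not fit at either precision.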

Practical Recommendations: Use Cases and Workarounds

Use Cases for NVIDIA 4090 24GB and Llama3 8B

Based on the performance data, the NVIDIA 4090 24GB paired with the Llama3 8B model stands as an excellent solution for a variety of use cases:

- Chatbots and Dialogue Systems: Engage in natural and dynamic conversations with users.

- Content Creation: Generate summaries, write creative content, and translate languages.

- Code Completion and Generation: Power up your coding workflow with intelligent code suggestions.

- Personalized Recommendations: Deliver tailored recommendations based on user preferences.

- Educational Applications: Help students explore concepts, learn new skills, and perform research.

Workarounds for Model Size Limitations

While the NVIDIA 4090 24GB might not be ideal for running large LLMs like Llama3 70B, several options can be explored:

- Model Compression: Try compressing the model further using methods like quantization or pruning. These techniques reduce the model's size and memory footprint, potentially enabling it to run on the 4090 24GB.

- Cloud Services: Utilize cloud-based platforms that offer access to powerful hardware and pre-trained LLMs, especially when handling larger models.

- Distributed Training and Inference: Explore solutions that distribute the LLM's workload across multiple GPUs or devices to handle computationally intense tasks.

FAQ

What is quantization and how does it affect performance?

Quantization is a technique used to reduce the size of a model and its memory footprint. It involves converting the model's weights (numbers that determine the model's behavior) from high-precision floating-point numbers (like 32-bit or 16-bit) to lower-precision integers (like 8-bit or even 4-bit). This conversion can significantly reduce the model's size and make it run faster on hardware with limited memory and processing power. However, it might come at the cost of some accuracy.
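The idea can be illustrated with a deliberately simplified sketch: symmetric "absmax" quantization, which maps each weight to a small signed integer using a single shared scale. This is an assumption-laden toy example and not the scheme used by any particular LLM runtime; production formats typically quantize in small blocks, each with its own scale. The sample weights are made up.

```python
def quantize(weights, bits=4):
    """Map floats to signed integers in [-qmax, qmax] using one shared scale."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs else 1.0
    quantized = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float weights from the integers and the scale."""
    return [q * scale for q in quantized]


weights = [0.12, -0.53, 0.98, -0.05, 0.31]  # made-up example values
q, scale = quantize(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("integers:", q)
print("restored:", [round(r, 2) for r in restored])
print(f"largest rounding error: {max_error:.3f}")
```

Each weight now needs only 4 bits plus a share of the scale, and the rounding error is bounded by half the scale. That small error is the accuracy cost mentioned above.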

Why is the 4090 24GB not ideal for Llama3 70B?

While the NVIDIA 4090 24GB is a powerful graphics card, the Llama3 70B model is too large for its memory. At 16-bit precision, 70 billion weights take about 140 GB, and even at 4 bits they need roughly 35 GB or more, well beyond the card's 24 GB. The model therefore cannot be held entirely on the GPU, and any workaround that keeps part of it elsewhere is likely to slow inference considerably.

What are the best ways to choose the right LLM for my needs?

The choice of LLM depends on your specific use case and computational resources. Consider the following factors:

- Model Size: Smaller models like Llama3 8B are easier to run locally on devices like the 4090 24GB, while larger models like Llama3 70B might require more powerful hardware or cloud services.

- Accuracy and Performance: Evaluate the model's accuracy for your tasks and its performance in terms of token generation speed.

- Availability: Check if the desired model is available and if it has pre-trained versions for your language or domain.

- Licensing and Cost: Consider the licensing terms and any associated costs for using the model.

Keywords

Llama3 8B, NVIDIA 4090 24GB, token generation speed, LLM, local inference, performance benchmarks, quantization, model size, cloud services, use cases, practical recommendations, AI, machine learning