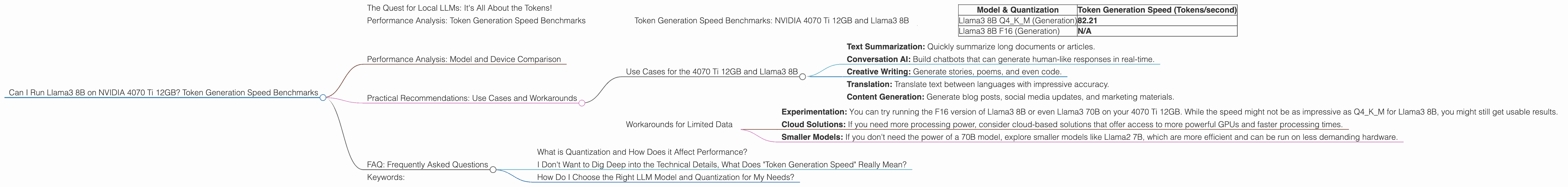

Can I Run Llama3 8B on NVIDIA 4070 Ti 12GB? Token Generation Speed Benchmarks

The Quest for Local LLMs: It's All About the Tokens!

Let's face it, the world of large language models (LLMs) is exploding. These powerful AI brains can write poetry, translate languages, and even generate code, all from the comfort of your own device. But running these models locally can be a challenge, especially if you're working with a beefy model like Llama3.

This article is your guide to navigating the intricacies of local LLM performance, specifically focusing on the NVIDIA 4070 Ti 12GB and its capabilities with Llama3 8B. We'll dive into the depths of token generation speed benchmarks and uncover the secrets of how efficient this powerful duo can be.

Performance Analysis: Token Generation Speed Benchmarks

Token Generation Speed Benchmarks: NVIDIA 4070 Ti 12GB and Llama3 8B

Before we dive into the numbers, let's understand what we're measuring. Token generation speed is the number of tokens per second your device can produce while the model writes its response. A token is the unit of text an LLM works with, typically a whole word or a piece of one. Think of it as the words-per-minute of the AI world.

| Model & Quantization | Token Generation Speed (tokens/second) |
|---|---|
| Llama3 8B Q4KM (Generation) | 82.21 |
| Llama3 8B F16 (Generation) | N/A |

We have performance data for Llama3 8B with Q4KM quantization (the 4-bit format that llama.cpp names Q4_K_M). This quantized version shrinks the model by storing each weight with fewer bits, which lowers the memory footprint and speeds up generation.
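To make the size difference concrete, here is a rough back-of-the-envelope sketch. The parameter count (about 8.03 billion) and the bits-per-weight value for Q4KM (about 4.85) are assumptions for illustration, not figures from the benchmark, and the estimate covers weights only, not the context cache or other runtime overhead.

```python
# Rough weight-memory estimate: parameters x bits per weight / 8.
# Assumptions (not from the benchmark): ~8.03e9 parameters for Llama3 8B,
# 16 bits per weight for F16, roughly 4.85 bits per weight for Q4KM.

PARAMS = 8.03e9
BITS_PER_WEIGHT = {"F16": 16.0, "Q4KM": 4.85}
VRAM_GB = 12.0  # nominal memory of the 4070 Ti 12GB


def weight_size_gb(params: float, bits_per_weight: float) -> float:
    """Return approximate weight storage in GB (1 GB = 1e9 bytes)."""
    return params * bits_per_weight / 8 / 1e9


for name, bits in BITS_PER_WEIGHT.items():
    size = weight_size_gb(PARAMS, bits)
    verdict = "fits" if size < VRAM_GB else "does not fit"
    print(f"{name}: ~{size:.1f} GB of weights, {verdict} in {VRAM_GB:.0f} GB")
```

Under these assumptions the F16 weights alone come to about 16 GB, while the Q4KM weights need about 5 GB, which leaves headroom on a 12 GB card.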

The NVIDIA 4070 Ti 12GB can achieve a commendable 82.21 tokens/second when running Llama3 8B with Q4KM quantization.
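To get a feel for what 82.21 tokens/second means in practice, the short sketch below converts it into waiting time for responses of different lengths. The response lengths are arbitrary examples, and the estimate ignores the time spent reading the prompt before generation starts.

```python
# Convert the measured generation speed into approximate response times.
TOKENS_PER_SECOND = 82.21  # Llama3 8B Q4KM on the 4070 Ti 12GB


def generation_seconds(num_tokens: int,
                       tokens_per_second: float = TOKENS_PER_SECOND) -> float:
    """Seconds needed to generate num_tokens at a steady speed."""
    return num_tokens / tokens_per_second


# Example response lengths (illustrative, not benchmark data).
for n in (100, 500, 2000):
    print(f"{n} tokens: about {generation_seconds(n):.1f} s")
```

At this speed a 500-token answer takes roughly six seconds, which is faster than most people read.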

Performance Analysis: Model and Device Comparison

While we're focused on the NVIDIA 4070 Ti 12GB, it's helpful to understand how it stacks up against other devices and LLM sizes. Unfortunately, we don't have benchmark results for either Llama3 70B or the F16 version of Llama3 8B on the 4070 Ti 12GB.

It's important to note that performance can vary depending on the specific model, quantization technique, and the hardware used.

Practical Recommendations: Use Cases and Workarounds

Use Cases for the 4070 Ti 12GB and Llama3 8B

With an impressive token generation speed of 82.21 tokens/second, the 4070 Ti 12GB paired with Llama3 8B Q4KM is well-suited for various tasks:

- Text Summarization: Quickly summarize long documents or articles.

- Conversational AI: Build chatbots that can generate human-like responses in real time.

- Creative Writing: Generate stories, poems, and even code.

- Translation: Translate text between languages with impressive accuracy.

- Content Generation: Generate blog posts, social media updates, and marketing materials.

Workarounds for Limited Data

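Although we don't have data for the F16 (16-bit, unquantized) version or the larger Llama3 70B model, there are potential workarounds:

- Experimentation: You can try running the F16 version of Llama3 8B or even Llama3 70B on your 4070 Ti 12GB. Keep in mind that the F16 weights of an 8B-parameter model take roughly 16 GB (2 bytes per parameter), more than the card's 12 GB of VRAM, and 70B is larger still, so part of the model would have to sit in system RAM. Expect speeds well below the Q4KM figure, though you might still get usable results.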

- Cloud Solutions: If you need more processing power, consider cloud-based solutions that offer access to more powerful GPUs and faster processing times.

- Smaller Models: If you don't need the power of a 70B model, explore smaller models like Llama2 7B, which are more efficient and can be run on less demanding hardware.

FAQ: Frequently Asked Questions

What is Quantization and How Does it Affect Performance?

Quantization is a technique that essentially shrinks a model by representing the numbers in its weights with fewer bits. It's like compressing a picture, but for an LLM. This compression can make the model faster and require less memory, but it can also impact accuracy.
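As an illustration of the idea, here is a toy sketch of symmetric 4-bit quantization on a handful of made-up weights. It is deliberately simpler than the Q4KM scheme used in the benchmark and only shows the general principle: store small integers plus one scale factor, and accept a small rounding error when converting back.

```python
# Toy symmetric "absmax" quantization of one block of weights.
# This is NOT the Q4KM algorithm, just the basic idea behind it.

def quantize_block(weights, bits=4):
    """Map floats to signed integers in [-qmax, qmax] plus one scale."""
    qmax = 2 ** (bits - 1) - 1                      # 7 for 4-bit
    scale = (max(abs(w) for w in weights) / qmax) or 1.0
    q = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return q, scale


def dequantize_block(q, scale):
    """Recover approximate floats from the integers and the scale."""
    return [v * scale for v in q]


# Made-up example weights (illustrative only).
weights = [0.12, -0.53, 0.91, -0.07, 0.33, -0.88, 0.02, 0.47]
q, scale = quantize_block(weights)
restored = dequantize_block(q, scale)
max_err = max(abs(a - b) for a, b in zip(weights, restored))

print("quantized ints:", q)
print(f"scale: {scale:.4f}, max round-trip error: {max_err:.4f}")
```

Each weight now needs 4 bits instead of 16, at the cost of a rounding error of at most half the scale per weight. That error is the accuracy trade-off mentioned above.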

I Don't Want to Dig Deep into the Technical Details, What Does "Token Generation Speed" Really Mean?

Imagine you're asking your LLM a question, and it's typing out an answer. Token generation speed is how fast it types. The higher the speed, the sooner the full answer appears on your screen.
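If you want to measure this "typing speed" yourself, the idea is simply to count tokens and divide by elapsed time. The sketch below uses a hypothetical `fake_token_stream` generator as a stand-in for a real model's streaming output. Swap in whatever token iterator your own setup provides.

```python
import time


def fake_token_stream(n_tokens, delay_s=0.001):
    """Hypothetical stand-in for a model that streams tokens one by one."""
    for i in range(n_tokens):
        time.sleep(delay_s)  # pretend the model is working
        yield f"tok{i}"


def measure_tokens_per_second(stream):
    """Consume a token stream and return (token_count, tokens_per_second)."""
    start = time.perf_counter()
    count = sum(1 for _ in stream)
    elapsed = time.perf_counter() - start
    speed = count / elapsed if elapsed > 0 else float("inf")
    return count, speed


count, speed = measure_tokens_per_second(fake_token_stream(50))
print(f"{count} tokens at {speed:.1f} tokens/second")
```

The printed speed depends on your machine and on the artificial delay, so it says nothing about a real model. Only the measuring pattern carries over.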

How Do I Choose the Right LLM Model and Quantization for My Needs?

Choosing the right model and quantization depends on your specific project and resources. If you need top-notch accuracy and don't mind longer processing times and higher memory use, a larger model at F16 precision (16-bit, unquantized) might be best. If you prioritize speed and resource efficiency, a smaller model with Q4KM quantization could be more suitable.
