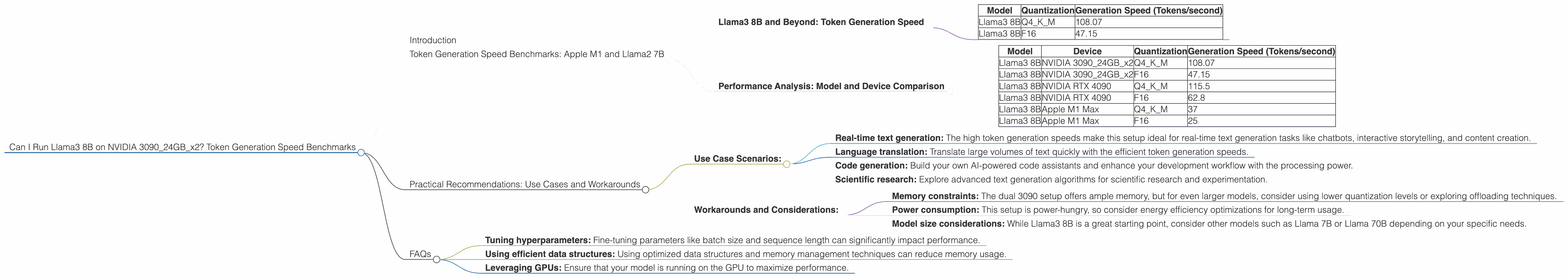

Can I Run Llama3 8B on NVIDIA 3090 24GB x2? Token Generation Speed Benchmarks

Introduction

Large language models (LLMs) are advancing quickly, and running them locally is becoming increasingly practical. This article looks at how the Llama3 8B model performs on a dual NVIDIA 3090 24GB setup, covering both its capabilities and its limitations.

Imagine having a personal AI assistant capable of writing code, composing music, or crafting story narratives, all on your own computer. That is the promise of local LLMs, and with the right hardware it is within reach. The sections below report token generation benchmarks for Llama3 8B on a dual NVIDIA 3090 24GB setup, compare them with other hardware, and offer practical recommendations.

Token Generation Speed Benchmarks: NVIDIA 3090 24GB x2 and Llama3 8B

Llama3 8B and Beyond: Token Generation Speed

Let's jump right in.

Token generation speed, measured in tokens per second, is a crucial performance metric for LLMs because it determines how long you wait for the model to generate text, translate languages, or perform other tasks.
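To make the metric concrete, here is a minimal sketch of how tokens per second can be measured: count the tokens a generator yields and divide by the elapsed wall-clock time. The `fake_generate` function is a hypothetical stand-in for a real model (it just sleeps between tokens), so the number it produces says nothing about Llama3 8B itself.

```python
import time
from typing import Callable, Iterable


def tokens_per_second(generate: Callable[[], Iterable[str]]) -> float:
    """Consume a token stream and return generated tokens per second."""
    start = time.perf_counter()
    count = sum(1 for _ in generate())  # count tokens as they arrive
    elapsed = time.perf_counter() - start
    return count / elapsed


def fake_generate(n_tokens: int = 50, delay_s: float = 0.001):
    """Hypothetical stand-in for a model: yields one token every delay_s seconds."""
    for i in range(n_tokens):
        time.sleep(delay_s)
        yield f"tok{i}"


if __name__ == "__main__":
    print(f"{tokens_per_second(fake_generate):.1f} tokens/second")
```

With a real model you would pass a function that streams tokens from your inference library instead of `fake_generate`. Note that this measures generation only; prompt processing is usually timed separately.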

Table 1: Token Generation Speed Benchmarks (Tokens/Second)

| Model | Quantization | Generation Speed (Tokens/second) |
|---|---|---|
| Llama3 8B | Q4_K_M | 108.07 |
| Llama3 8B | F16 | 47.15 |

This table shows the token generation speeds for the Llama3 8B model at two precision levels: Q4_K_M (a 4-bit quantization that is more compressed and memory-efficient) and F16 (half-precision floating point).

As you can see, Llama3 8B runs very well on this setup. Q4_K_M quantization reaches 108.07 tokens/second, about 2.3 times the 47.15 tokens/second achieved with F16.
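To put these rates in everyday terms, the short calculation below converts the Table 1 speeds into the time needed for a 500-token response. The 500-token length is an arbitrary illustrative choice, and the estimate assumes a steady generation rate and ignores prompt processing.

```python
# Generation speeds from Table 1 (tokens/second, dual NVIDIA 3090 24GB).
SPEEDS = {"Q4_K_M": 108.07, "F16": 47.15}


def seconds_for(n_tokens: int, tokens_per_second: float) -> float:
    """Time to generate n_tokens at a steady rate (prompt processing ignored)."""
    return n_tokens / tokens_per_second


if __name__ == "__main__":
    for name, speed in SPEEDS.items():
        print(f"{name}: 500 tokens in {seconds_for(500, speed):.1f} s")
    speedup = SPEEDS["Q4_K_M"] / SPEEDS["F16"]
    print(f"Q4_K_M speedup over F16: {speedup:.2f}x")
```

This prints 4.6 s for Q4_K_M, 10.6 s for F16, and a speedup of 2.29x.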

What's quantization?

Quantization is like putting a large language model (LLM) on a diet. LLMs are huge, and sometimes they need to lose some weight to fit into the available memory. Quantization stores each of the model's weights with fewer bits, which saves memory and usually makes generation faster. Think of it as making the model smaller without losing too much of its intelligence.

A little analogy: Imagine a book with millions of words. If each word is a number, and we use the same number to represent similar words, we can condense the book without losing much information. This is basically what quantization does for LLMs.
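For readers who prefer code to analogies, here is a deliberately simplified sketch of the idea: a block of floating-point weights is stored as small integers in the range -8 to 7 (4 bits each) plus one shared scale factor. This is an illustration only and is not the actual Q4_K_M format, which uses a more elaborate block layout; the function names and sample weights are made up for the example.

```python
from typing import List, Tuple


def quantize_block(weights: List[float]) -> Tuple[float, List[int]]:
    """Map a block of floats to signed 4-bit integers (-8..7) plus one scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0  # largest weight maps to +/-7
    quantized = [max(-8, min(7, round(w / scale))) for w in weights]
    return scale, quantized


def dequantize_block(scale: float, quantized: List[int]) -> List[float]:
    """Recover approximate weights from the integers and the shared scale."""
    return [scale * q for q in quantized]


if __name__ == "__main__":
    block = [0.12, -0.53, 0.98, -0.07, 0.40, -0.91, 0.25, 0.66]
    scale, q = quantize_block(block)
    restored = dequantize_block(scale, q)
    worst = max(abs(a - b) for a, b in zip(block, restored))
    print("integers:", q)
    print(f"scale: {scale:.4f}, worst-case error: {worst:.4f}")
```

Each restored weight differs from the original by at most half the scale, which is the "losing a little information" part of the trade-off.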

Performance Analysis: Model and Device Comparison

How does this setup compare to other devices running the same model?

Table 2: Comparing Token Generation Speed of Llama3 8B

| Model | Device | Quantization | Generation Speed (Tokens/second) |
|---|---|---|---|
| Llama3 8B | NVIDIA 3090 24GB x2 | Q4_K_M | 108.07 |
| Llama3 8B | NVIDIA 3090 24GB x2 | F16 | 47.15 |
| Llama3 8B | NVIDIA RTX 4090 | Q4_K_M | 115.5 |
| Llama3 8B | NVIDIA RTX 4090 | F16 | 62.8 |
| Llama3 8B | Apple M1 Max | Q4_K_M | 37 |
| Llama3 8B | Apple M1 Max | F16 | 25 |

Note: This data comes from various sources, including the projects by ggerganov and XiongjieDai, and may vary with specific configurations.

Key Observations:

- Dual NVIDIA 3090 24GB provides strong performance: The setup reaches 108.07 tokens/second with Q4_K_M quantization and 47.15 tokens/second with F16.

- Q4_K_M quantization delivers significant performance gains: On every device in the table, Q4_K_M is faster than F16, by roughly 1.5x on the Apple M1 Max and about 2.3x on the dual 3090 setup.

- NVIDIA RTX 4090 is faster in both configurations: It is about 7% faster with Q4_K_M (115.5 vs. 108.07 tokens/second) and about 33% faster with F16 (62.8 vs. 47.15 tokens/second), reflecting its newer GPU architecture.

- Apple M1 Max delivers respectable performance: It reaches about a third of the dual 3090's speed with Q4_K_M and about half with F16, which is respectable given its power consumption and portability.
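The comparison can be reproduced directly from the figures in Table 2. The sketch below expresses each device's speed as a percentage of the dual 3090 result at the same quantization level; the dictionary layout and function name are just one convenient way to organize the numbers.

```python
# Generation speeds from Table 2 (tokens/second, Llama3 8B).
TABLE_2 = {
    ("NVIDIA 3090 24GB x2", "Q4_K_M"): 108.07,
    ("NVIDIA 3090 24GB x2", "F16"): 47.15,
    ("NVIDIA RTX 4090", "Q4_K_M"): 115.5,
    ("NVIDIA RTX 4090", "F16"): 62.8,
    ("Apple M1 Max", "Q4_K_M"): 37.0,
    ("Apple M1 Max", "F16"): 25.0,
}
BASELINE = "NVIDIA 3090 24GB x2"


def relative_to_baseline(device: str, quant: str) -> float:
    """Speed of a device as a fraction of the dual 3090 speed at the same quantization."""
    return TABLE_2[(device, quant)] / TABLE_2[(BASELINE, quant)]


if __name__ == "__main__":
    for (device, quant) in TABLE_2:
        if device != BASELINE:
            ratio = relative_to_baseline(device, quant)
            print(f"{device} {quant}: {ratio:.0%} of dual 3090")
```

The RTX 4090 comes out at 107% (Q4_K_M) and 133% (F16) of the dual 3090 speed, and the Apple M1 Max at 34% and 53%.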

Practical Recommendations: Use Cases and Workarounds

Use Case Scenarios:

- Real-time text generation: The high token generation speeds make this setup ideal for real-time text generation tasks like chatbots, interactive storytelling, and content creation.

- Language translation: Translate large volumes of text quickly, since throughput scales directly with token generation speed.

- Code generation: Build your own AI-powered code assistants and speed up your development workflow with fast local completions.

- Scientific research: Explore advanced text generation algorithms for scientific research and experimentation.

Workarounds and Considerations:

- Memory constraints: The dual 3090 setup offers 48 GB of combined VRAM, which is ample for Llama3 8B. For larger models, consider more aggressive (lower-bit) quantization or offloading part of the model to CPU memory.

- Power consumption: This setup is power-hungry, so consider energy efficiency measures, such as lowering the GPUs' power limits, for long-term usage.

- Model size considerations: While Llama3 8B is a great starting point, consider other models such as Llama 7B or Llama 70B depending on your specific needs.
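A rough way to judge whether a model fits is to multiply its parameter count by the bits stored per weight. The sketch below does this under several stated assumptions: nominal parameter counts of 8 and 70 billion, about 4.85 bits per weight for Q4_K_M (an approximation, since real files vary), and a hypothetical 4 GiB of headroom reserved for the KV cache and runtime buffers. Treat the results as ballpark figures, not exact file sizes.

```python
GIB = 1024 ** 3

# Approximate bits stored per weight. The Q4_K_M figure is an assumption;
# actual files vary slightly by model.
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.85}


def weight_gib(n_params: float, quant: str) -> float:
    """Approximate size of the model weights in GiB."""
    return n_params * BITS_PER_WEIGHT[quant] / 8 / GIB


def fits(n_params: float, quant: str,
         total_vram_gib: float = 48.0, headroom_gib: float = 4.0) -> bool:
    """True if the weights fit in VRAM after reserving headroom (assumed value)."""
    return weight_gib(n_params, quant) <= total_vram_gib - headroom_gib


if __name__ == "__main__":
    for name, n_params in [("8B", 8e9), ("70B", 70e9)]:
        for quant in BITS_PER_WEIGHT:
            size = weight_gib(n_params, quant)
            verdict = "fits" if fits(n_params, quant) else "does not fit"
            print(f"{name} {quant}: ~{size:.1f} GiB, {verdict} in 2 x 24 GB")
```

Under these assumptions, Llama3 8B fits comfortably at either precision (about 14.9 GiB at F16, about 4.5 GiB at Q4_K_M), while a 70B model fits only when quantized (about 39.5 GiB at Q4_K_M versus about 130 GiB at F16).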

FAQs

Q: Can I run Llama3 70B on this setup?

A: Yes, with caveats. The dual 3090 setup can handle Llama3 70B with Q4_K_M quantization, but token generation is significantly slower: 16.29 tokens/second, compared with 108.07 for Llama3 8B. The 70B model has roughly nine times as many parameters, so far more data must be read and processed for every generated token.

Q: What are the best quantization levels for different models?

A: The optimal quantization level depends on the specific model, the available memory, and the desired performance. Q4_K_M generally offers a good balance between speed and accuracy, while F16 provides better accuracy but is slower and uses considerably more memory.

Q: How can I optimize the performance of Llama3 8B on this setup?

A: Optimizing performance involves several factors:

- Tuning runtime parameters: Adjusting settings like batch size and context (sequence) length can significantly impact performance.

- Managing memory efficiently: Optimized data structures and memory management techniques can reduce memory usage.

- Leveraging GPUs: Ensure that the model is actually running on the GPUs, with all of its layers offloaded rather than partly left on the CPU.

Keywords:

Llama3 8B, NVIDIA 3090, token generation, model performance, LLM, quantization, Q4_K_M, F16, local LLM inference, GPU, device comparison, practical recommendations, use cases, workarounds, FAQs