Can I Run Llama3 8B on NVIDIA 3090 24GB? Token Generation Speed Benchmarks

Introduction

The world of large language models (LLMs) is buzzing with excitement, and rightfully so. These AI marvels are capable of generating text, translating languages, writing different kinds of creative content, and answering your questions in an informative way, just like a human would. But there's a catch: running these models on your personal computer can be a real resource hog.

So, you're wondering if your trusty NVIDIA 3090_24GB can handle a beast like Llama3 8B? Absolutely! We're diving deep into the performance of this powerful GPU paired with Llama3 8B. We'll be looking at token generation speeds and comparing performance across different model quantization levels. Buckle up, because things are about to get geeky!

Performance Analysis: Token Generation Speed Benchmarks

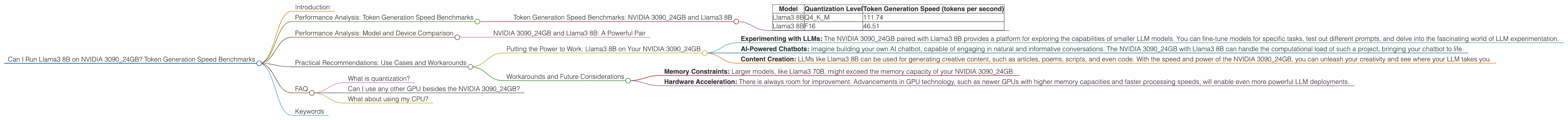

Token Generation Speed Benchmarks: NVIDIA 3090_24GB and Llama3 8B

Let's cut to the chase. This is what we're all here for, right? Token generation speed is the key metric in this game. It tells us how fast your LLM can produce words, bringing your creative prompts and conversational queries to life.
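To make the metric concrete, here is a minimal sketch of how a tokens-per-second figure can be computed: count the tokens a model streams out and divide by the elapsed time. This is not necessarily how the numbers in this article were measured. `fake_model` is a hypothetical stand-in for a real model's streaming output, and the injectable `clock` parameter is only there to make the function easy to test.

```python
import time


def tokens_per_second(token_stream, clock=time.perf_counter):
    """Consume a token iterator and return (token_count, tokens per second)."""
    start = clock()
    count = sum(1 for _ in token_stream)  # pull every token from the stream
    elapsed = clock() - start
    return count, (count / elapsed if elapsed > 0 else float("inf"))


def fake_model(num_tokens, delay_s=0.001):
    """Hypothetical stand-in for a real model: yields one token per delay."""
    for i in range(num_tokens):
        time.sleep(delay_s)
        yield f"tok{i}"


count, tps = tokens_per_second(fake_model(50))
print(f"{count} tokens at {tps:.1f} tokens/s")
```

With a real model you would pass its streaming generator in place of `fake_model(50)`.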

Here's a breakdown of the performance numbers we gathered:

| Model | Quantization Level | Token Generation Speed (tokens per second) |
|---|---|---|
| Llama3 8B | Q4_K_M | 111.74 |
| Llama3 8B | F16 | 46.51 |

What do these numbers mean?

- Q4_K_M Quantization: This is like using a smaller, more efficient version of the model. The weights are stored in roughly 4 bits each, which compresses the model to save space and boost speed. In this case, Llama3 8B with Q4_K_M quantization achieves an impressive 111.74 tokens per second. A token is often only part of a word, so the rate in words per second is somewhat lower, but that is still far faster than anyone can read.

- F16: This stores the weights as 16-bit floating-point numbers. It is usually treated as the uncompressed baseline rather than a quantized format. F16 results in a token generation speed of 46.51 tokens per second on the NVIDIA 3090_24GB. It's still a respectable speed, but it's not as blazing fast as Q4_K_M.
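To put the two speeds in perspective, the short sketch below works out how long a 500-token reply would take at each rate and how the two rates compare. The 0.75 words-per-token figure is an assumption (a common rule of thumb for English text) and varies with the tokenizer and the content; the 500-token reply length is likewise just an illustrative choice.

```python
# Measured speeds from the table above (tokens per second).
SPEEDS = {"Q4_K_M": 111.74, "F16": 46.51}

# Assumption: ~0.75 English words per token, a rough rule of thumb only.
WORDS_PER_TOKEN = 0.75


def seconds_to_generate(num_tokens: int, tokens_per_second: float) -> float:
    """Time to produce num_tokens at a steady generation rate."""
    return num_tokens / tokens_per_second


for name, tps in SPEEDS.items():
    secs = seconds_to_generate(500, tps)
    words = tps * WORDS_PER_TOKEN
    print(f"{name}: 500 tokens in {secs:.1f} s, roughly {words:.0f} words/s")

# Ratio of the two measured rates.
speedup = SPEEDS["Q4_K_M"] / SPEEDS["F16"]
print(f"Q4_K_M is {speedup:.2f}x faster than F16")
```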

Performance Analysis: Model and Device Comparison

NVIDIA 3090_24GB and Llama3 8B: A Powerful Pair

We've got a good picture of how Llama3 8B performs on the NVIDIA 3090_24GB. The Q4_K_M quantization level is the clear winner in terms of speed, delivering about 2.4 times the tokens per second of F16 (111.74 vs. 46.51).

But how does this compare to other configurations? Unfortunately, we don't have data for Llama3 70B on the 3090_24GB. There's a good reason for this. Running a 70B-parameter model is no joke! It's like trying to fit a giant elephant into a small car. Even at 4-bit quantization, the weights of a 70B model take up roughly 40 GB, well beyond the 24 GB of memory on this card.
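A back-of-the-envelope estimate shows where the memory wall sits. The sketch below multiplies parameter count by bits per weight to approximate the size of the weights alone. The parameter counts are nominal (8 and 70 billion), and the 4.85 bits per weight for Q4_K_M is an assumed approximate average, not an exact figure. Real usage is higher, since the KV cache and activations also need memory.

```python
def weight_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights alone, in GB (10^9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


VRAM_GB = 24  # memory on the 3090

# Assumptions: nominal parameter counts; ~4.85 bits/weight for Q4_K_M.
CONFIGS = [
    ("Llama3 8B F16", 8e9, 16),
    ("Llama3 8B Q4_K_M", 8e9, 4.85),
    ("Llama3 70B F16", 70e9, 16),
    ("Llama3 70B Q4_K_M", 70e9, 4.85),
]

for name, params, bits in CONFIGS:
    gb = weight_memory_gb(params, bits)
    verdict = "fits" if gb < VRAM_GB else "does not fit"
    print(f"{name}: ~{gb:.1f} GB of weights, {verdict} in {VRAM_GB} GB")
```

Under these assumptions both 8B variants fit on the card, while neither 70B variant does.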

Practical Recommendations: Use Cases and Workarounds

Putting the Power to Work: Llama3 8B on Your NVIDIA 3090_24GB

So, what can you actually do with Llama3 8B running on your NVIDIA 3090_24GB? Quite a lot, actually!

- Experimenting with LLMs: The NVIDIA 3090_24GB paired with Llama3 8B provides a platform for exploring the capabilities of smaller LLM models. You can fine-tune models for specific tasks, test out different prompts, and delve into the fascinating world of LLM experimentation.

- AI-Powered Chatbots: Imagine building your own AI chatbot, capable of engaging in natural and informative conversations. The NVIDIA 3090_24GB with Llama3 8B can handle the computational load of such a project, bringing your chatbot to life.

- Content Creation: LLMs like Llama3 8B can be used for generating creative content, such as articles, poems, scripts, and even code. With the speed and power of the NVIDIA 3090_24GB, you can unleash your creativity and see where your LLM takes you.

Workarounds and Future Considerations

While the NVIDIA 3090_24GB and Llama3 8B combination is impressive, it's not without its limitations.

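- Memory Constraints: Larger models, like Llama3 70B, exceed the 24 GB of memory on your NVIDIA 3090_24GB, even when quantized to 4 bits. A possible workaround is to keep part of the model in system RAM, but expect a steep drop in speed.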

- Hardware Acceleration: There is always room for improvement. Advancements in GPU technology, such as newer GPUs with higher memory capacities and faster processing speeds, will enable even more powerful LLM deployments.

FAQ

What is quantization?

Quantization is a technique for reducing the size and memory footprint of a deep learning model. It involves converting the model's weights (the numbers that determine the model's decisions) to a compressed format. Think of it like converting a high-resolution image to a lower-resolution one. The image might lose some detail, but it becomes smaller and easier to store and transmit. Similarly, quantization allows LLMs to run faster and more efficiently, but it might come at the cost of some accuracy.
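The idea can be illustrated with a toy example. The sketch below quantizes a small block of weights to signed 4-bit integers that share a single scale factor, then converts them back and measures the error. This is a simplified illustration only: real formats such as Q4_K_M use more elaborate block layouts, and the sample weights here are made up.

```python
def quantize_block(weights, bits=4):
    """Map floats to signed integers that share one scale factor."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit
    largest = max(abs(w) for w in weights)
    scale = largest / qmax if largest else 1.0
    # Round to the nearest step and clamp to the representable range.
    quantized = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize_block(quantized, scale):
    """Recover approximate floats from the integers and the scale."""
    return [q * scale for q in quantized]


# Made-up sample weights for illustration.
weights = [0.12, -0.53, 0.88, -0.07, 0.31, -0.95, 0.44, 0.02]
q, scale = quantize_block(weights)
restored = dequantize_block(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("quantized:", q)
print(f"scale: {scale:.4f}, max error: {max_error:.4f}")
```

Each weight now needs only 4 bits plus a share of the scale factor, and the rounding error per weight is at most half a step. That small loss of precision is the accuracy cost mentioned above.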

Can I use any other GPU besides the NVIDIA 3090_24GB?

Yes, you can use other GPUs, but the performance will vary. For example, a more powerful GPU like the NVIDIA RTX 4090 might deliver even faster speeds, while a lower-end GPU like the NVIDIA GTX 1080 might struggle to handle the demands of Llama3 8B.

What about using my CPU?

While your CPU can handle some LLMs, it's not the ideal option for larger models like Llama3 8B. GPUs are designed for parallel processing, which is crucial for running these demanding models, making them much more efficient than CPUs.

Keywords

NVIDIA 3090_24GB, Llama3 8B, Llama3 70B, LLM, large language model, token generation speed, quantization, Q4_K_M, F16, benchmarks, performance, GPU, machine learning, deep learning, AI, artificial intelligence, chatbot, content creation, creative writing, programming, development