Can I Run Llama3 8B on NVIDIA 3080 Ti 12GB? Token Generation Speed Benchmarks

Are you a developer itching to explore the world of local Large Language Models (LLMs)? You've probably heard about the Llama 3 series, and maybe you've already experimented with earlier models like Llama 2. But can your trusty NVIDIA 3080 Ti 12GB run Llama 3 8B at a usable speed? Let's dive deep and find out!

Introduction

Local LLMs are the superheroes of the AI world, bringing the power of language understanding and generation directly to your machine. No more relying on cloud services – you're in control! But choosing the right hardware for your LLM can be a bit like picking the right weapon for a battle. You need the firepower to handle the heavy lifting, but you also don't want to be stuck with a clunky, inefficient setup.

This article will focus specifically on the performance of the Llama 3 8B model on the NVIDIA 3080 Ti 12GB, a popular choice for machine learning enthusiasts. We'll analyze token generation speeds, compare performance with other models and configurations, and provide practical recommendations for getting the most out of your setup.

Performance Analysis: Token Generation Speed Benchmarks

Let's start with the heart of the matter: how fast can your NVIDIA 3080 Ti 12GB generate tokens with Llama 3 8B? This is a crucial metric for developers as it directly impacts the speed of your application or service. For example, if you're building a chatbot, faster token generation translates to a more responsive and engaging conversation.

The data we'll analyze comes from various sources, including the llama.cpp and GPU-Benchmarks-on-LLM-Inference repositories.

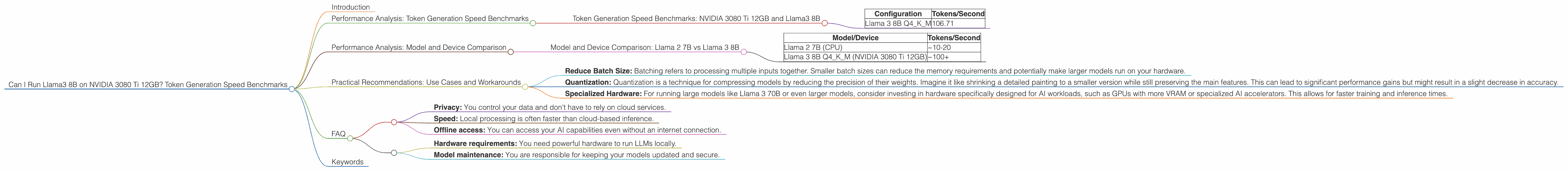

Token Generation Speed Benchmarks: NVIDIA 3080 Ti 12GB and Llama 3 8B

| Configuration | Tokens/Second |
|---|---|
| Llama 3 8B Q4_K_M | 106.71 |

As you can see, the NVIDIA 3080 Ti 12GB generates a respectable 106.71 tokens per second with the 4-bit quantized version of Llama 3 8B (Q4_K_M). This performance is a good starting point for experimenting with local LLM applications.
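To put that figure in practical terms, here is a minimal sketch that converts a generation rate into the approximate time needed to produce a reply. The function name and the reply lengths are illustrative assumptions, and the estimate ignores prompt processing time, which the benchmark figure above does not cover.

```python
def generation_time_seconds(num_tokens: int, tokens_per_second: float) -> float:
    """Approximate time to generate num_tokens at a steady rate."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return num_tokens / tokens_per_second


# Tokens/second for Llama 3 8B (4-bit quantized) on the 3080 Ti 12GB,
# taken from the benchmark table above.
RATE_3080_TI = 106.71

# Hypothetical reply lengths, chosen only for illustration.
for reply_tokens in (50, 250, 1000):
    seconds = generation_time_seconds(reply_tokens, RATE_3080_TI)
    print(f"{reply_tokens:>5} tokens -> {seconds:.1f} s")
```

At this rate a 250-token reply takes a little over two seconds of pure generation time.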

Important Note: The data for Llama 3 8B with F16 precision is currently unavailable. Stay tuned for updates as benchmarks continue to be released.
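A rough memory estimate helps put the F16 case in context. The sketch below assumes a nominal 8 billion parameters and an average of about 4.85 bits per weight for the 4-bit quantized format. Both numbers are approximations rather than measured values, and the estimate covers the weights only, not the KV cache or runtime overhead.

```python
def weight_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in gigabytes (10^9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


NUM_PARAMS_8B = 8.0e9  # nominal parameter count (assumption)
VRAM_GB = 12.0         # NVIDIA 3080 Ti 12GB

# F16 is exactly 16 bits per weight. The 4-bit figure is an approximate
# average and is an assumption, not a measured value.
formats = {"F16": 16.0, "Q4 (approx.)": 4.85}

for name, bits in formats.items():
    size = weight_memory_gb(NUM_PARAMS_8B, bits)
    verdict = "fits" if size < VRAM_GB else "does not fit"
    print(f"{name}: ~{size:.1f} GB of weights, {verdict} in {VRAM_GB:.0f} GB")
```

Under these assumptions the F16 weights alone come to about 16 GB, which exceeds the card's 12 GB, while the 4-bit version needs under 5 GB. That may be one reason F16 numbers are missing for this card.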

Performance Analysis: Model and Device Comparison

To understand how the NVIDIA 3080 Ti 12GB stacks up against other devices and models, let's compare its performance to some well-known scenarios:

Imagine you're running a chatbot using Llama 2 7B on a standard CPU. You might be able to achieve around 10-20 tokens per second. The NVIDIA 3080 Ti 12GB with Llama 3 8B is significantly faster, offering roughly 5 to 10 times the token generation speed!

Model and Device Comparison: Llama 2 7B vs Llama 3 8B

| Model/Device | Tokens/Second |
|---|---|
| Llama 2 7B (CPU) | ~10-20 |
| Llama 3 8B Q4_K_M (NVIDIA 3080 Ti 12GB) | ~100+ |
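The speedup implied by these numbers can be worked out directly. The snippet below is a small sketch: the function name is illustrative, and because the two rows use different models, the result is indicative rather than a like-for-like comparison.

```python
from typing import Tuple


def speedup_range(gpu_rate: float, cpu_low: float, cpu_high: float) -> Tuple[float, float]:
    """Return the (smallest, largest) speedup of gpu_rate over a CPU rate range."""
    if cpu_low <= 0 or cpu_high < cpu_low:
        raise ValueError("expected 0 < cpu_low <= cpu_high")
    return gpu_rate / cpu_high, gpu_rate / cpu_low


# 106.71 tokens/second on the 3080 Ti versus the ~10-20 tokens/second CPU estimate.
low, high = speedup_range(106.71, 10.0, 20.0)
print(f"Speedup: {low:.1f}x to {high:.1f}x")
```

With the figures above this works out to between about 5.3x and 10.7x.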

But what about the larger Llama 3 70B model? Sadly, the data for this combination is currently unavailable, so we can't directly measure how Llama 3 70B performs on the NVIDIA 3080 Ti 12GB. The arithmetic is not encouraging, though: even at roughly 4 bits per weight, 70 billion parameters need about 35 GB for the weights alone, close to three times the card's 12 GB of VRAM. It's therefore likely that the 3080 Ti 12GB would struggle to handle such a large model efficiently.

Practical Recommendations: Use Cases and Workarounds

Now that we have a good understanding of the performance capabilities of the NVIDIA 3080 Ti 12GB with Llama 3 8B, let's discuss practical recommendations for use cases and workarounds:

1. Smaller Models for Conversational AI: The 3080 Ti 12GB is a suitable match for smaller, more efficient LLMs like Llama 3 8B or even Llama 2 7B. This makes it well suited to developing conversational AI applications, such as chatbots, which require fast response times and smooth interactions.

2. Content Generation with Llama 3 8B: For content generation tasks, like writing blog posts or creating summaries, the 3080 Ti 12GB paired with Llama 3 8B can handle the workload efficiently. Remember to consider the size of your input text and the complexity of the desired output.

3. Workarounds for Larger Models: If you're determined to run larger models like Llama 3 70B, consider the following workarounds:

- Reduce Batch Size: Batching refers to processing multiple inputs together. A smaller batch size lowers the memory needed for activations and the KV cache, but it does not shrink the model weights, so on its own it only helps when a model is already close to fitting on your hardware.

- Quantization: Quantization is a technique for compressing models by reducing the precision of their weights. Imagine it like shrinking a detailed painting to a smaller version while still preserving the main features. This can lead to significant performance gains but might result in a slight decrease in accuracy.

- Specialized Hardware: For running large models like Llama 3 70B or even larger models, consider investing in hardware specifically designed for AI workloads, such as GPUs with more VRAM or specialized AI accelerators. This allows for faster training and inference times.
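To see how far these workarounds can stretch a 12 GB card, the sketch below computes the largest average number of bits per weight that would still fit. The parameter counts are nominal, and the 1.5 GB reserved for the KV cache and runtime overhead is a hypothetical figure chosen for illustration.

```python
def max_bits_per_weight(vram_gb: float, num_params: float, reserve_gb: float = 0.0) -> float:
    """Largest average bits per weight whose weights fit in the usable VRAM."""
    usable_bytes = (vram_gb - reserve_gb) * 1e9
    if usable_bytes <= 0:
        raise ValueError("reserve_gb must be smaller than vram_gb")
    return usable_bytes * 8 / num_params


VRAM_GB = 12.0    # NVIDIA 3080 Ti 12GB
RESERVE_GB = 1.5  # hypothetical allowance for KV cache and overhead

# Nominal parameter counts (assumptions).
for label, params in (("Llama 3 8B", 8.0e9), ("Llama 3 70B", 70.0e9)):
    bits = max_bits_per_weight(VRAM_GB, params, RESERVE_GB)
    print(f"{label}: up to ~{bits:.1f} bits per weight")
```

Under these assumptions the 8B model has room for about 10 bits per weight, while the 70B model would need to be squeezed to roughly 1.2 bits per weight, far below common 4-bit formats. Quantization alone is therefore unlikely to make Llama 3 70B fit entirely on this card.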

FAQ

Q: What is quantization and how does it affect the performance of LLMs?

A: Quantization is a technique for compressing models by reducing the precision of their weights. This is like converting a high-resolution image to a lower resolution version – you lose some detail but make the file size smaller. With LLMs, quantization can significantly improve performance by reducing memory usage and increasing inference speed. However, it might lead to a slight decrease in accuracy.
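As a concrete illustration, the following sketch quantizes a handful of made-up weights to 4-bit signed integers using a single shared scale. This is a deliberately simplified scheme (symmetric, round-to-nearest) and is not the block-wise method that real 4-bit model formats use. The function names and values are assumptions for the example.

```python
from typing import List, Tuple


def quantize(weights: List[float], bits: int = 4) -> Tuple[List[int], float]:
    """Map floats to signed integers with one shared scale (symmetric, round-to-nearest)."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    return [round(w / scale) for w in weights], scale


def dequantize(quantized: List[int], scale: float) -> List[float]:
    """Recover approximate float weights from the integers and the scale."""
    return [q * scale for q in quantized]


weights = [0.12, -0.53, 0.91, -0.07, 0.33]  # made-up example values
quantized, scale = quantize(weights, bits=4)
restored = dequantize(quantized, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("quantized:", quantized)
print("restored: ", [round(r, 3) for r in restored])
print("max error:", round(max_error, 4))
```

Each weight now needs only 4 bits instead of 16 or 32, at the cost of a rounding error of at most half the scale per weight. That error is the "lost detail" in the image analogy.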

Q: Is the NVIDIA 3080 Ti 12GB suitable for training LLMs?

A: While the NVIDIA 3080 Ti 12GB can be used for training smaller LLMs, it's not ideally suited for training large models like Llama 3 70B. For training large LLMs, GPUs with more VRAM and specialized hardware, like AI accelerators, offer better performance and efficiency.

Q: What are the advantages and disadvantages of using a local LLM?

A: Local LLMs offer several advantages, including:

- Privacy: You control your data and don't have to rely on cloud services.

- Speed: Local inference avoids network round trips, so responses can start sooner than with a cloud service, provided your hardware is fast enough for the model.

- Offline access: You can access your AI capabilities even without an internet connection.

However, they also have some disadvantages:

- Hardware requirements: You need powerful hardware to run LLMs locally.

- Model maintenance: You are responsible for keeping your models updated and secure.

Keywords

NVIDIA 3080 Ti 12GB, Llama 3 8B, local LLM, token generation speed, performance benchmarks, quantization, F16, Q4_K_M, GPU, AI, machine learning, content generation, chatbot, conversational AI.