Can I Run Llama3 8B on NVIDIA 3070 8GB? Token Generation Speed Benchmarks

Introduction

The world of Large Language Models (LLMs) is evolving at a breakneck pace. These models, which can generate human-like text, translate languages, and answer questions, are becoming increasingly accessible. But with this accessibility comes a question: how much hardware do you need to run one yourself?

In this article, we'll take a deep dive into the performance of the Llama3 8B model running on a popular mid-range graphics card, the NVIDIA 3070_8GB. We'll analyze the available token generation speed benchmark, look at what it tells us about quantization on an 8 GB card, and provide practical recommendations for using Llama3 8B on this specific GPU.

Think of it as a guide for the curious and the ambitious, a roadmap for those eager to explore the exciting world of local LLMs.

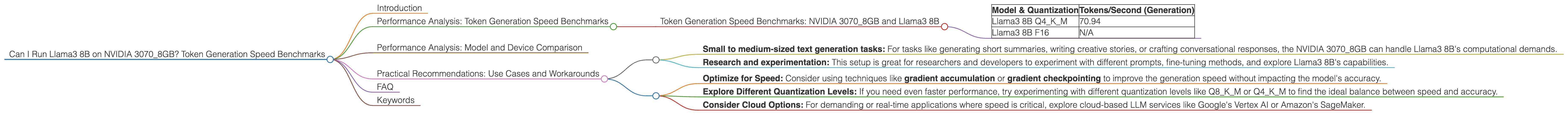

Performance Analysis: Token Generation Speed Benchmarks

Token Generation Speed Benchmarks: NVIDIA 3070_8GB and Llama3 8B

Let's dive straight into the numbers! The table below showcases the token generation speed of Llama3 8B running on the NVIDIA 3070_8GB, measured in tokens per second.

| Model & Quantization | Generation Speed (tokens/second) |
|---|---|
| Llama3 8B Q4_K_M | 70.94 |
| Llama3 8B F16 | N/A |

What do these numbers mean?

In simple terms, the higher the tokens per second, the faster the model can generate text.
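
To make the number concrete, here is a minimal sketch that converts the measured rate into approximate wait times. The response lengths are hypothetical examples, and the estimate assumes a steady generation rate and ignores the time spent processing the prompt.

```python
# Measured generation speed from the table above (Llama3 8B, Q4_K_M).
TOKENS_PER_SECOND = 70.94


def generation_time_seconds(num_tokens: int,
                            tokens_per_second: float = TOKENS_PER_SECOND) -> float:
    """Estimate how long generating `num_tokens` takes at a steady rate."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return num_tokens / tokens_per_second


if __name__ == "__main__":
    # Hypothetical response lengths, chosen only for illustration.
    for n in (100, 500, 2000):
        print(f"{n:>5} tokens: {generation_time_seconds(n):.1f} s")
```

At this rate a 500-token answer takes about 7 seconds, which is faster than most people read.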

Why is Llama3 8B F16 missing?

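The provided data doesn't contain a token generation speed for F16, the unquantized half-precision (16-bit floating point) version of Llama3 8B. The most likely reason is memory: at 2 bytes per weight, an 8B-parameter model needs roughly 16 GB for its weights alone, about twice the 8 GB of VRAM on this card.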
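
A back-of-the-envelope check helps explain why an F16 result would be hard to obtain on this card. The sketch below estimates weight memory as parameters times bits per weight. The parameter count and the Q4_K_M average bit width are assumptions for illustration, and the estimate leaves out the context cache and runtime overhead.

```python
# Rough weight-memory estimate: parameters * bits per weight / 8 bytes.
# All constants are approximations chosen for illustration.
PARAMS = 8.0e9   # assumed parameter count for an "8B" model
VRAM_GB = 8.0    # memory on the 3070

BITS_PER_WEIGHT = {
    "F16": 16.0,     # half precision: 16 bits per weight
    "Q4_K_M": 4.85,  # assumed average; the scheme mixes bit widths
}


def weight_memory_gb(params: float, bits_per_weight: float) -> float:
    """Memory needed for the weights alone, in GB (10^9 bytes)."""
    return params * bits_per_weight / 8 / 1e9


if __name__ == "__main__":
    for name, bits in BITS_PER_WEIGHT.items():
        gb = weight_memory_gb(PARAMS, bits)
        verdict = "fits" if gb < VRAM_GB else "does not fit"
        print(f"{name}: ~{gb:.1f} GB of weights, {verdict} in {VRAM_GB:.0f} GB")
```

Under these assumptions the F16 weights come to about 16 GB, twice the card's memory, while the Q4_K_M weights stay under 5 GB.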

Performance Analysis: Model and Device Comparison

Why is this comparison absent?

The provided information only contains data for the NVIDIA 3070_8GB. It doesn't include results for other models or devices, so a direct comparison isn't possible here.

Practical Recommendations: Use Cases and Workarounds

Llama3 8B: A Decent Match for the NVIDIA 3070_8GB

Based on the data, running Llama3 8B on the NVIDIA 3070_8GB with Q4_K_M quantization is possible, achieving a decent speed of 70.94 tokens per second. This performance is suitable for various use cases:

- Small to medium-sized text generation tasks: For tasks like generating short summaries, writing creative stories, or crafting conversational responses, the NVIDIA 3070_8GB can handle Llama3 8B's computational demands.

- Research and experimentation: This setup is great for researchers and developers who want to experiment with different prompts and explore Llama3 8B's capabilities.

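What about F16?

The lack of F16 data for Llama3 8B on the NVIDIA 3070_8GB leaves us with some unanswered questions. Could F16 deliver a performance boost? On this card it is unlikely. F16 stores weights at higher precision than Q4_K_M, not lower, so it preserves accuracy better, but it needs about 16 GB for the weights of an 8B model. That doesn't fit in 8 GB of VRAM, and offloading part of the model to system RAM typically slows generation considerably.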

Workarounds and Considerations

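- Optimize for Speed: Gradient accumulation and gradient checkpointing are training techniques and don't affect generation speed. For inference, make sure the whole model is offloaded to the GPU, and keep the context length small enough that the model and its cache stay within the 8 GB of VRAM.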

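- Explore Different Quantization Levels: Q4_K_M is the only level benchmarked here. Lower-bit levels such as Q3_K_M shrink the model further and may run faster at some cost in accuracy, while higher-bit levels such as Q5_K_M or Q8_0 retain more accuracy but need more memory, and Q8_0 may not fit entirely in 8 GB of VRAM for an 8B model. Measure each candidate to find the ideal balance between speed and accuracy.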

- Consider Cloud Options: For demanding or real-time applications where speed is critical, explore cloud-based LLM services like Google's Vertex AI or Amazon's SageMaker.
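
If you try these options yourself, it helps to measure throughput the same way each time. Below is a minimal sketch of a timing helper. The `generate` callable is hypothetical: in practice it would wrap whatever inference library you use and return the number of tokens produced. The `fake_generate` function is only a stand-in so the example runs without a model.

```python
import time
from typing import Callable


def measure_tokens_per_second(generate: Callable[[str, int], int],
                              prompt: str,
                              max_tokens: int = 128,
                              runs: int = 3) -> float:
    """Average throughput over several runs.

    `generate(prompt, max_tokens)` is a hypothetical callable that runs the
    model and returns the number of tokens it actually produced.
    """
    total_tokens = 0
    total_seconds = 0.0
    for _ in range(runs):
        start = time.perf_counter()
        total_tokens += generate(prompt, max_tokens)
        total_seconds += time.perf_counter() - start
    if total_seconds <= 0:
        raise RuntimeError("elapsed time too small to measure")
    return total_tokens / total_seconds


def fake_generate(prompt: str, max_tokens: int) -> int:
    """Stand-in for a real model: pretends each token takes 1 ms."""
    time.sleep(max_tokens * 0.001)
    return max_tokens


if __name__ == "__main__":
    rate = measure_tokens_per_second(fake_generate, "Hello", max_tokens=50)
    print(f"{rate:.1f} tokens/s")
```

Because the timer wraps the whole call, the result includes prompt processing, so short prompts give figures closest to pure generation speed.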

FAQ

Q: What is quantization?

A: Think of quantization as a diet for LLMs. It's a technique that reduces the size of the model by storing its weights with fewer bits each, for example around 4 bits instead of 16. This makes the model smaller and more efficient, which matters most on devices with limited memory like consumer GPUs.
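
To illustrate the idea, here is a deliberately simplified sketch: a block of weights is stored as one shared scale plus a small signed integer per weight. This is not the Q4_K_M scheme itself, which is more elaborate, and the weight values are made up for the example.

```python
def quantize_block(values: list[float], bits: int = 4) -> tuple[float, list[int]]:
    """Symmetric quantization: one float scale plus small signed integers."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit
    max_abs = max(abs(v) for v in values)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Round each value to the nearest integer step and clamp to the range.
    quantized = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return scale, quantized


def dequantize_block(scale: float, quantized: list[int]) -> list[float]:
    """Recover approximate floats from the integers and the shared scale."""
    return [q * scale for q in quantized]


if __name__ == "__main__":
    # Made-up weights, for illustration only.
    weights = [0.12, -0.70, 0.33, 0.05, -0.21, 0.64, -0.02, 0.48]
    scale, q = quantize_block(weights)
    restored = dequantize_block(scale, q)
    worst = max(abs(a - b) for a, b in zip(weights, restored))
    print("integers:", q)
    print(f"scale: {scale:.4f}, worst-case error: {worst:.4f}")
```

Each restored weight is off by at most half a step of the scale. That rounding error is the source of the small accuracy loss quantized models can show.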

Q: How does quantization impact the model's performance?

A: Quantization can significantly impact the speed of the model, making it faster to run. However, it can also affect the accuracy, sometimes leading to a slight decrease in the quality of the results.

Q: Is the NVIDIA 3070_8GB a good choice for running LLMs?

A: The NVIDIA 3070_8GB is a solid choice for running mid-sized LLMs like Llama3 8B, provided they are quantized to fit in its 8 GB of VRAM. At Q4_K_M it generates about 71 tokens per second. However, for larger models like Llama3 70B, you might need a beefier GPU or a cloud-based solution.

Keywords

NVIDIA 3070_8GB, Llama3 8B, token generation speed, LLM, GPU, Q4_K_M quantization, F16, performance analysis, benchmarks, practical recommendations, use cases, workarounds.