Can I Run Llama3 8B on Apple M2 Ultra? Token Generation Speed Benchmarks

Introduction

The world of large language models (LLMs) is buzzing. These AI powerhouses are revolutionizing everything from writing creative content to translating languages. But with their immense size, a common question arises: can my device handle it?

This article looks at how the Llama3 8B model performs on Apple M2 Ultra, the highest-end chip in Apple's M2 family. We explore token generation speed benchmarks across different quantization levels and highlight the key factors that affect performance, to help you choose the right model and configuration for your needs.

Think of an LLM as a very well-read parrot: it writes text one token (a word or piece of a word) at a time. To judge how responsive it will be, we need two measurements: how fast it reads your prompt and how fast it writes its reply. Comparing models like Llama3 8B on Apple M2 Ultra tells us how quickly each one can hold up its end of the conversation.

Performance Analysis: Token Generation Speed Benchmarks

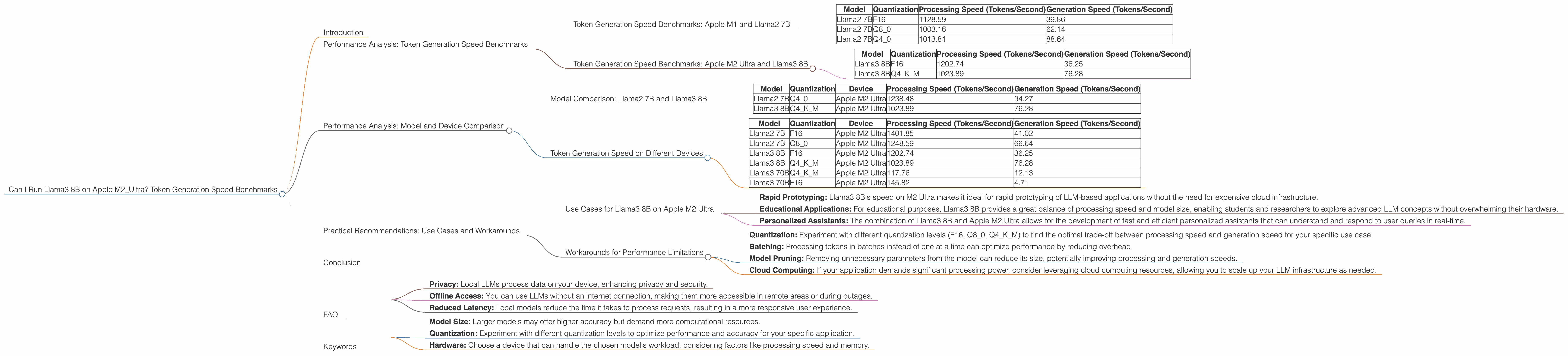

Token Generation Speed Benchmarks: Apple M2 Ultra and Llama2 7B

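Let's start with the benchmark we all care about: speed. Each result reports two numbers. Processing speed measures how fast the model reads the prompt; all prompt tokens can be handled in parallel, so this number is high. Generation speed measures how fast the model writes its reply, one token at a time, because each new token depends on the ones before it. Generation speed is what makes your LLM feel snappy as the reply streams out, while processing speed determines how long you wait for the first token.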

Here's a breakdown of the token generation speed benchmarks for Llama2 7B on Apple M2 Ultra with various quantization levels:

| Model | Quantization | Processing Speed (Tokens/Second) | Generation Speed (Tokens/Second) |
|---|---|---|---|
| Llama2 7B | F16 | 1128.59 | 39.86 |
| Llama2 7B | Q8_0 | 1003.16 | 62.14 |
| Llama2 7B | Q4_0 | 1013.81 | 88.64 |
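Because the two speeds apply to different phases, a rough estimate of how long a single request takes is the prompt length divided by processing speed plus the reply length divided by generation speed. The sketch below applies that to the Llama2 7B rows. It is a simplification under stated assumptions: it ignores model loading, assumes both speeds stay constant regardless of context length (in practice generation tends to slow as the context grows), and the 1,000-token prompt and 250-token reply are arbitrary illustrative sizes.

```python
def estimate_request_seconds(prompt_tokens: int, output_tokens: int,
                             pp_tps: float, tg_tps: float) -> float:
    """Rough wall-clock time: prompt processing (prefill) plus token generation (decode).

    Assumes both speeds are constant, which real runs only approximate.
    """
    if pp_tps <= 0 or tg_tps <= 0:
        raise ValueError("speeds must be positive")
    prefill_s = prompt_tokens / pp_tps
    decode_s = output_tokens / tg_tps
    return prefill_s + decode_s


# Llama2 7B on M2 Ultra, from the table above: (processing, generation) in tokens/s
LLAMA2_7B = {
    "F16": (1128.59, 39.86),
    "Q8_0": (1003.16, 62.14),
    "Q4_0": (1013.81, 88.64),
}

if __name__ == "__main__":
    for quant, (pp, tg) in LLAMA2_7B.items():
        secs = estimate_request_seconds(1000, 250, pp, tg)
        print(f"{quant}: about {secs:.1f} s for a 1000-token prompt and a 250-token reply")
```

Under these assumptions generation dominates: the prompt takes about a second in every case, so the format with the fastest generation (Q4_0) finishes first even though F16 processes the prompt fastest.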

Observations:

- F16 (unquantized 16-bit floating point) delivers the highest processing speed but the lowest generation speed. Processing a prompt is mostly limited by compute, while generating each new token requires reading all of the model's weights from memory, so F16's larger weights slow generation down.

- Q8_0 and Q4_0 give up about 10 to 11% of processing speed in exchange for much faster generation: 62.14 and 88.64 tokens/s versus 39.86 for F16. Q4_0, the most compact format, generates fastest.

Key Takeaway: For faster generation, consider using Q8_0 or Q4_0 quantization.
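Why does F16 generate so slowly? A common rule of thumb is that, at batch size 1, each generated token requires reading every model weight from memory once, so memory bandwidth divided by model size gives a ceiling on generation speed. The sketch below applies it to Llama2 7B, assuming roughly 6.74 billion parameters, Apple's published 800 GB/s memory bandwidth for M2 Ultra, and the block layouts of llama.cpp's Q8_0 and Q4_0 formats (8.5 and 4.5 bits per weight including per-block scales). It ignores KV-cache reads and compute, so real speeds land well below the ceiling.

```python
# Rough ceiling on single-stream generation speed when decoding is memory-bandwidth-bound.

M2_ULTRA_BANDWIDTH_GB_S = 800.0   # Apple's published unified-memory bandwidth
LLAMA2_7B_PARAMS = 6.74e9         # approximate parameter count (assumption)

# Bits per weight including per-block scales:
# Q8_0 = 32 int8 values + one 16-bit scale per block; Q4_0 = 32 4-bit values + one 16-bit scale.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}

# Measured generation speeds from the Llama2 7B table (tokens/s)
MEASURED_TG = {"F16": 39.86, "Q8_0": 62.14, "Q4_0": 88.64}


def generation_ceiling_tps(n_params: float, bits_per_weight: float,
                           bandwidth_gb_s: float) -> float:
    """Tokens/s if each token must stream all weights from memory exactly once."""
    weight_bytes = n_params * bits_per_weight / 8
    return bandwidth_gb_s * 1e9 / weight_bytes


if __name__ == "__main__":
    for quant, bits in BITS_PER_WEIGHT.items():
        ceiling = generation_ceiling_tps(LLAMA2_7B_PARAMS, bits, M2_ULTRA_BANDWIDTH_GB_S)
        measured = MEASURED_TG[quant]
        print(f"{quant}: ceiling ~{ceiling:.0f} tok/s, measured {measured} tok/s "
              f"({measured / ceiling:.0%} of ceiling)")
```

The ceiling rises as bits per weight fall, which matches the ordering in the table. The measured speed sits further below the ceiling for Q4_0, a hint that other costs (such as unpacking the 4-bit weights and reading the KV cache) start to matter once the weights get small.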

Token Generation Speed Benchmarks: Apple M2 Ultra and Llama3 8B

Now, let's get to the heart of our analysis: Llama3 8B on Apple M2 Ultra at F16 and Q4_K_M.

| Model | Quantization | Processing Speed (Tokens/Second) | Generation Speed (Tokens/Second) |
|---|---|---|---|
| Llama3 8B | F16 | 1202.74 | 36.25 |
| Llama3 8B | Q4_K_M | 1023.89 | 76.28 |

Observations:

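- Llama3 8B follows the same pattern as Llama2 7B: F16 processes prompts about 17% faster (1202.74 vs 1023.89 tokens/s), while Q4_K_M generates roughly twice as fast (76.28 vs 36.25 tokens/s).

- Compared with the Llama2 7B table, Llama3 8B processes prompts slightly faster: 1202.74 vs 1128.59 tokens/s at F16, and 1023.89 (Q4_K_M) vs 1013.81 (Q4_0) tokens/s at 4 bits. Q4_K_M and Q4_0 are different quantization schemes, so the second comparison is not exact.

- Generation is slower for Llama3 8B: 36.25 vs 39.86 tokens/s at F16, and 76.28 (Q4_K_M) vs 88.64 (Q4_0) tokens/s at 4 bits. This is consistent with Llama3 8B having more parameters to read from memory for every generated token.

Key Takeaway: On Apple M2 Ultra, Llama3 8B matches or slightly exceeds Llama2 7B in processing speed but generates tokens somewhat more slowly. The differences are modest, so for most applications the choice between the two will come down to output quality rather than raw speed.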
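Relative differences make this comparison easier to read. A small helper computes them from the two tables; the pairings mirror the observations, including the caveat that Q4_K_M and Q4_0 are different formats.

```python
def pct_change(new: float, old: float) -> float:
    """Relative change of `new` versus `old`, in percent."""
    return (new - old) / old * 100.0


# (Llama3 8B, Llama2 7B) pairs from the two tables, in tokens/s
COMPARISONS = {
    "F16 processing": (1202.74, 1128.59),
    "F16 generation": (36.25, 39.86),
    "4-bit processing (Q4_K_M vs Q4_0)": (1023.89, 1013.81),
    "4-bit generation (Q4_K_M vs Q4_0)": (76.28, 88.64),
}

if __name__ == "__main__":
    for label, (llama3, llama2) in COMPARISONS.items():
        print(f"{label}: {pct_change(llama3, llama2):+.1f}%")
```

This gives roughly +6.6% and +1.0% for processing and -9.1% and -13.9% for generation, for Llama3 8B relative to Llama2 7B.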

Performance Analysis: Model and Device Comparison

Model Comparison: Llama2 7B and Llama3 8B

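So far we have compared Llama3 8B and Llama2 7B using one set of M2 Ultra results. The second set below puts the 4-bit variants of both models side by side. Note that its Llama2 7B Q4_0 figures (1238.48 and 94.27 tokens/s) are higher than the first table's (1013.81 and 88.64). The benchmark data does not say why; the M2 Ultra is sold with either a 60-core or a 76-core GPU, and a different configuration or test run could account for the gap.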

| Model | Quantization | Device | Processing Speed (Tokens/Second) | Generation Speed (Tokens/Second) |
|---|---|---|---|---|
| Llama2 7B | Q4_0 | Apple M2 Ultra | 1238.48 | 94.27 |
| Llama3 8B | Q4_K_M | Apple M2 Ultra | 1023.89 | 76.28 |

Observations:

- Llama2 7B with Q4_0 quantization processes prompts about 21% faster than Llama3 8B with Q4_K_M (1238.48 vs 1023.89 tokens/s) and also generates faster (94.27 vs 76.28 tokens/s).

- Although Llama3 8B is the larger model, it still processes more than 1,000 tokens/s on Apple M2 Ultra.

- The gap in generation speed reflects both the different quantization methods and the difference in model size, since Llama3 8B has more weights to read for every generated token.

Key Takeaway: Model size, quantization settings, and hardware configuration all have a significant impact on performance. In this comparison, Llama2 7B with Q4_0 quantization leads in both processing and generation speed.

Token Generation Speed Across Model Sizes on Apple M2 Ultra

| Model | Quantization | Device | Processing Speed (Tokens/Second) | Generation Speed (Tokens/Second) |
|---|---|---|---|---|
| Llama2 7B | F16 | Apple M2 Ultra | 1401.85 | 41.02 |
| Llama2 7B | Q8_0 | Apple M2 Ultra | 1248.59 | 66.64 |
| Llama3 8B | F16 | Apple M2 Ultra | 1202.74 | 36.25 |
| Llama3 8B | Q4_K_M | Apple M2 Ultra | 1023.89 | 76.28 |
| Llama3 70B | Q4_K_M | Apple M2 Ultra | 117.76 | 12.13 |
| Llama3 70B | F16 | Apple M2 Ultra | 145.82 | 4.71 |

Observations:

- The Apple M2 Ultra runs every configuration tested, from Llama2 7B up to Llama3 70B at F16, which shows it can handle demanding LLM workloads.

- Processing speed falls as model size grows: from about 1,000 to 1,400 tokens/s for the 7B and 8B models to about 118 to 146 tokens/s for Llama3 70B, roughly in line with the nearly ninefold increase in parameters from 8B to 70B.

- F16 shows higher processing speed than Q4_K_M for both Llama3 models (1202.74 vs 1023.89 tokens/s for 8B, 145.82 vs 117.76 for 70B), but much lower generation speed (36.25 vs 76.28 and 4.71 vs 12.13 tokens/s).
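One way to see why processing speed tracks model size: a forward pass costs roughly 2 floating-point operations per parameter per token (a standard approximation that ignores attention). If prompt processing is compute-bound, processing speed multiplied by that cost should come out roughly constant across model sizes. The sketch below checks this against the table, assuming approximate parameter counts of 6.74B, 8.03B and 70.6B for Llama2 7B, Llama3 8B and Llama3 70B.

```python
# Implied arithmetic throughput during prompt processing, using FLOPs/token ~ 2 x parameters.

PARAMS = {  # approximate parameter counts (assumptions)
    "Llama2 7B": 6.74e9,
    "Llama3 8B": 8.03e9,
    "Llama3 70B": 70.6e9,
}

# (model, quantization, processing tokens/s) from the table above
ROWS = [
    ("Llama2 7B", "F16", 1401.85),
    ("Llama2 7B", "Q8_0", 1248.59),
    ("Llama3 8B", "F16", 1202.74),
    ("Llama3 8B", "Q4_K_M", 1023.89),
    ("Llama3 70B", "Q4_K_M", 117.76),
    ("Llama3 70B", "F16", 145.82),
]


def implied_tflops(model: str, pp_tps: float) -> float:
    """Processing speed x (2 x parameters), expressed in TFLOP/s."""
    return pp_tps * 2 * PARAMS[model] / 1e12


if __name__ == "__main__":
    for model, quant, pp in ROWS:
        print(f"{model} {quant}: ~{implied_tflops(model, pp):.1f} TFLOP/s")
```

With these assumptions the results cluster between roughly 16 and 21 TFLOP/s across a tenfold range of model sizes, with the F16 rows a little higher than the quantized ones. That is consistent with prompt processing being limited by the GPU's arithmetic rate, and with quantized formats paying some extra cost to unpack their weights.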

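Key Takeaway: The Apple M2 Ultra is a robust platform for running LLMs locally. F16 gives the highest processing speed at every model size, but quantized formats generate tokens two to three times faster, which matters most for large models: Llama3 70B generates 12.13 tokens/s with Q4_K_M but only 4.71 tokens/s at F16.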
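To turn a table like this into a decision, one simple rule is: among the configurations of a given model that meet a minimum generation speed, pick the one with the fastest prompt processing. The sketch below encodes that rule over the rows above. The thresholds in the example are arbitrary, and the rule ignores output quality, which these benchmarks don't measure.

```python
from typing import NamedTuple, Optional


class BenchRow(NamedTuple):
    model: str
    quant: str
    pp_tps: float  # processing speed, tokens/s
    tg_tps: float  # generation speed, tokens/s


ROWS = [
    BenchRow("Llama2 7B", "F16", 1401.85, 41.02),
    BenchRow("Llama2 7B", "Q8_0", 1248.59, 66.64),
    BenchRow("Llama3 8B", "F16", 1202.74, 36.25),
    BenchRow("Llama3 8B", "Q4_K_M", 1023.89, 76.28),
    BenchRow("Llama3 70B", "Q4_K_M", 117.76, 12.13),
    BenchRow("Llama3 70B", "F16", 145.82, 4.71),
]


def pick_config(rows: list[BenchRow], model: str, min_tg_tps: float) -> Optional[BenchRow]:
    """Fastest-processing configuration of `model` whose generation speed meets the target."""
    candidates = [r for r in rows if r.model == model and r.tg_tps >= min_tg_tps]
    if not candidates:
        return None
    return max(candidates, key=lambda r: r.pp_tps)


if __name__ == "__main__":
    print(pick_config(ROWS, "Llama3 8B", 30))   # F16 meets 30 tok/s and processes fastest
    print(pick_config(ROWS, "Llama3 8B", 50))   # only Q4_K_M meets 50 tok/s
    print(pick_config(ROWS, "Llama3 70B", 30))  # no configuration is fast enough
```

Raising the generation target pushes the choice toward more aggressive quantization, which mirrors the trade-off described in the observations.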

Practical Recommendations: Use Cases and Workarounds

Use Cases for Llama3 8B on Apple M2 Ultra

Now, let's bridge the gap between theory and practice. Let's explore some compelling use cases for Llama3 8B on Apple M2 Ultra:

- Rapid Prototyping: Llama3 8B's speed on M2 Ultra makes it ideal for rapid prototyping of LLM-based applications without the need for expensive cloud infrastructure.

- Educational Applications: For educational purposes, Llama3 8B provides a great balance of processing speed and model size, enabling students and researchers to explore advanced LLM concepts without overwhelming their hardware.

- Personalized Assistants: The combination of Llama3 8B and Apple M2 Ultra allows for the development of fast and efficient personalized assistants that can understand and respond to user queries in real-time.

Workarounds for Performance Limitations

No device is perfect, and even Apple M2 Ultra might encounter limitations when running LLMs. Here are some workarounds to overcome these challenges:

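- Quantization: Experiment with different formats (F16, Q8_0, Q4_K_M) to find the best trade-off between processing speed, generation speed, and output quality for your use case.

- Batching: If you serve several requests at once, generating tokens for them in a single batch lets each pass over the model weights serve multiple sequences, raising total throughput (though not the speed of any single reply). Prompt tokens are already processed in batches, which is why processing speed is so much higher than generation speed.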

- Model Pruning: Removing unnecessary parameters from the model can reduce its size, potentially improving processing and generation speeds.

- Cloud Computing: If your application demands significant processing power, consider leveraging cloud computing resources, allowing you to scale up your LLM infrastructure as needed.
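The batching point can be illustrated with an idealized model. If each decoding step reads the weights once no matter how many sequences are in the batch, step time is the larger of the memory time (weight bytes / bandwidth) and the compute time (batch size × FLOPs per token / compute rate). The figures in the example are assumptions for illustration: Llama3 8B at about 8.03B parameters and 4.5 bits per weight, 800 GB/s of bandwidth, and a hypothetical effective compute rate of 16 TFLOP/s. The model ignores KV-cache traffic, which grows with batch size and context length.

```python
def batched_decode_tps(batch_size: int, weight_bytes: float, bandwidth_bytes_s: float,
                       flops_per_token: float, compute_flops_s: float) -> float:
    """Aggregate tokens/s across a batch in an idealized roofline-style model."""
    memory_s = weight_bytes / bandwidth_bytes_s                  # weights read once per step
    compute_s = batch_size * flops_per_token / compute_flops_s   # work grows with batch size
    step_s = max(memory_s, compute_s)
    return batch_size / step_s


# Illustrative assumptions (hypothetical values, see lead-in)
N_PARAMS = 8.03e9
WEIGHT_BYTES = N_PARAMS * 4.5 / 8
BANDWIDTH = 800e9          # bytes/s
FLOPS_PER_TOKEN = 2 * N_PARAMS
COMPUTE = 16e12            # assumed effective FLOP/s

if __name__ == "__main__":
    for b in (1, 2, 4, 8, 16, 32):
        tps = batched_decode_tps(b, WEIGHT_BYTES, BANDWIDTH, FLOPS_PER_TOKEN, COMPUTE)
        print(f"batch {b:>2}: ~{tps:.0f} tokens/s total, ~{tps / b:.0f} per sequence")
```

In this model, total throughput rises roughly linearly until the compute term takes over (between 5 and 6 sequences with these numbers) and then plateaus, while no individual sequence ever gets faster than the batch-1 rate. Real systems fall short of these idealized figures.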

Conclusion

Running Llama3 8B on Apple M2 Ultra opens up practical possibilities for local LLMs. There is room for improvement, but the benchmarks paint a positive picture: prompts are processed at over 1,000 tokens/s, and replies are generated at roughly 36 to 76 tokens/s depending on quantization. As the field evolves, we can expect even faster and more efficient models to emerge.

FAQ

Q: What is quantization?

A: Quantization reduces the size of an LLM by storing its parameters in lower-precision formats (such as Q8_0 or Q4_K_M) instead of 16-bit floating point (F16). This makes the model more compact and, as the benchmarks above show, can substantially speed up token generation, though it may slightly reduce processing speed and can affect model accuracy. Think of it like reducing the color depth of an image: the file gets smaller, and some detail is lost.
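To make this concrete, here is a minimal sketch of block-wise 4-bit quantization in the spirit of llama.cpp's Q4_0: weights are split into blocks of 32, and each block stores one scale plus a 4-bit integer per weight. This is a simplified symmetric scheme for illustration, not the exact Q4_0 encoding, and K-quant formats such as Q4_K_M use a more elaborate layout.

```python
import random

BLOCK_SIZE = 32


def quantize_block(values: list[float]) -> tuple[float, list[int]]:
    """Map one block of floats to a shared scale and 4-bit signed integers in [-8, 7]."""
    amax = max(abs(v) for v in values)
    scale = amax / 7 if amax > 0 else 1.0
    q = [max(-8, min(7, round(v / scale))) for v in values]
    return scale, q


def dequantize_block(scale: float, q: list[int]) -> list[float]:
    """Reconstruct approximate floats from the scale and integers."""
    return [scale * x for x in q]


if __name__ == "__main__":
    rng = random.Random(0)
    weights = [rng.gauss(0.0, 0.02) for _ in range(BLOCK_SIZE)]
    scale, q = quantize_block(weights)
    restored = dequantize_block(scale, q)
    max_err = max(abs(a - b) for a, b in zip(weights, restored))
    # Storage if the scale were kept as a 16-bit float:
    # 32 x 4 bits + 16 bits = 144 bits, i.e. 4.5 bits per weight vs 16 for F16
    print(f"scale={scale:.5f}, max abs error={max_err:.5f} (at most scale/2)")
```

Every weight is rounded to the nearest multiple of the block's scale, so the error per weight is at most half the scale; blocks with larger values get coarser steps.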

Q: What are the benefits of running LLMs locally?

A: Running LLMs locally offers several advantages:

- Privacy: Local LLMs process data on your device, enhancing privacy and security.

- Offline Access: You can use LLMs without an internet connection, making them more accessible in remote areas or during outages.

- Reduced Latency: Requests don't travel over the network, which removes round-trip delays and can make responses feel more immediate.

Q: How do I choose the right LLM for my project?

A: The choice of LLM depends on your specific requirements:

- Model Size: Larger models may offer higher accuracy but demand more computational resources.

- Quantization: Experiment with different quantization levels to optimize performance and accuracy for your specific application.

- Hardware: Choose a device that can handle the chosen model's workload, considering factors like processing speed and memory.
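For the hardware question, a first check is whether the weights fit in memory: parameter count × bits per weight / 8. The sketch below uses approximate parameter counts and an assumed 4.5 bits per weight for 4-bit formats (Q4_0's block layout; Q4_K_M files are typically somewhat larger). It leaves out the KV cache and runtime overhead, and macOS may not let the GPU use all of the unified memory, so treat the result as a lower bound. The 64 GB threshold in the example is just one possible memory size; the M2 Ultra can be configured with up to 192 GB.

```python
def weights_gb(n_params: float, bits_per_weight: float) -> float:
    """Size of the model weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


def fits(n_params: float, bits_per_weight: float, memory_gb: float) -> bool:
    """True if the weights alone fit; KV cache and runtime overhead are not included."""
    return weights_gb(n_params, bits_per_weight) <= memory_gb


MODELS = {"Llama3 8B": 8.03e9, "Llama3 70B": 70.6e9}  # approximate parameter counts
FORMATS = {"F16": 16.0, "4-bit (~Q4_0)": 4.5}          # bits per weight (assumed)

if __name__ == "__main__":
    for name, params in MODELS.items():
        for fmt, bits in FORMATS.items():
            print(f"{name} {fmt}: ~{weights_gb(params, bits):.0f} GB of weights, "
                  f"fits in 64 GB: {fits(params, bits, 64)}")
```

By this estimate, Llama3 70B at F16 needs roughly 141 GB for its weights alone, which calls for a high-memory configuration, while a 4-bit version needs about 40 GB.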

Q: What is the future of local LLMs?

A: The future of local LLMs is bright. With advancements in hardware, software, and model optimization techniques, we can expect LLMs to become more powerful, efficient, and accessible, empowering developers and users to unlock the full potential of AI on their own devices.

Keywords

LLMs, Llama3, Apple M2 Ultra, Token Generation Speed, Quantization, F16, Q4_K_M, Processing Speed, Generation Speed, Local LLMs, Performance Benchmarks, AI, Machine Learning, Deep Learning, Natural Language Processing, NLP, Developer, Geek, Performance Optimization, Use Cases