Can I Run Llama3 8B on Apple M1? Token Generation Speed Benchmarks

Introduction

The world of large language models (LLMs) is buzzing with excitement, with new models emerging all the time. But can your humble Apple M1 chip handle the demands of these powerful AI brains? In this deep dive, we'll explore the performance of Llama3 8B, a popular and capable LLM, running on Apple M1 hardware. We'll analyze token generation speeds and compare them to other models and configurations. Let's dive in!

Performance Analysis: Token Generation Speed Benchmarks: Apple M1 and Llama3 8B

To understand how well Llama3 8B performs on Apple M1, we need to look at its token generation speed, which is measured in tokens per second (tokens/s). Think of it as the speed of a language model's thought process. The higher the number, the faster it can generate words and complete tasks.

Our data comes from various sources, including the dedicated work of contributors like ggerganov on llama.cpp and XiongjieDai on GPU Benchmarks on LLM Inference. We'll focus on the Apple M1 and Llama3 8B for this analysis.

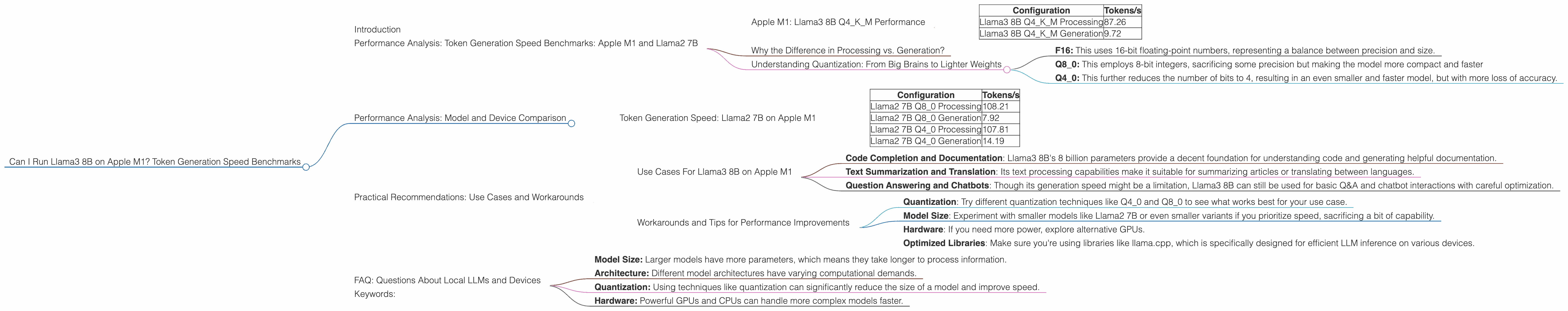

Apple M1: Llama3 8B Q4_K_M Performance

| Configuration | Tokens/s |
|---|---|
| Llama3 8B Q4_K_M Processing | 87.26 |
| Llama3 8B Q4_K_M Generation | 9.72 |

As you can see, Llama3 8B Q4_K_M handles the processing phase well, reading the input prompt at 87.26 tokens per second. The generation phase, which actually outputs text, is much slower at 9.72 tokens per second.

Why the Difference in Processing vs. Generation?

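The processing phase reads the input prompt. Because every prompt token is known up front, the model can process them together in large batches, which keeps the hardware's compute units busy. The generation phase produces the output one token at a time. Each new token depends on all the tokens before it, so the model has to run a full pass over its weights for every single token. That makes generation sequential, typically limited by memory bandwidth rather than raw compute, and much slower per token.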
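To see what the two rates mean for a real request, here is a minimal sketch that turns the Llama3 8B figures from the table above into a rough latency estimate. The function name and the example request size (a 500-token prompt with a 200-token reply) are illustrative assumptions, and real timings will vary with context length and system load.

```python
# Throughput figures copied from the Llama3 8B table above (tokens/s).
PROMPT_TOKENS_PER_S = 87.26
GENERATION_TOKENS_PER_S = 9.72


def estimate_latency(prompt_tokens: int, output_tokens: int) -> dict:
    """Estimate seconds spent reading the prompt and writing the reply."""
    prompt_s = prompt_tokens / PROMPT_TOKENS_PER_S
    generation_s = output_tokens / GENERATION_TOKENS_PER_S
    return {
        "prompt_s": prompt_s,
        "generation_s": generation_s,
        "total_s": prompt_s + generation_s,
    }


if __name__ == "__main__":
    # Hypothetical request: 500-token prompt, 200-token reply.
    estimate = estimate_latency(500, 200)
    for phase, seconds in estimate.items():
        print(f"{phase}: {seconds:.1f} s")
```

For this hypothetical request, the prompt takes roughly 5.7 seconds and the reply roughly 20.6 seconds, so generation dominates the wait even though the prompt contains more tokens.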

Understanding Quantization: From Big Brains to Lighter Weights

Quantization is a technique used to reduce the size of a model (think making it more streamlined), which can speed up inference. Imagine shrinking a giant brain into your pocket - that's what quantization does for AI models.

- F16: This uses 16-bit floating-point numbers, representing a balance between precision and size.

- Q8_0: This employs 8-bit integers, sacrificing some precision but making the model more compact and faster.

- Q4_0: This further reduces the number of bits to 4, resulting in an even smaller and faster model, but with more loss of accuracy.

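The Q4_K_M configuration we see here uses one of llama.cpp's "k-quant" methods. It is a 4-bit scheme (the "M" stands for the medium variant) that keeps some of the more sensitive tensors at higher precision, which makes it a common choice for balancing size, speed, and accuracy.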
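The trade-off between bit width and accuracy can be sketched in a few lines. The example below is a simplified symmetric block quantizer written for illustration only: the function names and the sample weights are made up, and llama.cpp's actual Q8_0, Q4_0, and Q4_K_M formats differ in block layout and detail.

```python
def quantize_block(values, bits):
    """Symmetric quantization of one block: a shared scale plus small integers."""
    qmax = 2 ** (bits - 1) - 1  # 127 for 8 bits, 7 for 4 bits
    amax = max(abs(v) for v in values)
    scale = amax / qmax if amax > 0 else 1.0
    quants = [round(v / scale) for v in values]
    return scale, quants


def dequantize_block(scale, quants):
    """Recover approximate floating-point values from the integers."""
    return [scale * q for q in quants]


def max_error(values, bits):
    """Largest absolute difference after a quantize/dequantize round trip."""
    scale, quants = quantize_block(values, bits)
    restored = dequantize_block(scale, quants)
    return max(abs(a - b) for a, b in zip(values, restored))


if __name__ == "__main__":
    # Made-up weights standing in for one block of a real tensor.
    weights = [0.12, -0.53, 0.88, -0.07, 0.31, -0.94, 0.45, 0.02]
    for bits in (8, 4):
        print(f"{bits}-bit max round-trip error: {max_error(weights, bits):.4f}")
```

With fewer bits, each weight has fewer integer levels to land on, so the round-trip error grows. That is the accuracy cost paid for the smaller, faster model.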

Performance Analysis: Model and Device Comparison

Let's now compare our Llama3 8B performance on Apple M1 to other models and configurations, keeping in mind that the data is limited to what we have.

Token Generation Speed: Llama2 7B on Apple M1

| Configuration | Tokens/s |
|---|---|
| Llama2 7B Q8_0 Processing | 108.21 |
| Llama2 7B Q8_0 Generation | 7.92 |
| Llama2 7B Q4_0 Processing | 107.81 |
| Llama2 7B Q4_0 Generation | 14.19 |

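Here, we observe that Llama2 7B Q8_0 and Q4_0 both outperform Llama3 8B Q4_K_M in processing speed (108.21 and 107.81 versus 87.26 tokens/s). In generation speed, Llama3 8B Q4_K_M (9.72 tokens/s) beats Llama2 7B Q8_0 (7.92 tokens/s) but trails Llama2 7B Q4_0 (14.19 tokens/s). Keep in mind that the models use different quantization formats, so this is not a like-for-like comparison.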
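To make the comparison concrete, the short sketch below expresses each configuration's speed relative to Llama3 8B. The numbers are copied from the two tables in this article, while the dictionary layout and the helper name are illustrative choices.

```python
# Speeds (tokens/s) copied from the two tables in this article.
PROCESSING = {
    "Llama3 8B Q4_K_M": 87.26,
    "Llama2 7B Q8_0": 108.21,
    "Llama2 7B Q4_0": 107.81,
}
GENERATION = {
    "Llama3 8B Q4_K_M": 9.72,
    "Llama2 7B Q8_0": 7.92,
    "Llama2 7B Q4_0": 14.19,
}


def relative_speed(speeds, baseline):
    """Return each configuration's speed as a multiple of the baseline's."""
    base = speeds[baseline]
    return {name: value / base for name, value in speeds.items()}


if __name__ == "__main__":
    for label, speeds in (("Processing", PROCESSING), ("Generation", GENERATION)):
        print(label)
        for name, ratio in relative_speed(speeds, "Llama3 8B Q4_K_M").items():
            print(f"  {name}: {ratio:.2f}x")
```

Against the Llama3 8B baseline, Llama2 7B Q4_0 generates about 1.46 times as fast, while Llama2 7B Q8_0 manages only about 0.81 times the baseline speed.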

Practical Recommendations: Use Cases and Workarounds

Use Cases For Llama3 8B on Apple M1

- Code Completion and Documentation: Llama3 8B's 8 billion parameters provide a decent foundation for understanding code and generating helpful documentation.

- Text Summarization and Translation: Its text processing capabilities make it suitable for summarizing articles or translating between languages.

- Question Answering and Chatbots: Though its generation speed might be a limitation, Llama3 8B can still be used for basic Q&A and chatbot interactions with careful optimization.

Workarounds and Tips for Performance Improvements

- Quantization: Try different quantization formats such as Q4_0, Q4_K_M, and Q8_0 to see which balance of speed and accuracy works best for your use case.

- Model Size: Experiment with smaller models like Llama2 7B or even smaller variants if you prioritize speed, sacrificing a bit of capability.

- Hardware: If you need more throughput than the M1 can deliver, consider hardware with a more capable GPU and higher memory bandwidth.

- Optimized Libraries: Make sure you're using libraries like llama.cpp, which is specifically designed for efficient LLM inference on various devices.

FAQ: Questions About Local LLMs and Devices

Q: What is an LLM?

A: An LLM is a large language model, a type of artificial intelligence trained on massive datasets of text and code. These models can generate human-like text, translate languages, write different kinds of creative content, and answer your questions in an informative way.

Q: Can I run LLMs on my computer?

A: Yes, you can run LLMs locally on your device. However, the performance will depend on the model size and the hardware specifications of your computer.

Q: Why do some models run faster than others?

A: The speed of a model depends on several factors:

- Model Size: Larger models have more parameters, which means they take longer to process information.

- Architecture: Different model architectures have varying computational demands.

- Quantization: Using techniques like quantization can significantly reduce the size of a model and improve speed.

- Hardware: Powerful GPUs and CPUs can handle more complex models faster.

Keywords:

Apple M1, Llama3 8B, Token Generation Speed, LLM, Quantization, F16, Q8_0, Q4_0, GPU Benchmarks, GPU, Performance Analysis, Local LLMs, Tokens/s, Inference, Use Cases, Workarounds