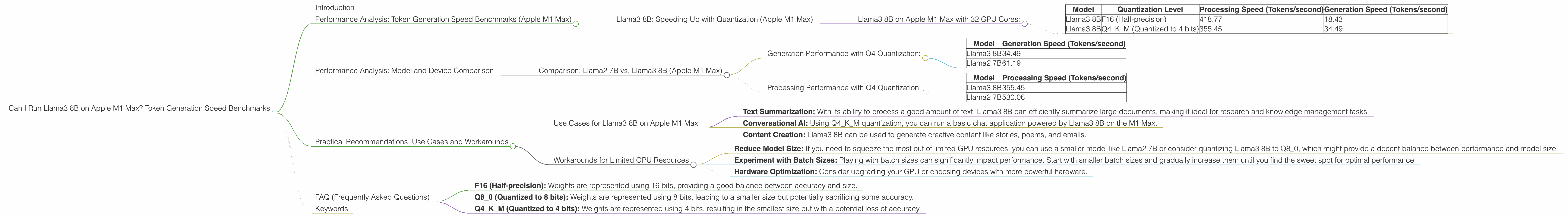

Can I Run Llama3 8B on Apple M1 Max? Token Generation Speed Benchmarks

Introduction

The world of large language models (LLMs) is exploding, offering incredible capabilities for natural language processing, text generation, and more. But running these powerful models locally can feel like a race against time, especially on a laptop-class chip like the Apple M1 Max rather than a dedicated datacenter GPU.

This article dives deep into the performance of Llama3 8B on the popular Apple M1 Max chip, analyzing token generation speed at two precision levels: F16 and Q4_K_M. We'll compare these results with Llama2 7B, highlighting key differences and practical real-world implications. If you're a developer or a curious geek exploring the realm of local LLMs, buckle up, because this is about to get interesting!

Performance Analysis: Token Generation Speed Benchmarks (Apple M1 Max)

Llama3 8B: Speeding Up with Quantization (Apple M1 Max)

The beauty of Llama3 8B is that it can be adapted to your hardware limitations through quantization. Like lossy file compression, quantization shrinks the model's memory footprint without sacrificing too much accuracy. By representing each weight with fewer bits, the model needs less storage and less memory traffic per generated token, which is why it can generate text faster on less powerful devices.
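To make the size reduction concrete, here is a rough back-of-the-envelope sketch. It assumes about 8.03 billion parameters for Llama3 8B and treats the bits-per-weight values (16 for F16, roughly 4.85 for Q4_K_M) as approximations chosen for illustration. Real model files also store metadata and mix tensor types, so actual sizes will differ somewhat.

```python
def estimate_weight_gib(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in GiB (ignores metadata and KV cache)."""
    return n_params * bits_per_weight / 8 / 2**30


# Assumption: approximate parameter count for Llama3 8B.
N_PARAMS = 8.03e9

# Assumption: average bits per weight. The Q4_K_M value is an estimate,
# not a figure taken from the benchmark in this article.
FORMATS = {"F16": 16.0, "Q4_K_M": 4.85}

for name, bits in FORMATS.items():
    print(f"{name:>7}: ~{estimate_weight_gib(N_PARAMS, bits):.1f} GiB")
```

Under these assumptions the quantized weights come out at under a third of the F16 size, which leaves far more unified memory free for context and other applications.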

Llama3 8B on Apple M1 Max with 32 GPU Cores:

| Model | Quantization Level | Processing Speed (Tokens/second) | Generation Speed (Tokens/second) |
|---|---|---|---|
| Llama3 8B | F16 (Half-precision) | 418.77 | 18.43 |
| Llama3 8B | Q4_K_M (Quantized to 4 bits) | 355.45 | 34.49 |

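It's clear that Q4_K_M quantization generates tokens much faster than F16 on the M1 Max: 34.49 versus 18.43 tokens/second, or roughly 1.9x. The trade-off is a processing speed about 15% lower (355.45 versus 418.77 tokens/second). This is a huge win for developers who need to balance performance with model size.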
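The ratios behind that comparison can be checked with a few lines of Python. The numbers are copied from the table above; the dictionary layout and the `ratio` helper are just illustrative names.

```python
# Benchmark figures from the table above (tokens/second).
BENCH = {
    "F16": {"processing": 418.77, "generation": 18.43},
    "Q4_K_M": {"processing": 355.45, "generation": 34.49},
}


def ratio(metric: str, numerator: str = "Q4_K_M", denominator: str = "F16") -> float:
    """How many times faster `numerator` is than `denominator` on one metric."""
    return BENCH[numerator][metric] / BENCH[denominator][metric]


print(f"generation: {ratio('generation'):.2f}x")  # prints "generation: 1.87x"
print(f"processing: {ratio('processing'):.2f}x")  # prints "processing: 0.85x"
```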

Think of it like this: imagine you have a book with a million words. Using F16 is like reading the original edition, while Q4_K_M is like reading an abridged edition that keeps every chapter but simplifies the wording. You might lose some minor details, but you can read through it much faster and still get the main points.

Performance Analysis: Model and Device Comparison

Comparison: Llama2 7B vs. Llama3 8B (Apple M1 Max)

Let's compare the performance of Llama3 8B with Llama2 7B, a popular and efficient model, on the Apple M1 Max.

Generation Performance with Q4 Quantization:

| Model | Generation Speed (Tokens/second) |
|---|---|
| Llama3 8B | 34.49 |
| Llama2 7B | 61.19 |

- Llama2 7B generates tokens about 1.8x faster than Llama3 8B on the Apple M1 Max (61.19 versus 34.49 tokens/second). The benchmark alone does not isolate the cause, but the smaller parameter count and differences in model architecture likely both contribute.

Processing Performance with Q4 Quantization:

| Model | Processing Speed (Tokens/second) |
|---|---|
| Llama3 8B | 355.45 |
| Llama2 7B | 530.06 |

- Llama2 7B is also significantly faster in processing speed, by about 1.5x (530.06 versus 355.45 tokens/second). This is consistent with Llama3 8B being the larger model, since more parameters mean more computation per token. Architectural differences between the two models may also play a part.

While Llama2 7B is faster on the M1 Max in both benchmarks, Llama3 8B is the larger model, potentially leading to more nuanced and complex output. It's crucial to choose the right model based on your specific needs and the available resources.
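To see what these throughput numbers mean for a single request, the sketch below estimates response time as prompt tokens divided by processing speed plus output tokens divided by generation speed. The workload (a 1,000-token prompt and a 200-token reply) is a hypothetical example, and the model assumes throughput stays constant regardless of context length, which real runs only approximate.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Throughput:
    """Q4 benchmark figures from the tables above, in tokens/second."""
    processing_tps: float
    generation_tps: float

    def estimate_seconds(self, prompt_tokens: int, output_tokens: int) -> float:
        # Simplifying assumption: both speeds are constant for the whole request.
        return (prompt_tokens / self.processing_tps
                + output_tokens / self.generation_tps)


MODELS = {
    "Llama3 8B (Q4)": Throughput(processing_tps=355.45, generation_tps=34.49),
    "Llama2 7B (Q4)": Throughput(processing_tps=530.06, generation_tps=61.19),
}

# Hypothetical workload, chosen only for illustration.
PROMPT_TOKENS, OUTPUT_TOKENS = 1000, 200

for name, throughput in MODELS.items():
    seconds = throughput.estimate_seconds(PROMPT_TOKENS, OUTPUT_TOKENS)
    print(f"{name}: ~{seconds:.1f} s")
```

For this kind of request, generation time dominates the total for both models, so the generation speed column is usually the one to watch for chat-style use.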

Practical Recommendations: Use Cases and Workarounds

Use Cases for Llama3 8B on Apple M1 Max

- Text Summarization: With a processing speed of roughly 355 tokens/second under Q4_K_M, Llama3 8B can read through long documents quickly, making it a good fit for research and knowledge management tasks.

- Conversational AI: Using Q4_K_M quantization, you can run a basic chat application powered by Llama3 8B on the M1 Max at about 34 tokens/second.

- Content Creation: Llama3 8B can be used to generate creative content like stories, poems, and emails.

Workarounds for Limited GPU Resources

- Reduce Model Size: If you need to squeeze the most out of limited GPU resources, you can use a smaller model like Llama2 7B. If F16 is too large but you want to stay closer to full precision than Q4_K_M, consider quantizing Llama3 8B to Q8_0, which sits between the two in size and might provide a decent balance between performance and accuracy.

- Experiment with Batch Sizes: Playing with batch sizes can significantly impact performance. Start with smaller batch sizes and gradually increase them until you find the sweet spot for optimal performance.

- Hardware Optimization: The GPU in an M1 Max cannot be upgraded, so if these speeds are not enough, consider a device with more GPU cores or more powerful hardware overall.
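The batch-size experiment suggested above can be organized as a small timing harness. This is a minimal sketch, not tied to any particular inference library: `run_prompt` is a hypothetical callable you would supply yourself, and `fake_run_prompt` is a stand-in workload that exists only so the example runs without a model.

```python
import time
from typing import Callable, Iterable


def sweep_batch_sizes(
    run_prompt: Callable[[int], int],
    batch_sizes: Iterable[int],
    repeats: int = 3,
) -> dict[int, float]:
    """Return the best observed tokens/second for each batch size.

    run_prompt(batch_size) is a hypothetical callable: it should process a
    fixed prompt with the given batch size and return the token count.
    """
    results: dict[int, float] = {}
    for batch_size in batch_sizes:
        best = 0.0
        for _ in range(repeats):
            start = time.perf_counter()
            n_tokens = run_prompt(batch_size)
            elapsed = time.perf_counter() - start
            if elapsed > 0:
                best = max(best, n_tokens / elapsed)
        results[batch_size] = best
    return results


def fake_run_prompt(batch_size: int) -> int:
    """Stand-in workload (not a real model): pretends larger batches finish sooner."""
    time.sleep(0.01 * (512 / batch_size) ** 0.5)
    return 512


if __name__ == "__main__":
    speeds = sweep_batch_sizes(fake_run_prompt, [64, 128, 256, 512])
    for batch_size, tps in speeds.items():
        print(f"batch {batch_size:>4}: {tps:,.0f} tokens/s")
    print("fastest batch size:", max(speeds, key=speeds.get))
```

Taking the best of several repeats reduces noise from background activity. With a real model, replace `fake_run_prompt` with a function that calls your inference library and keep the prompt identical across runs.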

FAQ (Frequently Asked Questions)

Q: What is Quantization?

A: Quantization is a technique used to reduce the size of a large language model (LLM) by representing its weights (the numeric parameters the model learned during training) with a smaller number of bits. It's like reducing the color depth of an image - you lose some detail, but the file size becomes smaller.

Q: What are F16, Q8_0, and Q4_K_M?

A: These terms name weight formats at different precision levels.

- F16 (Half-precision): Weights are stored as 16-bit floating-point numbers. This is the highest-precision format benchmarked in this article and also the largest.

- Q8_0 (Quantized to 8 bits): Weights are represented using roughly 8 bits each, leading to a smaller size but potentially sacrificing some accuracy.

- Q4_K_M (Quantized to 4 bits): Weights are represented using roughly 4 bits each, resulting in the smallest size of the three but with a potential loss of accuracy.
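The basic idea of storing weights in fewer bits can be shown with a toy example. The sketch below uses simple symmetric rounding with one shared scale per block; this is an assumption made for illustration and is deliberately simpler than the actual Q8_0 or Q4_K_M formats, which use their own block layouts.

```python
def quantize_block(values: list[float], bits: int = 4) -> tuple[float, list[int]]:
    """Map floats to signed integers of the given width, sharing one scale."""
    q_max = 2 ** (bits - 1) - 1    # 7 for 4-bit
    q_min = -(2 ** (bits - 1))     # -8 for 4-bit
    max_abs = max((abs(v) for v in values), default=0.0)
    scale = max_abs / q_max if max_abs > 0 else 1.0
    codes = [min(q_max, max(q_min, round(v / scale))) for v in values]
    return scale, codes


def dequantize_block(scale: float, codes: list[int]) -> list[float]:
    """Recover approximate floats from the integer codes."""
    return [scale * code for code in codes]


# Made-up weights, for illustration only.
weights = [0.12, -0.53, 0.91, -0.07, 0.33, -0.88, 0.02, 0.47]
scale, codes = quantize_block(weights)
restored = dequantize_block(scale, codes)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("codes:", codes)
print(f"max error: {max_error:.4f} (bounded by scale / 2 = {scale / 2:.4f})")
```

Each weight now needs only 4 bits plus a share of the block's scale, and the rounding error per weight is at most half the scale. That error is the "lost detail" the answers above refer to.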

Q: What is Token Generation Speed?

A: Token generation speed refers to how quickly the model can produce output tokens, measured in tokens per second. A token is a unit of text that may be a whole word or only part of one. A higher token generation speed means the model can generate text faster.

Q: Is Llama3 8B Suitable For All Projects?

A: The choice of LLM depends on your project's requirements. For simple tasks like text generation, a smaller model like Llama2 7B might be sufficient. However, for more complex tasks requiring a deeper understanding of the language, you might consider Llama3 8B.

Keywords

llama3 8b, llama2 7b, apple m1 max, token generation speed, quantization, f16, q8_0, q4_k_m, llm, large language model, performance, benchmark, gpu, processing speed, generation speed, use cases, workarounds, developer, geek, natural language processing, text generation.