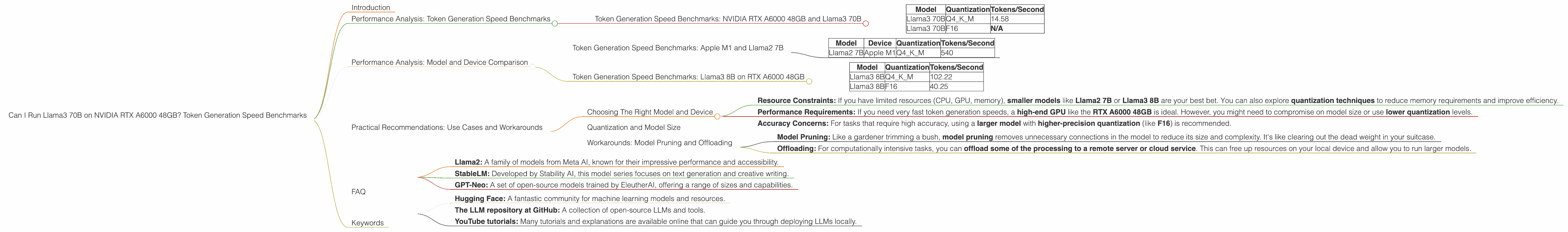

Can I Run Llama3 70B on NVIDIA RTX A6000 48GB? Token Generation Speed Benchmarks

Introduction

The world of Large Language Models (LLMs) is evolving rapidly! We're witnessing a constant stream of new models, each pushing the boundaries of what's possible with AI. But with great power comes great responsibility (and a lot of processing power!). For developers and enthusiasts, the question often arises: can my hardware handle these beasts? This article takes a deep dive into the performance of Llama3 70B on the NVIDIA RTX A6000 48GB GPU.

We'll be looking at token generation speed benchmarks, one of the most practical measures of LLM performance. They tell you how many tokens (word pieces) a model can produce per second on a given device. Think of it like a words-per-minute (WPM) test for LLMs.
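To make the metric concrete, here is a minimal sketch of how tokens per second could be measured. The `generate` callable is hypothetical: it stands in for whatever inference call you use and is assumed to return the list of generated tokens. It is not the harness behind the numbers in this article.

```python
import time
from typing import Callable, List


def tokens_per_second(n_tokens: int, elapsed_seconds: float) -> float:
    """Throughput: generated tokens divided by wall-clock seconds."""
    if elapsed_seconds <= 0:
        raise ValueError("elapsed_seconds must be positive")
    return n_tokens / elapsed_seconds


def benchmark(generate: Callable[[str], List[int]], prompt: str) -> float:
    """Time a single call to `generate` (hypothetical) and return tokens/second."""
    start = time.perf_counter()
    tokens = generate(prompt)  # assumed to return the generated token ids
    elapsed = time.perf_counter() - start
    return tokens_per_second(len(tokens), elapsed)
```

For example, 729 tokens generated in 50 seconds works out to 14.58 tokens per second. A single timed call is noisy, so in practice you would average several runs.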

Performance Analysis: Token Generation Speed Benchmarks

Token Generation Speed Benchmarks: NVIDIA RTX A6000 48GB and Llama3 70B

Let's dive into the numbers. The table below shows the token generation speed of Llama3 70B on the NVIDIA RTX A6000 48GB, measured in tokens per second. We've tested the model with two different quantization levels: Q4KM and F16.

Quantization is like a diet for LLMs. It's a technique used to reduce the model's size and memory footprint, making it more manageable for devices with less memory. For example, Q4KM (written Q4_K_M in llama.cpp) stores weights in roughly 4 bits each, which is far more compact but might sacrifice some accuracy. F16 is not really a quantized format: it keeps the weights as 16-bit floating-point numbers, which offers higher precision at several times the memory cost.
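The idea behind 4-bit quantization can be sketched in a few lines. This is a deliberately simplified illustration that uses one shared scale for all weights; it is not the actual Q4KM format, which is more elaborate. The function names are made up for this example.

```python
from typing import List, Tuple


def quantize_4bit(weights: List[float]) -> Tuple[List[int], float]:
    """Map floats to integer codes in [-8, 7] using a single shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    codes = [max(-8, min(7, round(w / scale))) for w in weights]
    return codes, scale


def dequantize(codes: List[int], scale: float) -> List[float]:
    """Recover approximate floats from the 4-bit codes."""
    return [c * scale for c in codes]


weights = [0.70, -0.42, 0.13, 0.02]
codes, scale = quantize_4bit(weights)   # codes == [7, -4, 1, 0]
restored = dequantize(codes, scale)     # close to, but not equal to, the originals

for original, approx in zip(weights, restored):
    print(f"{original:+.2f} -> {approx:+.2f}")
```

Each weight now needs only 4 bits plus a share of the scale, and the printed pairs show the small rounding error that the "might sacrifice some accuracy" caveat refers to.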

| Model | Quantization | Tokens/Second |
|---|---|---|
| Llama3 70B | Q4KM | 14.58 |
| Llama3 70B | F16 | N/A |

What do these numbers tell us?

- Llama3 70B is a pretty hefty model, and it's pushing the limits of the RTX A6000 48GB.

- Q4KM quantization is what makes this run possible at all. At 14.58 tokens per second, it's still not blazing fast. The lower precision also means the model might not be as accurate as the full F16 weights.

- F16 performance data is not available for Llama3 70B on this specific device. The likely reason is memory: 70 billion parameters at 2 bytes each come to roughly 140GB for the weights alone, far more than the GPU's 48GB.
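A rough weight-memory estimate suggests why the F16 row is empty. The sketch below uses nominal parameter counts (70 and 8 billion) and counts weights only, ignoring the KV cache and activations. The 4.85 bits-per-weight figure for the 4-bit format is an assumption for illustration, not a number from this article.

```python
def weight_memory_gb(params_billions: float, bits_per_weight: float) -> float:
    """Approximate memory for the weights alone, in GB (1 GB = 1e9 bytes)."""
    return params_billions * bits_per_weight / 8


VRAM_GB = 48  # RTX A6000

configs = [
    ("Llama3 70B, F16", 70, 16),
    ("Llama3 70B, 4-bit", 70, 4.85),  # assumed average bits per weight
    ("Llama3 8B, F16", 8, 16),
]

for name, params, bits in configs:
    need = weight_memory_gb(params, bits)
    verdict = "fits" if need < VRAM_GB else "does not fit"
    print(f"{name}: about {need:.1f} GB of weights, {verdict} in {VRAM_GB} GB")
```

Under these assumptions the 70B model needs about 140 GB in F16 but only about 42 GB at 4 bits, which lines up with the table: a Q4KM result exists and an F16 result does not.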

Performance Analysis: Model and Device Comparison

Token Generation Speed Benchmarks: Apple M1 and Llama2 7B

Let's compare the performance of Llama3 70B on the RTX A6000 48GB with other models and devices. Since the focus here is the RTX A6000 48GB, we'll look at just one other data point: Llama2 7B, a smaller but still impressive model, on the Apple M1 processor.

| Model | Device | Quantization | Tokens/Second |
|---|---|---|---|
| Llama2 7B | Apple M1 | Q4KM | 540 |

What do these numbers tell us?

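- In this comparison, Llama2 7B on Apple M1 (540 tokens/second) is about 37 times faster than Llama3 70B on RTX A6000 48GB (14.58 tokens/second). Keep in mind that both the model and the device differ between the two results, so the gap reflects model size and device capabilities together rather than either one alone.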

- It's like trying to fit a rhinoceros in a closet versus a cat. While the closet might be big enough for the cat, it's definitely not going to be comfortable for the rhinoceros!

Token Generation Speed Benchmarks: Llama3 8B on RTX A6000 48GB

Let's also take a look at the performance of Llama3 8B on the same RTX A6000 48GB, to understand the effect of model size.

| Model | Quantization | Tokens/Second |
|---|---|---|
| Llama3 8B | Q4KM | 102.22 |
| Llama3 8B | F16 | 40.25 |

What do these numbers tell us?

- The smaller model size of Llama3 8B allows for significantly faster processing: 102.22 tokens/second with Q4KM, about 7 times the 14.58 tokens/second of Llama3 70B at the same quantization.

- Unlike the 70B model, Llama3 8B also runs in F16 on the RTX A6000 48GB, at 40.25 tokens/second. That is about 2.5 times slower than Q4KM, so higher precision is available for smaller models on this GPU, but it comes at a clear cost in speed.
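The effect of model size and of precision can be read straight off the RTX A6000 48GB tables. The short sketch below only divides the published figures; the dictionary layout and function name are illustrative.

```python
# Tokens/second on the RTX A6000 48GB, copied from the tables in this article.
results = {
    ("Llama3 70B", "Q4KM"): 14.58,
    ("Llama3 8B", "Q4KM"): 102.22,
    ("Llama3 8B", "F16"): 40.25,
}


def speedup(faster: tuple, slower: tuple) -> float:
    """How many times faster one benchmarked configuration is than another."""
    return results[faster] / results[slower]


size_effect = speedup(("Llama3 8B", "Q4KM"), ("Llama3 70B", "Q4KM"))
precision_effect = speedup(("Llama3 8B", "Q4KM"), ("Llama3 8B", "F16"))

print(f"8B vs 70B, both Q4KM: {size_effect:.2f}x")       # 7.01x
print(f"Q4KM vs F16, both 8B: {precision_effect:.2f}x")  # 2.54x
```

On this GPU, going from 70B to 8B buys roughly a 7x speedup, and going from F16 to Q4KM on the 8B model buys roughly 2.5x.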

Practical Recommendations: Use Cases and Workarounds

Choosing The Right Model and Device

So, how do you decide what model and device are right for your project? It depends on what you're trying to achieve:

- Resource Constraints: If you have limited resources (CPU, GPU, memory), smaller models like Llama2 7B or Llama3 8B are your best bet. You can also explore quantization techniques to reduce memory requirements and improve efficiency.

- Performance Requirements: If you need very fast token generation speeds, a high-end GPU like the RTX A6000 48GB is ideal. However, you might still need to compromise on model size or use more aggressive quantization (fewer bits per weight).

- Accuracy Concerns: For tasks that require high accuracy, a larger model or higher-precision weights (like F16) is recommended, as long as the result still fits in your GPU's memory.
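One way to turn these trade-offs into something tangible is to convert tokens per second into waiting time. The sketch below uses the RTX A6000 48GB figures from this article and a hypothetical 500-token response; it ignores the time spent processing the prompt, so real latency would be somewhat higher.

```python
def generation_seconds(n_tokens: int, tokens_per_second: float) -> float:
    """Time to generate n_tokens at a steady throughput."""
    return n_tokens / tokens_per_second


RESPONSE_TOKENS = 500  # hypothetical response length

benchmarks = [
    ("Llama3 70B Q4KM", 14.58),
    ("Llama3 8B F16", 40.25),
    ("Llama3 8B Q4KM", 102.22),
]

for label, tps in benchmarks:
    seconds = generation_seconds(RESPONSE_TOKENS, tps)
    print(f"{label}: about {seconds:.1f} s for {RESPONSE_TOKENS} tokens")
```

With these numbers the same response takes about 34 seconds on the 70B model and about 5 seconds on the 8B model at Q4KM, which is often the deciding factor for interactive use.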

Quantization and Model Size

As the model size increases, the need for efficient quantization becomes even more critical. Think of it like packing a suitcase for a trip. If you're only going for a weekend, you can pack everything you need without worrying about the weight limit. But if you're going on a longer trip, you need to be more strategic with your packing to avoid exceeding the weight limit.

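Q4KM is a great option when memory is the limiting factor and a small loss of accuracy is acceptable. F16 (or even F32 for maximum precision) preserves more of the original weights but requires far more memory and compute. As the results above show, Llama3 70B has no F16 result on the 48GB RTX A6000, so for the largest models quantization may be the only way to run locally at all.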

Workarounds: Model Pruning and Offloading

If you're stuck with limited resources, don't despair! Some workarounds can help:

- Model Pruning: Like a gardener trimming a bush, model pruning removes unnecessary connections in the model to reduce its size and complexity. It's like clearing out the dead weight in your suitcase.

- Offloading: For computationally intensive tasks, you can offload some of the processing to a remote server or cloud service. This can free up resources on your local device and allow you to run larger models.
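As an illustration of the pruning idea, here is a minimal sketch of magnitude pruning, one common variant in which the weights with the smallest absolute values are set to zero. The article does not say which pruning method it has in mind, so treat both the choice of method and the function name as assumptions.

```python
from typing import List


def magnitude_prune(weights: List[float], sparsity: float) -> List[float]:
    """Zero out the given fraction of weights with the smallest magnitude."""
    if not 0.0 <= sparsity <= 1.0:
        raise ValueError("sparsity must be between 0 and 1")
    n_prune = int(len(weights) * sparsity)
    # Indices ordered by absolute value, smallest first.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    to_zero = set(order[:n_prune])
    return [0.0 if i in to_zero else w for i, w in enumerate(weights)]


weights = [0.8, -0.05, 0.3, 0.01, -0.6, 0.02]
pruned = magnitude_prune(weights, sparsity=0.5)
print(pruned)  # [0.8, 0.0, 0.3, 0.0, -0.6, 0.0]
```

Zeroed weights only save memory or time if the model is then stored and executed in a format that takes advantage of the zeros, and pruned models usually need their accuracy re-checked.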

FAQ

Q: What is Llama3? A: Llama3 is a state-of-the-art large language model (LLM) developed by Meta AI. It's known for its impressive performance on various language tasks, including text generation, translation, and coding.

Q: What is quantization? A: Quantization is a technique used to reduce the size and memory footprint of models by representing the model's parameters with fewer bits. This can make models more manageable for smaller devices and improve their performance.

Q: What is the difference between Q4KM and F16? A: Q4KM uses a 4-bit quantization scheme, which is more compact but might sacrifice some accuracy. F16 stores the weights as 16-bit floating-point numbers, which offers higher precision but needs several times more memory.

Q: Can I run Llama3 70B on my personal computer? A: It depends on your computer's specifications. Running a model like Llama3 70B requires a powerful GPU and a substantial amount of memory. A typical gaming PC might struggle, but a high-end workstation might be suitable. In the benchmarks above, a 48GB RTX A6000 ran the model with Q4KM quantization, while no F16 result was available.

Q: What other LLMs can I run locally? A: There are many other LLMs that can be run locally, including:

- Llama2: A family of models from Meta AI, known for their impressive performance and accessibility.

- StableLM: Developed by Stability AI, this model series focuses on text generation and creative writing.

- GPT-Neo: A set of open-source models trained by EleutherAI, offering a range of sizes and capabilities.

Q: How can I learn more about local LLM deployments? A: There are many resources available online, including:

- Hugging Face: A fantastic community for machine learning models and resources.

- The LLM repository at GitHub: A collection of open-source LLMs and tools.

- YouTube tutorials: Many tutorials and explanations are available online that can guide you through deploying LLMs locally.

Keywords

Llama3 70B, NVIDIA RTX A6000 48GB, Token Generation Speed, GPU Benchmarks, LLM, Large Language Models, Performance Analysis, Quantization, Q4KM, F16, Model Size, Device Capabilities, Practical Recommendations, Use Cases, Workarounds, Model Pruning, Offloading, Local Deployment, Hugging Face, GitHub, YouTube tutorials