Can I Run Llama3 70B on NVIDIA RTX 5000 Ada 32GB? Token Generation Speed Benchmarks

Introduction

The world of large language models (LLMs) is exploding with exciting possibilities, and running these models locally is becoming increasingly popular. But can your hardware handle the demands of these massive models? Today, we're diving deep into the performance of the Llama3 70B model on the NVIDIA RTX 5000 Ada 32GB GPU. We'll benchmark token generation speeds, explore different quantization methods, and discuss the practical implications for developers and users. So buckle up, it's time to get geeky!

Performance Analysis: Token Generation Speed Benchmarks

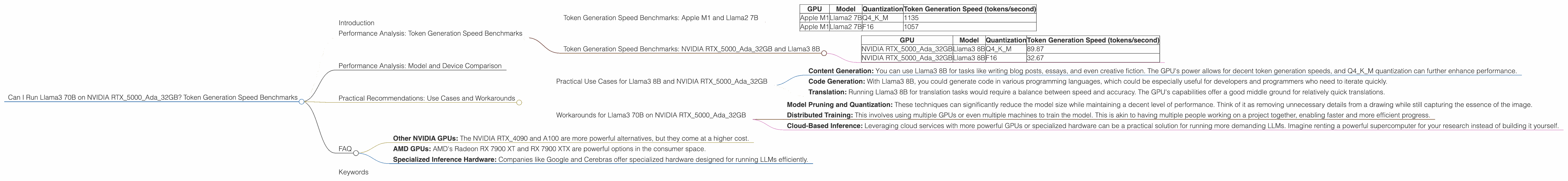

Token Generation Speed Benchmarks: Apple M1 and Llama2 7B

Before focusing entirely on our main subject, let's set the stage with a comparison to a more "standard" setup, the Apple M1 and Llama2 7B. This helps us understand the performance landscape and serves as a baseline for comparison.

| Device | Model | Quantization | Token Generation Speed (tokens/second) |
|---|---|---|---|
| Apple M1 | Llama2 7B | Q4_K_M | 1135 |
| Apple M1 | Llama2 7B | F16 | 1057 |

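As you can see, the Apple M1 delivers a respectable token generation rate for Llama2 7B, which makes it suitable for many practical applications. Keep in mind that these figures come from a different model and a different setup than the RTX 5000 Ada results below, so the two tables aren't directly comparable, and the M1 is generally less powerful than higher-end GPUs like the RTX 5000 Ada 32GB.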

Token Generation Speed Benchmarks: NVIDIA RTX 5000 Ada 32GB and Llama3 8B

Now, let's focus on the NVIDIA RTX 5000 Ada 32GB and its performance with the Llama3 8B model. We'll analyze the results based on quantization. Quantization is a technique that reduces the size of the model by using fewer bits to represent each weight. This can lead to faster inference times and lower memory usage.

| GPU | Model | Quantization | Token Generation Speed (tokens/second) |
|---|---|---|---|
| NVIDIA RTX 5000 Ada 32GB | Llama3 8B | Q4_K_M | 89.87 |
| NVIDIA RTX 5000 Ada 32GB | Llama3 8B | F16 | 32.67 |

These numbers show that the RTX 5000 Ada 32GB can handle Llama3 8B with decent performance. However, the difference between the two formats is significant: Q4_K_M generates tokens about 2.75x faster than F16 (89.87 vs. 32.67 tokens/second). This highlights why choosing the right quantization method is crucial for optimizing performance.
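To make the gap concrete, here is a small sketch that computes the speedup from the two figures in the table above and translates each rate into wall-clock time. The 500-token reply length is an arbitrary assumption chosen for illustration, and the calculation assumes a steady generation rate.

```python
# Token generation speeds (tokens/second) copied from the table above.
speeds = {"Q4_K_M": 89.87, "F16": 32.67}


def speedup(fast: float, slow: float) -> float:
    """Return how many times faster `fast` is than `slow`."""
    return fast / slow


def seconds_for(tokens: int, tokens_per_second: float) -> float:
    """Return the time needed to generate `tokens` at a steady rate."""
    return tokens / tokens_per_second


ratio = speedup(speeds["Q4_K_M"], speeds["F16"])
print(f"Q4_K_M is {ratio:.2f}x faster than F16")

# 500 tokens is a hypothetical reply length, not a measured workload.
for name, tps in speeds.items():
    print(f"{name}: {seconds_for(500, tps):.1f} s for a 500-token reply")
```

At these rates, a reply of that length takes roughly 5.6 seconds with Q4_K_M and roughly 15.3 seconds with F16.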

Performance Analysis: Model and Device Comparison

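Unfortunately, we have no data for the performance of Llama3 70B on the NVIDIA RTX 5000 Ada 32GB. The reason is memory: 70 billion weights at 16 bits each take roughly 140 GB, and even a 4-bit quantization such as Q4_K_M needs around 40 GB for the weights alone, which is more than the card's 32 GB of VRAM. That makes running the model entirely on a single RTX 5000 Ada 32GB impractical.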

To put this in perspective, imagine trying to fit a giant elephant into a small car. You might get the elephant's head and trunk in, but the rest won't fit. Similarly, part of Llama3 70B can sit in the GPU's memory while the rest spills over into system RAM, so running it on a single GPU may be possible, but it will be resource-intensive and slow.
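A rough back-of-the-envelope estimate shows how big the elephant is. The sketch below approximates the memory needed for the weights alone, ignoring the KV cache and activations. The parameter counts are rounded to the nominal 8 and 70 billion, and the 4.85 bits per weight used for Q4_K_M is an assumed average, not a figure from the benchmarks.

```python
GB = 1e9  # decimal gigabytes, matching how VRAM sizes are advertised


def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone (no KV cache, no activations)."""
    return n_params * bits_per_weight / 8 / GB


VRAM_GB = 32.0  # RTX 5000 Ada 32GB

# Assumptions: 16 bits per weight for F16; ~4.85 bits is an assumed
# average for Q4_K_M, which mixes several bit widths internally.
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.85}

# Nominal (rounded) parameter counts.
PARAMS = {"Llama3 8B": 8e9, "Llama3 70B": 70e9}


def fits(model: str, fmt: str) -> bool:
    """Return True if the weights alone are smaller than the card's VRAM."""
    return weight_memory_gb(PARAMS[model], BITS_PER_WEIGHT[fmt]) < VRAM_GB


for model in PARAMS:
    for fmt in BITS_PER_WEIGHT:
        size = weight_memory_gb(PARAMS[model], BITS_PER_WEIGHT[fmt])
        verdict = "fits" if fits(model, fmt) else "does NOT fit"
        print(f"{model} {fmt}: ~{size:.1f} GB, {verdict} in {VRAM_GB:.0f} GB")
```

Under these assumptions, both Llama3 8B variants fit comfortably, while Llama3 70B exceeds 32 GB in either format before any working memory is accounted for.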

Practical Recommendations: Use Cases and Workarounds

Practical Use Cases for Llama3 8B and NVIDIA RTX 5000 Ada 32GB

Even without data for Llama3 70B, you can use the provided benchmarks to infer possible use cases for Llama3 8B and the RTX 5000 Ada 32GB.

- Content Generation: You can use Llama3 8B for tasks like writing blog posts, essays, and even creative fiction. The GPU's power allows for decent token generation speeds, and Q4_K_M quantization can further enhance performance.

- Code Generation: With Llama3 8B, you could generate code in various programming languages, which could be especially useful for developers and programmers who need to iterate quickly.

- Translation: Running Llama3 8B for translation tasks would require a balance between speed and accuracy. The GPU's capabilities offer a good middle ground for relatively quick translations.

Workarounds for Llama3 70B on NVIDIA RTX 5000 Ada 32GB

While directly running Llama3 70B on the RTX 5000 Ada 32GB might be difficult or infeasible, there are strategies to achieve similar results:

- Model Pruning and Quantization: These techniques can significantly reduce the model size while maintaining a decent level of performance. Think of it as removing unnecessary details from a drawing while still capturing the essence of the image.

- Multi-GPU Inference: This involves splitting the model across multiple GPUs or even multiple machines, so that each device holds only part of the weights. This is akin to having multiple people working on a project together, each handling their own share of the work.

- Cloud-Based Inference: Leveraging cloud services with more powerful GPUs or specialized hardware can be a practical solution for running more demanding LLMs. Imagine renting a powerful supercomputer for your research instead of building it yourself.
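For the multi-GPU route, a simple way to reason about hardware needs is to count how many layers fit on each card. The sketch below is a rough planning aid, not a real loader. It assumes Llama3 70B has 80 transformer layers of equal size, reserves a hypothetical 4 GB per GPU for the KV cache and runtime overhead, and uses estimated weight sizes of 42.4 GB (Q4_K_M) and 140 GB (F16). The `split_layers` helper is illustrative only.

```python
import math


def split_layers(total_layers: int, model_gb: float,
                 vram_gb: float, reserve_gb: float) -> list[int]:
    """Greedily assign layers to identical GPUs; return layers per GPU."""
    per_layer_gb = model_gb / total_layers  # assumes equal-sized layers
    usable_gb = vram_gb - reserve_gb        # headroom for cache and overhead
    per_gpu = math.floor(usable_gb / per_layer_gb)
    if per_gpu < 1:
        raise ValueError("a single layer does not fit in the usable VRAM")

    counts = []
    remaining = total_layers
    while remaining > 0:
        take = min(per_gpu, remaining)
        counts.append(take)
        remaining -= take
    return counts


# All sizes below are estimates, not measurements.
q4_plan = split_layers(total_layers=80, model_gb=42.4, vram_gb=32.0, reserve_gb=4.0)
f16_plan = split_layers(total_layers=80, model_gb=140.0, vram_gb=32.0, reserve_gb=4.0)

print(f"Q4_K_M: {len(q4_plan)} GPUs, layers per GPU = {q4_plan}")
print(f"F16:    {len(f16_plan)} GPUs, layers per GPU = {f16_plan}")
```

Under these assumptions, the quantized model would need two 32 GB cards and the F16 model five, which is why a single RTX 5000 Ada 32GB falls short.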

FAQ

Q: What's the difference between "Q4_K_M" and "F16"?

A: Think of "Q4_K_M" as a more compact way of storing the model's data. It is a 4-bit quantization format that uses far fewer bits for each weight, making the model smaller and faster to load but potentially sacrificing some accuracy. "F16" is not a quantized format at all: it stores each weight as a 16-bit floating-point number, resulting in higher precision but requiring more memory and bandwidth.
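The idea behind low-bit storage can be shown with a toy example. The sketch below is a deliberately simplified, symmetric 4-bit block quantizer written for illustration. It is not the actual Q4_K_M scheme, which is more elaborate, and the sample weights are made up.

```python
def quantize_block(values: list[float], bits: int = 4) -> tuple[list[int], float]:
    """Map floats to small signed integers that share one scale per block."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit signed values
    scale = max(abs(v) for v in values) / qmax or 1.0  # avoid a zero scale
    quantized = [round(v / scale) for v in values]
    return quantized, scale


def dequantize_block(quantized: list[int], scale: float) -> list[float]:
    """Recover approximate floats from the integers and the shared scale."""
    return [q * scale for q in quantized]


# Made-up weights standing in for one block of a real tensor.
weights = [0.12, -0.53, 0.98, -0.07, 0.31, -0.88, 0.44, 0.02]

q, scale = quantize_block(weights)
restored = dequantize_block(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("quantized integers:", q)
print("shared scale:", scale)
print("largest rounding error:", max_error)
```

Each weight now needs only 4 bits plus a share of the per-block scale, at the cost of a rounding error of at most half the scale. That is the size-for-accuracy trade described in the answer above.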

Q: Can I run Llama3 70B on my laptop with an RTX 5000 Ada 32GB?

A: It's unlikely. Even with 32 GB of VRAM, Llama3 70B's weights don't fit on the GPU at F16 or Q4_K_M, so part of the model would have to run from system RAM. You'll need a powerful machine with plenty of RAM and cooling to handle the workload, and even then, the performance will be limited.

Q: Is it better to have a faster CPU or GPU for running LLMs?

A: The GPU is the main workhorse for LLMs, responsible for the heavy calculations involved in generating text. A faster CPU is still important for tasks like loading the model and managing data, but the GPU's performance will have a greater impact on the overall speed.

Q: What are some alternatives to NVIDIA RTX 5000 Ada 32GB GPUs for running LLMs?

A: There are several options:

- Other NVIDIA GPUs: The NVIDIA RTX 4090 is a consumer card with 24 GB of VRAM, and the A100 is a data-center GPU with 40 GB or 80 GB of memory that comes at a considerably higher cost.

- AMD GPUs: AMD's Radeon RX 7900 XT and RX 7900 XTX are powerful options in the consumer space.

- Specialized Inference Hardware: Companies like Google and Cerebras offer specialized hardware designed for running LLMs efficiently.

Keywords

LLM, Llama3, Llama3 70B, Llama3 8B, Token Generation Speed, NVIDIA RTX 5000 Ada 32GB, Quantization, Q4_K_M, F16, Performance Benchmarks, Inference, GPU, GPU Performance, Model Size, Model Pruning, Distributed Training, Cloud-Based Inference, Practical Applications, Use Cases, Workarounds, Developers, Geeks, AI, Machine Learning