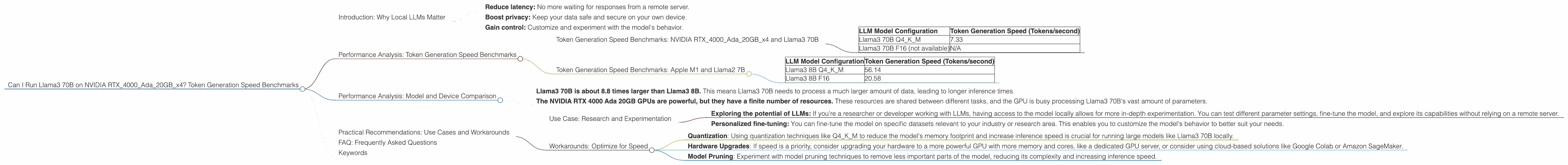

Can I Run Llama3 70B on NVIDIA RTX 4000 Ada 20GB x4? Token Generation Speed Benchmarks


Are you ready to unleash the power of Llama3 70B, the behemoth of language models, on your local machine? Hold your horses, techie! Before you dive headfirst into the fascinating world of local LLM inference, let's see if your hardware can handle the beast.

This deep dive explores the performance of Llama3 70B, the larger of the two models in the initial Llama 3 release, running on a quartet of NVIDIA RTX 4000 Ada 20GB GPUs. We'll analyze token generation speed benchmarks, compare Llama3 70B's performance with its smaller cousin Llama3 8B, and offer practical recommendations for use cases and potential workarounds. Get ready for some serious number crunching!

Introduction: Why Local LLMs Matter

The rise of large language models (LLMs) like Llama3 70B has revolutionized the field of artificial intelligence. These powerful models can generate human-like text, translate languages, write different kinds of creative content, and answer your questions in an informative way. But what if you want to run these models locally, on your own computer? This opens up a whole new world of possibilities, enabling you to:

- Reduce latency: No more waiting for responses from a remote server.

- Boost privacy: Keep your data safe and secure on your own device.

- Gain control: Customize and experiment with the model's behavior.

However, running LLMs locally requires significant computational resources. We'll be looking at the specific case of Llama3 70B, a model with roughly 70 billion parameters. Think of parameters as the model's learned knowledge: every one of them has to be stored in memory and read while generating text, so larger models need more GPU memory and more computation to run.

Performance Analysis: Token Generation Speed Benchmarks

Let's get down to the nitty-gritty and see how well Llama3 70B performs on a setup with four NVIDIA RTX 4000 Ada 20GB GPUs (80 GB of VRAM combined):

Token Generation Speed Benchmarks: NVIDIA RTX 4000 Ada 20GB x4 and Llama3 70B

| LLM Model Configuration | Token Generation Speed (tokens/second) |
|---|---|
| Llama3 70B Q4_K_M | 7.33 |
| Llama3 70B F16 | N/A (not available) |

Key takeaways:

As we can see, Llama3 70B quantized to Q4_K_M generates 7.33 tokens per second on the RTX 4000 Ada 20GB x4 setup. That is far slower than the smaller Llama3 8B model manages on the same hardware.

No F16 (half-precision floating point) result is available for Llama3 70B on this hardware: at 2 bytes per parameter, the weights alone take about 140 GB, far more than the 80 GB of combined VRAM across the four cards. You'll therefore need a quantized format such as Q4_K_M, which shrinks the model's memory footprint at the cost of a possible small drop in accuracy.
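The F16 gap is easy to check with back-of-the-envelope arithmetic. This Python sketch estimates weight memory only. The nominal parameter counts (70e9 and 8e9) and an average of about 4.85 bits per weight for Q4_K_M are assumptions, and KV cache, activations, and runtime overhead are ignored, so real requirements are somewhat higher.

```python
# Rough weight-memory estimate for the configurations discussed above.
# ASSUMPTIONS: nominal parameter counts (70e9, 8e9), ~4.85 bits/weight on
# average for Q4_K_M, 1 GB = 1e9 bytes; KV cache and overhead are ignored.

TOTAL_VRAM_GB = 4 * 20  # four RTX 4000 Ada cards, 20 GB each

BITS_PER_WEIGHT = {
    "F16": 16.0,
    "Q4_K_M": 4.85,  # approximate average; actual model files vary slightly
}


def weights_gb(num_params: float, bits_per_weight: float) -> float:
    """Memory needed just to hold the weights, in GB."""
    return num_params * bits_per_weight / 8 / 1e9


for model, params in (("Llama3 70B", 70e9), ("Llama3 8B", 8e9)):
    for fmt, bits in BITS_PER_WEIGHT.items():
        size = weights_gb(params, bits)
        # Fitting under the combined total is necessary, not sufficient:
        # the weights must also be divided across four separate 20 GB cards.
        verdict = "fits" if size < TOTAL_VRAM_GB else "does NOT fit"
        print(f"{model} {fmt}: ~{size:.1f} GB of weights, {verdict} in {TOTAL_VRAM_GB} GB")
```

Under these assumptions Llama3 70B needs about 140 GB at F16 but only about 42 GB at Q4_K_M, which is why the quantized version is the one that runs on this setup.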

Let's see how Llama3 70B stacks up against its smaller brother, Llama3 8B:

Token Generation Speed Benchmarks: NVIDIA RTX 4000 Ada 20GB x4 and Llama3 8B

| LLM Model Configuration | Token Generation Speed (tokens/second) |
|---|---|
| Llama3 8B Q4_K_M | 56.14 |
| Llama3 8B F16 | 20.58 |

Key takeaways:

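Llama3 8B is much faster than Llama3 70B. At Q4_K_M it generates 56.14 tokens per second versus 7.33, about 7.7 times faster, and even at F16 (20.58 tokens per second) it is roughly 2.8 times faster than the quantized Llama3 70B. With far fewer parameters, Llama3 8B needs much less memory and computation for each generated token.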

The gap between the two models highlights the trade-off between model size and speed: Llama3 70B's sheer size makes every token much more expensive to generate.
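To put the two tables side by side, this short Python sketch converts the published throughput figures into per-token latency and speedups relative to Llama3 70B Q4_K_M. It only does arithmetic on the numbers reported in the tables; the dictionary layout and variable names are illustrative.

```python
# Measured token generation speeds (tokens/second) from the benchmark tables.
speeds = {
    ("Llama3 70B", "Q4_K_M"): 7.33,
    ("Llama3 8B", "Q4_K_M"): 56.14,
    ("Llama3 8B", "F16"): 20.58,
}

baseline = speeds[("Llama3 70B", "Q4_K_M")]

for (model, fmt), tps in speeds.items():
    ms_per_token = 1000 / tps   # time to generate one token
    speedup = tps / baseline    # relative to Llama3 70B at Q4_K_M
    print(f"{model} {fmt}: {tps:6.2f} tok/s, {ms_per_token:6.1f} ms/token, {speedup:4.2f}x")
```

Llama3 70B at Q4_K_M works out to roughly 136 ms per generated token, versus about 18 ms for Llama3 8B at Q4_K_M.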

Performance Analysis: Model and Device Comparison

Think of it like this. If Llama3 8B is a nimble sprinter, then Llama3 70B is a heavyweight Olympic lifter. Both are powerful in their way, but their strengths lie in different areas.

Let's break down the numbers to understand the performance gap:

- Llama3 70B has about 8.8 times as many parameters as Llama3 8B. Because every generated token requires reading all of the model's weights from GPU memory, Llama3 70B has to move far more data per token, which means longer inference times.

- The NVIDIA RTX 4000 Ada 20GB GPUs are capable, but their resources are finite: 20 GB of memory per card (80 GB in total) and a fixed memory bandwidth per card. Holding and streaming tens of gigabytes of Llama3 70B weights for every token keeps them busy.
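The "more data per token" point can be turned into a rough speed ceiling. The Python sketch below assumes token generation is memory-bandwidth-bound, so each token costs one full read of the weights. Several inputs are assumptions not stated in the benchmark: about 360 GB/s of memory bandwidth per RTX 4000 Ada card (an approximate spec-sheet figure), about 4.85 bits per weight for Q4_K_M, and a layer-wise split across the cards (as llama.cpp does by default, for instance), in which the GPUs take turns on each token, so their bandwidth does not add up for a single sequence.

```python
# Back-of-the-envelope decode-speed ceiling under a bandwidth-bound model.
# ASSUMPTIONS: ~360 GB/s per card, ~4.85 bits/weight for Q4_K_M, and a
# layer-wise split where GPUs work one after another on each token.

GPU_BANDWIDTH_GBPS = 360.0  # per card, approximate


def weights_gb(num_params: float, bits_per_weight: float) -> float:
    """Size of the weights in GB (1 GB = 1e9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


def max_tokens_per_second(num_params: float, bits_per_weight: float,
                          bandwidth_gbps: float = GPU_BANDWIDTH_GBPS) -> float:
    """Upper bound on tokens/s if reading the weights were the only cost."""
    return bandwidth_gbps / weights_gb(num_params, bits_per_weight)


# (label, parameter count, bits per weight, measured tokens/s from the tables)
cases = [
    ("Llama3 70B Q4_K_M", 70e9, 4.85, 7.33),
    ("Llama3 8B Q4_K_M", 8e9, 4.85, 56.14),
    ("Llama3 8B F16", 8e9, 16.0, 20.58),
]

for name, params, bits, measured in cases:
    ceiling = max_tokens_per_second(params, bits)
    print(f"{name}: ceiling ~{ceiling:.1f} tok/s, measured {measured} tok/s "
          f"({measured / ceiling:.0%} of ceiling)")
```

Under these assumptions the ceiling for Llama3 70B at Q4_K_M is roughly 8.5 tokens per second, and every measured speed in the tables sits below its estimated ceiling.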

In short, smaller models like Llama3 8B are a more comfortable fit for an NVIDIA RTX 4000 Ada 20GB x4 setup. With 80 GB of combined memory, Llama3 70B only fits when quantized, while Llama3 8B fits even in F16.

Practical Recommendations: Use Cases and Workarounds

Now, you might be thinking, "So, what's the point of running Llama3 70B locally if it's so slow?" Don't despair! There are still some compelling use cases for running large models locally.

Use Case: Research and Experimentation

- Exploring the potential of LLMs: If you're a researcher or developer working with LLMs, having access to the model locally allows for more in-depth experimentation. You can test different parameter settings, fine-tune the model, and explore its capabilities without relying on a remote server.

- Personalized fine-tuning: You can fine-tune the model on specific datasets relevant to your industry or research area. This enables you to customize the model's behavior to better suit your needs.

Workarounds: Optimize for Speed

- Quantization: Formats like Q4_K_M reduce the model's memory footprint and increase inference speed. On this setup, quantization is what makes running Llama3 70B locally possible at all.

- Hardware Upgrades: If speed is a priority, consider GPUs with more memory, higher memory bandwidth, and more cores, a dedicated GPU server, or cloud-based solutions like Google Colab or Amazon SageMaker.

- Model Pruning: Experiment with model pruning techniques to remove less important parts of the model, reducing its complexity and increasing inference speed.
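Pruning comes in many flavors; the simplest to illustrate is unstructured magnitude pruning, sketched here in plain Python. This is a generic technique chosen for illustration, not a method used in the benchmark, and `magnitude_prune` is a hypothetical helper.

```python
def magnitude_prune(weights, sparsity):
    """Return a copy of `weights` with the smallest-|w| fraction set to 0.0."""
    if not 0.0 <= sparsity <= 1.0:
        raise ValueError("sparsity must be between 0 and 1")
    n_prune = int(len(weights) * sparsity)
    # Indices ordered from smallest to largest absolute value.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    pruned = list(weights)
    for i in order[:n_prune]:
        pruned[i] = 0.0  # treat the least important weights as removed
    return pruned


layer = [0.8, -0.05, 0.3, -0.9, 0.01, 0.2]
print(magnitude_prune(layer, 0.5))
```

For the sample layer this prints `[0.8, 0.0, 0.3, -0.9, 0.0, 0.0]`. Zeroed weights only save time if the inference runtime exploits the sparsity, or if pruning removes whole structures such as attention heads or layers; otherwise the dense matrix math costs the same.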

FAQ: Frequently Asked Questions

Q: Can I run Llama3 70B on my gaming PC?

A: It depends! You need enough combined GPU memory to hold the quantized weights: more than 40 GB for Llama3 70B at Q4_K_M, which is why the tests above use four 20 GB NVIDIA RTX 4000 Ada cards (workstation GPUs rather than gaming cards). Even then, be prepared for a performance hit, especially if you're running other demanding applications.

Q: What's quantization?

A: Quantization is a technique that reduces the size of the model by using fewer bits to represent each parameter, for example roughly 4 to 5 bits on average with Q4_K_M instead of 16 with F16. Imagine saving an image with fewer colors: the picture is still recognizable but takes far less space. In the same way, quantization reduces the model's memory footprint, making it more efficient and faster to run, sometimes at a small cost in accuracy.
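To make the idea concrete, here is a deliberately simplified Python sketch of symmetric 4-bit block quantization: each block of weights shares one floating-point scale, and each weight is stored as a small integer. It is in the same spirit as llama.cpp's block-wise formats but is not the actual Q4_K_M algorithm, which uses a more elaborate super-block layout; the function names are hypothetical.

```python
def quantize_block_4bit(block):
    """Map floats to integers in [-7, 7] with one shared scale per block."""
    max_abs = max(abs(w) for w in block)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    # Round to the nearest level; the clamp guards against float round-off.
    q = [max(-7, min(7, round(w / scale))) for w in block]
    return q, scale


def dequantize_block(q, scale):
    """Recover approximate float weights from the integers and the scale."""
    return [v * scale for v in q]


block = [0.12, -0.50, 0.33, 0.07, -0.21, 0.49, -0.02, 0.30]
q, scale = quantize_block_4bit(block)
restored = dequantize_block(q, scale)

print("quantized:", q)
print("max abs error:", max(abs(w - r) for w, r in zip(block, restored)))
```

Each weight now needs only 4 bits plus a share of the block's single scale, and each restored value lands within half a scale step of the original: that small loss of detail is the accuracy cost quantization trades for memory.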

Q: Is it worth running a model locally?

A: It depends on your needs! For research and experimentation, local inference offers more control and flexibility. For production environments, you might opt for cloud-based solutions for scalability and reliability.
