Can I Run Llama3 70B on NVIDIA RTX 4000 Ada 20GB? Token Generation Speed Benchmarks

Introduction

The world of large language models (LLMs) is booming, with new models and advancements appearing at a breakneck pace. One of the most exciting developments is the emergence of local LLMs, allowing developers and enthusiasts to experiment with these powerful AI models on their own hardware. But can your computer handle the demands of these massive models, especially the behemoths like Llama3 70B?

This article looks at the performance of Llama3 70B on the NVIDIA RTX 4000 Ada 20GB, a mid-range workstation graphics card. We'll explore token generation speed benchmarks, compare performance across different model versions, and provide practical recommendations for use cases. This information will help you make informed decisions about deploying LLMs on your own machine.

Performance Analysis: Token Generation Speed Benchmarks

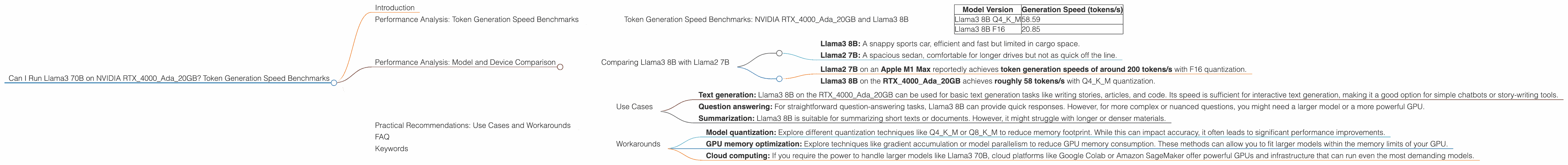

Token Generation Speed Benchmarks: NVIDIA RTX 4000 Ada 20GB and Llama3 8B

The NVIDIA RTX 4000 Ada 20GB is a capable graphics card, but its 20 GB of memory is too small for Llama3 70B. It performs well, however, with the smaller Llama3 8B model.

The following table presents the token generation speed benchmarks for Llama3 8B on the RTX 4000 Ada 20GB, measured in tokens per second (tokens/s).

| Model Version | Generation Speed (tokens/s) |
|---|---|
| Llama3 8B Q4_K_M | 58.59 |
| Llama3 8B F16 | 20.85 |

Important Notes:

- Q4_K_M: This quantization format stores the weights at roughly 4 bits each, significantly reducing the memory footprint at some cost in accuracy.

- F16: This is not a quantized format. The weights are stored as 16-bit floating-point numbers, which preserves accuracy but takes several times the memory of a 4-bit format.

Analysis:

As the table shows, Llama3 8B Q4_K_M generates tokens about 2.8 times faster than Llama3 8B F16. Token generation is largely limited by memory bandwidth, because the weights have to be read from VRAM for every generated token. The smaller Q4_K_M weights mean less data to move per token, at the cost of some accuracy. The F16 version may be slightly more accurate, but its lower speed can be a bottleneck for interactive applications.
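The link between weight size and speed can be sanity-checked with a back-of-the-envelope calculation. The sketch below computes the speed-up from the two measured values and compares each with a rough memory-bandwidth ceiling. The weight sizes and the 360 GB/s bandwidth are assumptions (approximate file sizes and the card's nominal spec), not part of the benchmark data.

```python
# Measured generation speeds from the table (tokens/s).
MEASURED = {"Q4_K_M": 58.59, "F16": 20.85}

# Assumptions, not benchmark data: approximate weight sizes in GB
# and the card's nominal memory bandwidth in GB/s.
APPROX_WEIGHTS_GB = {"Q4_K_M": 4.9, "F16": 16.1}
ASSUMED_BANDWIDTH_GBPS = 360.0


def bandwidth_ceiling(weights_gb: float, bandwidth_gbps: float) -> float:
    """Upper bound on tokens/s if every token reads all weights once."""
    return bandwidth_gbps / weights_gb


speedup = MEASURED["Q4_K_M"] / MEASURED["F16"]
print(f"Q4_K_M is {speedup:.2f}x faster than F16")

ceilings = {}
for name, measured in MEASURED.items():
    ceilings[name] = bandwidth_ceiling(APPROX_WEIGHTS_GB[name], ASSUMED_BANDWIDTH_GBPS)
    print(f"{name}: measured {measured:.1f} tokens/s, "
          f"rough ceiling {ceilings[name]:.0f} tokens/s")
```

Under these assumptions both measurements sit below their ceilings, which is consistent with generation being bandwidth-bound rather than compute-bound.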

Performance Analysis: Model and Device Comparison

Comparing Llama3 8B with Llama2 7B

It's always tempting to compare different models and devices. The RTX 4000 Ada 20GB is a decent option for Llama3 8B, but how does that result compare with another model running on another device?

We can compare Llama3 8B to Llama2 7B, a popular and widely tested model, to understand the performance differences. It's important to remember that these models differ in their architectures, training data, and intended use cases.

Analogies:

Think of these LLMs like different types of cars:

- Llama3 8B: A snappy sports car, efficient and fast but limited in cargo space.

- Llama2 7B: A spacious sedan, comfortable for longer drives but not as quick off the line.

Comparison:

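- Llama2 7B on an Apple M1 Max reportedly achieves token generation speeds of around 200 tokens/s at F16 precision. Treat this figure with caution: a rate that high at F16 is more typical of prompt processing than of token-by-token generation.

- Llama3 8B on the RTX 4000 Ada 20GB achieves 58.59 tokens/s with Q4_K_M quantization and 20.85 tokens/s at F16.

Conclusion:

On these reported figures, the Apple M1 Max appears to be the faster platform for Llama2 7B. The comparison is not like-for-like, however: it mixes two different models and possibly different measurement conditions, so it says little about the relative efficiency of the two devices.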

Important Note: These comparisons are based on publicly available benchmarks and may vary depending on specific factors like the model's implementation, hardware configuration, and workload.

Practical Recommendations: Use Cases and Workarounds

Use Cases

- Text generation: Llama3 8B on the RTX 4000 Ada 20GB can be used for basic text generation tasks like writing stories, articles, and code. At roughly 58 tokens/s with Q4_K_M, it is fast enough for interactive use, making it a good option for simple chatbots or story-writing tools.

- Question answering: For straightforward question-answering tasks, Llama3 8B can provide quick responses. However, for more complex or nuanced questions, you might need a larger model or a more powerful GPU.

- Summarization: Llama3 8B is suitable for summarizing short texts or documents. However, it might struggle with longer or denser materials.
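To put the benchmark speeds in practical terms, here is a minimal sketch that converts tokens per second into waiting time for a reply. The 300-token reply length is a hypothetical figure chosen for illustration, and the estimate ignores the time spent processing the prompt.

```python
def generation_time_s(num_tokens: int, tokens_per_s: float) -> float:
    """Seconds needed to generate num_tokens at a steady rate."""
    return num_tokens / tokens_per_s


REPLY_TOKENS = 300  # hypothetical reply length, for illustration only

q4_seconds = generation_time_s(REPLY_TOKENS, 58.59)   # Llama3 8B Q4_K_M
f16_seconds = generation_time_s(REPLY_TOKENS, 20.85)  # Llama3 8B F16

print(f"Q4_K_M: {q4_seconds:.1f} s for {REPLY_TOKENS} tokens")
print(f"F16:    {f16_seconds:.1f} s for {REPLY_TOKENS} tokens")
```

For a reply of that length, the difference is about 5 seconds versus about 14 seconds, which is noticeable in a chat interface.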

Workarounds

If you find that the RTX 4000 Ada 20GB is insufficient for running Llama3 70B or other larger models directly, consider these workarounds:

- Model quantization: Lower-precision formats such as Q4_K_M or Q8_0 reduce the memory footprint. This can cost some accuracy, but it often improves generation speed considerably. Note that a 4-bit Llama3 70B still exceeds 20 GB, so quantization alone is not enough for that model on this card.

- GPU memory optimization: Explore techniques like partial layer offloading (keeping only some layers in VRAM and running the rest on the CPU) or model parallelism across several GPUs. These methods can let you run models larger than a single GPU's memory, usually at the cost of speed.

- Cloud computing: If you require the power to handle larger models like Llama3 70B, cloud platforms like Google Colab or Amazon SageMaker offer powerful GPUs and infrastructure that can run even the most demanding models.
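One way to run a model that exceeds VRAM locally is to keep only part of it on the GPU and run the rest on the CPU, which llama.cpp exposes through its `--n-gpu-layers` option. The sketch below estimates how many layers might fit. The 42.5 GB model size, the 2 GB reserve for the KV cache and buffers, and the even split of weights across layers are all simplifying assumptions. The helper `layers_that_fit` is hypothetical and not part of any library.

```python
import math


def layers_that_fit(model_gb: float, n_layers: int, vram_gb: float,
                    reserve_gb: float = 2.0) -> int:
    """Estimate how many layers fit in VRAM, assuming equal-sized layers."""
    per_layer_gb = model_gb / n_layers
    usable_gb = max(vram_gb - reserve_gb, 0.0)
    return min(n_layers, math.floor(usable_gb / per_layer_gb))


# Assumed values: a 4-bit Llama3 70B file of about 42.5 GB with 80 layers,
# on a 20 GB card with 2 GB held back for the KV cache and buffers.
gpu_layers = layers_that_fit(model_gb=42.5, n_layers=80, vram_gb=20.0)
print(f"Estimated layers on GPU: {gpu_layers} of 80")
```

With these assumed numbers, about 33 of the 80 layers would sit on the GPU and the remainder would run from system RAM, so expect generation to be far slower than the fully GPU-resident 8B results.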

FAQ

Q: What is quantization?

A: Quantization is a technique that reduces the precision of a model's weights, allowing it to use less memory. In simplified terms, it's like using fewer bits to represent the information in the model. This can result in faster processing and lower memory usage.
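As a rough illustration of the idea, the sketch below rounds a handful of made-up weights to 4-bit integer codes with a single shared scale, then restores them. This is a deliberately simplified scheme, not the block-wise method that Q4_K_M actually uses, and the example weights are invented.

```python
def quantize_4bit(weights):
    """Symmetric quantization: map floats to integer codes in [-7, 7]."""
    largest = max(abs(w) for w in weights)
    scale = largest / 7 if largest > 0 else 1.0
    codes = [round(w / scale) for w in weights]
    return codes, scale


def dequantize(codes, scale):
    """Recover approximate float weights from the integer codes."""
    return [c * scale for c in codes]


weights = [0.12, -0.50, 0.33, 0.07, -0.21, 0.44, -0.02, 0.29]  # made-up values
codes, scale = quantize_4bit(weights)
restored = dequantize(codes, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("codes:    ", codes)
print("max error:", round(max_error, 4))
```

Each weight is now stored as a small integer plus one shared scale, and the rounding error is bounded by half the scale. That bounded error is the accuracy cost paid for the smaller memory footprint.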

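Q: Can I run Llama3 70B on the RTX 4000 Ada 20GB?

A: Based on the available benchmarks, the RTX 4000 Ada 20GB does not have enough memory to run Llama3 70B directly. At 4 bits per weight, 70 billion parameters need roughly 35 GB for the weights alone, before the KV cache and runtime buffers, and F16 needs about 140 GB. A single 24 GB card is therefore not enough either. Plan for roughly 40 GB or more of combined VRAM, or offload part of the model to system RAM and accept much lower speed.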
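The memory requirement can be estimated as parameter count times bits per weight. The sketch below applies that formula at nominal bit widths and checks the result against the card's 20 GB. It counts weights only, and real quantization formats carry extra scale data, so actual files are somewhat larger than these figures.

```python
def weights_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate weight storage in GB: parameters x bits / 8."""
    return params_billion * bits_per_weight / 8


VRAM_GB = 20.0    # RTX 4000 Ada 20GB
PARAMS_B = 70.0   # nominal parameter count of Llama3 70B, in billions

for label, bits in [("16-bit", 16), ("8-bit", 8), ("4-bit", 4)]:
    size = weights_gb(PARAMS_B, bits)
    verdict = "fits" if size < VRAM_GB else "does not fit"
    print(f"{label}: ~{size:.0f} GB of weights, {verdict} in {VRAM_GB:.0f} GB")
```

None of the three precisions fits, which is why the 70B model needs either more VRAM or one of the workarounds described earlier.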

Q: What are the best GPUs for running large language models?

A: GPUs with high VRAM capacity and high memory bandwidth are generally considered ideal for running LLMs. Currently, high-end GPUs like the NVIDIA A100 and H100 offer the best performance for large models. However, these options are expensive. For more affordable options, consider mid-range cards with at least 12GB of VRAM like the RTX 4070 or RTX 4080.

Q: Where can I find more information about LLM performance?

A: There are many resources available online for exploring LLM performance. You can find benchmarks, discussions, and tutorials on platforms like GitHub, Hugging Face, and various technical forums.

Keywords

Large language models, LLM, Llama, Llama3, Llama2, NVIDIA, RTX 4000, Ada, 20GB, token generation speed, benchmarks, quantization, Q4_K_M, F16, GPU, VRAM, performance, use cases, workarounds, cloud computing, Google Colab, Amazon SageMaker