Can I Run Llama3 70B on NVIDIA L40S 48GB? Token Generation Speed Benchmarks

Introduction

The world of large language models (LLMs) is buzzing with excitement, and rightfully so! These powerful AI systems are changing everything from creative writing to scientific research. But running these behemoths locally can be a real challenge, especially if you're aiming for high performance. This article digs into running the 70-billion-parameter Llama3 70B model on an NVIDIA L40S 48GB GPU, looking at its token generation speed and offering practical guidance for developers. It's like trying to stuff a giant elephant into a small car, but with a lot more computing power and a whole lot fewer crunched fenders.

Performance Analysis: Token Generation Speed Benchmarks

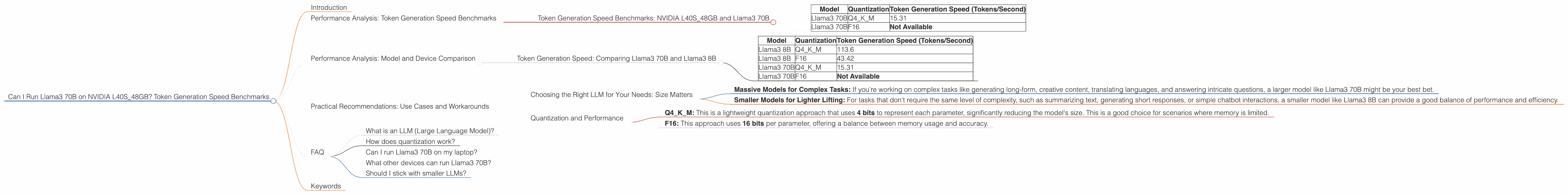

Token Generation Speed Benchmarks: NVIDIA L40S 48GB and Llama3 70B

Let's jump right into the heart of the matter: can you squeeze Llama3 70B onto the NVIDIA L40S 48GB and get it to perform at a decent clip? The short answer is yes, but with caveats. The L40S 48GB is a beast of a GPU, but even this titan has its limits when dealing with a model as massive as Llama3 70B.

To understand why, let's break down the numbers.

Token generation speed measures how quickly an LLM produces new text. It is expressed in tokens per second, where a token is a word or a piece of a word.

| Model | Quantization | Token Generation Speed (Tokens/Second) |
|---|---|---|
| Llama3 70B | Q4_K_M | 15.31 |
| Llama3 70B | F16 | Not Available |

Here's the breakdown:

- Llama3 70B Q4_K_M: This version, using 4-bit quantization (Q4_K_M), achieved 15.31 tokens/second.

- Llama3 70B F16: Unfortunately, data for the F16 version was not available. At 16 bits per parameter, the weights alone come to roughly 140 GB, well beyond the card's 48 GB, which likely explains the gap.

What does this mean? On the L40S 48GB, Llama3 70B with Q4_K_M quantization generates roughly 15 tokens every second.
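To make that figure more tangible, here is a minimal sketch that converts the measured rate into rough wall-clock time for responses of different lengths. The function name and the response lengths are illustrative assumptions, and the estimate assumes a steady decode rate while ignoring prompt processing time.

```python
def generation_time_seconds(num_tokens: int, tokens_per_second: float) -> float:
    """Rough time to emit num_tokens at a steady decode rate.

    Ignores prompt processing and any warm-up, so real latency will be higher.
    """
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return num_tokens / tokens_per_second


# Measured rate for Llama3 70B Q4_K_M on the L40S 48GB (from the table above).
LLAMA3_70B_Q4_TPS = 15.31

# Hypothetical response lengths, chosen only for illustration.
for n in (100, 500, 1000):
    seconds = generation_time_seconds(n, LLAMA3_70B_Q4_TPS)
    print(f"{n:>5} tokens -> about {seconds:.1f} s")
```

By this estimate, a 500-token answer takes a little over half a minute.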

Important: The performance of Llama3 70B on the L40S 48GB depends heavily on the chosen quantization method. If you're dealing with a massive model like Llama3 70B, optimizing for performance with quantization strategies is essential.

Performance Analysis: Model and Device Comparison

Token Generation Speed: Comparing Llama3 70B and Llama3 8B

Now, let's compare the performance of Llama3 70B on the L40S 48GB to its smaller sibling, Llama3 8B, to see how model size impacts performance.

| Model | Quantization | Token Generation Speed (Tokens/Second) |
|---|---|---|
| Llama3 8B | Q4_K_M | 113.6 |
| Llama3 8B | F16 | 43.42 |
| Llama3 70B | Q4_K_M | 15.31 |
| Llama3 70B | F16 | Not Available |

Key Observations:

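- Llama3 8B is faster than Llama3 70B at Q4_K_M, the only quantization level where both were measured. No F16 figure exists for the 70B model, so an F16-to-F16 comparison is not possible. The gap is expected, as Llama3 70B has significantly more parameters (70 billion vs 8 billion).

- Quantization:

  - Llama3 8B Q4_K_M is about 7.4 times faster than Llama3 70B Q4_K_M (113.6 vs 15.31 tokens/second).

  - Llama3 8B F16 is almost three times faster than Llama3 70B Q4_K_M (43.42 vs 15.31 tokens/second), even though it is not quantized.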
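The ratios behind these observations can be checked with a few lines of arithmetic. This is a small sketch using only the figures from the table; the dictionary layout and the `speedup` helper are illustrative choices, not part of any benchmark tool.

```python
# Token generation speeds in tokens/second, copied from the table above.
speeds = {
    ("Llama3 8B", "Q4_K_M"): 113.6,
    ("Llama3 8B", "F16"): 43.42,
    ("Llama3 70B", "Q4_K_M"): 15.31,
}

# The only 70B measurement available serves as the baseline.
baseline = speeds[("Llama3 70B", "Q4_K_M")]


def speedup(model: str, quant: str) -> float:
    """How many times faster a configuration is than Llama3 70B Q4_K_M."""
    return speeds[(model, quant)] / baseline


for (model, quant), tps in speeds.items():
    print(f"{model} {quant}: {tps} tok/s, {speedup(model, quant):.2f}x the 70B Q4_K_M rate")
```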

Think of it like this: generating text with a smaller model (Llama3 8B) is like writing a short story, quick and efficient. A larger model (Llama3 70B) is more like writing a sprawling epic, which takes more time and computational muscle.

Practical Recommendations: Use Cases and Workarounds

Choosing the Right LLM for Your Needs: Size Matters

So, when should you use a large LLM like Llama3 70B, and when is a smaller model like Llama3 8B the better choice? It all boils down to your specific use case:

- Massive Models for Complex Tasks: If you're working on complex tasks like generating long-form, creative content, translating languages, and answering intricate questions, a larger model like Llama3 70B might be your best bet.

- Smaller Models for Lighter Lifting: For tasks that don't require the same level of complexity, such as summarizing text, generating short responses, or simple chatbot interactions, a smaller model like Llama3 8B can provide a good balance of performance and efficiency.

Quantization and Performance

Quantization (the process of compressing a model's weights) plays a crucial role in optimizing LLM performance. Here's how the two formats in this benchmark compare:

- Q4_K_M: This is a 4-bit quantization format that uses about 4 bits to represent each parameter, significantly reducing the model's size. This is a good choice for scenarios where memory is limited.

- F16: This approach uses 16 bits per parameter. It preserves the most accuracy of the two but needs several times the memory of Q4_K_M.

Choosing the right quantization method depends on your specific hardware and performance priorities. If you have plenty of GPU memory and need the highest accuracy, F16 might be a good option. If you're dealing with limited memory or prioritize speed, Q4_K_M could be a better choice.
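A back-of-the-envelope estimate shows why the choice matters on a 48 GB card. The sketch below multiplies the parameter count by bits per parameter to approximate weight storage. It rests on several assumptions: the nominal 70 billion parameter count, an average of about 4.8 bits per parameter for Q4_K_M (an approximation, since the format mixes precisions), and no allowance for the KV cache or activations, which need additional memory.

```python
def weight_memory_gb(num_params: float, bits_per_param: float) -> float:
    """Approximate storage for the weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9


GPU_MEMORY_GB = 48          # L40S 48GB
LLAMA3_70B_PARAMS = 70e9    # nominal parameter count

# Bits per parameter. The Q4_K_M value is an assumed approximate average.
BITS_PER_PARAM = {"F16": 16.0, "Q4_K_M": 4.8}

for name, bits in BITS_PER_PARAM.items():
    size = weight_memory_gb(LLAMA3_70B_PARAMS, bits)
    verdict = "fits" if size < GPU_MEMORY_GB else "does not fit"
    print(f"Llama3 70B {name}: ~{size:.0f} GB of weights, {verdict} in {GPU_MEMORY_GB} GB")
```

Under these assumptions the F16 weights need about 140 GB while the Q4_K_M weights need about 42 GB, leaving only a few gigabytes of headroom on the card.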

FAQ

What is an LLM (Large Language Model)?

An LLM is a type of artificial intelligence system that excels at natural language processing tasks. It learns from vast amounts of textual data, making it capable of understanding, generating, and manipulating human language in complex ways.

How does quantization work?

Think of quantization as a compression technique for LLMs. It reduces the size of the model by representing its parameters using fewer bits. For example, Q4_K_M uses about 4 bits per parameter, while F16 uses 16 bits. This compression makes the model smaller and faster to load and run, but it may come at the cost of a slight decrease in accuracy.
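To illustrate the idea, here is a toy sketch of symmetric 4-bit quantization applied to one small block of weights. It is a deliberately simplified scheme and not the actual Q4_K_M algorithm. The function names and sample weights are made up for the example.

```python
def quantize_block(weights: list[float], bits: int = 4) -> tuple[float, list[int]]:
    """Map floats to signed integer codes that share one scale factor."""
    qmax = 2 ** (bits - 1) - 1          # 7 for 4 bits; codes span -8..7
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    codes = [max(-qmax - 1, min(qmax, round(w / scale))) for w in weights]
    return scale, codes


def dequantize_block(scale: float, codes: list[int]) -> list[float]:
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]


# Hypothetical weights, for illustration only.
weights = [0.12, -0.50, 0.33, 0.07]
scale, codes = quantize_block(weights)
restored = dequantize_block(scale, codes)

for w, c, r in zip(weights, codes, restored):
    print(f"original {w:+.3f} -> code {c:+d} -> restored {r:+.3f}")
```

Each weight is stored as a small integer plus a shared scale, and the restored values differ slightly from the originals. That rounding error is the source of the accuracy loss mentioned above.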

Can I run Llama3 70B on my laptop?

That depends. Running a model as large as Llama3 70B on a laptop would be extremely challenging. You’d need a very powerful laptop with a high-end GPU. Even then, you might encounter problems with memory limitations and speed.

What other devices can run Llama3 70B?

While the L40S 48GB is a powerful GPU, other options are available. Other powerful GPUs such as the A100 and H100 are capable of running Llama3 70B, but performance may vary depending on the specific model and configuration.

Should I stick with smaller LLMs?

It's a good idea to start with a smaller LLM and scale up to larger models as your computational resources allow. It's like starting with a bicycle and working your way up to a motorcycle: you don't want to jump onto the fastest machine before you've learned the basics.

Keywords

Llama3, NVIDIA, L40S 48GB, LLM, Large Language Model, Token Generation Speed, Quantization, Q4_K_M, F16, Performance, Device, Model, Memory, Resources, Use Cases, Workarounds, Development, AI, NLP, Natural Language Processing, GPU, Graphics Processing Unit, Tokens per Second, Bandwidth, GPU Cores.