Can I Run Llama3 70B on NVIDIA A40 48GB? Token Generation Speed Benchmarks

Introduction

The world of large language models (LLMs) keeps evolving, with models like Llama 3 pushing the boundaries of what's possible. But with these massive models comes the challenge of running them locally. It's not just about having a powerful GPU; it's about finding the right combination of model, hardware, and software to get the best performance.

This article digs deep into the capabilities of the NVIDIA A40_48GB GPU for running Llama 3 70B, specifically looking at the token generation speed. We'll explore the performance differences depending on the model quantization and provide practical recommendations for developers and geeks who want to harness the power of LLMs on their own machines.

Token Generation Speed Benchmarks: NVIDIA A40_48GB and Llama3 70B

Let's get down to business! Token generation speed is a primary metric for evaluating LLM performance. It tells you how fast the model produces new text, measured in tokens per second (tokens/sec). Higher is better: you get your results faster.

Llama3 70B on NVIDIA A40_48GB: Quantization Matters

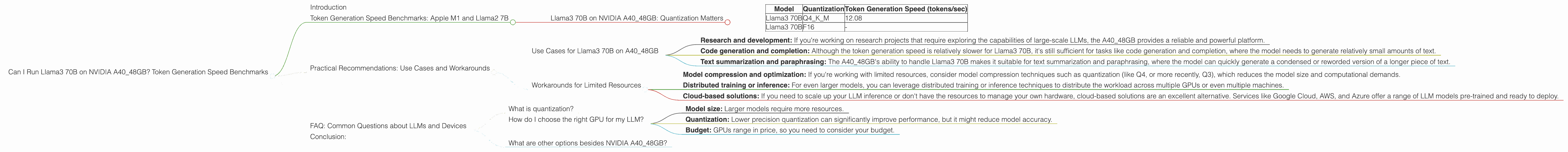

We're focusing on the NVIDIA A40_48GB for this analysis. The results are based on the widely used llama.cpp framework, which is known for its efficiency and performance.

| Model | Quantization | Token Generation Speed (tokens/sec) |
|---|---|---|
| Llama3 70B | Q4_K_M | 12.08 |
| Llama3 70B | F16 | N/A (no data) |

Key Takeaways:

- Larger models, slower speeds: At 12.08 tokens/sec, Llama3 70B generates tokens much more slowly than smaller models such as Llama3 8B (not included in this table), even on the powerful A40_48GB. Token generation is largely limited by memory bandwidth: every new token requires reading essentially all of the model's weights from VRAM, and a 70B model has roughly nine times as many weights as an 8B model.

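- Quantization impact: Q4_K_M is a llama.cpp "k-quant" format that stores most weights at about 4 bits, compared with 16 bits per weight in F16. F16 preserves essentially the model's original precision, but Q4_K_M shrinks the memory footprint to roughly a third of that, which is what allows the 70B model to run on a single 48 GB card at all. Because there is no F16 result for this card, the table cannot quantify a speedup directly; here the practical difference is whether the model runs.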

- Missing data: There is no F16 result for Llama3 70B. The most likely reason is memory: at 2 bytes per weight, 70 billion parameters need about 140 GB for the weights alone, roughly three times the A40's 48 GB, so the model cannot run fully on a single card in F16 without offloading layers to system RAM or splitting it across several GPUs.
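Because each generated token streams roughly all of the weights out of VRAM, memory bandwidth divided by weight size gives a rough upper bound on tokens/sec. The sketch below applies that bound to Q4_K_M on the A40. The inputs are assumptions, not benchmark data: about 70.6 billion parameters for Llama3 70B, an average of about 4.8 bits per weight for Q4_K_M, and the A40's published 696 GB/s memory bandwidth.

```python
# Rough bandwidth-bound ceiling on token generation speed for Llama3 70B at
# Q4_K_M on an A40_48GB. Constants marked "assumption" are approximations for
# illustration, not values reported by this benchmark.

PARAMS = 70.6e9              # approximate Llama3 70B parameter count (assumption)
Q4_K_M_BPW = 4.8             # approximate average bits per weight (assumption)
A40_BANDWIDTH_GB_S = 696.0   # NVIDIA A40 published memory bandwidth, GB/s
MEASURED_TOK_S = 12.08       # Q4_K_M token generation speed from the table


def weight_size_gb(params: float, bits_per_weight: float) -> float:
    """Size of the weights alone, in GB (10**9 bytes)."""
    return params * bits_per_weight / 8 / 1e9


def bandwidth_ceiling(weights_gb: float, bandwidth_gb_s: float) -> float:
    """Tokens/sec upper bound if each token reads every weight from VRAM once."""
    return bandwidth_gb_s / weights_gb


q4_gb = weight_size_gb(PARAMS, Q4_K_M_BPW)
ceiling = bandwidth_ceiling(q4_gb, A40_BANDWIDTH_GB_S)

print(f"Q4_K_M weights: ~{q4_gb:.1f} GB")
print(f"Bandwidth ceiling: ~{ceiling:.1f} tokens/sec")
print(f"Measured {MEASURED_TOK_S} tokens/sec = ~{MEASURED_TOK_S / ceiling:.0%} of the ceiling")
```

Real runs should land below this bound, since KV-cache reads, compute kernels, and other overheads also take time; treat the ceiling as a sanity check rather than a prediction.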

Practical Recommendations: Use Cases and Workarounds

Now that we've got the benchmark numbers, let's talk about how to apply them.

Use Cases for Llama3 70B on A40_48GB

- Research and development: If your research requires exploring the capabilities of large-scale LLMs, the A40_48GB can host the full Llama3 70B model at Q4_K_M on a single card, which keeps the setup simple.

- Code generation and completion: Although Llama3 70B generates tokens relatively slowly, about 12 tokens/sec is still workable for code generation and completion, where the model typically produces short outputs.

- Text summarization and paraphrasing: Summaries and paraphrases are usually short relative to their inputs, so the amount of generated text stays small. Keep in mind that this benchmark measures only token generation; processing a long input prompt takes additional time that the 12.08 tokens/sec figure does not capture.
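To make 12.08 tokens/sec concrete, the sketch below converts an output length into wall-clock generation time. The output lengths are hypothetical examples, and only the generation phase is modeled; prompt processing for long inputs would add to the total.

```python
# Convert a steady token generation rate into wall-clock time for an output.
# Prompt processing (prefill) time is deliberately excluded.

RATE_TOK_S = 12.08  # Q4_K_M token generation speed on the A40_48GB (from the table)


def generation_seconds(output_tokens: int, rate_tok_s: float = RATE_TOK_S) -> float:
    """Seconds needed to generate `output_tokens` tokens at a constant rate."""
    if rate_tok_s <= 0:
        raise ValueError("rate must be positive")
    return output_tokens / rate_tok_s


# Hypothetical output lengths for the use cases above.
examples = {
    "short code completion": 50,
    "summary paragraph": 200,
    "long answer": 1000,
}

for task, tokens in examples.items():
    print(f"{task}: {tokens} tokens -> ~{generation_seconds(tokens):.1f} s")
```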

Workarounds for Limited Resources

- Model compression and optimization: If you're working with limited resources, lower-bit quantization is the main lever. Beyond 4-bit formats such as Q4_K_M, llama.cpp offers 3-bit formats (for example, the Q3_K variants) that shrink the model size and computational demands further, usually at some cost in accuracy.

- Distributed training or inference: When a model or precision does not fit on a single card, such as Llama3 70B in F16, you can distribute the workload across multiple GPUs or even multiple machines.

- Cloud-based solutions: If you need to scale up your LLM inference or don't want to manage your own hardware, cloud-based solutions are a practical alternative. Services like Google Cloud, AWS, and Azure offer a range of pre-trained LLMs ready to deploy.
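For the multi-GPU route, a first sanity check is how many 48 GB cards it takes just to hold the weights. The sketch below assumes about 70.6 billion parameters for Llama3 70B and approximate bits-per-weight values for llama.cpp formats (16 for F16, 8.5 for Q8_0, about 4.8 for Q4_K_M). The 10% per-card headroom for the KV cache and runtime buffers is an arbitrary assumption, so read the results as lower bounds.

```python
import math

# Lower-bound estimate of how many GPUs are needed just to hold the weights.
# Parameter count, bits per weight, and headroom are assumptions for illustration.

PARAMS = 70.6e9  # approximate Llama3 70B parameter count (assumption)
BITS_PER_WEIGHT = {
    "F16": 16.0,
    "Q8_0": 8.5,    # blocks of 32 int8 weights plus one 16-bit scale
    "Q4_K_M": 4.8,  # approximate average (assumption)
}


def gpus_needed(params: float, bpw: float, vram_gb: float = 48.0,
                headroom: float = 0.10) -> int:
    """Smallest number of GPUs whose usable VRAM can hold all the weights."""
    weights_gb = params * bpw / 8 / 1e9
    usable_gb = vram_gb * (1.0 - headroom)  # reserve space for KV cache and buffers
    return math.ceil(weights_gb / usable_gb)


for fmt, bpw in BITS_PER_WEIGHT.items():
    print(f"{fmt}: at least {gpus_needed(PARAMS, bpw)} x 48 GB GPU(s)")
```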

FAQ: Common Questions about LLMs and Devices

What is quantization?

Imagine an LLM as a massive recipe book, full of intricate instructions for generating text. Each instruction is represented by a number (a weight). Quantization simplifies this recipe book by storing each number with fewer bits. In F16, every weight uses 16 bits, so it can take any of 65,536 distinct bit patterns; in a 4-bit format such as Q4_K_M, most weights use only 4 bits, which allows just 16 levels, with shared scale factors mapping those levels back to real values. The recipe book becomes much smaller and faster to read, but less precise. This trade-off between accuracy on one side and memory and speed on the other is what makes quantization popular for LLMs on limited hardware.
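A 4-bit code can take only 16 distinct values, so a quantized block needs a scale factor to map those codes back to real numbers. The sketch below shows plain symmetric block quantization to signed 4-bit integers (-8 to 7) with one shared scale, then reconstructs the values. This is a simplified illustration, not llama.cpp's actual Q4_K_M algorithm, which groups weights into super-blocks with their own quantized scales and keeps some tensors at higher precision. The sample weights are made up.

```python
# Toy symmetric 4-bit block quantization (illustration only, not llama.cpp's Q4_K_M).


def quantize_block(weights: list[float]) -> tuple[float, list[int]]:
    """Map floats to 4-bit signed integers in [-8, 7] sharing one scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0  # largest magnitude maps to +/-7
    codes = [max(-8, min(7, round(w / scale))) for w in weights]
    return scale, codes


def dequantize_block(scale: float, codes: list[int]) -> list[float]:
    """Reconstruct approximate floats from the 4-bit codes."""
    return [scale * c for c in codes]


weights = [0.12, -0.53, 0.97, 0.05, -0.91, 0.33, -0.07, 0.61]  # made-up values
scale, codes = quantize_block(weights)
restored = dequantize_block(scale, codes)

print("codes:    ", codes)
print("restored: ", [round(r, 3) for r in restored])
print("max error:", round(max(abs(a - b) for a, b in zip(weights, restored)), 3))
```

Each block stores eight 4-bit codes plus one scale instead of eight 16-bit values, and the reconstruction error per weight is at most half a quantization step (scale / 2).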

How do I choose the right GPU for my LLM?

The choice of GPU boils down to these factors:

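- Model size: Larger models require more VRAM; the weights alone take roughly (number of parameters × bits per weight ÷ 8) bytes, plus room for the KV cache.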

- Quantization: Lower precision quantization can significantly improve performance, but it might reduce model accuracy.

- Budget: GPUs range in price, so you need to consider your budget.
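Memory use is not just the weights: the KV cache grows with context length. The sketch below estimates it for Llama3 70B from the model's published architecture (80 layers, 8 key-value heads, head dimension 128), assuming a 16-bit cache, and adds an approximate Q4_K_M weight size (70.6 billion parameters at about 4.8 bits per weight, both assumptions) to see how much of the 48 GB remains. Runtime buffers are ignored, so real usage will be somewhat higher.

```python
# Estimate weights + KV cache memory for Llama3 70B at Q4_K_M on a 48 GB card.
# Layer/head values follow the published Llama3 70B config; the weight size
# and the 16-bit cache are assumptions. Runtime buffers are not counted.

N_LAYERS = 80
N_KV_HEADS = 8
HEAD_DIM = 128
KV_BYTES_PER_ELEM = 2                # 16-bit cache entries (assumption)
WEIGHTS_GB = 70.6e9 * 4.8 / 8 / 1e9  # approximate Q4_K_M weights (assumption)
VRAM_GB = 48.0


def kv_cache_gb(context_tokens: int) -> float:
    """Keys and values for every layer and KV head across the whole context."""
    per_token_bytes = 2 * N_LAYERS * N_KV_HEADS * HEAD_DIM * KV_BYTES_PER_ELEM
    return context_tokens * per_token_bytes / 1e9


for ctx in (2048, 4096, 8192):
    total = WEIGHTS_GB + kv_cache_gb(ctx)
    print(f"context {ctx}: KV ~{kv_cache_gb(ctx):.2f} GB, "
          f"total ~{total:.1f} GB, headroom ~{VRAM_GB - total:.1f} GB")
```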

What are other options besides NVIDIA A40_48GB?

While the A40_48GB is a powerful card, alternatives exist, such as the A100_40GB and A100_80GB. The decision depends on your specific needs and the availability of these cards.

Conclusion:

The A40_48GB is a capable option for running Llama3 70B, provided you choose the quantization carefully: Q4_K_M fits the model on a single card and delivers about 12 tokens/sec, while F16 does not fit in 48 GB. Remember, finding the right balance between model size, hardware, and quantization is key to making LLMs accessible and efficient for everyday use.

Keywords: Llama 3, Llama 3 70B, NVIDIA A40_48GB, Token Generation Speed, LLM Performance, Quantization (Q4_K_M, F16), Local LLM, GPU Benchmark, Model Compression, Distributed Training, Inference, Cloud-based LLM