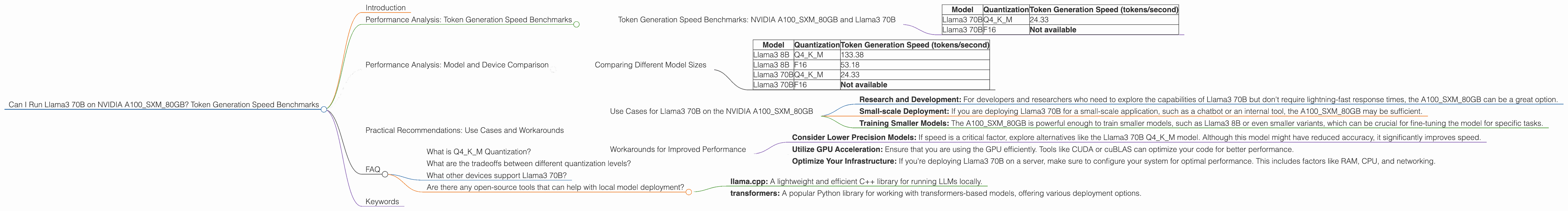

Can I Run Llama3 70B on NVIDIA A100 SXM 80GB? Token Generation Speed Benchmarks

Introduction

Large language models (LLMs) are advancing quickly, and Llama3 is among the most popular with developers and researchers. Its size makes running it locally a challenge, though, especially for casual users. This article looks at how Llama3 70B performs on the NVIDIA A100 SXM 80GB GPU, focusing on token generation speed benchmarks. We cover the factors that affect performance, compare quantization levels, and offer practical recommendations for using Llama3 70B on this device. If you're wondering whether your NVIDIA A100 SXM 80GB can handle Llama3 70B, let's dive into the data!

Performance Analysis: Token Generation Speed Benchmarks

Token Generation Speed Benchmarks: NVIDIA A100 SXM 80GB and Llama3 70B

The first question on everyone's mind is: how fast can Llama3 70B generate tokens on an NVIDIA A100 SXM 80GB? The answer depends heavily on the quantization level of the model. Quantization reduces the size of the model by using fewer bits to represent each weight. The resulting trade-off between accuracy and size is a crucial consideration when running LLMs.

Here are the token generation speeds for different quantization levels on the NVIDIA A100 SXM 80GB:

| Model | Quantization | Token Generation Speed (tokens/second) |
|---|---|---|
| Llama3 70B | Q4_K_M | 24.33 |
| Llama3 70B | F16 | Not available |

As you can see, a result is available only for the Q4_K_M quantization level, where Llama3 70B reaches 24.33 tokens/second on the A100 SXM 80GB. No F16 figure was reported. A likely reason is memory: at 16 bits per weight, a 70-billion-parameter model needs roughly 140 GB for its weights alone, which exceeds the card's 80 GB. About 24 tokens per second is a decent rate for a model of this size, but it is significantly lower than what you'd get with smaller models on the same hardware.
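A rough back-of-the-envelope check shows why the quantization level decides whether the model fits on a single card. The sketch below estimates the memory needed for the weights only (it ignores the KV cache and activations). The nominal 70 billion parameter count and the figure of about 4.8 bits per weight for Q4_K_M are assumptions for illustration, not values taken from the benchmark.

```python
A100_MEMORY_GB = 80.0  # memory capacity of the A100 SXM 80GB
N_PARAMS = 70e9        # assumption: nominal parameter count for Llama3 70B


def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in GB (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


def fits_on_gpu(n_params: float, bits_per_weight: float,
                gpu_memory_gb: float = A100_MEMORY_GB) -> bool:
    """True if the weights alone are smaller than the GPU's memory."""
    return weight_memory_gb(n_params, bits_per_weight) < gpu_memory_gb


# Bits per weight: 16 for F16; ~4.8 for Q4_K_M is an assumed average.
formats = {"F16": 16.0, "Q4_K_M (assumed ~4.8 bits/weight)": 4.8}

for name, bits in formats.items():
    size = weight_memory_gb(N_PARAMS, bits)
    verdict = "fits" if fits_on_gpu(N_PARAMS, bits) else "does not fit"
    print(f"{name}: ~{size:.0f} GB of weights, {verdict} in {A100_MEMORY_GB:.0f} GB")
```

Treat the result as a lower bound: a real deployment also needs headroom for the context (KV cache) and runtime buffers.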

Performance Analysis: Model and Device Comparison

Comparing Different Model Sizes

To further understand the performance implications of different model sizes, let's compare the token generation speeds of Llama3 8B and Llama3 70B on the NVIDIA A100 SXM 80GB.

| Model | Quantization | Token Generation Speed (tokens/second) |
|---|---|---|
| Llama3 8B | Q4_K_M | 133.38 |
| Llama3 8B | F16 | 53.18 |
| Llama3 70B | Q4_K_M | 24.33 |
| Llama3 70B | F16 | Not available |

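As we can see, the smaller Llama3 8B model is significantly faster than Llama3 70B: at the same Q4_K_M quantization it generates about 5.5 times as many tokens per second (133.38 versus 24.33). This makes sense, since the 8B model has far fewer weights to read and compute with for every generated token.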

The difference in token generation speed between the two models emphasizes the trade-off between model size and performance. While larger models offer greater potential for complex tasks, they come at the cost of processing speed.
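To make the gap concrete, the short sketch below computes speed ratios from the table and the time each configuration would need for a single reply. The 500-token reply length is a hypothetical figure chosen for illustration; the speeds are the ones reported above.

```python
# Tokens/second from the comparison table (NVIDIA A100 SXM 80GB).
SPEEDS = {
    ("Llama3 8B", "Q4_K_M"): 133.38,
    ("Llama3 8B", "F16"): 53.18,
    ("Llama3 70B", "Q4_K_M"): 24.33,
}

REPLY_TOKENS = 500  # hypothetical reply length, for illustration only


def speedup(fast: tuple, slow: tuple) -> float:
    """How many times faster `fast` generates tokens than `slow`."""
    return SPEEDS[fast] / SPEEDS[slow]


def seconds_for(n_tokens: int, config: tuple) -> float:
    """Time to generate n_tokens at the benchmarked rate."""
    return n_tokens / SPEEDS[config]


ratio = speedup(("Llama3 8B", "Q4_K_M"), ("Llama3 70B", "Q4_K_M"))
print(f"8B vs 70B at Q4_K_M: {ratio:.2f}x faster")

for config in SPEEDS:
    model, quant = config
    print(f"{model} {quant}: {seconds_for(REPLY_TOKENS, config):.1f} s "
          f"for {REPLY_TOKENS} tokens")
```

These figures cover token generation only; time spent processing the prompt is not included.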

Practical Recommendations: Use Cases and Workarounds

Use Cases for Llama3 70B on the NVIDIA A100 SXM 80GB

Despite its limitations, the NVIDIA A100 SXM 80GB can still be a valuable tool for running Llama3 70B. Here are some potential use cases:

- Research and Development: For developers and researchers who need to explore the capabilities of Llama3 70B but don't require lightning-fast response times, the A100 SXM 80GB can be a great option.

- Small-scale Deployment: If you are deploying Llama3 70B for a small-scale application, such as a chatbot or an internal tool, the A100 SXM 80GB may be sufficient.

- Fine-tuning Smaller Models: The A100 SXM 80GB is powerful enough to fine-tune smaller models, such as Llama3 8B or even smaller variants, for specific tasks.

Workarounds for Improved Performance

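- Consider Lower Precision Models: If speed is a critical factor, use a quantized build such as Llama3 70B Q4_K_M. Quantization can reduce accuracy somewhat, but it improves speed considerably: for Llama3 8B on this GPU, Q4_K_M runs about 2.5 times faster than F16 (133.38 versus 53.18 tokens/second).

- Utilize GPU Acceleration: Ensure that you are using the GPU efficiently. Check that your inference runtime is built with GPU support (for example CUDA and cuBLAS) and that the whole model is loaded onto the GPU, since any part left on the CPU slows generation down.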

- Optimize Your Infrastructure: If you're deploying Llama3 70B on a server, make sure to configure your system for optimal performance. This includes factors like RAM, CPU, and networking.
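To check whether any of these changes actually helps on your setup, it is worth measuring tokens per second before and after. The helper below is a minimal sketch: `generate` is a hypothetical callable that yields tokens one at a time, standing in for whatever streaming interface your inference runtime provides, and `fake_generate` exists only so the example runs on its own.

```python
import time
from typing import Callable, Iterable


def measure_tokens_per_second(
    generate: Callable[[], Iterable[str]],
    clock: Callable[[], float] = time.perf_counter,
) -> float:
    """Run `generate` once and return generated tokens per second."""
    start = clock()
    n_tokens = sum(1 for _ in generate())  # consume the stream, count tokens
    elapsed = clock() - start
    if elapsed <= 0:
        raise ValueError("elapsed time must be positive")
    return n_tokens / elapsed


def fake_generate() -> Iterable[str]:
    """Stand-in for a real model: yields 50 dummy tokens with a small delay."""
    for i in range(50):
        time.sleep(0.001)
        yield f"tok{i}"


rate = measure_tokens_per_second(fake_generate)
print(f"{rate:.1f} tokens/second")
```

Run the measurement several times with the same prompt and output length, since a single run can be skewed by warm-up effects.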

FAQ

What is Q4_K_M Quantization?

Q4_K_M is one of the "k-quant" formats used by llama.cpp. It reduces the size of the Llama3 70B model by storing most weights as 4-bit values, so each weight is rounded to one of 16 possible levels. Weights are grouped into blocks, and each block carries its own scale so that the 16 levels cover that block's range. The "K" marks the k-quant family, and the "M" stands for the medium variant, which sits between the small (S) and large (L) variants and keeps some tensors at higher precision.

Think of it like converting a high-resolution photograph to a lower-resolution version. While you lose some detail, the file becomes much smaller and easier to share. Similarly, Q4_K_M quantization sacrifices some accuracy for a smaller, faster model.
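The basic idea of 4-bit quantization can be sketched in a few lines. The example below is a deliberately simplified illustration, not the actual format used by llama.cpp: it maps one small block of floating-point values to integer codes from 0 to 15 plus a per-block scale and minimum, then reconstructs approximate values from them. The function names and the sample block are hypothetical.

```python
from typing import List, Tuple


def quantize_block(values: List[float]) -> Tuple[List[int], float, float]:
    """Map floats to 4-bit codes (0..15) with a per-block scale and minimum."""
    lo, hi = min(values), max(values)
    scale = (hi - lo) / 15 or 1.0  # fall back to 1.0 for a constant block
    codes = [min(15, max(0, round((v - lo) / scale))) for v in values]
    return codes, scale, lo


def dequantize_block(codes: List[int], scale: float, lo: float) -> List[float]:
    """Reconstruct approximate floats from the 4-bit codes."""
    return [lo + c * scale for c in codes]


# Toy block of weights (illustrative values only).
block = [0.12, -0.53, 0.91, 0.04, -0.27, 0.66, -0.88, 0.35]

codes, scale, lo = quantize_block(block)
restored = dequantize_block(codes, scale, lo)
max_error = max(abs(a - b) for a, b in zip(block, restored))

print("codes:   ", codes)
print("restored:", [round(v, 3) for v in restored])
print("max error:", round(max_error, 4), "(at most scale/2 =", round(scale / 2, 4), ")")
```

Each value is stored as a 4-bit code instead of a 16-bit float, and the rounding error per value is bounded by half the block's scale, which is the accuracy given up in exchange for the smaller size.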

What are the tradeoffs between different quantization levels?

Quantization is a balancing act. Lower-bit formats (like Q4_K_M) lead to smaller models, faster loading times, and improved inference speed, but they often come with a decrease in accuracy. Higher-precision formats (like F16) preserve more accuracy but result in larger models and slower inference. On this GPU, for example, Llama3 8B runs at 133.38 tokens/second with Q4_K_M versus 53.18 with F16.

What other devices support Llama3 70B?

The NVIDIA A100 SXM 80GB is one capable option for running Llama3 70B, but it is not the only one. What matters is matching the model's footprint to the device: a model of this size needs enough memory capacity to hold the weights at the chosen quantization level, and high memory bandwidth to generate tokens at a usable speed.

Are there any open-source tools that can help with local model deployment?

Yes, there are many open-source tools and frameworks available for running and deploying LLMs locally. Some popular examples include:

- llama.cpp: A lightweight and efficient C++ library for running LLMs locally.

- transformers: A popular Python library from Hugging Face for working with Transformer-based models, offering various deployment options.

Keywords

Llama3, LLM, NVIDIA A100 SXM 80GB, Token Generation Speed, Quantization, Q4_K_M, F16, Model Size, Performance, Practical Recommendations, Use Cases, Workarounds, Local Deployment, Open Source, GPU, CUDA, cuBLAS.