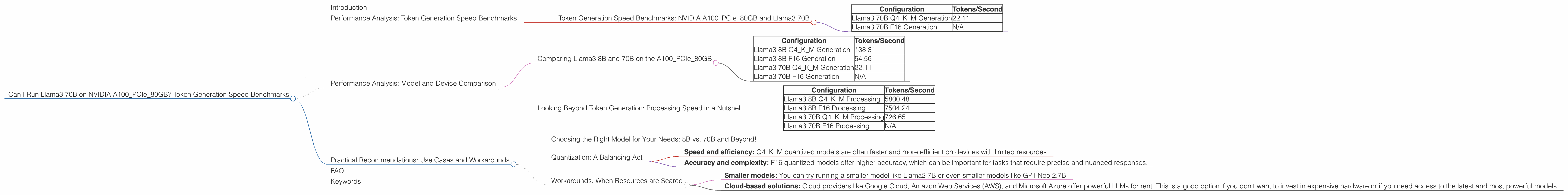

Can I Run Llama3 70B on NVIDIA A100 PCIe 80GB? Token Generation Speed Benchmarks

Introduction

The world of large language models (LLMs) is buzzing with excitement, and for good reason. These powerful AI systems can generate human-like text, translate languages, write different kinds of creative content, and answer your questions in an informative way. But running these models locally can be a challenge. It requires powerful hardware and often involves some serious technical know-how.

This article dives deep into the performance of the Llama3 70B model on the NVIDIA A100 PCIe 80GB, a popular high-performance GPU. We'll explore token generation speed benchmarks, analyze the impact of different quantization levels, and offer practical recommendations for using these models effectively. Whether you're a seasoned developer or just getting started with LLMs, this guide will help you understand the capabilities of the Llama3 70B and equip you with the knowledge to make informed decisions about your project.

Performance Analysis: Token Generation Speed Benchmarks

Token Generation Speed Benchmarks: NVIDIA A100 PCIe 80GB and Llama3 70B

Let's start with the main attraction: the token generation speed of the Llama3 70B model on the A100 PCIe 80GB GPU. Token generation is the process by which the model produces its output one token at a time, predicting each new token from the prompt and the tokens generated so far. The more tokens the model generates per second, the sooner a complete response is ready.

Here's a table showing the token generation speed (measured in tokens per second) of Llama3 70B on the A100 PCIe 80GB under different configurations:

| Configuration | Tokens/Second |
|---|---|
| Llama3 70B Q4_K_M Generation | 22.11 |
| Llama3 70B F16 Generation | N/A |

Q4_K_M Quantization: This is a type of model compression that shrinks the model, making it more manageable for local processing. Q4_K_M stores the weights in roughly 4 bits each, averaging a little more once per-block scaling factors are included.

F16: This is not quantization in the strict sense. F16 stores every weight as a 16-bit floating-point number, which preserves more precision but makes the model several times larger than a 4-bit version.

As we can see, the Llama3 70B Q4_K_M model generates tokens at a respectable 22.11 tokens per second. However, we don't have data for the F16 version of the model. We suspect this is because of its size: 70 billion weights at 2 bytes each come to roughly 140 GB, well beyond the 80 GB of memory on this card.
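To put rough numbers on model size, here is a minimal sketch that estimates the memory needed for the weights alone. The nominal parameter counts (8 and 70 billion), the flat 4 and 16 bits per weight, and the helper name `weight_memory_gb` are illustrative assumptions. Real model files carry some extra overhead, and inference also needs memory for the KV cache and activations.

```python
def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate memory for the weights alone, in GB (10^9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


VRAM_GB = 80  # memory on the A100 PCIe 80GB

# Nominal parameter counts and flat bit widths (assumptions for illustration).
configs = {
    "Llama3 8B, 16-bit": (8e9, 16),
    "Llama3 8B, 4-bit": (8e9, 4),
    "Llama3 70B, 16-bit": (70e9, 16),
    "Llama3 70B, 4-bit": (70e9, 4),
}

for name, (params, bits) in configs.items():
    gb = weight_memory_gb(params, bits)
    verdict = "fits" if gb < VRAM_GB else "does not fit"
    print(f"{name}: ~{gb:.0f} GB of weights, {verdict} in {VRAM_GB} GB")
```

Under these assumptions only the 16-bit 70B configuration exceeds the card's memory, which is consistent with the missing F16 result.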

Performance Analysis: Model and Device Comparison

Comparing Llama3 8B and 70B on the A100 PCIe 80GB

While the Llama3 70B model is the star of the show, we can gain valuable insights by looking at its smaller sibling, the Llama3 8B model. Let's compare the token generation speeds of both models on the A100 PCIe 80GB:

| Configuration | Tokens/Second |
|---|---|
| Llama3 8B Q4_K_M Generation | 138.31 |
| Llama3 8B F16 Generation | 54.56 |
| Llama3 70B Q4_K_M Generation | 22.11 |
| Llama3 70B F16 Generation | N/A |

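This table shows a clear pattern: with 4-bit quantization, the smaller Llama3 8B model generates tokens about 6.3 times faster than the Llama3 70B model (138.31 vs. 22.11 tokens per second). This is expected because the smaller model has far fewer parameters to process for every token. We can't confirm the same gap at F16, since there is no 70B F16 result, but on the 8B model F16 generation is about 2.5 times slower than the 4-bit version (54.56 vs. 138.31 tokens per second).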
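To make the generation figures more tangible, the following sketch converts tokens per second into milliseconds per token and a relative slowdown. It uses only the numbers from the table above; the helper names `ms_per_token` and `slowdown` are made up for this illustration.

```python
# Generation speeds from the table above, in tokens per second.
GENERATION_TOK_PER_S = {
    "Llama3 8B Q4_K_M": 138.31,
    "Llama3 8B F16": 54.56,
    "Llama3 70B Q4_K_M": 22.11,
}


def ms_per_token(tok_per_s: float) -> float:
    """Average time spent on one generated token, in milliseconds."""
    return 1000.0 / tok_per_s


def slowdown(baseline_tok_per_s: float, other_tok_per_s: float) -> float:
    """How many times slower `other` is than `baseline`."""
    return baseline_tok_per_s / other_tok_per_s


fastest = max(GENERATION_TOK_PER_S.values())
for name, speed in GENERATION_TOK_PER_S.items():
    print(
        f"{name}: {ms_per_token(speed):.1f} ms/token, "
        f"slowdown vs. fastest = {slowdown(fastest, speed):.2f}x"
    )
```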

Looking Beyond Token Generation: Processing Speed in a Nutshell

Token generation speed is crucial, but it's only part of the picture. Another important factor is processing speed, which in benchmarks like this one normally means prompt processing: how quickly the model reads its input before it starts generating output.

Here's a table showing the processing speed (again measured in tokens per second) of the Llama3 8B and 70B models on the A100 PCIe 80GB:

| Configuration | Tokens/Second |
|---|---|
| Llama3 8B Q4_K_M Processing | 5800.48 |
| Llama3 8B F16 Processing | 7504.24 |
| Llama3 70B Q4_K_M Processing | 726.65 |
| Llama3 70B F16 Processing | N/A |

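The differences in processing speed are just as dramatic. With 4-bit quantization, the Llama3 8B model processes tokens about 8 times faster than the Llama3 70B model (5800.48 vs. 726.65 tokens per second), which is understandable given how much smaller it is. Two other points stand out: processing is far faster than generation for every configuration, and on the 8B model F16 processes faster than the 4-bit version (7504.24 vs. 5800.48 tokens per second), the reverse of the generation result. The gap between the two models highlights the importance of considering model size when choosing between different LLMs.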
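Processing and generation speeds can be combined into a rough end-to-end estimate. The sketch below assumes that the processing figures measure how fast the prompt is read, and that the two phases simply run one after the other. The prompt and response lengths and the function name `response_seconds` are hypothetical.

```python
def response_seconds(
    prompt_tokens: int,
    output_tokens: int,
    processing_tok_per_s: float,
    generation_tok_per_s: float,
) -> float:
    """Estimated time to read the prompt and then generate the response."""
    prompt_time = prompt_tokens / processing_tok_per_s
    generation_time = output_tokens / generation_tok_per_s
    return prompt_time + generation_time


# (processing, generation) speeds from the tables above, in tokens per second.
BENCHMARKS = {
    "Llama3 8B Q4_K_M": (5800.48, 138.31),
    "Llama3 70B Q4_K_M": (726.65, 22.11),
}

PROMPT_TOKENS = 1000  # hypothetical prompt length
OUTPUT_TOKENS = 300   # hypothetical response length

for name, (processing, generation) in BENCHMARKS.items():
    total = response_seconds(PROMPT_TOKENS, OUTPUT_TOKENS, processing, generation)
    print(f"{name}: ~{total:.1f} s for {PROMPT_TOKENS} prompt + {OUTPUT_TOKENS} output tokens")
```

With these example lengths, generation accounts for most of the total time on both models, which is why the generation figure is usually the one to watch for interactive use.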

Practical Recommendations: Use Cases and Workarounds

Choosing the Right Model for Your Needs: 8B vs. 70B and Beyond!

So, how do you decide which model to use for your project? It depends on the specific task at hand.

If you need speed and efficiency, the smaller Llama3 8B model is the clear winner. It's a great choice for things like generating text, translating languages, or summarizing documents.

If you require more comprehensive and nuanced responses, and are willing to sacrifice some speed, the Llama3 70B model is a better option. It's capable of handling more complex tasks, such as creative writing, code generation, and even reasoning.

Ultimately, the right model for you will depend on your specific requirements.

Quantization: A Balancing Act

Quantization is a powerful tool for making LLMs more manageable, but it's not a one-size-fits-all solution. Q4_K_M quantization sacrifices some precision for a smaller model and faster token generation. F16 keeps the weights at 16-bit precision, but the model is much larger. The choice between these options depends on your priorities:

- Speed and efficiency: Q4_K_M models need far less memory and generated tokens about 2.5 times faster than F16 in the 8B benchmarks above, which makes them the practical choice on devices with limited resources.

- Accuracy and complexity: F16 models avoid quantization error, which can be important for tasks that require precise and nuanced responses.

Think of it like cooking: If you're making a simple dish, you might get away with a smaller set of ingredients (Q4_K_M). But if you're crafting a complex gourmet meal, you'll need more ingredients (F16).
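The precision trade-off can be illustrated with a toy example. This is a simplified block-wise 4-bit scheme written for this article, not the actual algorithm behind any real quantization format. The weights are random made-up values, and `quantize_block` and `dequantize_block` are hypothetical helper names.

```python
import random


def quantize_block(values, bits=4):
    """Map floats to integer codes in [0, 2**bits - 1] using one scale and offset per block."""
    levels = 2 ** bits - 1
    lo, hi = min(values), max(values)
    scale = (hi - lo) / levels or 1.0  # fall back to 1.0 if all values are equal
    codes = [round((v - lo) / scale) for v in values]
    return codes, scale, lo


def dequantize_block(codes, scale, lo):
    """Recover approximate floats from the integer codes."""
    return [c * scale + lo for c in codes]


random.seed(0)
weights = [random.gauss(0.0, 0.02) for _ in range(32)]  # one block of made-up weights

codes, scale, lo = quantize_block(weights)
restored = dequantize_block(codes, scale, lo)
max_err = max(abs(a - b) for a, b in zip(weights, restored))

print(f"codes range from {min(codes)} to {max(codes)}")
print(f"largest round-trip error: {max_err:.6f} (step size {scale:.6f})")
```

Each weight now fits in 4 bits plus a shared scale and offset per block, at the cost of a rounding error of up to half a step. That error is the precision a quantized model gives up.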

Workarounds: When Resources are Scarce

What if your GPU just can't handle the Llama3 70B model, even with Q4_K_M quantization? Don't despair! There are workarounds, like using a smaller model or exploring cloud-based solutions.

- Smaller models: You can try the Llama3 8B model benchmarked above, or other small models such as Llama2 7B or GPT-Neo 2.7B.

- Cloud-based solutions: Cloud providers like Google Cloud, Amazon Web Services (AWS), and Microsoft Azure rent out GPU instances and offer hosted LLM services. This is a good option if you don't want to invest in expensive hardware or if you need access to the latest and most powerful models.

FAQ

Q1: What is quantization?

A1: Quantization is a technique for reducing the size of an LLM by representing its numbers using fewer bits. This makes the model smaller and faster to process, but it can sacrifice some precision.

Q2: What are tokens?

A2: Tokens are the basic units of language that LLMs understand. Imagine them like the building blocks of text. They can be words, parts of words (like prefixes or suffixes), or punctuation marks.

Q3: What is token generation speed?

A3: Token generation speed refers to how quickly an LLM produces output tokens, usually measured in tokens per second. It's a crucial factor in how responsive the model feels.
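Measuring this yourself comes down to counting tokens and dividing by elapsed time. Here is a minimal sketch in which `fake_generate` is a stand-in for a real model call (an assumption for illustration, not any library's API). Any function that yields tokens one at a time could take its place.

```python
import time
from typing import Callable, Iterable


def measure_tokens_per_second(generate: Callable[[], Iterable[str]]) -> float:
    """Run `generate`, count the tokens it yields, and divide by wall-clock time."""
    start = time.perf_counter()
    n_tokens = sum(1 for _ in generate())
    elapsed = time.perf_counter() - start
    return n_tokens / elapsed


def fake_generate():
    """Stand-in for a real model: yields 50 tokens with a 2 ms pause before each."""
    for i in range(50):
        time.sleep(0.002)
        yield f"tok{i}"


rate = measure_tokens_per_second(fake_generate)
print(f"{rate:.1f} tokens/second")
```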

Q4: What are LLMs?

A4: Large language models (LLMs) are a type of artificial intelligence that can generate text, translate languages, write different kinds of creative content, and answer your questions in an informative way.

Q5: What is the A100 PCIe 80GB?

A5: The NVIDIA A100 PCIe 80GB is a high-performance GPU with 80 GB of memory, designed for machine learning and scientific computing. It's a beast of a card that allows you to run demanding workloads like LLMs efficiently.

Q6: Can I run Llama3 70B on a CPU?

A6: You can, but it is rarely practical. The machine needs enough RAM to hold the model (tens of gigabytes even at 4-bit precision), and generation will be significantly slower than on a GPU. Generating each token means reading through the model's weights, and GPUs offer far more memory bandwidth and parallel compute for this kind of work.
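A common rule of thumb helps explain the gap. If every generated token requires reading all of the weights from memory once, memory bandwidth sets an upper limit on generation speed. The sketch below applies that rule with round numbers that are assumptions, not measurements: a 40 GB model (about the size of a 4-bit 70B model) and ballpark bandwidth figures for a data center GPU and a desktop CPU.

```python
def max_tokens_per_second(model_size_gb: float, bandwidth_gb_per_s: float) -> float:
    """Rough upper bound: each generated token reads every weight from memory once."""
    return bandwidth_gb_per_s / model_size_gb


MODEL_SIZE_GB = 40.0  # assumed size of a ~4-bit 70B model

# Ballpark memory bandwidths in GB/s (illustrative assumptions).
HARDWARE = {
    "data center GPU (~1900 GB/s)": 1900.0,
    "desktop CPU with dual-channel RAM (~80 GB/s)": 80.0,
}

for name, bandwidth in HARDWARE.items():
    bound = max_tokens_per_second(MODEL_SIZE_GB, bandwidth)
    print(f"{name}: at most ~{bound:.1f} tokens/second")
```

Real throughput lands below these bounds, but the ratio between them gives a sense of why CPU inference on a 70B model feels slow.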

Keywords

Llama3, NVIDIA A100, GPU, LLM, Token Generation, Token Speed, Quantization, Q4_K_M, F16, Processing Speed, Model Size, Local Inference, Cloud Computing, Performance Benchmarks, Model Comparison, Use Cases, Workarounds