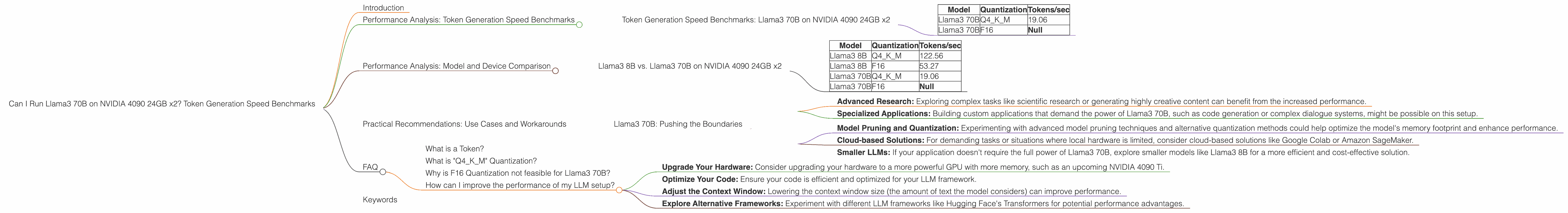

Can I Run Llama3 70B on NVIDIA 4090 24GB x2? Token Generation Speed Benchmarks

Introduction

The world of Large Language Models (LLMs) is exploding, with new models and advancements constantly pushing the boundaries of what's possible. LLMs like Llama3 are changing the game across various fields, from writing and translation to code generation and scientific research.

One of the most common questions surrounding LLMs is: "Can my hardware handle this?" This article dives deep into the performance of Llama3 70B – a behemoth of a model – on a powerful setup: two NVIDIA 4090 24GB GPUs. We'll be looking at token generation speed benchmarks and analyzing the results to help you understand the constraints and possibilities of running this model locally.

Performance Analysis: Token Generation Speed Benchmarks

Token Generation Speed Benchmarks: Llama3 70B on NVIDIA 4090 24GB x2

Let's get down to the nitty-gritty. The following table shows the token generation speed of Llama3 70B on two NVIDIA RTX 4090 24GB GPUs, measured in tokens per second (tokens/sec). Actual performance varies with factors such as prompt length, context window size, and the inference software and settings used.

| Model | Quantization | Tokens/sec |
|---|---|---|
| Llama3 70B | Q4_K_M | 19.06 |
| Llama3 70B | F16 | N/A (no data) |
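A tokens/sec figure is the number of generated tokens divided by the wall-clock time spent generating them. The article does not say which tool produced these numbers, so the sketch below only illustrates the arithmetic. `measure_tokens_per_sec` and `fake_generator` are hypothetical names, and the generator is a stand-in for a real model's streaming output.

```python
import time
from typing import Iterable, Iterator, Tuple


def measure_tokens_per_sec(token_stream: Iterable[str]) -> Tuple[int, float]:
    """Consume a stream of generated tokens and return (token_count, tokens_per_sec)."""
    start = time.perf_counter()
    count = 0
    for _ in token_stream:
        count += 1
    elapsed = time.perf_counter() - start
    # Guard against a zero elapsed time on an empty or instantaneous stream.
    rate = count / elapsed if elapsed > 0 else 0.0
    return count, rate


def fake_generator(n_tokens: int, delay_s: float) -> Iterator[str]:
    """Hypothetical stand-in for a model that emits one token every delay_s seconds."""
    for i in range(n_tokens):
        time.sleep(delay_s)  # simulate per-token decode time
        yield f"tok{i}"


if __name__ == "__main__":
    n, tps = measure_tokens_per_sec(fake_generator(20, 0.05))
    # Expect roughly 20 tokens/sec, since each fake token takes about 0.05 s.
    print(f"{n} tokens at {tps:.1f} tokens/sec")
```

Real benchmark tools usually time prompt processing separately from generation; this sketch puts everything after the first request under one timer.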

Observations:

- Q4_K_M Quantization: Llama3 70B with Q4_K_M quantization generates 19.06 tokens/sec. There is no F16 figure to compare against; Q4_K_M matters here because it shrinks the model enough to fit in GPU memory, and even then the model is too large for a single 24 GB card and needs both 4090s.

- F16 (unquantized 16-bit weights): There is no F16 data because of Llama3 70B's memory requirements. At 16 bits per weight, the weights alone take roughly 140 GB, nearly three times the 48 GB the two 4090s provide combined.
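The F16 result follows from simple arithmetic: weight memory is roughly parameter count × bits per weight ÷ 8 bytes. The sketch below assumes about 70.6 billion parameters for Llama3 70B and 8.03 billion for Llama3 8B, and treats Q4_K_M as averaging about 4.8 bits per weight; that average is an approximation, because the format mixes block types. It ignores the KV cache and runtime buffers, so real usage is somewhat higher.

```python
GIB = 1024 ** 3

# Approximate parameter counts (assumed, rounded published sizes).
MODELS = {"Llama3 8B": 8.03e9, "Llama3 70B": 70.6e9}

# Bits per weight. F16 is exact; the Q4_K_M value is an assumed average.
FORMATS = {"F16": 16.0, "Q4_K_M": 4.8}

VRAM_GIB = 2 * 24  # two 24 GB RTX 4090s


def weight_memory_gib(n_params: float, bits_per_weight: float) -> float:
    """GiB needed to hold the weights alone (ignores KV cache and runtime overhead)."""
    return n_params * bits_per_weight / 8 / GIB


if __name__ == "__main__":
    for model, n_params in MODELS.items():
        for fmt, bpw in FORMATS.items():
            need = weight_memory_gib(n_params, bpw)
            verdict = "fits" if need < VRAM_GIB else "does NOT fit"
            print(f"{model:<11} {fmt:<7} {need:6.1f} GiB -> {verdict} in {VRAM_GIB} GiB")
```

Under these assumptions the 70B model needs about 131 GiB at F16 but only about 39 GiB at Q4_K_M, while the 8B model fits even at F16 (about 15 GiB).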

Understanding Quantization:

Quantization is like simplifying a complex image. The original image contains lots of detailed information (high precision), but by reducing the number of colors (quantization), you can make it smaller and easier to store and process. For an LLM, quantization means storing each weight with fewer bits, for example about 4 bits instead of 16, which shrinks the model's memory footprint and lets it run on more modest hardware.

Analogies:

Imagine you have a super-detailed blueprint for building a skyscraper. It takes a ton of storage space and is difficult to manage. Quantization is like creating a simpler version of the blueprint, with less detail but still enough to guide the construction.
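To make the idea concrete, here is a toy version of block quantization: a block of weights is stored as small signed integers plus one shared floating-point scale. This is a simplified absmax scheme chosen for illustration, not the actual Q4_K_M algorithm, which groups blocks into super-blocks with their own quantized scales and minimums. The function names are hypothetical.

```python
from typing import List, Tuple


def quantize_block(values: List[float], bits: int = 4) -> Tuple[float, List[int]]:
    """Symmetric absmax quantization of one block to signed integers of `bits` bits."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4 bits, so codes lie in [-7, 7]
    absmax = max(abs(v) for v in values)
    scale = absmax / qmax if absmax > 0 else 1.0
    # Round each value to the nearest multiple of `scale`, clamped to the code range.
    codes = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return scale, codes


def dequantize_block(scale: float, codes: List[int]) -> List[float]:
    """Recover approximate values from the integer codes and the shared scale."""
    return [c * scale for c in codes]


if __name__ == "__main__":
    weights = [0.12, -0.53, 0.98, -0.07, 0.33, -0.91, 0.45, 0.0]
    scale, codes = quantize_block(weights)
    restored = dequantize_block(scale, codes)
    max_err = max(abs(w - r) for w, r in zip(weights, restored))
    print("codes:", codes)
    print(f"scale={scale:.4f}, max error={max_err:.4f} (at most scale/2)")
```

Eight 32-bit floats (256 bits) become eight 4-bit codes plus one 32-bit scale (64 bits), and each restored value is off by at most half a scale step. That lost detail is the "bit of accuracy" quantization trades for size.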

Performance Analysis: Model and Device Comparison

Llama3 8B vs. Llama3 70B on NVIDIA 4090 24GB x2

Let's see how Llama3 8B fares compared to its larger cousin, Llama3 70B.

| Model | Quantization | Tokens/sec |
|---|---|---|
| Llama3 8B | Q4_K_M | 122.56 |
| Llama3 8B | F16 | 53.27 |
| Llama3 70B | Q4_K_M | 19.06 |
| Llama3 70B | F16 | N/A (no data) |

Observations:

- Smaller Models, Faster Generation: As expected, Llama3 8B is much faster than Llama3 70B: at Q4_K_M it generates about 6.4 times as many tokens per second (122.56 vs. 19.06). Model size plays a crucial role in performance. Smaller models are easier to run on less powerful hardware, but generally produce lower-quality results on complex tasks.

- Quantization Impact: For Llama3 8B, Q4_K_M is about 2.3 times faster than F16 (122.56 vs. 53.27 tokens/sec). Token generation is largely limited by how quickly the GPU can read the weights from memory, so fewer bytes per weight means more tokens per second. For Llama3 70B there is no F16 figure to compare; there, Q4_K_M is what makes the model fit in memory at all. This highlights the importance of choosing a quantization level that balances speed, memory usage, and output quality.
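The gaps between the rows of the table can be quantified with a few lines of arithmetic. The 500-token response length is an arbitrary example, and the time estimate assumes a steady generation rate, ignoring the time spent processing the prompt.

```python
# Generation speeds from the benchmark table, in tokens/sec.
RESULTS = {
    ("Llama3 8B", "Q4_K_M"): 122.56,
    ("Llama3 8B", "F16"): 53.27,
    ("Llama3 70B", "Q4_K_M"): 19.06,
}


def seconds_for(n_tokens: int, tokens_per_sec: float) -> float:
    """Seconds to generate n_tokens at a constant rate (ignores prompt processing)."""
    return n_tokens / tokens_per_sec


if __name__ == "__main__":
    quant_speedup = RESULTS[("Llama3 8B", "Q4_K_M")] / RESULTS[("Llama3 8B", "F16")]
    size_gap = RESULTS[("Llama3 8B", "Q4_K_M")] / RESULTS[("Llama3 70B", "Q4_K_M")]
    print(f"Llama3 8B: Q4_K_M is {quant_speedup:.2f}x faster than F16")  # 2.30x
    print(f"Q4_K_M: Llama3 8B is {size_gap:.2f}x faster than 70B")      # 6.43x
    for (model, fmt), tps in RESULTS.items():
        print(f"{model} {fmt}: 500 tokens in {seconds_for(500, tps):.1f} s")
```

At 19.06 tokens/sec, a 500-token answer from Llama3 70B takes about 26 seconds, versus about 4 seconds from Llama3 8B at Q4_K_M.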

Practical Recommendations: Use Cases and Workarounds

Llama3 70B: Pushing the Boundaries

A single 24 GB RTX 4090 cannot hold Llama3 70B entirely in VRAM even at Q4_K_M, since the weights alone are around 40 GB; part of the model would have to be offloaded to the CPU and system RAM, which is much slower. Two GPUs keep the whole model in VRAM, making this setup a viable option for developers and researchers pushing the boundaries of LLM capabilities. Here are some potential use cases:

- Advanced Research: Complex tasks such as scientific research or highly creative content generation can benefit from the larger model's stronger output quality.

- Specialized Applications: Building custom applications that demand the power of Llama3 70B, such as code generation or complex dialogue systems, might be possible on this setup.
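With two GPUs, a common approach is to give each card a contiguous range of the model's transformer layers, in proportion to the memory available on it (llama.cpp's `--tensor-split` option expresses this kind of ratio). The sketch below shows only the bookkeeping: `split_layers` is a hypothetical helper, not code from any framework, and the 80-layer count is taken from Llama3 70B's published configuration.

```python
from typing import List, Tuple


def split_layers(n_layers: int, ratios: List[float]) -> List[Tuple[int, int]]:
    """Assign contiguous [start, end) layer ranges to devices in proportion to `ratios`."""
    total = sum(ratios)
    ranges = []
    start = 0
    cumulative = 0.0
    for i, r in enumerate(ratios):
        cumulative += r
        # The last device takes whatever remains, so every layer is assigned exactly once.
        end = n_layers if i == len(ratios) - 1 else round(n_layers * cumulative / total)
        ranges.append((start, end))
        start = end
    return ranges


if __name__ == "__main__":
    # Two identical 24 GB cards: an even 1:1 split of Llama3 70B's 80 layers.
    for gpu, (start, end) in enumerate(split_layers(80, [1, 1])):
        print(f"GPU {gpu}: layers {start}..{end - 1} ({end - start} layers)")
```

With an even split each 4090 holds 40 layers, roughly half of the quantized weights. In a layer split, a single generation stream passes through the cards one after the other, so the second GPU mainly adds memory capacity rather than doubling speed.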

Workarounds:

- Model Pruning and Quantization: Experimenting with pruning techniques and more aggressive (lower-bit) quantization formats can shrink the model's memory footprint further and speed up generation, usually at some cost in output quality.

- Cloud-based Solutions: For demanding tasks or situations where local hardware is limited, consider cloud-based solutions like Google Colab or Amazon SageMaker.

- Smaller LLMs: If your application doesn't require the full power of Llama3 70B, explore smaller models like Llama3 8B for a more efficient and cost-effective solution.

FAQ

What is a Token?

A token is a unit of language in the context of an LLM. It's like a building block of text, representing a word, punctuation mark, or even a part of a word.

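What is "Q4_K_M" Quantization?

Q4_K_M is a 4-bit "k-quant" format used by llama.cpp and its GGUF model files. It stores most weights in about 4 bits while keeping some tensors at higher precision; the "M" denotes the medium variant of the 4-bit k-quants. Think of it like measuring with a coarser scale: it sacrifices a bit of accuracy for a large reduction in memory usage and faster generation.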

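Why is F16 not feasible for Llama3 70B?

F16 (16-bit floating point) is the unquantized baseline: it stores every weight in 16 bits, more than three times the roughly 4.8 bits per weight that Q4_K_M averages. For a model with about 70 billion parameters, that comes to roughly 140 GB for the weights alone, far more than the 48 GB of combined VRAM on two 4090s.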

How can I improve the performance of my LLM setup?

There are several ways:

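- Upgrade Your Hardware: Use GPUs with more VRAM, or add more GPUs, so that larger models or higher-precision formats fit entirely in GPU memory.

- Optimize Your Setup: Make sure every layer of the model is actually offloaded to the GPUs and that your inference framework is built with GPU support; even a few layers left on the CPU can slow generation considerably.

- Adjust the Context Window: Lowering the context window size (the maximum number of tokens the model considers at once) shrinks the memory used by the KV cache, which can free enough VRAM to keep the whole model on the GPUs.

- Explore Alternative Frameworks: Experiment with different inference frameworks, such as llama.cpp, vLLM, or Hugging Face's Transformers; speed for the same model can differ noticeably between them.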
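Why a shorter context helps: besides the weights, the runtime keeps a key/value (KV) cache whose size grows linearly with the context length. The sketch below estimates it from the model's shape, assuming Llama3 70B's published configuration (80 layers, 8 key/value heads via grouped-query attention, head dimension 128) and a 16-bit cache; runtimes that quantize the cache or allocate extra buffers will differ.

```python
GIB = 1024 ** 3


def kv_cache_bytes(n_ctx: int, n_layers: int, n_kv_heads: int,
                   head_dim: int, bytes_per_elem: int = 2) -> int:
    """Size of the K and V caches: 2 tensors x layers x KV heads x head_dim x tokens x bytes."""
    return 2 * n_layers * n_kv_heads * head_dim * n_ctx * bytes_per_elem


# Assumed Llama3 70B shape, taken from its published model configuration.
LLAMA3_70B = {"n_layers": 80, "n_kv_heads": 8, "head_dim": 128}

if __name__ == "__main__":
    for n_ctx in (2048, 4096, 8192):
        size_gib = kv_cache_bytes(n_ctx, **LLAMA3_70B) / GIB
        print(f"context {n_ctx:>5} tokens: {size_gib:.3f} GiB of 16-bit KV cache")
```

Under these assumptions each token of context costs 320 KiB, so Llama3's full 8,192-token context takes 2.5 GiB, memory that could otherwise hold model weights.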
