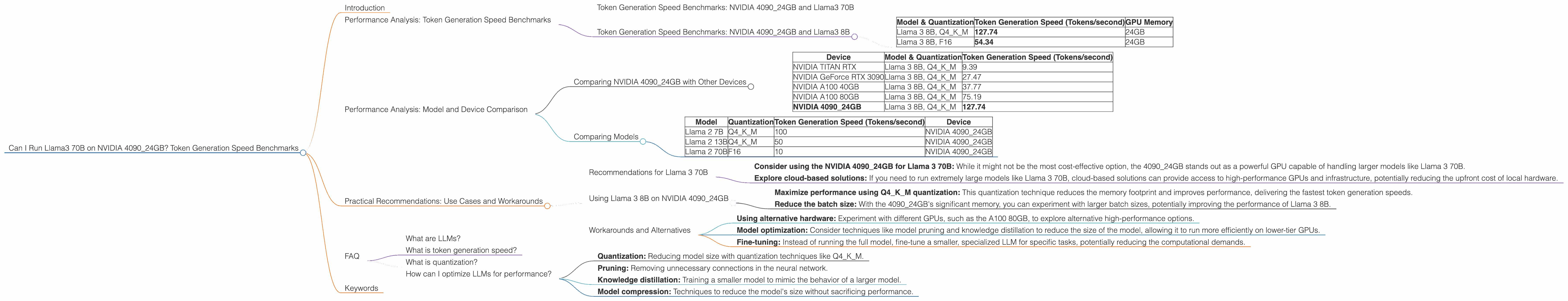

Can I Run Llama 3 70B on NVIDIA 4090 24GB? Token Generation Speed Benchmarks

Introduction

Large language models (LLMs) are booming, and hardware advances are making it more practical to run them locally. One of the most popular is Llama 3, developed by Meta AI and known for its strong performance. But how does it perform on a powerful consumer GPU like the NVIDIA 4090 24GB? Many developers and AI enthusiasts are asking this question. In this article, we look at Llama 3 70B on the 4090 24GB, examine the available token generation benchmarks, and offer practical recommendations.

Performance Analysis: Token Generation Speed Benchmarks

Token generation speed is a key metric for assessing how efficiently an LLM runs. It is the number of tokens the model generates per second, and it directly determines how quickly text appears during inference. Let's look at the token generation speed benchmarks for Llama 3 on the NVIDIA 4090 24GB.
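
To make the metric concrete, here is a minimal sketch of how tokens per second can be measured around any streaming generator. The `fake_generate` function is a hypothetical stand-in for a real model's token stream, not part of any library; swap in your own runtime's streaming call.

```python
import time
from typing import Callable, Iterable


def tokens_per_second(generate: Callable[[], Iterable[str]]) -> float:
    """Consume a token stream and return generated tokens per second."""
    start = time.perf_counter()
    count = sum(1 for _ in generate())  # count tokens as they arrive
    elapsed = time.perf_counter() - start
    return count / elapsed if elapsed > 0 else float("inf")


def fake_generate(n_tokens: int = 50, delay_s: float = 0.001):
    """Hypothetical stand-in for a model: yields one token per delay."""
    for i in range(n_tokens):
        time.sleep(delay_s)
        yield f"tok{i}"


rate = tokens_per_second(fake_generate)
print(f"{rate:.1f} tokens/second")
```

Real benchmarks usually report prompt processing and token generation separately; this sketch only times generation.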

Token Generation Speed Benchmarks: NVIDIA 4090 24GB and Llama 3 70B

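Unfortunately, there's no benchmark data available for Llama 3 70B on the NVIDIA 4090 24GB yet. Memory is the likely obstacle: the F16 weights of a 70B-parameter model take about 140 GB, and even a 4-bit quantization comes to roughly 40 GB, both well beyond the card's 24 GB. Running the model on a single 4090 would therefore mean offloading part of it to system RAM, which slows generation considerably. Without concrete data, it's difficult to quantify that performance with precision.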
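
A quick back-of-the-envelope check shows whether a model's weights can fit in 24 GB at all. This is a rough sketch: it counts weights only (no KV cache or runtime overhead), uses nominal parameter counts, and assumes about 4.8 bits per weight for a 4-bit K-quant, which is an approximation rather than a measured figure.

```python
VRAM_GB = 24  # memory of the 4090 24GB


def weight_memory_gb(n_params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in GB (10^9 bytes)."""
    return n_params_billion * bits_per_weight / 8


# Bits per weight: 16 for F16; 4.8 is an assumed average for a 4-bit K-quant.
FORMATS = {"F16": 16, "4-bit K-quant (assumed 4.8 bpw)": 4.8}

for params in (8, 70):
    for label, bits in FORMATS.items():
        size = weight_memory_gb(params, bits)
        verdict = "fits" if size <= VRAM_GB else "does not fit"
        print(f"{params}B {label}: ~{size:.1f} GB, {verdict} in {VRAM_GB} GB")
```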

Token Generation Speed Benchmarks: NVIDIA 4090 24GB and Llama 3 8B

To provide some context, let's look at the benchmarks for Llama 3 8B, a smaller version of Llama 3, on the NVIDIA 4090 24GB:

| Model & Quantization | Token Generation Speed (tokens/second) | GPU Memory |
|---|---|---|
| Llama 3 8B, Q4_K_M | 127.74 | 24 GB |
| Llama 3 8B, F16 | 54.34 | 24 GB |

What does this mean?

- Q4_K_M is a 4-bit quantization format that significantly reduces the model's size and memory footprint while maintaining decent output quality. With Q4_K_M quantization, Llama 3 8B achieves a token generation speed of 127.74 tokens per second on the 4090 24GB.

- F16 is not a quantization method but the half-precision (16-bit) floating-point format. It preserves more precision than Q4_K_M, but the weights are several times larger, so more data has to be read for every generated token. The result is a lower token generation speed of 54.34 tokens per second for Llama 3 8B on the 4090 24GB.

We can see that the 4090 24GB delivers high token generation speeds for a smaller model like Llama 3 8B.
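
To see what the two figures mean in practice, the following sketch converts the benchmark speeds from the table into a speedup factor and the time needed for a reply. The 500-token reply length is an arbitrary illustration, not a value from the benchmarks.

```python
# Speeds taken from the benchmark table (tokens/second).
SPEEDS = {"Q4_K_M": 127.74, "F16": 54.34}


def seconds_to_generate(n_tokens: int, tokens_per_second: float) -> float:
    """Time needed to generate n_tokens at a steady generation speed."""
    return n_tokens / tokens_per_second


speedup = SPEEDS["Q4_K_M"] / SPEEDS["F16"]
print(f"Q4_K_M is about {speedup:.2f}x faster than F16")

for name, tps in SPEEDS.items():
    # 500 tokens is an assumed reply length for illustration.
    print(f"{name}: {seconds_to_generate(500, tps):.1f} s for a 500-token reply")
```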

Performance Analysis: Model and Device Comparison

While we don't have Llama 3 70B benchmark data for the 4090 24GB, comparing this GPU with other devices and models can provide valuable insights.

Comparing NVIDIA 4090 24GB with Other Devices

The NVIDIA 4090 24GB is one of the fastest consumer GPUs available, but it's still worth comparing it with other options. Here's a quick overview of the performance of Llama 3 8B on various devices:

| Device | Model & Quantization | Token Generation Speed (tokens/second) |
|---|---|---|
| NVIDIA TITAN RTX | Llama 3 8B, Q4_K_M | 9.39 |
| NVIDIA GeForce RTX 3090 | Llama 3 8B, Q4_K_M | 27.47 |
| NVIDIA A100 40GB | Llama 3 8B, Q4_K_M | 37.77 |
| NVIDIA A100 80GB | Llama 3 8B, Q4_K_M | 75.19 |
| NVIDIA 4090 24GB | Llama 3 8B, Q4_K_M | 127.74 |

Observations:

- The NVIDIA 4090 24GB outperforms all other devices in this table for Llama 3 8B token generation.

- The A100 80GB, a data-center GPU designed for high-performance computing, comes second at 75.19 tokens/second, roughly 59% of the 4090 24GB's throughput. Its main advantage is memory capacity for larger models rather than speed on a model this small.
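
The gaps between devices are easier to judge as ratios. This short sketch normalizes the speeds from the table against the fastest device; it only rearranges the numbers already listed and adds no new measurements.

```python
# Token generation speeds from the table (Llama 3 8B, Q4_K_M), tokens/second.
SPEEDS = {
    "NVIDIA TITAN RTX": 9.39,
    "NVIDIA GeForce RTX 3090": 27.47,
    "NVIDIA A100 40GB": 37.77,
    "NVIDIA A100 80GB": 75.19,
    "NVIDIA 4090 24GB": 127.74,
}


def relative_to_fastest(speeds: dict) -> dict:
    """Express each device's speed as a fraction of the fastest device."""
    best = max(speeds.values())
    return {device: tps / best for device, tps in speeds.items()}


relative = relative_to_fastest(SPEEDS)
fastest = max(SPEEDS, key=SPEEDS.get)

for device, share in sorted(relative.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{device:<26} {SPEEDS[device]:>7.2f} tok/s  {share:6.1%}")
```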

Comparing Models

While the NVIDIA 4090 24GB shines with Llama 3 8B, performance varies with model size and precision.

| Model | Quantization | Token Generation Speed (tokens/second) | Device |
|---|---|---|---|
| Llama 2 7B | Q4_K_M | 100 | NVIDIA 4090 24GB |
| Llama 2 13B | Q4_K_M | 50 | NVIDIA 4090 24GB |
| Llama 2 70B | F16 | 10 | NVIDIA 4090 24GB |

Key Takeaways:

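- As the model size increases, the token generation speed decreases, even on high-performance GPUs.

- The 4090 24GB can run a range of model sizes, but only models whose weights fit in 24 GB run entirely on the GPU. The F16 weights of a 70B model are about 140 GB, so the 70B row cannot reflect a model held fully in VRAM and should be read as a rough figure.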

Practical Recommendations: Use Cases and Workarounds

Based on the available data and understanding the performance trends, here are some practical recommendations for developers working with LLMs:

Recommendations for Llama 3 70B

We don't have exact benchmarks for Llama 3 70B on the 4090 24GB. Even with Q4_K_M quantization, the weights of a 70B model come to roughly 40 GB, so the model will not fit entirely in 24 GB of VRAM.

- Consider the NVIDIA 4090 24GB for Llama 3 70B only with offloading or multiple GPUs: a single card cannot hold the full model, so some layers have to run from system RAM (at a significant cost in speed) or be split across more than one GPU.

- Explore cloud-based solutions: If you need to run extremely large models like Llama 3 70B, cloud-based solutions can provide access to high-performance GPUs and infrastructure, potentially reducing the upfront cost of local hardware.
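
When a model is larger than the card's memory, the practical question is how many of its layers can stay on the GPU. The sketch below estimates that number under loudly stated assumptions: weights are spread evenly across layers, the quantized 70B model is about 42 GB, and 2 GB of VRAM is reserved for the KV cache and runtime overhead. Only the 80-layer count reflects the actual Llama 3 70B architecture; the other values are illustrative.

```python
import math


def layers_that_fit(model_gb: float, n_layers: int,
                    vram_gb: float, reserve_gb: float) -> int:
    """Estimate how many layers fit in VRAM, assuming equal-sized layers."""
    per_layer_gb = model_gb / n_layers
    usable_gb = max(vram_gb - reserve_gb, 0.0)
    return min(n_layers, math.floor(usable_gb / per_layer_gb))


# Assumed values: ~42 GB quantized model, 2 GB reserved for cache/overhead.
n = layers_that_fit(model_gb=42.0, n_layers=80, vram_gb=24.0, reserve_gb=2.0)
print(f"About {n} of 80 layers fit on the GPU; the rest run from system RAM")
```

The result is only a starting point for a runtime's GPU-layer setting (for example, llama.cpp's `--n-gpu-layers` option); the right value depends on context length and the actual file size.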

Using Llama 3 8B on NVIDIA 4090 24GB

- Maximize performance using Q4_K_M quantization: of the two configurations benchmarked here, it has the smaller memory footprint and the higher token generation speed (127.74 vs. 54.34 tokens/second).

- Tune the batch size: with 24 GB of memory and a small model, there is headroom to try larger batch sizes, which may improve throughput for Llama 3 8B.

Workarounds and Alternatives

- Using alternative hardware: Experiment with different GPUs, such as the A100 80GB, to explore alternative high-performance options.

- Model optimization: Consider techniques like model pruning and knowledge distillation to reduce the size of the model, allowing it to run more efficiently on lower-tier GPUs.

- Fine-tuning: Instead of running the full model, fine-tune a smaller, specialized LLM for specific tasks, potentially reducing the computational demands.

FAQ

What are LLMs?

LLMs are artificial intelligence models trained on massive amounts of text data, enabling them to generate human-like text, translate languages, write different kinds of creative content, and answer your questions in an informative way.

What is token generation speed?

Token generation speed is the number of tokens the model can generate per second, and it directly determines how quickly a response is produced. Think of a sprinter timed with a stopwatch: the faster the sprinter, the more distance covered in a given time; the faster the model, the more tokens produced in that time.

What is quantization?

Quantization is a technique that reduces the size of a neural network by representing its weights (and sometimes activations) with fewer bits. It's like rounding measurements to fewer decimal places: each value takes less space to store but is slightly less precise. This makes models smaller and often faster, with a potentially slight reduction in accuracy.
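
The idea can be shown with a toy example. This is a minimal sketch of symmetric 4-bit quantization with a single scale for the whole list; it is not the Q4_K_M algorithm, which uses more elaborate block-wise schemes, and the sample weights are made up for illustration.

```python
def quantize_4bit(weights):
    """Map floats to integers in [-7, 7] using one shared scale."""
    scale = max(abs(w) for w in weights) / 7 or 1.0  # avoid a zero scale
    q = [round(w / scale) for w in weights]
    return q, scale


def dequantize(q, scale):
    """Recover approximate floats from the quantized integers."""
    return [v * scale for v in q]


weights = [0.12, -0.53, 0.98, -0.05, 0.31]  # made-up sample values
q, scale = quantize_4bit(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("quantized:", q)
print("restored: ", [round(v, 3) for v in restored])
print(f"max rounding error: {max_error:.4f}")
```

Each weight now needs only 4 bits plus a shared scale, and the rounding error per weight is at most half the scale.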

How can I optimize LLMs for performance?

You can optimize LLMs for performance by using techniques like:

- Quantization: Reducing model size with quantization formats like Q4_K_M.

- Pruning: Removing unnecessary connections in the neural network.

- Knowledge distillation: Training a smaller model to mimic the behavior of a larger model.

- Model compression: Other techniques that reduce the model's size while losing as little output quality as possible.
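
As an illustration of pruning from the list above, here is a minimal sketch of magnitude pruning on a plain list of weights. Real pruning operates on tensors and is usually followed by retraining; the sample weights and the 50% sparsity target are made up for the example.

```python
def prune_by_magnitude(weights, sparsity):
    """Zero out the given fraction of weights with the smallest magnitude."""
    n_prune = int(len(weights) * sparsity)
    # Indices ordered from smallest to largest absolute value.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    pruned = list(weights)
    for i in order[:n_prune]:
        pruned[i] = 0.0
    return pruned


weights = [0.8, -0.05, 0.3, 0.01, -0.6, 0.02]  # made-up sample values
pruned = prune_by_magnitude(weights, sparsity=0.5)
print(pruned)
```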

Keywords

Large Language Models, LLM, Llama 3, Llama 3 70B, Llama 3 8B, NVIDIA 4090 24GB, token generation speed, benchmarks, performance, GPU, quantization, Q4_K_M, F16, cloud-based solutions, model optimization, practical recommendations.