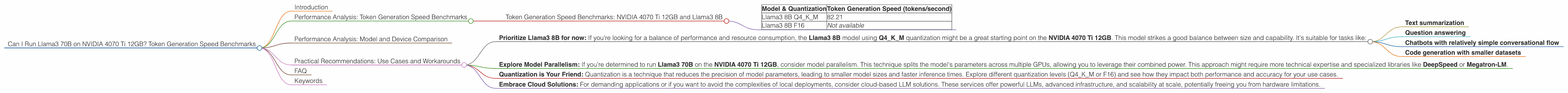

Can I Run Llama3 70B on NVIDIA 4070 Ti 12GB? Token Generation Speed Benchmarks

Introduction

The world of Large Language Models (LLMs) is abuzz with excitement. These powerful AI systems can generate human-like text, translate languages, write different kinds of creative content, and answer your questions in an informative way. Running these models locally, on your own machine, has long been a goal for many, especially developers.

This article looks at local LLM performance, specifically the NVIDIA 4070 Ti 12GB graphics card and whether it can handle the behemoth Llama 3 70B model. We'll explore the potential bottlenecks, analyze the token generation speed benchmarks that exist for this card, and offer practical recommendations based on the available data.

Performance Analysis: Token Generation Speed Benchmarks

Token generation speed is the holy grail of local LLM deployment. It's the rate, measured in tokens per second, at which your model produces output; a token is a word or a piece of a word. The faster the token generation, the more responsive and interactive your LLM applications can be.
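As a rough illustration of where a tokens/second figure comes from, the sketch below times a token stream and divides the token count by the elapsed time. `fake_generate` is a hypothetical stand-in for a real model and is not part of any library; a real benchmark would also report prompt processing separately from generation.

```python
import time
from typing import Callable, Iterable


def tokens_per_second(generate: Callable[[], Iterable[str]]) -> float:
    """Count the tokens yielded by `generate` and divide by wall-clock time."""
    start = time.perf_counter()
    count = sum(1 for _ in generate())
    elapsed = time.perf_counter() - start
    return count / elapsed if elapsed > 0 else float("inf")


def fake_generate(n_tokens: int = 50, delay_s: float = 0.001):
    """Hypothetical model stand-in: yields one token every `delay_s` seconds."""
    for i in range(n_tokens):
        time.sleep(delay_s)
        yield f"tok{i}"


rate = tokens_per_second(lambda: fake_generate(50, 0.001))
print(f"measured speed: {rate:.1f} tokens/second")
```

The printed number depends on the machine and on timer resolution, so treat it as a demonstration of the method rather than a benchmark.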

Token Generation Speed Benchmarks: NVIDIA 4070 Ti 12GB and Llama3 8B

The NVIDIA 4070 Ti 12GB shows decent performance with the Llama3 8B model, especially when it comes to generation speed. Here's a breakdown:

| Model & Quantization | Token Generation Speed (tokens/second) |
|---|---|
| Llama3 8B Q4_K_M | 82.21 |
| Llama3 8B F16 | Not available |

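Key Takeaway: The NVIDIA 4070 Ti 12GB can comfortably handle the Llama3 8B model with Q4_K_M quantization, at about 82 tokens/second. This might be sufficient for many use cases, especially if you prioritize response speed. No F16 result is available, and the likely reason is memory: 8 billion parameters at 2 bytes each come to roughly 16 GB of weights, which is more than the card's 12 GB of VRAM. Even on a card where F16 fits, it would be expected to generate tokens more slowly than Q4_K_M, because more data has to be read from memory for every token.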

Performance Analysis: Model and Device Comparison

It's important to relate the NVIDIA 4070 Ti 12GB's performance to other devices and models to truly understand its capabilities.

Unfortunately, we don't have benchmark data for the Llama3 70B model on the NVIDIA 4070 Ti 12GB, so we can't measure how this card performs with the larger model. However, we can draw some inferences from the existing data.

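Based on anecdotal evidence from other developers, the NVIDIA 4070 Ti 12GB struggles to run the Llama3 70B model smoothly. The main reason is the model's sheer size: even with 4-bit quantization, the weights of a 70-billion-parameter model occupy roughly 40 GB, more than three times the card's 12 GB of VRAM, before counting the memory needed for the context.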
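The memory argument can be checked with simple arithmetic: weight size is roughly parameter count times bits per weight, divided by 8. The sketch below is a back-of-the-envelope estimate, not a measurement. It uses nominal parameter counts of 8 and 70 billion, and the figure of about 4.8 bits per weight for Q4_K_M is an assumption; the effective value varies slightly between models and ignores the KV cache and runtime overhead.

```python
def weight_footprint_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in GB (10^9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


# Assumed values: nominal parameter counts and an approximate Q4_K_M bit width.
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.8}
MODELS = {"Llama3 8B": 8e9, "Llama3 70B": 70e9}
VRAM_GB = 12.0  # NVIDIA 4070 Ti 12GB

for model, n_params in MODELS.items():
    for fmt, bits in BITS_PER_WEIGHT.items():
        size = weight_footprint_gb(n_params, bits)
        verdict = "fits in" if size < VRAM_GB else "exceeds"
        print(f"{model} {fmt}: ~{size:.1f} GB ({verdict} {VRAM_GB:.0f} GB VRAM)")
```

Under these assumptions only the 4-bit 8B model fits in 12 GB, which is consistent with it being the only configuration that has a benchmark result.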

Consider this analogy: Imagine a small car that can comfortably carry 4 people. If you try to squeeze 10 people into it, it will be cramped, slow, and uncomfortable. The Llama3 70B model is the group of 10 people, and the NVIDIA 4070 Ti 12GB is the car.

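This doesn't necessarily mean that running the Llama3 70B on the NVIDIA 4070 Ti 12GB is impossible. It might still be feasible with specific optimizations, such as aggressive quantization (4-bit or lower) combined with keeping only part of the model on the GPU and the rest in system RAM, at a significant cost in speed. Running in "half-precision" (FP16) does not help here: at 2 bytes per parameter, the 70B weights alone need about 140 GB. Advanced techniques like model parallelism are another option, but they require additional GPUs.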
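One optimization of this kind is partial offloading, where only some of the model's layers are placed on the GPU and the remainder run from system RAM (llama.cpp, for example, exposes this through its `--n-gpu-layers` option). The sketch below estimates how many layers might fit. Llama 3 70B has 80 transformer layers; the 42 GB model size (a 4-bit estimate), the 2 GB reserve for context and overhead, and the idea that all layers are equally sized are simplifying assumptions.

```python
import math


def gpu_layers_that_fit(model_gb: float, n_layers: int,
                        vram_gb: float, reserve_gb: float) -> int:
    """Estimate how many equally sized layers fit in the usable VRAM."""
    per_layer_gb = model_gb / n_layers
    usable_gb = max(0.0, vram_gb - reserve_gb)
    return min(n_layers, math.floor(usable_gb / per_layer_gb))


# Assumed inputs: ~42 GB for a 4-bit 70B model, 2 GB kept free on the GPU.
layers_on_gpu = gpu_layers_that_fit(model_gb=42.0, n_layers=80,
                                    vram_gb=12.0, reserve_gb=2.0)
print(f"layers on GPU: {layers_on_gpu} of 80")
```

With these numbers only about a quarter of the layers land on the GPU, so most of the work would fall to the CPU and generation would be far slower than the 8B result.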

Practical Recommendations: Use Cases and Workarounds

Here are some recommendations based on the available data:

Prioritize Llama3 8B for now: If you're looking for a balance of performance and resource consumption, the Llama3 8B model with Q4_K_M quantization is a good starting point on the NVIDIA 4070 Ti 12GB. It fits in VRAM with room to spare and is suitable for tasks like:

- Text summarization

- Question answering

- Chatbots with relatively simple conversational flow

- Code generation for small, self-contained tasks

Explore Model Parallelism: If you're determined to run Llama3 70B locally, consider model parallelism. This technique splits the model's parameters across multiple GPUs, allowing you to leverage their combined memory and compute, so it only applies if you can add more cards alongside the NVIDIA 4070 Ti 12GB. This approach requires more technical expertise and specialized libraries like DeepSpeed or Megatron-LM.
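To give a feel for the bookkeeping behind model parallelism, here is a minimal sketch in plain Python. It does not use DeepSpeed or Megatron-LM and does not move any tensors; it only estimates how many 12 GB cards a model would need and assigns contiguous blocks of layers to each one. The 42 GB model size and the 10 GB of usable memory per card are assumptions, and the helper names are hypothetical.

```python
import math


def gpus_needed(model_gb: float, usable_gb_per_gpu: float) -> int:
    """Smallest number of GPUs whose combined usable memory holds the model."""
    return math.ceil(model_gb / usable_gb_per_gpu)


def split_layers(n_layers: int, n_gpus: int) -> list:
    """Assign contiguous, near-equal ranges of layer indices to each GPU."""
    base, extra = divmod(n_layers, n_gpus)
    ranges, start = [], 0
    for gpu in range(n_gpus):
        count = base + (1 if gpu < extra else 0)
        ranges.append(range(start, start + count))
        start += count
    return ranges


# Assumed inputs: ~42 GB 4-bit 70B model, 80 layers, ~10 GB usable per card.
n_gpus = gpus_needed(model_gb=42.0, usable_gb_per_gpu=10.0)
for gpu, layers in enumerate(split_layers(80, n_gpus)):
    print(f"GPU {gpu}: layers {layers.start}-{layers.stop - 1}")
```

Real frameworks also have to handle communication between devices, which this sketch ignores entirely.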

Quantization is Your Friend: Quantization is a technique that reduces the precision of model parameters, leading to smaller model sizes and faster inference times. Compare different precision levels (for example, 4-bit Q4_K_M against the unquantized F16 baseline) and see how they impact both performance and accuracy for your use cases.
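The idea of trading precision for size can be shown with a toy example. The sketch below rounds a handful of weights to 4-bit signed integers that share one scale factor, then converts them back. This is a deliberately simple symmetric scheme chosen for illustration; it is not the actual Q4_K_M format, which is more elaborate.

```python
def quantize_4bit(weights):
    """Map floats to integers in [-8, 7] using one shared scale factor."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    quantized = [max(-8, min(7, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate floats from the 4-bit integers."""
    return [q * scale for q in quantized]


weights = [0.12, -0.5, 0.33, 0.7]  # made-up example values
quantized, scale = quantize_4bit(weights)
restored = dequantize(quantized, scale)

for w, q, r in zip(weights, quantized, restored):
    print(f"original {w:+.3f} -> int {q:+d} -> restored {r:+.3f}")
```

Each restored value differs from the original by at most half a quantization step, which is the accuracy cost paid for storing 4 bits per weight instead of 16.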

Embrace Cloud Solutions: For demanding applications, or if you want to avoid the complexities of local deployment, consider cloud-based LLM solutions. These services offer powerful LLMs, advanced infrastructure, and scalability, potentially freeing you from hardware limitations.

FAQ

Q: What is quantization?

A: Quantization is a technique used to compress large language models. It involves reducing the precision of parameters in a model, making it smaller and faster. Think of it like simplifying a recipe by rounding ingredients to the nearest whole number. The result might not be as accurate as the original recipe, but it's still good enough for many purposes.

Q: Why is token generation speed important?

A: Token generation speed determines how quickly your LLM can generate text. Imagine having a conversation with a chatbot that takes minutes to respond to each question. That would be a frustrating experience. Fast token generation speeds are crucial for creating more interactive and responsive applications.
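To put numbers on this, response time is roughly the answer length in tokens divided by the generation speed. The sketch below uses the 82.21 tokens/second measured for Llama3 8B Q4_K_M; the 300-token answer length and the 2 tokens/second "slow" rate are hypothetical values chosen only for comparison, and prompt processing time is ignored.

```python
def response_time_s(n_tokens: int, tokens_per_second: float) -> float:
    """Seconds needed to generate `n_tokens` at a steady generation speed."""
    return n_tokens / tokens_per_second


ANSWER_TOKENS = 300  # hypothetical answer length

rates = {
    "Llama3 8B Q4_K_M on 4070 Ti (measured)": 82.21,
    "hypothetical slow setup": 2.0,
}
for label, rate in rates.items():
    seconds = response_time_s(ANSWER_TOKENS, rate)
    print(f"{label}: {seconds:.1f} s for {ANSWER_TOKENS} tokens")
```

At the measured rate the answer arrives in a few seconds, while the hypothetical slow setup would take minutes.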

Q: What are the limitations of using the NVIDIA 4070 Ti 12GB for LLMs?

A: The NVIDIA 4070 Ti 12GB is a powerful GPU, but its 12 GB of VRAM makes it a challenge to efficiently run very large models like Llama3 70B, whose weights do not fit on the card even when quantized.

Q: What are some other potential workarounds for running Llama3 70B locally?

A: Consider exploring alternative methods of running the model, such as using a dedicated deep learning cluster, or leveraging techniques like model distillation to create smaller, faster versions of the Llama3 70B model.

Keywords

Llama3 70B, Llama3 8B, NVIDIA 4070 Ti 12GB, token generation speed, model parallelism, quantization, LLM inference, GPU benchmarks, local deployment, cloud solutions