Can I Run Llama3 70B on NVIDIA 3090 24GB x2? Token Generation Speed Benchmarks

Introduction

The world of large language models (LLMs) is buzzing with excitement, and everyone wants to experience the magic of AI-powered text generation firsthand. But the question arises: can your hardware handle it? Running these sophisticated models locally requires some serious horsepower, and the choice of device and model configuration plays a crucial role.

This article delves into the performance of running the Llama3 70B model on a beefy dual NVIDIA 3090 24GB setup. We'll explore the token generation speed benchmarks, compare different model and device combinations, and provide practical recommendations for your AI adventures.

Performance Analysis: Token Generation Speed Benchmarks

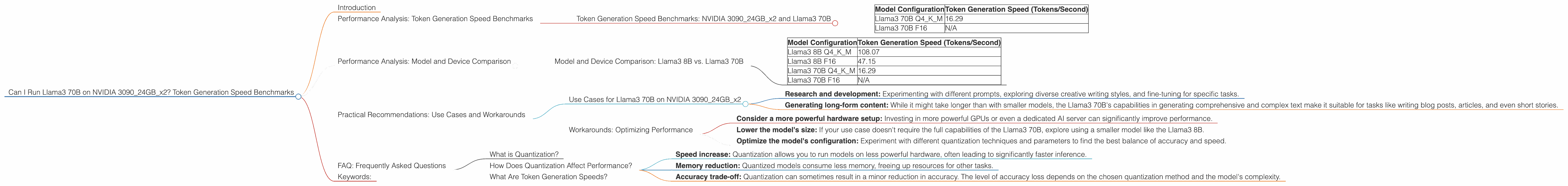

Token Generation Speed Benchmarks: NVIDIA 3090 24GB x2 and Llama3 70B

The first step is to understand how fast our chosen hardware can churn out tokens, which are the building blocks of text generation. Here's how the NVIDIA 3090 24GB x2 setup performs with the Llama3 70B model:

| Model Configuration | Token Generation Speed (Tokens/Second) |
|---|---|
| Llama3 70B Q4KM | 16.29 |
| Llama3 70B F16 | N/A |

Breaking it down:

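- Q4KM: This label corresponds to the Q4_K_M quantization type used by llama.cpp, a 4-bit "k-quant" format in its medium variant. Weights are stored in small blocks with shared scale factors, at roughly 4 to 5 bits per weight on average. This cuts the memory footprint to roughly a third of F16, allowing the model to fit on hardware with less VRAM.

- F16: This configuration stores each weight as a 16-bit floating-point number (2 bytes per weight). It preserves the original precision of the weights but requires far more memory.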

Important Note: We couldn't find any data for the Llama3 70B F16 configuration on this specific hardware setup. This might be because:

- The F16 configuration is almost certainly too demanding for the hardware. At 2 bytes per weight, a 70-billion-parameter model needs roughly 140 GB for the weights alone, far more than the 48 GB of combined VRAM on two 3090s.

- The data isn't available yet. Research and benchmarking are constantly evolving in the LLM space.
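To make the memory argument concrete, here is a minimal Python sketch that estimates the size of the weights alone and compares it with the combined VRAM of two 24GB cards. The function names are illustrative, the 70e9 parameter count is the nominal figure, and the 4.8 bits per weight used for Q4KM is an assumed average, not a measured value. The KV cache, activations, and runtime overhead are ignored, so real requirements are somewhat higher.

```python
# Rough weight-memory estimate; ignores KV cache, activations and runtime overhead.
GIB = 1024 ** 3


def weight_memory_gib(n_params: float, bits_per_weight: float) -> float:
    """Return the approximate size of the weights alone, in GiB."""
    return n_params * bits_per_weight / 8 / GIB


def fits(n_params: float, bits_per_weight: float, vram_gib: float) -> bool:
    """True if the weights alone fit in the given VRAM budget."""
    return weight_memory_gib(n_params, bits_per_weight) <= vram_gib


N_PARAMS_70B = 70e9      # assumption: nominal parameter count
TOTAL_VRAM_GIB = 2 * 24  # two 24GB cards, treated as 48 GiB for simplicity

# Assumption: ~4.8 bits per weight as an average for a Q4KM-style format.
for label, bits in [("F16", 16.0), ("Q4KM (assumed ~4.8 bits/weight)", 4.8)]:
    size = weight_memory_gib(N_PARAMS_70B, bits)
    ok = fits(N_PARAMS_70B, bits, TOTAL_VRAM_GIB)
    print(f"{label}: ~{size:.0f} GiB of weights, fits in {TOTAL_VRAM_GIB} GiB: {ok}")
```

Under these assumptions the F16 weights come to about 130 GiB and do not fit, while the 4-bit weights come to about 39 GiB and do, which is consistent with only the Q4KM row having a result.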

Performance Analysis: Model and Device Comparison

Model and Device Comparison: Llama3 8B vs. Llama3 70B

The Llama3 70B model is impressive, but it's not the only game in town. Let's compare it to its smaller sibling, the Llama3 8B model:

| Model Configuration | Token Generation Speed (Tokens/Second) |
|---|---|
| Llama3 8B Q4KM | 108.07 |
| Llama3 8B F16 | 47.15 |
| Llama3 70B Q4KM | 16.29 |
| Llama3 70B F16 | N/A |

Observations:

- Smaller model, faster performance: At the same quantization level, the Llama3 8B model generates tokens about 6.6 times faster than the Llama3 70B (108.07 vs. 16.29 tokens/second). This is expected, as a smaller model has far fewer weights to read and compute for every token.

- Quantization matters: For the 8B model, the Q4KM configuration is about 2.3 times faster than F16 (108.07 vs. 47.15 tokens/second). No F16 figure is available for the 70B model, so the same comparison can't be made there. This highlights the importance of choosing the right quantization strategy for optimal performance.

To put this in perspective: at these rates, generating 10,000 tokens with the Llama3 8B Q4KM model would take roughly 93 seconds. With the Llama3 70B Q4KM model, the same task would take roughly 614 seconds (just over 10 minutes), or more than six times longer. This difference in speed can be crucial for interactive or high-volume applications.
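The arithmetic behind these estimates is a single division. The sketch below uses the tokens-per-second figures from the comparison table above. It assumes throughput stays constant for the whole generation and ignores prompt processing time, so treat the results as rough estimates. The names `SPEEDS_TPS` and `generation_seconds` are illustrative.

```python
# Tokens-per-second figures taken from the comparison table above.
SPEEDS_TPS = {
    "Llama3 8B Q4KM": 108.07,
    "Llama3 8B F16": 47.15,
    "Llama3 70B Q4KM": 16.29,
}


def generation_seconds(n_tokens: int, tokens_per_second: float) -> float:
    """Estimated time to generate n_tokens at a constant rate."""
    return n_tokens / tokens_per_second


for name, tps in SPEEDS_TPS.items():
    secs = generation_seconds(10_000, tps)
    print(f"{name}: {secs:.1f} s ({secs / 60:.1f} min)")

# Ratio between the two Q4KM configurations.
ratio = SPEEDS_TPS["Llama3 8B Q4KM"] / SPEEDS_TPS["Llama3 70B Q4KM"]
print(f"8B Q4KM is {ratio:.1f}x faster than 70B Q4KM")
```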

Practical Recommendations: Use Cases and Workarounds

Use Cases for Llama3 70B on NVIDIA 3090 24GB x2

Even with the slower token generation speed, the Llama3 70B model on this hardware setup can be useful for:

- Research and development: Experimenting with different prompts, exploring diverse creative writing styles, and fine-tuning for specific tasks.

- Generating long-form content: While it might take longer than with smaller models, the Llama3 70B's capabilities in generating comprehensive and complex text make it suitable for tasks like writing blog posts, articles, and even short stories.

Workarounds: Optimizing Performance

If you're seeking to maximize token generation speed with the Llama3 70B model, here are some strategies:

- Consider a more powerful hardware setup: Investing in more powerful GPUs or even a dedicated AI server can significantly improve performance.

- Lower the model's size: If your use case doesn't require the full capabilities of the Llama3 70B, explore using a smaller model like the Llama3 8B.

- Optimize the model's configuration: Experiment with different quantization techniques and parameters to find the best balance of accuracy and speed.

FAQ: Frequently Asked Questions

What is Quantization?

Quantization is a technique for reducing the size of LLM weights. It converts the weights from 16-bit or 32-bit floating-point numbers to lower-precision formats such as 8-bit or 4-bit integers. This significantly reduces memory consumption and can accelerate inference.

Imagine a large box filled with marbles of different sizes (the original weights). Quantization is like replacing those marbles with smaller, more uniform marbles (the quantized weights). You lose some precision, but you can now store many more "marbles" in the same box (less memory).
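As a rough illustration of the idea, the sketch below quantizes one small block of weights to signed 4-bit integers with a single shared scale factor, then restores approximate values. This is a deliberately simplified scheme for explanation only. It is not the actual Q4KM format, which is more elaborate, and the function names and sample weights are made up for the example.

```python
from __future__ import annotations


def quantize_block(weights: list[float]) -> tuple[float, list[int]]:
    """Map one block of floats to signed 4-bit codes in [-8, 7] plus one scale."""
    max_abs = max(abs(w) for w in weights)
    # The largest magnitude maps to +/-7; an all-zero block gets a dummy scale.
    scale = max_abs / 7 if max_abs > 0 else 1.0
    codes = [max(-8, min(7, round(w / scale))) for w in weights]
    return scale, codes


def dequantize_block(scale: float, codes: list[int]) -> list[float]:
    """Recover approximate weights from the scale and the 4-bit codes."""
    return [scale * c for c in codes]


# Hypothetical sample weights, chosen only for illustration.
block = [0.12, -0.53, 0.91, -0.07, 0.33, -0.88, 0.02, 0.45]
scale, codes = quantize_block(block)
restored = dequantize_block(scale, codes)
max_err = max(abs(a - b) for a, b in zip(block, restored))

print("scale:", scale)
print("4-bit codes:", codes)
print("max abs error:", max_err)
```

Each weight now needs only 4 bits plus a share of the per-block scale, and the rounding error per weight is at most half the scale. That is the precision given up in exchange for the smaller footprint.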

How Does Quantization Affect Performance?

Quantization can have a substantial impact on performance:

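- Speed increase: Token generation is typically limited by how fast the weights can be read from memory, so smaller weights often mean significantly faster inference. Quantization also lets a model run on hardware that could not hold it at full precision.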

- Memory reduction: Quantized models consume less memory, freeing up resources for other tasks.

- Accuracy trade-off: Quantization can sometimes result in a minor reduction in accuracy. The level of accuracy loss depends on the chosen quantization method and the model's complexity.

What Are Token Generation Speeds?

Token generation speed refers to how fast a model can create tokens (units of text) per second. It's a crucial metric for evaluating the efficiency of an LLM in text generation and inference tasks.

Think of it like a typing speed test, but instead of keystrokes per minute, we're measuring tokens per second. The higher the token generation speed, the faster the model can generate text.
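If you want to measure this yourself, the basic approach is to count tokens and divide by elapsed wall-clock time. The sketch below shows that pattern with a stand-in generator. `fake_generate` is a hypothetical placeholder, not a real model API, and you would replace it with whatever streaming call your inference library provides.

```python
from __future__ import annotations

import time
from typing import Callable, Iterable


def measure_tokens_per_second(
    generate: Callable[[], Iterable[str]],
) -> tuple[int, float]:
    """Consume a token stream and return (token_count, tokens_per_second)."""
    start = time.perf_counter()
    count = sum(1 for _ in generate())
    elapsed = time.perf_counter() - start
    return count, (count / elapsed if elapsed > 0 else float("inf"))


def fake_generate() -> Iterable[str]:
    # Hypothetical stand-in for a real model: 50 tokens with a small delay each.
    for i in range(50):
        time.sleep(0.001)
        yield f"tok{i}"


count, tps = measure_tokens_per_second(fake_generate)
print(f"{count} tokens at {tps:.1f} tokens/second")
```

Published benchmarks usually report prompt processing and token generation separately, so a measurement like this one is only comparable to the figures above if it times the generation phase alone.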

Keywords:

Llama3, 70B, NVIDIA 3090, 24GB, token generation speed, benchmarks, performance, AI, LLM, quantization, Q4KM, F16, GPU, inference, use cases, workarounds, model comparison, practical recommendations, hardware, memory, accuracy, speed, development, research, content generation, long-form content, writing, blog posts, articles, stories.