Can I Run Llama3 70B on NVIDIA 3090 24GB? Token Generation Speed Benchmarks

Introduction

The world of large language models (LLMs) is abuzz with excitement! These powerful AI models can generate text, translate languages, write different kinds of creative content, and answer your questions in an informative way. But with the rise of LLMs comes the question of how to actually run them, especially the behemoths like Llama 3 70B. Can you tame these computational beasts on your humble NVIDIA 3090_24GB? Let's dive deep into the performance, token generation speed benchmarks, and practical considerations of running Llama3 70B on this popular GPU.

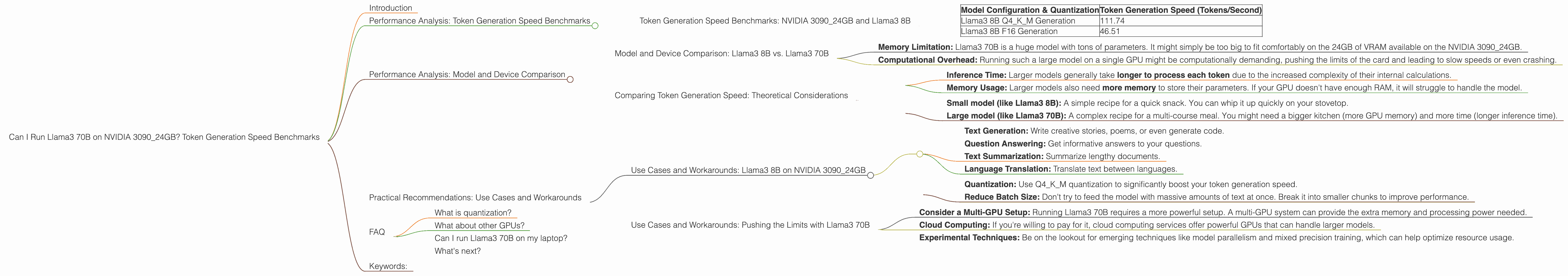

Performance Analysis: Token Generation Speed Benchmarks

Token Generation Speed Benchmarks: NVIDIA 3090_24GB and Llama3 8B

First, let's look at the smaller Llama3 8B model. This model is a great starting point because it's more manageable for a single GPU. We can measure its token generation speed, which is the number of tokens the model produces per second while generating text. It's a key metric for understanding how fast the model responds.
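
To make the metric concrete, here is a minimal sketch of how tokens per second could be measured. The `fake_generate` function is a hypothetical stand-in for a real model (it just sleeps and yields placeholder tokens), so the printed number says nothing about any GPU; only the timing pattern is the point.

```python
import time
from typing import Callable, Iterable, Iterator


def tokens_per_second(generate: Callable[[], Iterable[str]]) -> float:
    """Time one generation run and return tokens produced per second."""
    start = time.perf_counter()
    count = sum(1 for _ in generate())  # consume the stream, counting tokens
    elapsed = time.perf_counter() - start
    return count / elapsed if elapsed > 0 else float("inf")


def fake_generate(n_tokens: int = 50, delay_s: float = 0.001) -> Iterator[str]:
    """Hypothetical stand-in for a model: yields one token every delay_s seconds."""
    for i in range(n_tokens):
        time.sleep(delay_s)
        yield f"tok{i}"


if __name__ == "__main__":
    print(f"{tokens_per_second(fake_generate):.1f} tokens/second")
```

With a real model you would pass a function that streams tokens from your inference library in place of `fake_generate`.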

| Model Configuration & Quantization | Token Generation Speed (Tokens/Second) |
|---|---|
| Llama3 8B Q4KM Generation | 111.74 |
| Llama3 8B F16 Generation | 46.51 |

Remember: Q4KM refers to a 4-bit quantization format (written Q4_K_M in llama.cpp). Quantization is a technique that reduces the size of the model and allows it to run on less powerful hardware. F16 refers to 16-bit floating point, the higher-precision format that serves as the unquantized baseline in this comparison.

Analyzing the Llama3 8B Token Generation Speed Benchmarks

Looking at the Llama3 8B results, we see that the Q4KM quantization significantly boosts token generation speed: 111.74 versus 46.51 tokens/second, or roughly 2.4 times the F16 speed. This is a classic trade-off: quantization might slightly reduce the quality of your generated text, but you get a big performance boost.
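
The comparison can be checked with a few lines of arithmetic. This sketch uses only the two benchmark figures from the table; the 500-token response length is an arbitrary assumption chosen for illustration.

```python
# Benchmark figures from the table above (tokens/second).
Q4KM_SPEED = 111.74
F16_SPEED = 46.51


def speedup(fast: float, slow: float) -> float:
    """Ratio of two throughputs."""
    return fast / slow


def seconds_for(n_tokens: int, tokens_per_second: float) -> float:
    """Time needed to generate n_tokens at a steady rate."""
    return n_tokens / tokens_per_second


if __name__ == "__main__":
    print(f"Q4KM is {speedup(Q4KM_SPEED, F16_SPEED):.2f}x faster than F16")
    # 500 tokens is an assumed response length, not a benchmark setting.
    for name, speed in (("Q4KM", Q4KM_SPEED), ("F16", F16_SPEED)):
        print(f"{name}: {seconds_for(500, speed):.1f} s for 500 tokens")
```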

Analogy: Imagine a super-efficient car. You can choose a high-performance model with lots of power but it guzzles gas. Or you can opt for a more fuel-efficient model with less power, but it gets you where you need to go with less consumption. Quantization is like choosing that fuel-efficient car.

Performance Analysis: Model and Device Comparison

Model and Device Comparison: Llama3 8B vs. Llama3 70B

Now, let's address the elephant in the room: Llama3 70B. Unfortunately, we don't have any benchmark data for Llama3 70B on the NVIDIA 3090_24GB. This is where things get tricky.

Why are we missing data for Llama3 70B on this particular GPU? There are a couple of possibilities:

- Memory Limitation: Llama3 70B has roughly 70 billion parameters. At 16-bit precision the weights alone need about 140GB, and even at 4 bits per weight they need about 35GB, which is more than the 24GB of VRAM available on the NVIDIA 3090_24GB.

- Computational Overhead: Running such a large model on a single GPU might be computationally demanding, pushing the limits of the card and leading to slow speeds or even crashing.
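
The memory limitation can be estimated with back-of-the-envelope arithmetic. The sketch below is a rough lower bound under stated assumptions: nominal parameter counts (8 and 70 billion), exactly 16 or 4 bits per weight (real quantized formats use slightly more), and no allowance for the KV cache or activations.

```python
VRAM_GB = 24.0  # NVIDIA 3090_24GB


def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate memory for the weights alone, in decimal gigabytes."""
    return n_params * bits_per_weight / 8 / 1e9


def fits_in_vram(n_params: float, bits_per_weight: float,
                 vram_gb: float = VRAM_GB) -> bool:
    """True if the weights alone fit; real usage needs extra headroom."""
    return weight_memory_gb(n_params, bits_per_weight) <= vram_gb


if __name__ == "__main__":
    # Nominal sizes and bit widths are assumptions for illustration.
    for name, params in (("Llama3 8B", 8e9), ("Llama3 70B", 70e9)):
        for bits in (16, 4):
            gb = weight_memory_gb(params, bits)
            verdict = "fits" if fits_in_vram(params, bits) else "does not fit"
            print(f"{name} at {bits}-bit: ~{gb:.0f} GB, {verdict} in {VRAM_GB:.0f} GB")
```

By this estimate both Llama3 8B configurations fit on the card, while neither Llama3 70B configuration does.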

Remember: LLMs are like hungry beasts. They require a lot of food (data) and a lot of energy (processing power) to run. Smaller models might be able to feast on a single GPU; larger models might need a whole buffet.

Comparing Token Generation Speed: Theoretical Considerations

While we don't have specific numbers for Llama3 70B on the NVIDIA 3090_24GB, here's a theoretical perspective:

- Inference Time: Larger models generally take longer to process each token due to the increased complexity of their internal calculations.

- Memory Usage: Larger models also need more memory to store their parameters. If your GPU doesn't have enough VRAM, it will struggle to handle the model.

Analogies for Model Size and Performance

Think of a model as a recipe:

- Small model (like Llama3 8B): A simple recipe for a quick snack. You can whip it up quickly on your stovetop.

- Large model (like Llama3 70B): A complex recipe for a multi-course meal. You might need a bigger kitchen (more GPU memory) and more time (longer inference time).

Practical Recommendations: Use Cases and Workarounds

Use Cases and Workarounds: Llama3 8B on NVIDIA 3090_24GB

Here's the good news: You can definitely run Llama3 8B on an NVIDIA 3090_24GB. It's a great option for many use cases, including:

- Text Generation: Write creative stories, poems, or even generate code.

- Question Answering: Get informative answers to your questions.

- Text Summarization: Summarize lengthy documents.

- Language Translation: Translate text between languages.

Workarounds for Limited Resources

- Quantization: Use Q4KM quantization to significantly boost your token generation speed.

- Reduce Batch Size: Don't try to feed the model massive amounts of text at once. Break long inputs into smaller chunks to keep memory use down and improve performance.
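
Breaking text into smaller chunks can be sketched as follows. This is a simplification: it splits on whitespace and counts words as a rough proxy for tokens, whereas a real pipeline would count tokens with the model's own tokenizer. The function name and the chunk size are illustrative assumptions.

```python
from typing import List


def chunk_words(text: str, max_words: int) -> List[str]:
    """Split text into consecutive chunks of at most max_words words."""
    if max_words <= 0:
        raise ValueError("max_words must be positive")
    words = text.split()
    return [
        " ".join(words[i:i + max_words])
        for i in range(0, len(words), max_words)
    ]


if __name__ == "__main__":
    document = "one two three four five six seven"
    for chunk in chunk_words(document, max_words=3):
        print(chunk)  # each chunk would be sent to the model separately
```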

Use Cases and Workarounds: Pushing the Limits with Llama3 70B

Running Llama3 70B on a single NVIDIA 3090_24GB might be pushing the limits, but it's not impossible.

- Consider a Multi-GPU Setup: Running Llama3 70B requires a more powerful setup. A multi-GPU system can provide the extra memory and processing power needed.

- Cloud Computing: If you're willing to pay for it, cloud computing services offer powerful GPUs that can handle larger models.

- Experimental Techniques: Be on the lookout for techniques like model parallelism, which splits a model across several devices, and mixed precision, which can help optimize resource usage.
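
For the multi-GPU option, a rough count of how many 24GB cards the weights would need can be sketched like this. The 2GB of per-card headroom is a hypothetical figure for the KV cache and runtime overhead, and the weight sizes assume a nominal 70 billion parameters at exactly 4 or 16 bits per weight.

```python
import math


def gpus_needed(weights_gb: float, vram_gb: float = 24.0,
                headroom_gb: float = 2.0) -> int:
    """Minimum number of cards so the weights fit in the usable VRAM."""
    usable = vram_gb - headroom_gb
    if usable <= 0:
        raise ValueError("headroom must be smaller than VRAM")
    return math.ceil(weights_gb / usable)


if __name__ == "__main__":
    # Assumed weight sizes: 70e9 parameters at 4 and 16 bits per weight.
    for label, gb in (("4-bit", 35.0), ("16-bit", 140.0)):
        print(f"Llama3 70B {label}: at least {gpus_needed(gb)} x 24GB GPUs")
```

This only counts memory. It says nothing about the speed you would get once the model is split across cards.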

FAQ

What is quantization?

Quantization is a technique to make large models smaller and faster. Imagine a model as a huge book filled with numbers. Quantization is like re-writing that book in a more compact alphabet, reducing the number of characters needed to represent the same words. This makes the book smaller and easier to read, just like a quantized model is faster and more efficient.
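
As a toy illustration of the idea, the sketch below rounds a handful of weights to 4-bit integer codes with a single shared scale. This is plain symmetric round-to-nearest quantization, chosen for simplicity; it is not the scheme Q4KM actually uses, and the example weights are made up.

```python
from typing import List, Tuple


def quantize_4bit(values: List[float]) -> Tuple[List[int], float]:
    """Map floats to integer codes in [-8, 7] using one shared scale."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    codes = [max(-8, min(7, round(v / scale))) for v in values]
    return codes, scale


def dequantize(codes: List[int], scale: float) -> List[float]:
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]


if __name__ == "__main__":
    weights = [0.7, -0.3, 0.12, 0.0]  # made-up example values
    codes, scale = quantize_4bit(weights)
    restored = dequantize(codes, scale)
    for w, c, r in zip(weights, codes, restored):
        print(f"{w:+.3f} -> code {c:+d} -> {r:+.3f}")
```

Each weight is stored in 4 bits instead of 16, at the cost of a small rounding error of at most half the scale.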

What about other GPUs?

While we focused on the NVIDIA 3090_24GB, other GPUs are also available. You might want to consider newer cards such as the RTX 40 series. Always check the specifications and benchmark data before making a purchase.

Can I run Llama3 70B on my laptop?

It's highly unlikely you can run Llama3 70B on a typical laptop. You'll need much more powerful hardware, like a dedicated server or cloud computing resources.

What's next?

Stay tuned for advancements in hardware and software that can make running large LLMs more accessible. The world of LLMs is constantly evolving.

Keywords:

Llama3, NVIDIA 3090_24GB, GPU, tokens/second, token generation speed, quantization, memory, processing power, LLM, large language model, performance benchmarks, use cases, workarounds, multi-GPU, cloud computing, model parallelism, mixed precision training