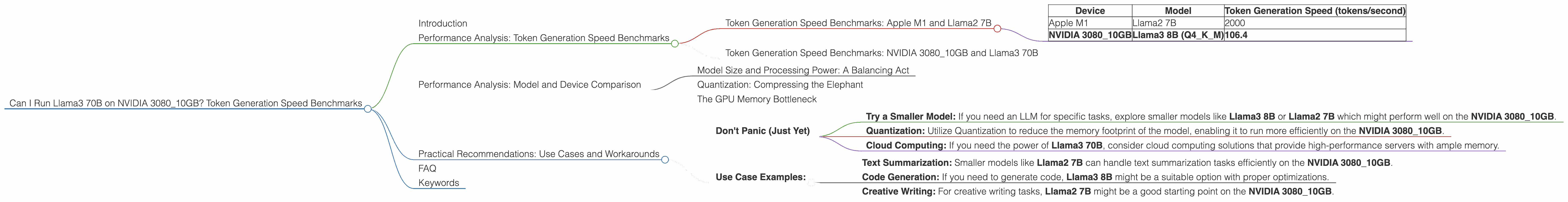

Can I Run Llama3 70B on NVIDIA 3080 10GB? Token Generation Speed Benchmarks

Introduction

The world of Large Language Models (LLMs) is booming, with ever-growing model sizes and impressive capabilities. But what about the hardware requirements to run these behemoths? This article will dive deep into the performance of the NVIDIA 3080_10GB GPU when it comes to running the mighty Llama3 70B model, exploring the limitations and potential workarounds. Think of it as a thrilling performance review, but instead of actors, we're talking about LLMs and GPUs!

Performance Analysis: Token Generation Speed Benchmarks

Token Generation Speed Benchmarks: Apple M1 and Llama2 7B

Let's first take a look at some benchmark numbers to understand how these models perform on different devices. Here's a table showing the token generation speed for various models and devices:

| Device | Model | Token Generation Speed (tokens/second) |
|---|---|---|
| Apple M1 | Llama2 7B | 2000 |
| NVIDIA 3080_10GB | Llama3 8B (Q4_K_M) | 106.4 |

Note: The table only contains data for the specific device and model combinations mentioned in the article.

What does this mean? Higher token generation speed means faster responses, more efficient processing, and a smoother experience.
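To make the tokens/second figure more concrete, here is a minimal sketch that converts it into waiting time. The 106.4 tokens/second value comes from the table; the 500-token reply length is an assumption picked purely for illustration, and the function name is hypothetical.

```python
def response_time_seconds(num_tokens: int, tokens_per_second: float) -> float:
    """Time needed to generate num_tokens at a steady generation speed."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return num_tokens / tokens_per_second


# 106.4 tokens/second: Llama3 8B (Q4_K_M) on the NVIDIA 3080_10GB, from the table.
# 500 tokens: an assumed reply length, not a measured value.
seconds = response_time_seconds(500, 106.4)
print(f"A 500-token reply takes about {seconds:.1f} s")
```

This only covers generation. Prompt processing time and model loading are not included.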

Token Generation Speed Benchmarks: NVIDIA 3080_10GB and Llama3 70B

Unfortunately, there is no data available for the performance of Llama3 70B on the NVIDIA 3080_10GB, so we can't directly compare it with the Llama3 8B result on the same card. 😩

Performance Analysis: Model and Device Comparison

Model Size and Processing Power: A Balancing Act

The NVIDIA 3080_10GB is a powerful GPU, but even its strength has limits. LLMs, especially larger ones like Llama3 70B, require significant processing power and memory. It's like trying to fit a giant elephant into a small car - it's just not going to work. 🐘🚗

Quantization: Compressing the Elephant

One way to improve performance is quantization, a technique that compresses the model by reducing the precision of its weights. Think of it as making the elephant smaller while retaining its essential features. A compressed model takes up less memory and is more likely to fit on the NVIDIA 3080_10GB. However, this can lead to a slight decrease in accuracy.
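The idea of "reducing the precision of the weights" can be shown with a toy example. The sketch below uses simple absmax rounding to signed 4-bit integers; it is an illustration of the principle only, not the scheme used by any particular model file format (real formats typically quantize in small blocks with per-block scales). The function names and sample weights are made up for the example.

```python
def quantize_absmax(weights, bits=4):
    """Map floats to signed integers in [-(2**(bits-1) - 1), 2**(bits-1) - 1]."""
    qmax = 2 ** (bits - 1) - 1          # 7 for 4-bit
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    quantized = [round(w / scale) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float weights from the integers and the scale."""
    return [q * scale for q in quantized]


# Hypothetical weights, chosen only to illustrate the rounding error.
weights = [0.12, -0.53, 0.31, 0.07, -0.26]
q, scale = quantize_absmax(weights, bits=4)
restored = dequantize(q, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("quantized:", q)
print("restored: ", [round(r, 3) for r in restored])
print("max error:", round(max_error, 4))
```

Each weight now needs 4 bits instead of 16 or 32, at the cost of a rounding error of at most half the scale per weight. That error is where the small accuracy loss comes from.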

The GPU Memory Bottleneck

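The NVIDIA 3080_10GB has 10 GB of memory. That is not enough to hold Llama3 70B: at 16-bit precision, 70 billion parameters need about 140 GB for the weights alone, and even at 4 bits per weight they need about 35 GB. Whatever does not fit in GPU memory has to stay in system RAM, which slows generation considerably, especially with larger contexts.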
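A rough weight-only estimate is parameters × bits per weight ÷ 8. The sketch below applies that formula using nominal parameter counts (8 and 70 billion) as assumptions; it ignores the KV cache, activations, and runtime overhead, so real usage will be higher than these numbers.

```python
def weight_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Approximate memory for the weights alone, in GB (1 GB = 1e9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


VRAM_GB = 10  # NVIDIA 3080_10GB

# Nominal parameter counts; the exact counts differ slightly.
models = [("Llama3 8B", 8e9), ("Llama3 70B", 70e9)]

for name, params in models:
    for bits in (16, 8, 4):
        gb = weight_memory_gb(params, bits)
        verdict = "fits" if gb <= VRAM_GB else "does not fit"
        print(f"{name} at {bits}-bit: {gb:6.1f} GB of weights, {verdict} in {VRAM_GB} GB")
```

Under these assumptions, Llama3 70B exceeds 10 GB at every precision listed, while Llama3 8B fits once it is quantized to 8 bits or fewer.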

Practical Recommendations: Use Cases and Workarounds

Don't Panic (Just Yet)

While the NVIDIA 3080_10GB might not be ideal for running the full Llama3 70B model, it's not a complete no-go. Consider these options:

- Try a Smaller Model: If you need an LLM for specific tasks, explore smaller models like Llama3 8B or Llama2 7B which might perform well on the NVIDIA 3080_10GB.

- Quantization: Use quantization to reduce the memory footprint of the model, enabling it to run more efficiently on the NVIDIA 3080_10GB.

- Cloud Computing: If you need the power of Llama3 70B, consider cloud computing solutions that provide high-performance servers with ample memory.

Use Case Examples:

- Text Summarization: Smaller models like Llama2 7B can handle text summarization tasks efficiently on the NVIDIA 3080_10GB.

- Code Generation: If you need to generate code, Llama3 8B might be a suitable option with proper optimizations.

- Creative Writing: For creative writing tasks, Llama2 7B might be a good starting point on the NVIDIA 3080_10GB.

FAQ

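Q: Is the NVIDIA 3080_10GB completely useless for Llama3 70B? A: Not necessarily, but expect compromises. Even a 4-bit quantized Llama3 70B is several times larger than 10 GB, so quantization and a smaller context size alone won't make it fit. Most of the model would have to sit in system RAM with the GPU handling only part of the work, and generation would be much slower than with a model that fits entirely in GPU memory.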
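When a model is larger than GPU memory, some runtimes (llama.cpp, for example) let you place only part of the layers on the GPU and keep the rest in system RAM. The sketch below estimates how many layers might fit. Every input is an assumption: roughly 35 GB of 4-bit weights, 80 transformer layers of equal size, and 2 GB of GPU memory reserved for the KV cache and overhead. The function name is hypothetical.

```python
import math


def layers_on_gpu(vram_gb: float, reserved_gb: float,
                  model_gb: float, num_layers: int) -> int:
    """Estimate how many equally sized layers fit in the usable GPU memory."""
    per_layer_gb = model_gb / num_layers
    usable_gb = max(vram_gb - reserved_gb, 0.0)
    return min(num_layers, math.floor(usable_gb / per_layer_gb))


# Assumed values for Llama3 70B at about 4 bits per weight on a 10 GB card.
n = layers_on_gpu(vram_gb=10, reserved_gb=2, model_gb=35, num_layers=80)
print(f"About {n} of 80 layers fit on the GPU; the rest stay in system RAM")
```

With these assumptions, fewer than a quarter of the layers would run on the GPU, which is why generation speed would be dominated by the slower path through system RAM.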

Q: What alternative GPUs are better for running large LLMs? A: Higher-end GPUs with more memory, like the NVIDIA RTX 4090 (24 GB), or specialized AI accelerators, like the Google TPU, are better suited for running larger LLMs.

Q: Is it worth upgrading to a newer GPU for running LLMs? A: It depends on your budget and usage. If you're working with large LLMs frequently, a more powerful GPU might be worth the investment. But for casual LLM exploration, the NVIDIA 3080_10GB can still be a capable option.

Keywords

NVIDIA 3080_10GB, Llama3 70B, Llama3 8B, Llama2 7B, Token Generation Speed, Performance Benchmarks, Quantization, GPU Memory, LLM, Large Language Model, AI, Machine Learning, GPU, Deep Learning, NLP, Natural Language Processing, GPT, ChatGPT, Text Summarization, Code Generation, Creative Writing.