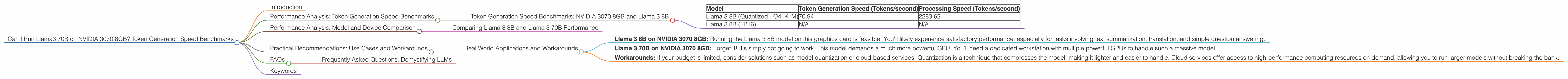

Can I Run Llama 3 70B on NVIDIA 3070 8GB? Token Generation Speed Benchmarks

Introduction

The world of large language models (LLMs) is abuzz with excitement, but running these behemoths locally can feel like a Herculean task. Can your humble NVIDIA 3070 8GB handle the might of Llama 3 70B? Let's dive deep and find out! We'll explore the performance of Llama 3 70B on this popular graphics card, comparing its token generation speeds to its smaller sibling, Llama 3 8B.

Think of it this way: Your GPU is like a language translator, trying to decipher the complex language of a giant book (LLM). The more powerful the GPU, the faster it can translate and produce new words (tokens).

This deep dive will demystify the world of local LLM deployment and empower you to make informed decisions about your hardware choices.

Performance Analysis: Token Generation Speed Benchmarks

Token Generation Speed Benchmarks: NVIDIA 3070 8GB and Llama 3 8B

Let's start with the smaller sibling, Llama 3 8B. We'll focus on two key metrics: *token generation speed* and *processing speed*, both measured in tokens per second. Token generation speed reflects how fast the model produces new tokens, one at a time. Processing speed, as the term is commonly used in benchmarks of this kind, is assumed here to mean prompt processing: how fast the model reads the input tokens before it starts generating.

| Model | Token Generation Speed (tokens/second) | Processing Speed (tokens/second) |
|---|---|---|
| Llama 3 8B (Quantized, Q4_K_M) | 70.94 | 2283.62 |
| Llama 3 8B (FP16) | N/A | N/A |

Note: The available data doesn't include token generation or processing speeds for Llama 3 8B at FP16 precision. A likely reason is memory: 8 billion parameters at 2 bytes each come to about 16 GB of weights, twice the card's 8 GB of VRAM.

Explanation: With Q4_K_M quantization, Llama 3 8B generates about 71 tokens per second on the NVIDIA 3070 8GB, which is comfortably fast for interactive use. Processing is far quicker, at 2283.62 tokens per second. The gap is expected if processing speed refers to reading the prompt: prompt tokens can be handled in parallel, whereas output tokens are generated one at a time.
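To make the two numbers concrete, here is a minimal sketch that turns them into a rough response-time estimate. It assumes that "processing speed" means prompt processing and that both speeds stay constant regardless of prompt length, which real runs won't match exactly. The function name `estimate_latency` and the example request sizes are made up for illustration.

```python
# Figures from the table above (Llama 3 8B, Q4_K_M, NVIDIA 3070 8GB).
PROMPT_TOKENS_PER_SEC = 2283.62     # "processing speed" (assumed: prompt processing)
GENERATION_TOKENS_PER_SEC = 70.94   # token generation speed


def estimate_latency(prompt_tokens: int, output_tokens: int) -> dict:
    """Return rough seconds spent reading the prompt and writing the reply."""
    prompt_s = prompt_tokens / PROMPT_TOKENS_PER_SEC
    generation_s = output_tokens / GENERATION_TOKENS_PER_SEC
    return {
        "prompt_s": prompt_s,
        "generation_s": generation_s,
        "total_s": prompt_s + generation_s,
    }


if __name__ == "__main__":
    # Hypothetical request: a 1,000-token prompt and a 300-token answer.
    for name, seconds in estimate_latency(1000, 300).items():
        print(f"{name}: {seconds:.2f}")
```

For this hypothetical request, reading the prompt takes under half a second while writing the answer takes a little over four seconds, so generation speed dominates the wait for typical chat-style use.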

Performance Analysis: Model and Device Comparison

Comparing Llama 3 8B and Llama 3 70B Performance

Now, the question is whether our NVIDIA 3070 8GB can manage the larger Llama 3 70B. Sadly, no benchmark data is available for this combination.

Why is this important?

The sheer size of the 70B parameter model necessitates a significantly more powerful GPU. Think of it as trying to fit a gigantic elephant into a small car. While the car can handle a smaller dog, the elephant wouldn't even fit in the trunk!

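While we don't have performance figures for the 70B model, we can still reason about it. Even at 4 bits per weight, 70 billion parameters take roughly 35 GB, more than four times the 3070's 8 GB of VRAM, so the model cannot be held entirely on the card. A model this size is also expected to have much lower token generation and processing speeds, because each token requires far more computation.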
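The size argument can be checked with back-of-the-envelope arithmetic. The sketch below estimates memory for the weights alone as parameters × bits per weight ÷ 8. It assumes round parameter counts (8 and 70 billion) and a nominal 4 bits per weight; real quantized files use slightly more, and the KV cache and activations need extra room on top. The helper names are hypothetical.

```python
GB = 1e9  # decimal gigabytes, to keep the arithmetic easy to follow


def weight_memory_gb(params_billions: float, bits_per_weight: float) -> float:
    """Memory needed for the weights alone, ignoring KV cache and activations."""
    total_bytes = params_billions * 1e9 * bits_per_weight / 8
    return total_bytes / GB


def fits_in_vram(params_billions: float, bits_per_weight: float, vram_gb: float) -> bool:
    """True if the weights alone fit; a real run needs some headroom beyond this."""
    return weight_memory_gb(params_billions, bits_per_weight) <= vram_gb


if __name__ == "__main__":
    vram_gb = 8.0  # NVIDIA 3070 8GB
    for name, params in [("Llama 3 8B", 8.0), ("Llama 3 70B", 70.0)]:
        for label, bits in [("FP16", 16), ("4-bit", 4)]:
            need = weight_memory_gb(params, bits)
            verdict = "fits" if fits_in_vram(params, bits, vram_gb) else "does not fit"
            print(f"{name} {label}: ~{need:.0f} GB of weights, {verdict} in {vram_gb:.0f} GB")
```

Under these assumptions, only the 4-bit 8B model (about 4 GB) fits in 8 GB; the 70B model needs about 35 GB at 4 bits and about 140 GB at FP16.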

Practical Recommendations: Use Cases and Workarounds

Real World Applications and Workarounds

So, what does this mean for you? Here are some recommendations based on our findings:

- Llama 3 8B on NVIDIA 3070 8GB: Running the Llama 3 8B model on this graphics card is feasible. You'll likely experience satisfactory performance, especially for tasks involving text summarization, translation, and simple question answering.

- Llama 3 70B on NVIDIA 3070 8GB: Forget it! The model's weights alone far exceed 8 GB of VRAM, so this card can't run it by itself. You'll need much more GPU memory, typically a dedicated workstation with multiple powerful GPUs.

- Workarounds: If your budget is limited, consider solutions such as model quantization or cloud-based services. Quantization is a technique that compresses the model, making it lighter and easier to handle. Cloud services offer access to high-performance computing resources on demand, allowing you to run larger models without breaking the bank.

Let's make an analogy: Imagine you're trying to build a house. A smaller model like Llama 3 8B is like building a cozy cottage. You can manage this with basic tools and materials. However, a larger model like Llama 3 70B is like constructing a massive skyscraper. You need specialized tools, a bigger team, and more resources.

FAQs

Frequently Asked Questions: Demystifying LLMs

Q: What's the difference between quantized and FP16 models?

A: Quantization is a technique to reduce the size of a model by using fewer bits to represent its data. It's like compressing a large image file to make it smaller. FP16 models use 16-bit floating-point numbers, while quantized models use fewer bits, leading to lower precision but also lower memory requirements. This results in faster inference and smaller file sizes.
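As an illustration of the idea, here is a minimal sketch of one simple scheme, absmax quantization, in plain Python. It is not how Q4_K_M or any specific library works internally; real formats split weights into blocks and store extra scaling data. The function names and sample weights are made up for the example.

```python
def quantize_absmax(weights, bits=4):
    """Map floats to signed integers in [-(2**(bits-1) - 1), 2**(bits-1) - 1]."""
    qmax = 2 ** (bits - 1) - 1                    # 7 for 4-bit
    largest = max(abs(w) for w in weights)
    scale = largest / qmax if largest else 1.0    # one shared scale for all values
    quantized = [round(w / scale) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate floats from the stored integers and the scale."""
    return [q * scale for q in quantized]


if __name__ == "__main__":
    weights = [0.12, -0.53, 0.98, -0.05, 0.31]    # made-up values
    q, scale = quantize_absmax(weights, bits=4)
    restored = dequantize(q, scale)
    print("integers:", q)
    print("restored:", [round(r, 3) for r in restored])
    print("max error:", max(abs(a - b) for a, b in zip(weights, restored)))
```

Each weight is stored as a small integer plus one shared scale, which is where the memory saving comes from. The restored values differ slightly from the originals, which is the precision loss mentioned above.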

Q: Can I run Llama 3 70B locally with a more powerful GPU?

A: It depends on the GPU, and above all on its VRAM (video memory). A high-end GPU with enough VRAM to hold the model's weights might handle the 70B model, but performance may still be limited. Check the model's memory requirements against the GPU's specifications before attempting to run it locally.

Q: What about other GPUs, like the RTX 4090?

A: The RTX 4090 is a significantly more powerful GPU than the 3070. It might be capable of running the Llama 3 70B model, but its 24 GB of VRAM is still less than the roughly 35 GB that a 4-bit 70B model needs for its weights, so check the specific requirements and benchmarks for that GPU and model combination.

Keywords

Llama 3, Llama 3 8B, Llama 3 70B, NVIDIA 3070 8GB, Token Generation Speed, Processing Speed, Quantization, FP16, GPU Benchmarks, LLM, Large Language Model, Local Deployment, Workarounds, Cloud Services, Deep Dive, Performance Analysis, Practical Recommendations, FAQs, Data, Models, Hardware, Software, Deep Learning, AI, Machine Learning