Can I Run Llama3 70B on Apple M3 Max? Token Generation Speed Benchmarks

Introduction

The world of large language models (LLMs) is exploding, with new models and advancements emerging almost daily. One of the key questions on every developer's mind is: can I run these powerful LLMs on my local machine? The answer, as always, is "it depends."

This article dives deep into the performance of the latest Apple M3 Max chip, trying to tame the gargantuan Llama3 70B model. We'll dissect the token generation speed, compare it to other models and configurations, and provide practical insights to help you decide if running Llama3 70B locally on your M3 Max is feasible.

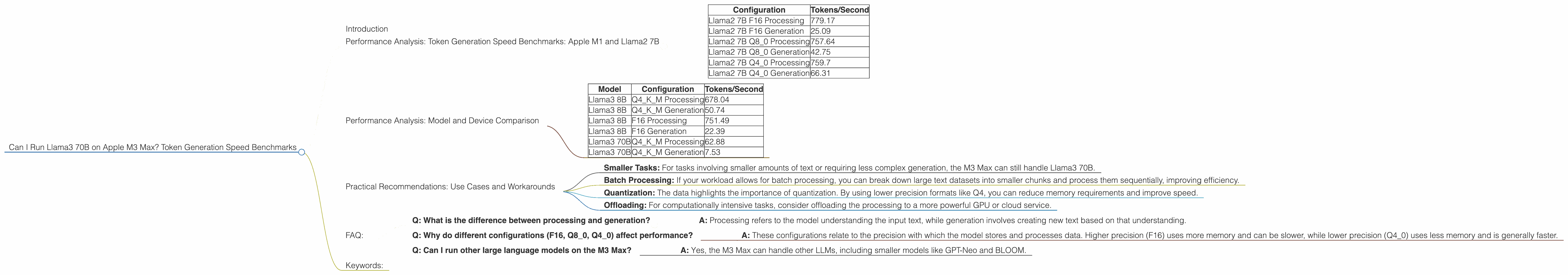

Performance Analysis: Token Generation Speed Benchmarks: Apple M3 Max and Llama2 7B

To put the Llama3 70B results in context, it helps to look first at how the M3 Max handles a smaller model. Here's a breakdown of the processing and generation speeds (tokens per second) for Llama2 7B running on the M3 Max.

| Configuration | Tokens/Second |
|---|---|
| Llama2 7B F16 Processing | 779.17 |
| Llama2 7B F16 Generation | 25.09 |
| Llama2 7B Q8_0 Processing | 757.64 |
| Llama2 7B Q8_0 Generation | 42.75 |
| Llama2 7B Q4_0 Processing | 759.7 |
| Llama2 7B Q4_0 Generation | 66.31 |

Note: "Processing" refers to the speed at which the model reads the input prompt, while "Generation" reflects how fast it produces new output tokens. A token is a word or a piece of a word, so tokens per second is not exactly words per second.

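As you can see, the M3 Max handles Llama2 7B comfortably. Processing speed stays roughly flat across formats (about 757 to 779 tokens per second), while generation speed climbs as precision drops: 25.09 tokens per second at F16, 42.75 at Q8_0, and 66.31 at Q4_0. The Q4_0 configuration gives up some accuracy in exchange for the fastest generation and the smallest memory footprint.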
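To make the effect of quantization on generation speed easier to compare, the figures above can be expressed relative to the F16 baseline. The following is a minimal sketch; the function name is illustrative and the only data used are the generation speeds from the table.

```python
# Generation speeds (tokens/second) for Llama2 7B on the M3 Max, from the table above.
GENERATION_TPS = {"F16": 25.09, "Q8_0": 42.75, "Q4_0": 66.31}


def speedup_over_f16(tps_by_format):
    """Return each format's generation speed as a multiple of the F16 speed."""
    baseline = tps_by_format["F16"]
    return {fmt: tps / baseline for fmt, tps in tps_by_format.items()}


if __name__ == "__main__":
    for fmt, ratio in speedup_over_f16(GENERATION_TPS).items():
        print(f"{fmt}: {ratio:.2f}x")
```

On these numbers, Q8_0 generates about 1.7 times as fast as F16 and Q4_0 about 2.6 times as fast.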

Performance Analysis: Model and Device Comparison

Now, let's turn our attention to the star of the show: Llama3 70B. The data reveals some interesting patterns:

| Model | Configuration | Tokens/Second |
|---|---|---|
| Llama3 8B | Q4_K_M Processing | 678.04 |
| Llama3 8B | Q4_K_M Generation | 50.74 |
| Llama3 8B | F16 Processing | 751.49 |
| Llama3 8B | F16 Generation | 22.39 |
| Llama3 70B | Q4_K_M Processing | 62.88 |
| Llama3 70B | Q4_K_M Generation | 7.53 |

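Observation: While the M3 Max performs well with the 8B Llama3 model, speed drops sharply with the 70B model. With 4-bit quantization, generation falls from 50.74 to 7.53 tokens per second (roughly 6.7 times slower) and processing falls from 678.04 to 62.88 tokens per second. This is expected: the 70B model has almost nine times as many parameters, so every token requires reading far more weight data from memory and doing far more computation.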

Analogy: Imagine trying to fit a giant puzzle (70B model) on a smaller table (M3 Max) versus a smaller puzzle (8B model). The smaller puzzle fits comfortably, while the larger one requires more effort and time.
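To translate tokens per second into something closer to wall-clock time, one rough approach is to add the time spent processing the prompt to the time spent generating the reply. The sketch below assumes the benchmark speeds stay constant regardless of prompt length, which is a simplification; the function name and the 512-token prompt and 256-token reply are hypothetical values chosen for illustration.

```python
# Tokens/second on the M3 Max for the 4-bit quantized rows of the table above.
SPEEDS = {
    "Llama3 8B": {"processing": 678.04, "generation": 50.74},
    "Llama3 70B": {"processing": 62.88, "generation": 7.53},
}


def estimate_seconds(prompt_tokens, output_tokens, processing_tps, generation_tps):
    """Rough latency: time to read the prompt plus time to generate the reply."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


if __name__ == "__main__":
    # Hypothetical request: 512-token prompt, 256-token reply.
    for name, speed in SPEEDS.items():
        seconds = estimate_seconds(512, 256, speed["processing"], speed["generation"])
        print(f"{name}: about {seconds:.0f} s")
```

Under these assumptions the same request takes roughly 6 seconds on the 8B model and roughly 42 seconds on the 70B model, most of it spent in generation.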

Important: The data doesn't include results for Llama3 70B using the F16 configuration. The likely reason is memory: at 2 bytes per parameter, the F16 weights alone come to roughly 140 GB, which is more than the 128 GB of unified memory on the largest M3 Max configuration.
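A back-of-the-envelope estimate shows why precision matters so much for a 70B model. The sketch below counts weight storage only and ignores the KV cache and runtime overhead. The bits-per-weight values for Q8_0 and Q4_0 are assumptions based on the llama.cpp block layout (32 weights plus one 16-bit scale per block); Q4_K_M is a mixed format and is left out, with Q4_0 standing in as the 4-bit example. The round 70 billion parameter count is also an approximation.

```python
# Assumed storage cost per weight, in bits.
# Q8_0: 32 x 8-bit values + 16-bit scale = 272 bits per 32 weights = 8.5
# Q4_0: 32 x 4-bit values + 16-bit scale = 144 bits per 32 weights = 4.5
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}


def weights_gb(params_billion, bits_per_weight):
    """Approximate weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_weight / 8


if __name__ == "__main__":
    for fmt, bits in BITS_PER_WEIGHT.items():
        print(f"70B at {fmt}: about {weights_gb(70, bits):.1f} GB")
```

By this estimate the weights need about 140 GB at F16 but only about 39 GB at Q4_0, which is the difference between not fitting at all and fitting with room to spare on a high-memory M3 Max.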

Practical Recommendations: Use Cases and Workarounds

While Llama3 70B might not be a perfect fit for the M3 Max for real-time applications, there are still interesting use cases and workarounds:

- Smaller Tasks: For tasks involving smaller amounts of text or requiring less complex generation, the M3 Max can still handle Llama3 70B.

- Batch Processing: If your workload doesn't need interactive responses, you can break large text datasets into smaller chunks and process them sequentially in the background, where a speed of 7.53 tokens per second is much less of a constraint.

- Quantization: The data highlights the importance of quantization. Lower-precision formats such as Q4_K_M reduce memory requirements and improve generation speed, and they are what makes the 70B model usable on this hardware in the first place.

- Offloading: For computationally intensive tasks, consider offloading the processing to a more powerful GPU or cloud service.

What is quantization? Imagine a large image. You can represent it with many different levels of detail (colors). Quantization is like reducing the number of colors, making the image smaller and faster to process, while keeping the essential information.
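For model weights, the same idea can be sketched in a few lines. The example below is a simplified illustration of block-wise "absmax" quantization, not the exact Q4_0 or Q4_K_M format: a block of floats is stored as small integers plus one shared scale, and multiplying back recovers approximate values. All names and sample values are hypothetical.

```python
def quantize_block(values, bits=4):
    """Map floats to signed integers that share one scale (absmax quantization)."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit signed values
    absmax = max(abs(v) for v in values)
    scale = absmax / qmax if absmax > 0 else 1.0
    # Round to the nearest integer level and clamp to the representable range.
    quants = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return scale, quants


def dequantize_block(scale, quants):
    """Recover approximate floats from the integers and the shared scale."""
    return [q * scale for q in quants]


if __name__ == "__main__":
    weights = [0.7, -0.3, 0.12, 0.0]  # made-up sample weights
    scale, quants = quantize_block(weights)
    restored = dequantize_block(scale, quants)
    print("integers:", quants)
    print("restored:", [round(v, 3) for v in restored])
```

Here 0.12 comes back as roughly 0.1: the small rounding error is the accuracy given up in exchange for storing each weight in 4 bits instead of 16.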

FAQ:

- Q: What is the difference between processing and generation?

- A: Processing refers to the model understanding the input text, while generation involves creating new text based on that understanding.

- Q: Why do different configurations (F16, Q8_0, Q4_0) affect performance?

- A: These configurations relate to the precision with which the model stores and processes data. Higher precision (F16) uses more memory and generates tokens more slowly, while lower precision (Q4_0) uses less memory and generates tokens faster, at some cost in accuracy. In the benchmarks above, processing speed is much less sensitive to the format than generation speed.

- Q: Can I run other large language models on the M3 Max?

- A: Yes, the M3 Max can handle other LLMs, including smaller models such as GPT-Neo and the smaller BLOOM variants.

Keywords:

LLM, Llama3, Llama2, Apple M3 Max, M3 Max, Token Generation Speed, Performance, Benchmarks, Quantization, F16, Q4, Q8, Local Inference, GPU, Processing, Generation, Use Cases, Workarounds, Offloading, Cloud, Batch Processing, Developers, Geeks, AI, Machine Learning.