Can I Run Llama3 70B on Apple M1? Token Generation Speed Benchmarks

Introduction

The world of large language models (LLMs) is exploding, with new models being released constantly and pushing the boundaries of what's possible. But with these advancements comes a new challenge: how do we run these massive models on our own devices?

Imagine generating creative content, translating languages instantly, or even writing code, all powered by a local AI model on your laptop. This is the promise of "on-device AI," and it's becoming increasingly accessible, thanks to innovative techniques like quantization and the power of modern hardware.

In this deep dive, we'll explore the performance of Llama3 70B, a cutting-edge language model, on the popular Apple M1 chip. By examining token generation speed benchmarks, we'll uncover the capabilities and limitations of this powerful combination.

We'll also delve into practical recommendations for using LLMs like Llama3 70B on your M1 device, highlighting use cases and workarounds.

So, buckle up, fellow developers, as we embark on a journey into the fascinating world of local LLMs!

Performance Analysis: Token Generation Speed Benchmarks

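Let's get down to brass tacks! We're interested in the token generation speed of Llama3 70B on the Apple M1 chip: how many tokens per second the model produces once it starts answering. This is a critical metric because it determines how quickly a response appears on screen. Think of it like the words per minute (WPM) of a typist, but in the world of AI. A separate figure, processing speed, measures how quickly the model reads the prompt before it begins to generate.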

Token Generation Speed Benchmarks: Apple M1 and Llama3 70B

Unfortunately, we have no data for the Llama3 70B model on the Apple M1 chip, so we cannot provide token generation speed benchmarks for this combination. The most likely reason is memory. The base M1 tops out at 16 GB of unified memory, while a 70B model quantized to roughly 4 bits per weight still needs on the order of 40 GB for its weights alone.
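To make the size problem concrete, here is a rough back-of-the-envelope sketch. It assumes a nominal 70e9 parameters, a block layout of 32 weights sharing one 16-bit scale (the layout commonly described for Q8_0 and Q4_0), and 16 GB as the largest base M1 configuration. None of these numbers come from the benchmark data, and the estimate ignores the KV cache and runtime overhead.

```python
# Rough weight-memory estimate for a quantized model.
# Assumptions (not from the benchmark data): nominal 70e9 parameters,
# 32-weight blocks with one 16-bit scale each, 16 GB maximum on a base M1.

BLOCK_SIZE = 32   # weights per quantization block (assumed)
SCALE_BITS = 16   # one 16-bit scale stored per block (assumed)
M1_MAX_MEMORY_GB = 16


def bits_per_weight(quant_bits: int) -> float:
    """Effective bits per weight, including the per-block scale."""
    return (BLOCK_SIZE * quant_bits + SCALE_BITS) / BLOCK_SIZE


def weight_gigabytes(n_params: float, quant_bits: int) -> float:
    """Approximate size of the weights in GB (10^9 bytes)."""
    return n_params * bits_per_weight(quant_bits) / 8 / 1e9


for name, bits in [("Q8_0", 8), ("Q4_0", 4)]:
    size = weight_gigabytes(70e9, bits)
    verdict = "fits" if size < M1_MAX_MEMORY_GB else "does not fit"
    print(f"{name}: ~{size:.1f} GB of weights, {verdict} in {M1_MAX_MEMORY_GB} GB")
```

Even the 4-bit variant comes out at more than twice the memory of the largest base M1, before counting anything besides the weights.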

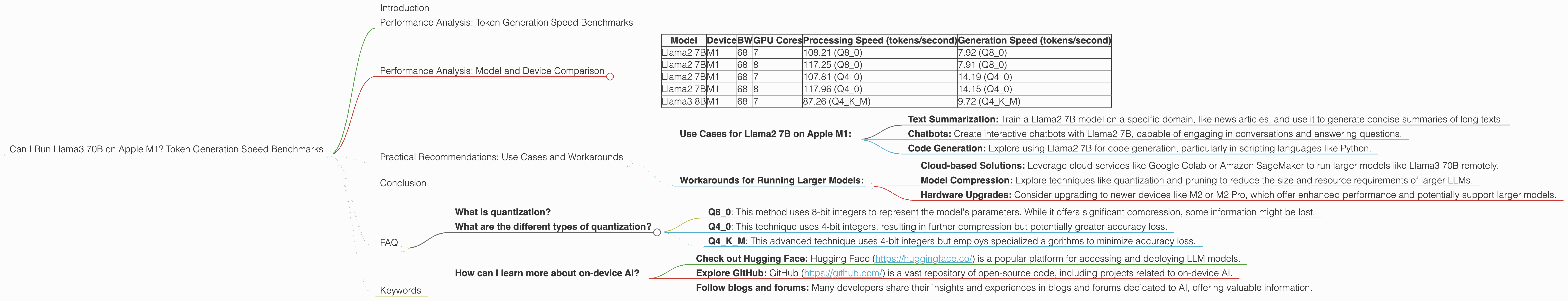

Performance Analysis: Model and Device Comparison

Even though we don't have direct Llama3 70B benchmarks for M1, we can still gain valuable insights by comparing performance data for other models and devices.

Model and Device Comparison Table:

| Model | Device | Bandwidth (GB/s) | GPU Cores | Quantization | Processing Speed (tokens/second) | Generation Speed (tokens/second) |
|---|---|---|---|---|---|---|
| Llama2 7B | M1 | 68 | 7 | Q8_0 | 108.21 | 7.92 |
| Llama2 7B | M1 | 68 | 8 | Q8_0 | 117.25 | 7.91 |
| Llama2 7B | M1 | 68 | 7 | Q4_0 | 107.81 | 14.19 |
| Llama2 7B | M1 | 68 | 8 | Q4_0 | 117.96 | 14.15 |
| Llama3 8B | M1 | 68 | 7 | Q4_K_M | 87.26 | 9.72 |

Key Observations:

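- Llama2 7B processes prompts faster than Llama3 8B on the same 7-core M1 (about 108 vs. 87.26 tokens/second), although the two models use different quantizations, so the comparison is not like-for-like.

- Q8_0 and Q4_0 process prompts at nearly the same speed (within about 1% of each other), but Q4_0 generates almost 1.8x faster (14.19 vs. 7.92 tokens/second on 7 GPU cores).

- The eighth GPU core raises processing speed by roughly 8-9% and leaves generation speed essentially unchanged.

- Llama3 8B with Q4_K_M reaches 87.26 tokens/second for processing and 9.72 for generation. With only one Llama3 8B row, the table cannot show how Q4_K_M compares with other quantizations of the same model.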
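One common way to read these generation numbers is as a memory-bandwidth limit: if each generated token requires reading roughly every weight once, then tokens/second cannot exceed bandwidth divided by model size. The sketch below applies that rule of thumb to the Llama2 7B rows. The nominal 7e9 parameter count and the 8.5 and 4.5 bits per weight (32-weight blocks plus a 16-bit scale) are assumptions for illustration, not values from the table.

```python
# Bandwidth-bound estimate of generation speed.
# Assumptions: nominal 7e9 parameters; 8.5 / 4.5 effective bits per weight
# for Q8_0 / Q4_0; GB means 10^9 bytes; every weight is read once per token.

BANDWIDTH_GB_S = 68  # BW column of the comparison table


def model_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate weight size in GB."""
    return n_params * bits_per_weight / 8 / 1e9


def max_tokens_per_second(n_params: float, bits_per_weight: float,
                          bandwidth_gb_s: float = BANDWIDTH_GB_S) -> float:
    """Upper bound on generation speed if memory reads are the bottleneck."""
    return bandwidth_gb_s / model_gb(n_params, bits_per_weight)


# (assumed bits per weight, measured tokens/second from the 7-core rows)
measured = {"Q8_0": (8.5, 7.92), "Q4_0": (4.5, 14.19)}
for name, (bpw, tok_s) in measured.items():
    bound = max_tokens_per_second(7e9, bpw)
    print(f"{name}: bound ~{bound:.1f} tok/s, measured {tok_s} tok/s "
          f"({tok_s / bound:.0%} of bound)")
```

Under these assumptions the bounds land near 9 and 17 tokens/second, in the same range as the measured 7.92 and 14.19. That would explain why halving the bits per weight nearly doubles generation speed while barely affecting prompt processing.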

Practical Recommendations: Use Cases and Workarounds

Even though Llama3 70B is likely too large for the M1 chip, there are still practical ways to reach your goals with smaller LLMs or with workarounds.

Use Cases for Llama2 7B on Apple M1:

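- Text Summarization: Use Llama2 7B, ideally a variant fine-tuned on a specific domain such as news articles, to generate concise summaries of long texts. Fine-tuning itself is usually better done on larger hardware, with the resulting model then run locally.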

- Chatbots: Create interactive chatbots with Llama2 7B, capable of engaging in conversations and answering questions.

- Code Generation: Explore using Llama2 7B for code generation, particularly in scripting languages like Python.
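To judge whether these use cases feel responsive, it helps to turn the two speeds into a wall-clock estimate. The sketch below is a simple model, not a measurement: it assumes the prompt is processed at the table's processing speed and the answer is produced at the generation speed, and it ignores model load time. The 500-token prompt and 200-token answer are hypothetical workload sizes.

```python
def response_seconds(prompt_tokens: int, output_tokens: int,
                     processing_tps: float, generation_tps: float) -> float:
    """Estimated time to read the prompt plus time to generate the answer."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


# Llama2 7B, Q4_0, 7 GPU cores (speeds taken from the comparison table).
# The 500 / 200 token counts are hypothetical workload sizes.
estimate = response_seconds(
    prompt_tokens=500,
    output_tokens=200,
    processing_tps=107.81,
    generation_tps=14.19,
)
print(f"Estimated response time: {estimate:.1f} s")
```

Most of the time in this example goes to generation rather than prompt processing, which is why generation speed is the number to watch for chat-style use.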

Workarounds for Running Larger Models:

- Cloud-based Solutions: Leverage cloud services like Google Colab or Amazon SageMaker to run larger models like Llama3 70B remotely.

- Model Compression: Explore techniques like quantization and pruning to reduce the size and resource requirements of larger LLMs.

- Hardware Upgrades: Consider upgrading to newer devices like M2 or M2 Pro, which offer enhanced performance. When comparing configurations, pay particular attention to unified memory, because memory capacity determines which models fit at all.

Conclusion

While running the massive Llama3 70B model locally on an Apple M1 chip is likely out of reach, the M1 is a capable machine for smaller LLMs. Llama2 7B with Q4_0 quantization generates about 14 tokens/second on the M1, which is enough for several practical use cases.

By understanding the trade-offs between model size, quantization techniques, and hardware capabilities, developers can choose the right combination for their specific needs. Remember, the world of local LLMs is constantly evolving, and new breakthroughs are on the horizon!

FAQ

What is quantization?

Quantization is a technique used to compress the size of large language models. It involves reducing the number of bits used to represent the model's parameters, making it more efficient to store and run on devices with limited resources.

Think of it like converting a high-resolution image to a lower-resolution one – it loses some detail but becomes smaller and easier to share.
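A minimal sketch of the idea: store one floating-point scale per block of weights, plus a small signed integer per weight. This is a simplified symmetric scheme written for illustration, and the sample weights are made up. It is not the exact on-disk layout used by any particular library.

```python
def quantize_block(values, bits):
    """Symmetric quantization: one float scale plus small signed integers."""
    q_max = 2 ** (bits - 1) - 1            # 127 for 8-bit, 7 for 4-bit
    amax = max(abs(v) for v in values)
    scale = amax / q_max if amax > 0 else 1.0
    quants = [round(v / scale) for v in values]
    return scale, quants


def dequantize_block(scale, quants):
    """Recover approximate weights from the scale and the integers."""
    return [scale * q for q in quants]


def max_error(values, bits):
    """Largest absolute difference between original and restored weights."""
    scale, quants = quantize_block(values, bits)
    restored = dequantize_block(scale, quants)
    return max(abs(a - b) for a, b in zip(values, restored))


# Made-up sample weights, for illustration only.
weights = [0.12, -0.53, 0.31, 0.07, -0.88, 0.44, -0.02, 0.65]
print(f"8-bit max error: {max_error(weights, 8):.4f}")
print(f"4-bit max error: {max_error(weights, 4):.4f}")
```

The 4-bit version has far fewer integer levels to choose from, so its rounding error is larger. This is the detail lost in the image analogy.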

What are the different types of quantization?

There are various quantization techniques, each with its own advantages and disadvantages. Some common types include:

- Q8_0: This method uses 8-bit integers to represent the model's parameters. It roughly halves the size of a model stored with 16-bit weights, usually with very little accuracy loss.

- Q4_0: This technique uses 4-bit integers, resulting in further compression but potentially greater accuracy loss.

- Q4_K_M: This more advanced technique also stores most weights in about 4 bits, but it uses a more elaborate block structure and keeps some of the more sensitive tensors at higher precision to reduce accuracy loss.

How can I learn more about on-device AI?

The field of on-device AI is rapidly developing, with new resources and frameworks emerging all the time.

- Check out Hugging Face: Hugging Face (https://huggingface.co/) is a popular platform for finding, downloading, and deploying LLMs.

- Explore GitHub: GitHub (https://github.com/) is a vast repository of open-source code, including projects related to on-device AI.

- Follow blogs and forums: Many developers share their insights and experiences in blogs and forums dedicated to AI, offering valuable information.

Keywords

Apple M1, Llama2 7B, Llama3 8B, Llama3 70B, token generation speed, LLM, large language model, on-device AI, quantization, processing speed, generation speed, benchmark, cloud-based solutions, model compression, hardware upgrades, use cases, workarounds, practical recommendations.