Can I Run Llama3 70B on Apple M1 Max? Token Generation Speed Benchmarks

Introduction:

The world of large language models (LLMs) is moving fast, with new models released at a rapid pace. LLMs are increasingly capable at tasks like text generation, translation, and summarization. But running them locally is demanding, especially for large recent models like Llama3 70B. So the question arises: can you really run Llama3 70B on an Apple M1 Max, and what are the performance implications? In this article, we'll look at token generation speed benchmarks for Llama3 70B and other LLMs on the Apple M1 Max, along with practical recommendations.

Performance Analysis: Token Generation Speed Benchmarks

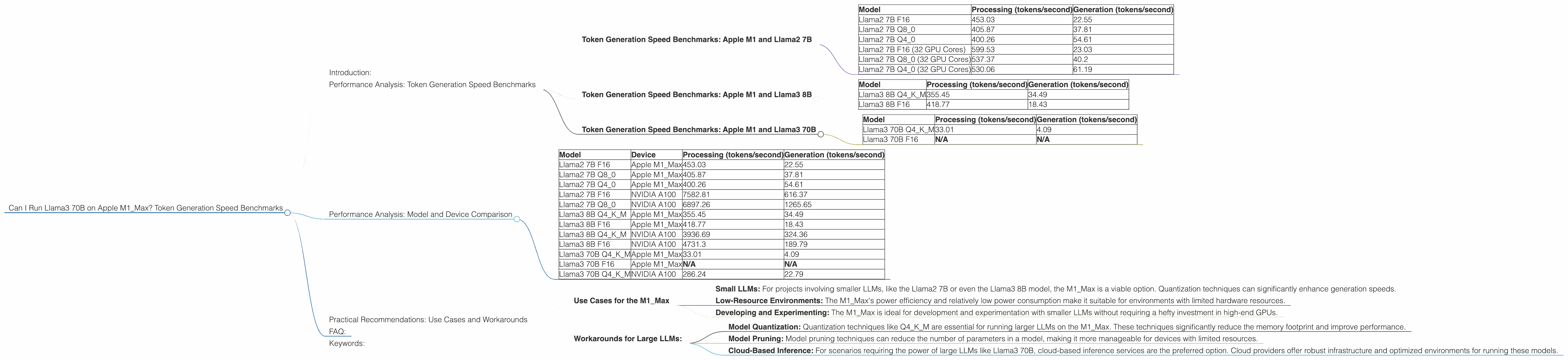

Token Generation Speed Benchmarks: Apple M1 Max and Llama2 7B

We'll kick off our analysis with the Llama2 7B model, a popular choice for developers due to its impressive performance and relative ease of deployment. The Apple M1 Max chip, with its powerful GPU, shows promising results.

| Model | Processing (tokens/second) | Generation (tokens/second) |
|---|---|---|
| Llama2 7B F16 | 453.03 | 22.55 |
| Llama2 7B Q8_0 | 405.87 | 37.81 |
| Llama2 7B Q4_0 | 400.26 | 54.61 |
| Llama2 7B F16 (32 GPU Cores) | 599.53 | 23.03 |
| Llama2 7B Q8_0 (32 GPU Cores) | 537.37 | 40.2 |
| Llama2 7B Q4_0 (32 GPU Cores) | 530.06 | 61.19 |

Key Takeaways:

- F16 vs. Quantized Models: The Llama2 7B F16 model processes prompts faster than the quantized models (Q8_0 and Q4_0): 453.03 tokens/second versus 405.87 and 400.26. The quantized models, however, generate tokens considerably faster: 37.81 (Q8_0) and 54.61 (Q4_0) tokens/second versus 22.55 for F16.

- GPU Core Impact: Going from 24 to 32 GPU cores on the M1 Max raises processing speed by about 32% for all three formats, while generation speed improves by only about 2% (F16) to 12% (Q4_0).

- Quantization Trade-offs: Quantizing to Q8_0 or Q4_0 costs roughly 10 to 12% in processing speed, a trade-off that is usually justified by generation running about 1.7 to 2.7 times faster.
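The ratios behind these takeaways can be recomputed directly from the table. The following is a minimal Python sketch; the dictionary layout and the helper name `speedup` are illustrative choices, and it assumes the rows without a core count correspond to the 24-core M1 Max.

```python
# Throughput as (processing, generation) in tokens/second, copied from the table.
# Assumption: rows without a core count are the 24-core M1 Max.
BENCH = {
    24: {"F16": (453.03, 22.55), "Q8_0": (405.87, 37.81), "Q4_0": (400.26, 54.61)},
    32: {"F16": (599.53, 23.03), "Q8_0": (537.37, 40.20), "Q4_0": (530.06, 61.19)},
}


def speedup(new: float, old: float) -> float:
    """Return new/old as a ratio (1.0 means no change)."""
    return new / old


# Effect of quantization relative to F16, per core count.
for cores, rows in BENCH.items():
    p16, g16 = rows["F16"]
    for fmt in ("Q8_0", "Q4_0"):
        p, g = rows[fmt]
        print(f"{cores} cores, {fmt} vs F16: "
              f"processing x{speedup(p, p16):.2f}, generation x{speedup(g, g16):.2f}")

# Effect of going from 24 to 32 GPU cores, per format.
for fmt, (p24, g24) in BENCH[24].items():
    p32, g32 = BENCH[32][fmt]
    print(f"{fmt}, 32 vs 24 cores: "
          f"processing x{speedup(p32, p24):.2f}, generation x{speedup(g32, g24):.2f}")
```

Keeping the numbers in one small structure like this makes it easy to add rows for other models or chips and compare them the same way.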

Token Generation Speed Benchmarks: Apple M1 Max and Llama3 8B

Let's move our attention to the Llama3 family, starting with the Llama3 8B model. This model is a significant leap forward in terms of performance and capabilities.

| Model | Processing (tokens/second) | Generation (tokens/second) |
|---|---|---|
| Llama3 8B Q4_K_M | 355.45 | 34.49 |
| Llama3 8B F16 | 418.77 | 18.43 |

Key Takeaways:

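- Llama3 8B Performance: The Llama3 8B model has more parameters than Llama2 7B, and it generates tokens more slowly on the M1 Max: 18.43 versus 22.55 tokens/second at F16. The 4-bit results (34.49 for Q4_K_M versus 54.61 for Q4_0) use different quantization formats, so they are not a like-for-like comparison.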

- Quantization: A Clear Winner: As with Llama2 7B, the quantized Llama3 8B Q4_K_M model generates tokens much faster than the F16 model (34.49 versus 18.43 tokens/second, nearly 1.9 times as fast), at the cost of somewhat slower processing.

Token Generation Speed Benchmarks: Apple M1 Max and Llama3 70B

Now, the big one: Llama3 70B. This is a monster model, pushing the boundaries of LLM capabilities. Can the M1 Max handle it?

| Model | Processing (tokens/second) | Generation (tokens/second) |
|---|---|---|
| Llama3 70B Q4_K_M | 33.01 | 4.09 |
| Llama3 70B F16 | N/A | N/A |

Key Takeaways:

- Hardware Limitations: No F16 result is available for Llama3 70B on the M1 Max. At 16 bits per weight, a 70-billion-parameter model needs roughly 140 GB for the weights alone, more than the 64 GB maximum unified memory of the M1 Max, so the F16 model cannot be run from memory on this chip.

- Quantization is Crucial: The Llama3 70B Q4_K_M result shows that running this model on the M1 Max is feasible, but at 33.01 tokens/second for processing and 4.09 tokens/second for generation it is roughly an order of magnitude slower than the Llama2 7B and Llama3 8B models.
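A rough weight-memory estimate makes the N/A row for F16 easier to interpret. The sketch below multiplies parameter count by bits per weight. The 64 GB ceiling is the largest unified-memory configuration of the M1 Max, while the figure of about 4.8 bits per weight for Q4_K_M is an approximation assumed here, not a number from the benchmark. The KV cache and runtime overhead are ignored, and the function name is illustrative.

```python
def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in gigabytes (10**9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


M1_MAX_MAX_MEMORY_GB = 64  # largest unified-memory option for the M1 Max
N_PARAMS = 70e9            # nominal parameter count of Llama3 70B

# Bits per weight: F16 is exactly 16; the Q4_K_M value is an assumed approximation.
FORMATS = {"F16": 16.0, "Q4_K_M": 4.8}

for name, bits in FORMATS.items():
    size = weight_memory_gb(N_PARAMS, bits)
    verdict = "fits" if size < M1_MAX_MAX_MEMORY_GB else "does not fit"
    print(f"{name}: ~{size:.0f} GB of weights, {verdict} in {M1_MAX_MAX_MEMORY_GB} GB")
```

Under these assumptions the F16 weights come to about 140 GB and the Q4_K_M weights to about 42 GB. In practice the operating system and the KV cache also need headroom, so a model whose weights barely fit may still not run comfortably.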

Performance Analysis: Model and Device Comparison

| Model | Device | Processing (tokens/second) | Generation (tokens/second) |
|---|---|---|---|
| Llama2 7B F16 | Apple M1 Max | 453.03 | 22.55 |
| Llama2 7B Q8_0 | Apple M1 Max | 405.87 | 37.81 |
| Llama2 7B Q4_0 | Apple M1 Max | 400.26 | 54.61 |
| Llama2 7B F16 | NVIDIA A100 | 7582.81 | 616.37 |
| Llama2 7B Q8_0 | NVIDIA A100 | 6897.26 | 1265.65 |
| Llama3 8B Q4_K_M | Apple M1 Max | 355.45 | 34.49 |
| Llama3 8B F16 | Apple M1 Max | 418.77 | 18.43 |
| Llama3 8B Q4_K_M | NVIDIA A100 | 3936.69 | 324.36 |
| Llama3 8B F16 | NVIDIA A100 | 4731.3 | 189.79 |
| Llama3 70B Q4_K_M | Apple M1 Max | 33.01 | 4.09 |
| Llama3 70B F16 | Apple M1 Max | N/A | N/A |
| Llama3 70B Q4_K_M | NVIDIA A100 | 286.24 | 22.79 |

Key Takeaways:

- M1 Max vs. NVIDIA A100: The NVIDIA A100 GPU outperforms the M1 Max in every configuration measured on both devices. In generation speed, the gap in this table ranges from about 5.6x for Llama3 70B Q4_K_M to about 33x for Llama2 7B Q8_0; in processing speed, it ranges from about 8.7x to about 17x.

- The Need for Specialized Hardware: The stark performance difference between the M1 Max and the A100 emphasizes the growing need for specialized hardware tailored for LLM inference. While the M1 Max can handle smaller LLMs with reasonable speed, larger models require more powerful GPUs.
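The size of the gap can be computed per model from the comparison table. This is a small sketch; the two dictionaries and the `ratios` helper are illustrative names, and only configurations that appear for both devices are included.

```python
# (processing, generation) in tokens/second, copied from the comparison table.
M1_MAX = {
    "Llama2 7B F16": (453.03, 22.55),
    "Llama2 7B Q8_0": (405.87, 37.81),
    "Llama3 8B Q4_K_M": (355.45, 34.49),
    "Llama3 8B F16": (418.77, 18.43),
    "Llama3 70B Q4_K_M": (33.01, 4.09),
}
A100 = {
    "Llama2 7B F16": (7582.81, 616.37),
    "Llama2 7B Q8_0": (6897.26, 1265.65),
    "Llama3 8B Q4_K_M": (3936.69, 324.36),
    "Llama3 8B F16": (4731.30, 189.79),
    "Llama3 70B Q4_K_M": (286.24, 22.79),
}


def ratios(model: str) -> tuple[float, float]:
    """Return (processing, generation) speed of the A100 relative to the M1 Max."""
    p_m1, g_m1 = M1_MAX[model]
    p_a100, g_a100 = A100[model]
    return p_a100 / p_m1, g_a100 / g_m1


for model in M1_MAX:
    proc, gen = ratios(model)
    print(f"{model}: A100 is x{proc:.1f} in processing, x{gen:.1f} in generation")
```

Printing the ratios per model makes it easy to see how the gap varies with model size and quantization format.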

Practical Recommendations: Use Cases and Workarounds

Based on the performance data, here are some practical recommendations for developers looking to choose the right LLM and device combination for their needs.

Use Cases for the M1 Max

- Small LLMs: For projects involving smaller LLMs, like Llama2 7B or Llama3 8B, the M1 Max is a viable option. Quantization can significantly increase generation speed.

- Low-Resource Environments: The M1 Max's power efficiency makes it suitable for environments where power or space for dedicated GPU hardware is limited.

- Developing and Experimenting: The M1 Max is well suited to development and experimentation with smaller LLMs without requiring a hefty investment in high-end GPUs.

Workarounds for Large LLMs:

- Model Quantization: Quantization formats like Q4_K_M are essential for running larger LLMs on the M1 Max. They significantly reduce the memory footprint and improve generation speed.

- Model Pruning: Model pruning techniques can reduce the number of parameters in a model, making it more manageable for devices with limited resources.

- Cloud-Based Inference: For scenarios requiring the power of large LLMs like Llama3 70B, cloud-based inference services are the preferred option. Cloud providers offer robust infrastructure and optimized environments for running these models.

FAQ:

Q: What is token generation speed, and why is it important?

A: Token generation speed refers to how fast a language model can generate new text tokens. Think of tokens as the building blocks of text. Faster token generation means the model can output text more quickly, which is critical for applications like chatbots, real-time content generation, and interactive systems.
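To see what these throughput numbers mean for an interactive application, a response time can be estimated from the two benchmark columns. This is a back-of-the-envelope sketch: the function name and the 512-token prompt with a 256-token reply are assumptions chosen for illustration, and it assumes throughput stays constant over the whole request.

```python
def response_seconds(prompt_tokens: int, output_tokens: int,
                     processing_tps: float, generation_tps: float) -> float:
    """Estimate time to process the prompt plus time to generate the reply."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


# (processing, generation) tokens/second on the M1 Max, from the benchmark tables.
MODELS = {
    "Llama2 7B Q4_0": (400.26, 54.61),
    "Llama3 8B Q4_K_M": (355.45, 34.49),
    "Llama3 70B Q4_K_M": (33.01, 4.09),
}

PROMPT_TOKENS = 512   # hypothetical prompt length
OUTPUT_TOKENS = 256   # hypothetical reply length

for name, (proc, gen) in MODELS.items():
    seconds = response_seconds(PROMPT_TOKENS, OUTPUT_TOKENS, proc, gen)
    print(f"{name}: ~{seconds:.0f} s for a {PROMPT_TOKENS}-token prompt "
          f"and a {OUTPUT_TOKENS}-token reply")
```

With these assumed lengths, the smaller models answer in well under ten seconds, while the 70B model takes more than a minute, most of it spent generating the reply.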

Q: What is quantization, and how does it affect performance?

A: Quantization is a technique used to reduce the memory footprint and computational demands of LLMs. It converts the model's weights from higher-precision floating-point values (such as F16) to lower-precision formats (such as Q8_0 or Q4_0). This can slightly reduce accuracy, but it significantly reduces the memory required and, as the benchmarks above show, increases generation speed.
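The idea can be illustrated with a simplified block-wise 8-bit scheme in plain Python. This is a sketch loosely modeled on formats such as Q8_0, where a small block of weights shares one scale factor. The block size of 32, the 16-bit scale, and the function names are assumptions for illustration; this is not the actual implementation used by any inference library.

```python
import random

BLOCK_SIZE = 32  # assumed number of weights sharing one scale


def quantize_block(weights: list[float]) -> tuple[float, list[int]]:
    """Map floats to integers in [-127, 127] plus one shared scale."""
    amax = max(abs(w) for w in weights)
    scale = amax / 127 if amax > 0 else 1.0
    return scale, [round(w / scale) for w in weights]


def dequantize_block(scale: float, quantized: list[int]) -> list[float]:
    """Recover approximate floats from the integers and the shared scale."""
    return [scale * q for q in quantized]


random.seed(0)
weights = [random.gauss(0.0, 0.02) for _ in range(BLOCK_SIZE)]  # toy weights
scale, q = quantize_block(weights)
restored = dequantize_block(scale, q)

# Rounding to the nearest step bounds the error by half a step (scale / 2).
max_error = max(abs(a - b) for a, b in zip(weights, restored))

# 8 bits per weight plus one scale per block, assuming a 16-bit scale.
bits_per_weight = 8 + 16 / BLOCK_SIZE

print(f"max error: {max_error:.6f} (bound: {scale / 2:.6f})")
print(f"storage: {bits_per_weight} bits per weight instead of 16")
```

The same pattern with fewer bits per integer gives a smaller model and a larger rounding error, which is the accuracy trade-off described above.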

Q: What are some alternative devices for running LLMs?

A: While the Apple M1 Max is a capable processor, it's not the only option for running LLMs. Other common choices include NVIDIA GPUs (like the A100, A40, etc.), Google Tensor Processing Units (TPUs), and even specialized hardware designed specifically for AI inference. The best choice depends on your specific needs, budget, and performance requirements.

Q: Is there a future for local LLM inference on consumer devices?

A: The future of local LLM inference on consumer devices is a hot topic! As hardware becomes more sophisticated, we can expect to see improved capabilities and performance for even the most demanding LLMs. However, the trade-offs between accuracy, speed, and resource usage will likely continue to drive advancements in both hardware and software, ensuring a balance between performance and efficiency.

Keywords:

Apple M1 Max, Llama3 70B, Token Generation Speed, LLM Performance, Quantization, Token Generation Speed Benchmarks, Local LLM Inference, GPU Benchmarks, Apple M1, Llama2 7B, Llama3 8B, NVIDIA A100, Practical Recommendations, Use Cases, Workarounds.