Can I Run Llama2 7B on Apple M3? Token Generation Speed Benchmarks

Introduction

The world of large language models (LLMs) is exploding, with groundbreaking advancements happening all the time. But beyond the hype, a burning question for many developers is: "Can I run these models on my own hardware?" In this deep dive, we'll tackle the performance of Llama2 7B, a powerful LLM, on the Apple M3 chip. We'll examine token generation speeds in detail, looking at different quantization levels, and help you understand whether your M3 is up to the task.

Think of it this way: If LLMs are the new superheroes, then we want to know if our M3 is like a trusty sidekick, able to keep up with their awesome power. So buckle up, because we're about to delve into the exciting world of local LLM performance.

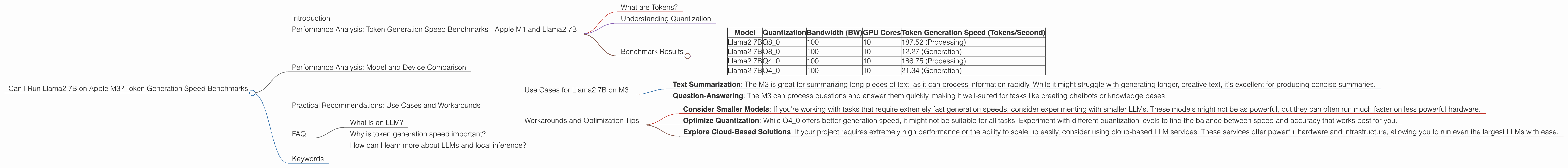

Performance Analysis: Token Generation Speed Benchmarks - Apple M3 and Llama2 7B

What are Tokens?

Before we dive into the numbers, let's clarify what we mean by "tokens." Tokens are the building blocks of text as an LLM sees it. A common word like "hello" is often a single token, while a sentence like "the quick brown fox jumps over the lazy dog" is split into several, and rare words may be broken into smaller pieces. LLMs read and write text one token at a time, so token generation speed tells us how quickly a model can produce new text.

Understanding Quantization

To make LLMs more efficient, we use a technique called quantization, which stores each model weight with fewer bits. Think of it as shrinking a large picture without losing too much detail. This article covers two levels: Q8_0 stores roughly 8 bits per weight and Q4_0 roughly 4, so Q4_0 compresses the model about twice as much. A smaller model means less data to move through memory for each generated token, which usually speeds up generation, but heavier compression can also slightly reduce accuracy.
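To make the bit counts concrete, here is a minimal Python sketch of block quantization, the general idea behind the Q4_0 and Q8_0 formats used by GGML/llama.cpp: weights are split into blocks of 32, and each block stores one 16-bit scale plus one small integer per weight. The rounding rule in the hypothetical `quantize_block` helper is a simplified symmetric scheme, not llama.cpp's exact formula, and the random weights are purely illustrative.

```python
import random

BLOCK_SIZE = 32  # weights per block, as in GGML's Q4_0 and Q8_0

def quantize_block(block, bits):
    """Simplified symmetric quantization: one float scale plus one signed integer per weight."""
    qmax = 2 ** (bits - 1) - 1                 # 7 for 4-bit, 127 for 8-bit
    amax = max(abs(w) for w in block)
    scale = amax / qmax if amax > 0 else 1.0   # largest weight maps to +/-qmax
    q = [max(-qmax - 1, min(qmax, round(w / scale))) for w in block]
    return scale, q

def dequantize_block(scale, q):
    """Recover approximate weights from the scale and integers."""
    return [scale * v for v in q]

def bits_per_weight(bits, scale_bits=16):
    """Storage cost per weight, counting the block's 16-bit scale."""
    return (BLOCK_SIZE * bits + scale_bits) / BLOCK_SIZE

random.seed(0)
weights = [random.gauss(0.0, 0.02) for _ in range(BLOCK_SIZE)]  # illustrative weights
for bits in (8, 4):
    scale, q = quantize_block(weights, bits)
    restored = dequantize_block(scale, q)
    max_err = max(abs(w - r) for w, r in zip(weights, restored))
    print(f"{bits}-bit: {bits_per_weight(bits)} bits/weight, max error {max_err:.6f}")
```

Because of the per-block scale, storage works out to 8.5 bits per weight for the 8-bit case and 4.5 for the 4-bit case, which matches the sizes of Q8_0 and Q4_0. The 4-bit version has coarser steps between representable values, which is where the small accuracy loss comes from.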

Benchmark Results

| Model | Quantization | Memory Bandwidth (GB/s) | GPU Cores | Prompt Processing (tokens/s) | Token Generation (tokens/s) |
|---|---|---|---|---|---|
| Llama2 7B | Q8_0 | 100 | 10 | 187.52 | 12.27 |
| Llama2 7B | Q4_0 | 100 | 10 | 186.75 | 21.34 |

Note: No data was available for Llama2 7B in F16 (unquantized 16-bit floating point) format on the Apple M3.

Let's break down the data:

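- Processing Speed: The M3 processes prompt tokens at close to 187 tokens/second with both Q8_0 and Q4_0. In this phase the model reads the whole input in large parallel batches, so speed depends mainly on GPU compute rather than on model size, which is why the two quantization levels perform almost identically.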

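- Generation Speed: Things slow down considerably when it comes to generating new tokens, which happens one token at a time. The M3 reaches 12.27 tokens/second with Q8_0 and 21.34 tokens/second with Q4_0, about 1.7 times faster. Each new token requires reading essentially all of the model's weights from memory, so generation is limited mainly by memory bandwidth, and the roughly half-size Q4_0 model has less data to move. Even so, the faster Q4_0 configuration generates nearly nine times more slowly than it processes prompts.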
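The memory-bandwidth explanation can be checked with back-of-the-envelope arithmetic: if every generated token has to read all weights once, generation speed cannot exceed bandwidth divided by model size. This sketch assumes roughly 6.74 billion parameters for Llama2 7B and 8.5 / 4.5 bits per weight for Q8_0 / Q4_0. It ignores the KV cache and the fact that real model files keep a few tensors in other formats, so treat the ceilings as rough estimates.

```python
PARAMS = 6.74e9                       # assumption: approximate parameter count of Llama2 7B
BANDWIDTH_GBPS = 100.0                # Apple M3 memory bandwidth (GB/s), from the table
BITS_PER_WEIGHT = {"Q8_0": 8.5, "Q4_0": 4.5}        # assumption: per-block scale included
MEASURED_TG = {"Q8_0": 12.27, "Q4_0": 21.34}        # measured generation speed, tokens/s

def model_size_gb(params, bpw):
    """Approximate weight size in gigabytes."""
    return params * bpw / 8 / 1e9

def max_tokens_per_second(bandwidth_gbps, size_gb):
    """Ceiling if every generated token reads all weights from memory once."""
    return bandwidth_gbps / size_gb

for quant, bpw in BITS_PER_WEIGHT.items():
    size = model_size_gb(PARAMS, bpw)
    ceiling = max_tokens_per_second(BANDWIDTH_GBPS, size)
    measured = MEASURED_TG[quant]
    print(f"{quant}: ~{size:.2f} GB, ceiling ~{ceiling:.1f} tok/s, "
          f"measured {measured} tok/s ({measured / ceiling:.0%} of ceiling)")
```

Under these assumptions the ceilings come out near 14 tokens/second for Q8_0 and 26 tokens/second for Q4_0, and the measured results land at roughly 80 to 90 percent of them, consistent with generation being bandwidth-bound.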

Performance Analysis: Model and Device Comparison

No data was available for other devices or LLMs beyond the Apple M3 and Llama2 7B.

Practical Recommendations: Use Cases and Workarounds

Use Cases for Llama2 7B on M3

- Text Summarization: The M3 is well suited to summarizing long pieces of text. A summary pairs a long input, which the M3 reads quickly, with a short output, so the slower generation speed matters less. Long, creative text is a weaker fit because it depends almost entirely on generation speed.

- Question-Answering: The M3 reads questions and supporting context quickly, and at 12 to 21 tokens/second it can stream short answers at a usable pace, making it well suited to local chatbots or question-answering over a knowledge base.
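Why prompt-heavy tasks suit the M3 becomes clear with a rough latency estimate: total time is prompt tokens divided by processing speed plus output tokens divided by generation speed. The sketch below plugs in the measured rates from the benchmark table. The two workloads and their token counts are hypothetical, and prompt processing tends to slow down somewhat as the context grows, so the results are approximations.

```python
PP_TPS = {"Q8_0": 187.52, "Q4_0": 186.75}  # measured prompt processing, tokens/s
TG_TPS = {"Q8_0": 12.27, "Q4_0": 21.34}    # measured token generation, tokens/s

def estimated_seconds(prompt_tokens, output_tokens, quant):
    """Rough end-to-end time: read the prompt, then generate the reply token by token."""
    return prompt_tokens / PP_TPS[quant] + output_tokens / TG_TPS[quant]

# Hypothetical workloads: (prompt tokens, output tokens)
workloads = {
    "summarize 2,000 tokens into 150": (2000, 150),
    "write 1,500 tokens from a 100-token prompt": (100, 1500),
}
for name, (prompt, output) in workloads.items():
    for quant in ("Q8_0", "Q4_0"):
        print(f"{name} [{quant}]: ~{estimated_seconds(prompt, output, quant):.0f} s")
```

With Q4_0, summarizing a 2,000-token document into 150 tokens comes out to about 18 seconds, while generating 1,500 tokens from a short prompt takes about 71 seconds, even though the summary task handles far more text in total.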

Workarounds and Optimization Tips

- Consider Smaller Models: If your task needs faster generation, experiment with smaller LLMs. Because generation speed depends largely on how much model data must be read for each token, a smaller model at the same quantization level will generally run faster, at some cost in capability.

- Optimize Quantization: Q4_0 generates about 1.7 times faster than Q8_0, but Q8_0 stays closer to the original model's accuracy. Experiment with different quantization levels to find the balance between speed and accuracy that works best for your task.

- Explore Cloud-Based Solutions: If your project requires extremely high performance or the ability to scale up easily, consider using cloud-based LLM services. These services offer powerful hardware and infrastructure, allowing you to run even the largest LLMs with ease.

FAQ

What is an LLM?

An LLM, or Large Language Model, is a type of artificial intelligence (AI) that excels at understanding and generating human-like text. These models are trained on vast amounts of data, allowing them to perform tasks like text summarization, translation, and creative writing.

Why is token generation speed important?

Token generation speed, usually measured in tokens per second, is the rate at which an LLM produces new text. It's crucial for tasks that require quick responses or real-time interaction, such as chatbots or writing assistants in text editors.
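Benchmarks usually report prompt processing and token generation separately, and the same split can be measured from any streaming interface. In this minimal sketch, time to first token is dominated by prompt processing, and the rate after the first token reflects generation alone. `measure_stream` and `simulated_stream` are hypothetical helpers, and the simulated stream stands in for a real model's streaming output.

```python
import time
from typing import Callable, Iterable, Tuple

def measure_stream(token_stream: Iterable[str],
                   clock: Callable[[], float] = time.perf_counter) -> Tuple[float, float]:
    """Return (time_to_first_token_s, generation_tokens_per_s) for a token stream."""
    start = clock()
    first = None
    count = 0
    for _ in token_stream:
        count += 1
        if first is None:
            first = clock()          # first token arrives after prompt processing
    end = clock()
    if first is None:
        raise ValueError("stream produced no tokens")
    gen_time = end - first
    # rate over the tokens produced after the first one
    rate = (count - 1) / gen_time if count > 1 and gen_time > 0 else float("nan")
    return first - start, rate

def simulated_stream(prompt_delay, n_tokens, token_delay):
    """Hypothetical stand-in for a model: a pause for the prompt, then steady output."""
    time.sleep(prompt_delay)         # prompt processing plus the first token
    yield "token0"
    for i in range(1, n_tokens):
        time.sleep(token_delay)      # one decoding step
        yield f"token{i}"

ttft, rate = measure_stream(simulated_stream(0.2, 11, 0.05))
print(f"time to first token: {ttft:.2f} s, generation: {rate:.1f} tokens/s")
```

Starting the generation clock only after the first token keeps a long prompt from dragging down the tokens/second figure.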

How can I learn more about LLMs and local inference?

A great place to start is the Hugging Face website. It's a fantastic resource for finding pre-trained LLMs, learning about different models, and exploring how to run them locally.

Keywords

Llama 2, 7B, Apple M3, Token Generation Speed, Quantization, Q4_0, Q8_0, LLM, Performance, Benchmarks, Text Summarization, Question-Answering, Inference, Local, Hugging Face