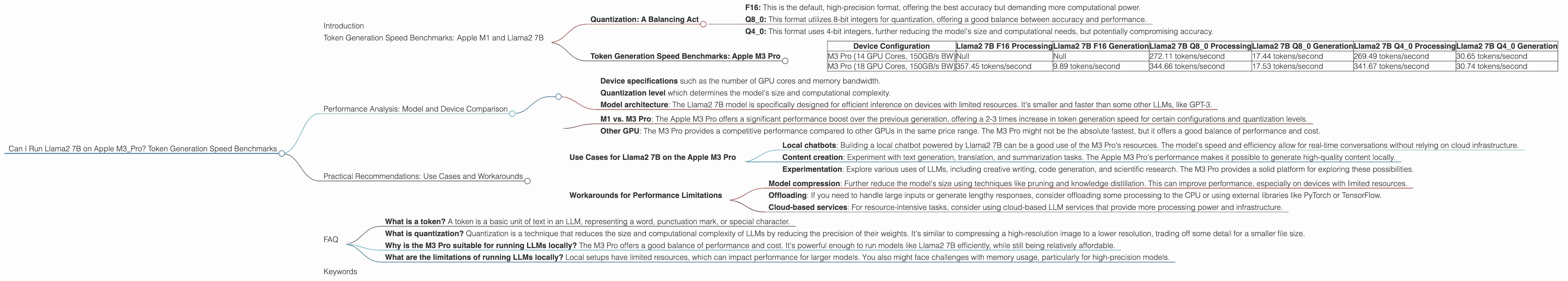

Can I Run Llama2 7B on Apple M3 Pro? Token Generation Speed Benchmarks

Introduction

The world of Large Language Models (LLMs) is booming, and running them locally is becoming increasingly popular. Developers and enthusiasts alike are eager to experiment with these powerful models and explore their capabilities. But before you dive into the deep end of LLMs, you need to understand the hardware requirements and performance expectations.

One key consideration is token generation speed, which determines how quickly your LLM can process text and generate responses. This article delves into the performance of the Llama2 7B model on the Apple M3 Pro, exploring the token generation speed benchmarks for different quantization levels. We'll analyze the results and provide practical recommendations for use cases, helping you make informed decisions about your LLM setup.

Benchmark Sources: Llama2 7B on Apple M3 Pro

The benchmarks in this article are based on data gathered from various sources, primarily the llama.cpp project and the GPU Benchmarks on LLM Inference repository. These benchmarks provide valuable insights into the performance of the Llama2 7B model on different configurations of the Apple M3 Pro.

Quantization: A Balancing Act

LLMs are massive models, demanding significant computational resources. Quantization is a technique that reduces the memory footprint and computational complexity of these models, making them more accessible to devices with limited resources. This is achieved by reducing the precision of the model's weights, trading off accuracy for performance.

We'll compare three weight formats, as named in llama.cpp:

- F16: 16-bit floating point. This is the unquantized baseline (2 bytes per weight), offering the best accuracy but the largest memory footprint and the slowest generation.

- Q8_0: Weights are stored as 8-bit integers in blocks of 32, with each block sharing one scale factor (about 8.5 bits per weight). Accuracy stays close to F16 at roughly half the size.

- Q4_0: Weights are stored as 4-bit integers in the same block layout (about 4.5 bits per weight), roughly a quarter of the F16 size, at the cost of some accuracy.
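To make the block layout concrete, here is a minimal pure-Python sketch of Q8_0-style quantization, assuming the scheme described above: blocks of 32 weights, one scale per block chosen so the largest magnitude maps to 127, and 8-bit integer codes. It illustrates the idea rather than reproducing llama.cpp's implementation (llama.cpp stores the scale as a 16-bit float, while here it stays a Python float), and the function names are hypothetical.

```python
import random

BLOCK_SIZE = 32  # Q8_0 and Q4_0 group weights into blocks of 32


def quantize_q8_0_block(weights):
    """Quantize one block of floats to int8 codes that share a single scale.

    Symmetric scheme: the scale maps the largest absolute weight to 127.
    """
    amax = max(abs(w) for w in weights)
    if amax == 0.0:
        return 0.0, [0] * len(weights)
    scale = amax / 127.0
    codes = [max(-127, min(127, round(w / scale))) for w in weights]
    return scale, codes


def dequantize_block(scale, codes):
    """Reconstruct approximate weights from the shared scale and the codes."""
    return [scale * q for q in codes]


def bits_per_weight(code_bits, block_size=BLOCK_SIZE, scale_bits=16):
    """Storage per weight: the code plus its share of the 16-bit block scale."""
    return code_bits + scale_bits / block_size


random.seed(0)
block = [random.gauss(0.0, 0.02) for _ in range(BLOCK_SIZE)]
scale, codes = quantize_q8_0_block(block)
restored = dequantize_block(scale, codes)
max_err = max(abs(a - b) for a, b in zip(block, restored))
print(f"scale={scale:.3e}, max round-trip error={max_err:.3e}")
print(f"Q8_0: {bits_per_weight(8)} bits/weight, Q4_0: {bits_per_weight(4)} bits/weight")
```

Each reconstructed weight differs from the original by at most half the block's scale. The per-weight cost works out to 8.5 bits for Q8_0 and 4.5 bits for Q4_0, because each 32-weight block also carries a 16-bit scale.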

Token Generation Speed Benchmarks: Apple M3 Pro

| Device configuration | F16 processing (tokens/s) | F16 generation (tokens/s) | Q8_0 processing (tokens/s) | Q8_0 generation (tokens/s) | Q4_0 processing (tokens/s) | Q4_0 generation (tokens/s) |
|---|---|---|---|---|---|---|
| M3 Pro (14 GPU cores, 150 GB/s BW) | N/A | N/A | 272.11 | 17.44 | 269.49 | 30.65 |
| M3 Pro (18 GPU cores, 150 GB/s BW) | 357.45 | 9.89 | 344.66 | 17.53 | 341.67 | 30.74 |

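Note: In the device column, "BW" is memory bandwidth (150 GB/s for both configurations) and the core count is the number of GPU cores (14 in the first configuration, 18 in the second). "Processing" is prompt processing speed, how fast the model reads the input prompt; "generation" is how fast it produces new tokens. A missing entry means no result was reported.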

Observations:

- No F16 result was reported for the 14-core M3 Pro. The gap means the data is missing, not necessarily that F16 cannot run on that chip; the 18-core model does run F16, but at only 9.89 tokens/second.

- Q8_0 and Q4_0 make a large difference to generation speed: on the 18-core chip, generation rises from 9.89 tokens/second (F16) to 17.53 (Q8_0) and 30.74 (Q4_0). Prompt processing, by contrast, changes little across formats (about 340 to 360 tokens/second).

- Generation is much slower than prompt processing in every configuration. Prompt processing handles all input tokens in parallel as large matrix multiplications, which keeps the GPU cores busy. Generation produces one token at a time, and each token requires reading essentially all model weights from memory, so its speed is limited mainly by memory bandwidth rather than compute.
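The bandwidth argument can be checked with back-of-the-envelope arithmetic. The sketch below assumes each generated token reads every weight exactly once, ignores KV-cache and activation traffic, uses an approximate parameter count of 6.74 billion for Llama2 7B, and assumes a uniform number of bits per weight for each format (real model files keep a few tensors at higher precision). Under those assumptions, bandwidth divided by model size gives an upper bound on generation speed.

```python
# Back-of-the-envelope bound for token generation on a bandwidth-bound chip.
PARAMS = 6.74e9          # approximate parameter count of Llama2 7B (assumption)
BANDWIDTH_GB_S = 150.0   # M3 Pro memory bandwidth, from the table

# Approximate storage cost per weight for each format (assumes uniform quantization)
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}

# Measured generation speeds for the 18-core M3 Pro, from the table (tokens/second)
MEASURED_TG = {"F16": 9.89, "Q8_0": 17.53, "Q4_0": 30.74}


def weights_gb(params, bits):
    """Size of the weights in gigabytes (1 GB = 1e9 bytes)."""
    return params * bits / 8 / 1e9


def tg_upper_bound(bandwidth_gb_s, model_gb):
    """Tokens/second if every token must stream all weights from memory once."""
    return bandwidth_gb_s / model_gb


bounds = {}
for fmt, bits in BITS_PER_WEIGHT.items():
    size = weights_gb(PARAMS, bits)
    bounds[fmt] = tg_upper_bound(BANDWIDTH_GB_S, size)
    share = MEASURED_TG[fmt] / bounds[fmt]
    print(f"{fmt}: {size:.2f} GB, bound {bounds[fmt]:.1f} tok/s, "
          f"measured {MEASURED_TG[fmt]} tok/s ({share:.0%} of bound)")
```

With these assumptions the measured speeds land at roughly 78% to 89% of the bound, which is what you would expect if memory bandwidth, not GPU compute, is the limiting factor.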

Performance Analysis: Model and Device Comparison

The performance of the Llama2 7B model on the Apple M3 Pro is influenced by various factors, including:

- Device specifications: the number of GPU cores and the memory bandwidth.

- Quantization level: this determines how many bytes must be stored, and read from memory, per weight.

- Model size: Llama2 7B is the smallest model in the Llama 2 family (7B, 13B, and 70B parameters) and far smaller than models such as GPT-3 (175B parameters), which is what makes it practical on a laptop.
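The two M3 Pro configurations in the table differ only in GPU core count, so comparing them separates the effect of compute from that of bandwidth. This short sketch uses the Q8_0 and Q4_0 numbers from the table (F16 is left out because the 14-core result is missing) and computes the speedup from 14 to 18 cores for each metric.

```python
# Speedup from the 14-core to the 18-core M3 Pro, using the table's results.
CORES = (14, 18)

# (14-core, 18-core) results in tokens/second
RESULTS = {
    "Q8_0 processing": (272.11, 344.66),
    "Q8_0 generation": (17.44, 17.53),
    "Q4_0 processing": (269.49, 341.67),
    "Q4_0 generation": (30.65, 30.74),
}


def speedups(results):
    """Ratio of 18-core to 14-core throughput for each metric."""
    return {name: high / low for name, (low, high) in results.items()}


core_ratio = CORES[1] / CORES[0]
print(f"GPU core ratio: {core_ratio:.2f}x")
for name, ratio in speedups(RESULTS).items():
    print(f"{name}: {ratio:.2f}x")
```

Prompt processing speeds up by about 1.27x, close to the 1.29x increase in GPU cores, while generation barely moves (about 1.00x to 1.01x). Both chips have the same 150 GB/s of bandwidth, so this is consistent with generation being bandwidth-bound.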

Comparing Llama2 7B performance on the Apple M3 Pro to other devices:

- M1 vs. M3 Pro: The M3 Pro should generate tokens substantially faster than the base M1. Generation speed tracks memory bandwidth, and the M3 Pro's 150 GB/s is roughly 2.2 times the M1's 68.25 GB/s. The table above does not include M1 results, so treat this as an estimate rather than a measurement.

- Other GPUs: Desktop GPUs with higher memory bandwidth generate tokens faster. The M3 Pro's strengths are its unified memory, which lets the GPU use system RAM for model weights, and the fact that it delivers this performance in a laptop.

Analogy: Imagine having to re-download a large file over a network every time you want to write one word. Generating a token works similarly: the GPU must stream nearly all of the model's weights from memory, so memory bandwidth (the network speed in this analogy) sets the pace. Extra GPU cores mainly speed up prompt processing, where many tokens are handled at once.

Practical Recommendations: Use Cases and Workarounds

The performance analysis of the Llama2 7B model on the Apple M3 Pro offers insights into the model's capabilities and limitations. Here are some recommendations for using this setup effectively:

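- Q8_0 and Q4_0 quantization: Q8_0 stays closest to F16 quality at about 17.5 tokens/second, while Q4_0 nearly doubles that to about 30.7 tokens/second. Either is suitable for most use cases; F16 is mainly useful as a quality reference.

- Model size: Llama2 7B is a good fit for the M3 Pro. Llama2 13B is feasible with quantization, but expect roughly half the generation speed, since its weights are about twice as large. Llama2 70B needs roughly 39 GB even at Q4_0, which exceeds the 36 GB maximum memory of an M3 Pro.

- Memory bandwidth: Because generation is bandwidth-bound, the M3 Pro's 150 GB/s is the main limit on tokens/second. Chips with more bandwidth, such as the M3 Max (300 or 400 GB/s depending on configuration), generate tokens correspondingly faster.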
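Whether a larger model fits can be estimated the same way. The sketch assumes approximate parameter counts for the Llama 2 family, the bits-per-weight figures of each llama.cpp format, and the two memory options the M3 Pro is sold with (18 GB and 36 GB). The `USABLE_FRACTION` headroom for the operating system, other apps, and the KV cache is a hypothetical value, not a measured macOS limit.

```python
# Rough check: do the weights fit in an M3 Pro's unified memory?
PARAMS_BILLIONS = {"Llama2 7B": 6.74, "Llama2 13B": 13.0, "Llama2 70B": 69.0}  # approximate
BITS_PER_WEIGHT = {"Q4_0": 4.5, "Q8_0": 8.5, "F16": 16.0}
M3_PRO_RAM_GB = (18, 36)
USABLE_FRACTION = 0.75  # hypothetical headroom for the OS, apps, and KV cache


def weights_gb(params_billions, bits):
    """Weight size in GB (1e9 bytes) for a parameter count given in billions."""
    return params_billions * bits / 8


def fits(params_billions, bits, ram_gb, usable_fraction=USABLE_FRACTION):
    """True if the weights fit in the assumed usable share of memory."""
    return weights_gb(params_billions, bits) <= ram_gb * usable_fraction


for model, params in PARAMS_BILLIONS.items():
    for fmt, bits in BITS_PER_WEIGHT.items():
        verdicts = ", ".join(
            f"{ram} GB: {'fits' if fits(params, bits, ram) else 'too large'}"
            for ram in M3_PRO_RAM_GB
        )
        print(f"{model} {fmt} ({weights_gb(params, bits):.1f} GB) -> {verdicts}")
```

Even with no headroom at all, Llama2 70B at Q4_0 (about 39 GB) exceeds 36 GB, while Llama2 13B at Q4_0 (about 7.3 GB) fits comfortably in either configuration.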

Use Cases for Llama2 7B on the Apple M3 Pro

- Local chatbots: Building a local chatbot powered by Llama2 7B is a good use of the M3 Pro's resources. With Q8_0 or Q4_0, replies stream at roughly 17 to 31 tokens/second, fast enough for interactive conversation without relying on cloud infrastructure.

- Content creation: Experiment with text generation, translation, and summarization tasks. The Apple M3 Pro's performance makes it possible to generate high-quality content locally.

- Experimentation: Explore various uses of LLMs, including creative writing, code generation, and scientific research. The M3 Pro provides a solid platform for exploring these possibilities.
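To put these speeds in perspective for a chatbot, this sketch estimates how long one reply takes: prompt processing time plus generation time, using the 18-core M3 Pro results from the table. The 500-token prompt and 300-token reply are hypothetical sizes, and the estimate ignores the slowdown that occurs as the context grows.

```python
# Estimated latency for one chatbot turn on the 18-core M3 Pro (table values).
SPEEDS = {  # format: (prompt processing tok/s, generation tok/s)
    "F16": (357.45, 9.89),
    "Q8_0": (344.66, 17.53),
    "Q4_0": (341.67, 30.74),
}
PROMPT_TOKENS = 500  # hypothetical prompt length
REPLY_TOKENS = 300   # hypothetical reply length


def turn_latency(prompt_tokens, reply_tokens, pp_tps, tg_tps):
    """Return (approximate time to first token, total time) in seconds."""
    first_token = prompt_tokens / pp_tps
    return first_token, first_token + reply_tokens / tg_tps


for fmt, (pp, tg) in SPEEDS.items():
    first, total = turn_latency(PROMPT_TOKENS, REPLY_TOKENS, pp, tg)
    print(f"{fmt}: first token after {first:.1f} s, full reply after {total:.1f} s")
```

Under these assumptions the full reply takes about 11 seconds with Q4_0 versus about 32 seconds with F16, and with streaming output the first words appear after roughly 1.5 seconds in every format.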

Workarounds for Performance Limitations

- Model compression: Further reduce the model's size using techniques like pruning and knowledge distillation. This can improve performance, especially on devices with limited resources.

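- Offloading: If a model is too large for GPU-accessible memory, llama.cpp can run only some layers on the GPU and keep the rest on the CPU (set with the `--n-gpu-layers`/`-ngl` option). This lets larger models run, at the cost of speed. Other frameworks, such as PyTorch or TensorFlow, can also target Apple GPUs if llama.cpp does not suit your workflow.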

- Cloud-based services: For resource-intensive tasks, consider using cloud-based LLM services that provide more processing power and infrastructure.

FAQ

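- What is a token? A token is the basic unit of text an LLM reads and writes. It can be a whole word, part of a word, a punctuation mark, or a special symbol; Llama 2 uses a SentencePiece tokenizer with a 32,000-token vocabulary.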

- What is quantization? Quantization is a technique that reduces the size and computational complexity of LLMs by reducing the precision of their weights. It's similar to compressing a high-resolution image to a lower resolution, trading off some detail for a smaller file size.

- Why is the M3 Pro suitable for running LLMs locally? The M3 Pro offers a good balance of performance and cost. It's powerful enough to run models like Llama2 7B efficiently, while still being relatively affordable.

- What are the limitations of running LLMs locally? Local setups have limited resources, which can impact performance for larger models. You also might face challenges with memory usage, particularly for high-precision models.

Keywords

Llama2 7B, Apple M3 Pro, Local LLMs, Token Generation Speed, Quantization, F16, Q8_0, Q4_0, GPU Cores, Memory Bandwidth, Performance Benchmarks, Use Cases, Workarounds, Model Compression, Offloading.