Can I Run Llama2 7B on Apple M3 Max? Token Generation Speed Benchmarks

Introduction

The world of large language models (LLMs) is buzzing with excitement, and rightfully so! These powerful AI models can generate human-like text, translate languages, write different kinds of creative content, and answer your questions in an informative way. But running these behemoths on your personal computer can be a challenge, especially if you're looking for a smooth, speedy experience.

This article dives deep into the performance of Llama2 7B, a popular and versatile LLM, on the Apple M3 Max, a powerful chip designed for creative professionals and demanding workloads. We'll explore how Llama2 7B performs on this chip, looking at token generation speed benchmarks and comparing results across different quantization levels. We'll also provide insights into practical use cases and workarounds for getting the most out of your device.

Performance Analysis: Token Generation Speed Benchmarks: Apple M3 Max and Llama2 7B

So, you've got your hands on a shiny new Apple M3 Max, and you're eager to unleash the power of Llama2 7B. Let's see how this dynamic duo fares:

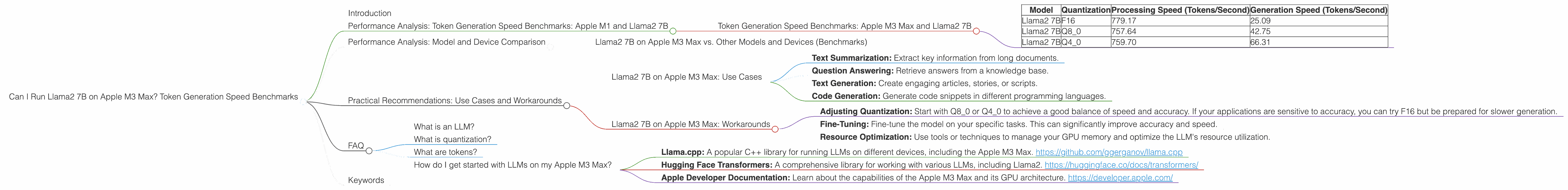

Token Generation Speed Benchmarks: Apple M3 Max and Llama2 7B

| Model | Quantization | Processing Speed (Tokens/Second) | Generation Speed (Tokens/Second) |
|---|---|---|---|
| Llama2 7B | F16 | 779.17 | 25.09 |
| Llama2 7B | Q8_0 | 757.64 | 42.75 |
| Llama2 7B | Q4_0 | 759.70 | 66.31 |

Explanation:

- Processing Speed: This metric measures how quickly the model reads and processes the input text (the prompt) before it starts writing a response.

- Generation Speed: This metric measures how quickly the model generates the output text.
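To make the two metrics concrete, here is a minimal sketch that turns the table's figures into a rough response-time estimate. The function name `estimate_latency` and the example prompt and output lengths are illustrative assumptions, and the estimate ignores model load time and other overhead.

```python
# Benchmark figures from the table above (tokens per second).
BENCHMARKS = {
    "F16": {"processing": 779.17, "generation": 25.09},
    "Q8_0": {"processing": 757.64, "generation": 42.75},
    "Q4_0": {"processing": 759.70, "generation": 66.31},
}


def estimate_latency(quant, prompt_tokens, output_tokens):
    """Return estimated seconds to read the prompt and write the reply."""
    speeds = BENCHMARKS[quant]
    prompt_time = prompt_tokens / speeds["processing"]
    generation_time = output_tokens / speeds["generation"]
    return prompt_time + generation_time


if __name__ == "__main__":
    # Hypothetical workload: a 500-token prompt and a 200-token reply.
    for quant in BENCHMARKS:
        seconds = estimate_latency(quant, prompt_tokens=500, output_tokens=200)
        print(f"{quant}: {seconds:.1f} s")
```

For this hypothetical workload the estimate comes out at roughly 8.6 s for F16, 5.3 s for Q8_0, and 3.7 s for Q4_0, with almost all of the time spent on generation rather than on reading the prompt.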

Key Takeaways:

- Faster Processing, Slower Generation: Processing speed is roughly 11 to 31 times higher than generation speed, depending on the quantization level. This is typical for LLMs: the whole prompt is known up front, so its tokens can be processed in parallel in large batches, whereas output tokens must be generated one at a time, each depending on the previous one, and each step has to read the model's weights from memory.

- Quantization Trade-Offs: As you move from F16 to Q4_0, processing speed stays within about 3% of its F16 value, while generation speed rises from 25.09 to 66.31 tokens per second, about 2.6 times faster. Quantization stores the model's weights with fewer bits, so there is less data to read for every generated token, which makes the model lighter and faster to run but can cost some accuracy. Quantization is like using a smaller ruler; it's less precise, but it's easier to carry around.
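The link between model size and generation speed can be sketched with some back-of-the-envelope arithmetic. The parameter count (about 6.74 billion) and the bits-per-weight figures are assumptions based on how llama.cpp-style F16, Q8_0, and Q4_0 formats are commonly described; the calculation ignores the KV cache and any tensors kept at higher precision, so treat the results as rough estimates only.

```python
PARAMS = 6.74e9  # assumed parameter count for Llama2 7B

# Assumed storage cost in bits per weight. Q8_0 and Q4_0 are taken to
# store one 16-bit scale per block of 32 weights, hence the extra 0.5 bit.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}

# Generation speeds from the benchmark table (tokens per second).
GENERATION_TPS = {"F16": 25.09, "Q8_0": 42.75, "Q4_0": 66.31}


def model_size_gb(quant):
    """Approximate size of the weights in gigabytes."""
    return PARAMS * BITS_PER_WEIGHT[quant] / 8 / 1e9


def implied_bandwidth_gbs(quant):
    """Weight data read per second if every token reads all weights once."""
    return model_size_gb(quant) * GENERATION_TPS[quant]


if __name__ == "__main__":
    for quant in BITS_PER_WEIGHT:
        size = model_size_gb(quant)
        bandwidth = implied_bandwidth_gbs(quant)
        print(f"{quant}: ~{size:.1f} GB weights, ~{bandwidth:.0f} GB/s implied")
```

Under these assumptions the weights shrink from about 13.5 GB (F16) to about 3.8 GB (Q4_0), while the implied read rate stays in the same few-hundred-GB/s range. That pattern is consistent with generation being limited largely by memory traffic rather than by arithmetic.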

Performance Analysis: Model and Device Comparison

To understand how Llama2 7B on the M3 Max stacks up against other LLMs and devices, we need to compare it to some benchmarks.

Llama2 7B on Apple M3 Max vs. Other Models and Devices (Benchmarks)

We don't have enough data on other LLMs and devices for a comprehensive comparison.

Note: Performance comparisons can be tricky, as they depend on factors such as the specific model architecture, dataset, and benchmarking methodology. We've focused on Llama2 7B specifically to understand its performance on the M3 Max.

Practical Recommendations: Use Cases and Workarounds

Llama2 7B on Apple M3 Max: Use Cases

With its impressive processing speed, the M3 Max can handle Llama2 7B relatively well. Here are some potential use cases that leverage the model's strengths:

- Text Summarization: Extract key information from long documents.

- Question Answering: Retrieve answers from a knowledge base.

- Text Generation: Create engaging articles, stories, or scripts.

- Code Generation: Generate code snippets in different programming languages.

Llama2 7B on Apple M3 Max: Workarounds

While the M3 Max is a powerhouse, there are always ways to optimize your experience:

- Adjusting Quantization: Start with Q8_0 or Q4_0 to achieve a good balance of speed and accuracy. If your applications are sensitive to accuracy, you can try F16, but be prepared for slower generation (about 25 tokens per second in the benchmarks above, versus about 43 for Q8_0 and 66 for Q4_0).

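- Fine-Tuning: Fine-tune the model on your specific tasks. This can significantly improve accuracy on those tasks. It does not by itself make token generation faster, but a model that is accurate enough at a lower quantization level lets you run the faster variant.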

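- Resource Optimization: Manage memory carefully. On Apple silicon the GPU shares unified memory with the rest of the system, so choose a quantization level whose weights fit comfortably alongside your other applications, and close memory-hungry programs before running the model.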

FAQ

What is an LLM?

LLMs, or large language models, are a type of artificial intelligence (AI) that can understand and generate human-like text. They are trained on massive datasets of text and can perform various language-related tasks like translation, text summarization, and creative writing.

What is quantization?

Quantization is a technique used to reduce the size and complexity of LLMs without sacrificing too much accuracy. It works by storing the model's weights with fewer bits, for example 8 or 4 bits instead of 16. Think of it as a way to compress the model's knowledge without losing too much information. Imagine you have a detailed map that's too big for your pocket. You can use quantization to create a smaller, more manageable version that still lets you navigate your surroundings.
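As a rough illustration of the idea, the sketch below quantizes one small block of weights to 4-bit signed integers plus a single shared scale, then reconstructs them. This is a simplified scheme written for this article, not the exact Q4_0 or Q8_0 layout used by any particular library, and the example weights are made up.

```python
def quantize_block(weights, bits=4):
    """Symmetric quantization of one block to signed integers plus a scale."""
    max_int = 2 ** (bits - 1) - 1  # 7 for 4-bit, 127 for 8-bit
    largest = max(abs(w) for w in weights)
    scale = largest / max_int if largest else 1.0
    # Round each weight to the nearest integer step and clamp to the range.
    quants = [max(-max_int, min(max_int, round(w / scale))) for w in weights]
    return scale, quants


def dequantize_block(scale, quants):
    """Reconstruct approximate weights from the integers and the scale."""
    return [q * scale for q in quants]


if __name__ == "__main__":
    # Made-up example weights, for illustration only.
    weights = [0.12, -0.53, 0.98, -0.07, 0.31, -0.86, 0.44, 0.02]
    scale, quants = quantize_block(weights, bits=4)
    restored = dequantize_block(scale, quants)
    worst = max(abs(a - b) for a, b in zip(weights, restored))
    print("integers:", quants)
    print(f"scale: {scale:.3f}, worst rounding error: {worst:.3f}")
```

Each weight now needs only 4 bits plus a share of the block's scale, at the cost of a rounding error of at most half a step. Using fewer bits makes the steps coarser, which is where the accuracy loss comes from.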

What are tokens?

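Tokens are the basic units of text that LLMs process. As a first approximation, it's like breaking down a sentence into individual words and punctuation marks. For example, split by words, the sentence "I love playing video games" has 5 tokens: "I", "love", "playing", "video", and "games". In practice, tokenizers work with subword pieces, so a longer or rarer word may be split into several tokens and the exact count depends on the model's tokenizer.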
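The difference between word-level and subword tokens can be sketched in a few lines. Both functions and the tiny vocabulary are hypothetical, written only for illustration; Llama 2 itself uses a trained SentencePiece tokenizer, which works differently from the greedy matching shown here and may split the sentence differently.

```python
import re


def word_tokens(text):
    """Split text into words and punctuation marks (simplified view)."""
    return re.findall(r"\w+|[^\w\s]", text)


def subword_tokens(word, vocab):
    """Greedy longest-match split of one word using a toy vocabulary.

    Falls back to single characters when no vocabulary piece matches.
    """
    pieces, start = [], 0
    while start < len(word):
        for end in range(len(word), start, -1):
            if word[start:end] in vocab or end == start + 1:
                pieces.append(word[start:end])
                start = end
                break
    return pieces


if __name__ == "__main__":
    sentence = "I love playing video games"
    print(word_tokens(sentence))

    toy_vocab = {"play", "ing", "game", "s"}  # hypothetical vocabulary
    print(subword_tokens("playing", toy_vocab))
```

With the word-level split the sentence yields the 5 tokens from the example, while the toy subword split turns "playing" into "play" and "ing". This is why token counts are usually somewhat higher than word counts.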

How do I get started with LLMs on my Apple M3 Max?

There are many resources available online to help you get started with LLMs. Here are a few good starting points:

- Llama.cpp: A popular C++ library for running LLMs on different devices, including the Apple M3 Max. https://github.com/ggerganov/llama.cpp

- Hugging Face Transformers: A comprehensive library for working with various LLMs, including Llama2. https://huggingface.co/docs/transformers/

- Apple Developer Documentation: Learn about the capabilities of the Apple M3 Max and its GPU architecture. https://developer.apple.com/

Keywords

Llama2 7B, Apple M3 Max, token generation speed, quantization, F16, Q8_0, Q4_0, LLM, large language model, performance, benchmarks, GPU, processing speed, generation speed, use cases, workarounds, fine-tuning, resource optimization, AI, artificial intelligence, Hugging Face, Llama.cpp.