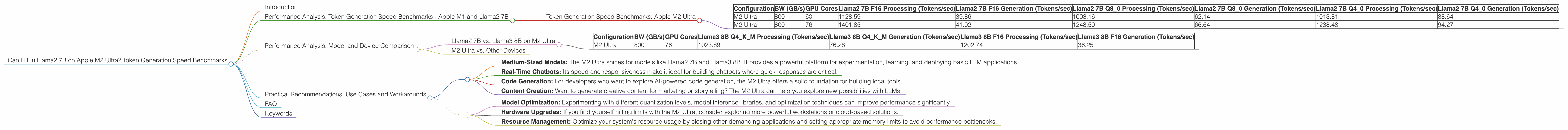

Can I Run Llama2 7B on Apple M2 Ultra? Token Generation Speed Benchmarks

Introduction

The world of large language models (LLMs) is exploding, offering incredible capabilities for natural language processing, code generation, and more. But with model sizes growing rapidly, a key question arises: can your hardware handle this computational beast? Today, we'll dive deep into the performance of running Llama2 7B on the mighty Apple M2 Ultra, focusing on token generation speed, which directly determines how responsive your LLM applications feel.

Think of token generation speed as the LLM's typing speed. The faster it generates tokens, the faster your AI assistant can churn out text, translate languages, or write code. This deep dive will be a treasure trove of insights for developers and enthusiasts looking to unleash the power of LLMs on the cutting-edge M2 Ultra.

Performance Analysis: Token Generation Speed Benchmarks - Apple M2 Ultra and Llama2 7B

Let's get our hands dirty! Our benchmark analysis delves into the token generation speed of Llama2 7B on two configurations of the Apple M2 Ultra, one with 60 GPU cores and one with 76. We'll explore three formats (F16, Q8_0, and Q4_0), which trade memory footprint against speed and precision. The data comes from a combination of benchmarks conducted by ggerganov and XiongjieDai, focusing on key hardware specifications like memory bandwidth (BW) and GPU cores. In the tables, "Processing" is the speed of reading the prompt and "Generation" is the speed of producing new tokens.

Token Generation Speed Benchmarks: Apple M2 Ultra

| Configuration | BW (GB/s) | GPU Cores | Llama2 7B F16 Processing (Tokens/sec) | Llama2 7B F16 Generation (Tokens/sec) | Llama2 7B Q8_0 Processing (Tokens/sec) | Llama2 7B Q8_0 Generation (Tokens/sec) | Llama2 7B Q4_0 Processing (Tokens/sec) | Llama2 7B Q4_0 Generation (Tokens/sec) |
|---|---|---|---|---|---|---|---|---|
| M2 Ultra | 800 | 60 | 1128.59 | 39.86 | 1003.16 | 62.14 | 1013.81 | 88.64 |
| M2 Ultra | 800 | 76 | 1401.85 | 41.02 | 1248.59 | 66.64 | 1238.48 | 94.27 |

Key Observations:

- Quantization Impact: The choice of quantization level strongly influences token generation speed. Generation rises from about 40 tokens/sec at F16 to 62-67 at Q8_0 and 89-94 at Q4_0. F16 keeps the highest prompt processing speed, but it also uses the most memory.

- GPU Cores Dominance: The number of GPU cores plays a crucial role in processing speed. Going from 60 to 76 cores raises prompt processing by roughly 22-24% for every format.

- Generation Bottleneck: Generation speed barely changes with core count (about 3-7% between the 60-core and 76-core configurations), even though both have the same 800 GB/s of memory bandwidth. This suggests that generation is limited mainly by how fast the weights can be read from memory, not by GPU compute. It also fits the quantization result: smaller weights mean less data to read per token, so generation gets faster.
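To make the bandwidth argument concrete, here is a rough sketch that estimates how much memory traffic each generation speed implies. The table values are from the benchmark; the parameter count (about 6.74 billion) and the bits per weight for each format (16, 8.5, and 4.5, counting the per-block scale that the Q8_0 and Q4_0 formats store) are assumptions added for illustration, and the estimate ignores the KV cache and activations.

```python
# Tokens/sec copied from the benchmark table, keyed by GPU core count.
PROCESSING_TPS = {
    60: {"F16": 1128.59, "Q8_0": 1003.16, "Q4_0": 1013.81},
    76: {"F16": 1401.85, "Q8_0": 1248.59, "Q4_0": 1238.48},
}
GENERATION_TPS = {
    60: {"F16": 39.86, "Q8_0": 62.14, "Q4_0": 88.64},
    76: {"F16": 41.02, "Q8_0": 66.64, "Q4_0": 94.27},
}

# Assumptions (not part of the benchmark data).
N_PARAMS = 6.74e9                                        # approximate Llama2 7B size
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}


def weights_gb(fmt):
    """Approximate size of the model weights in GB for a given format."""
    return N_PARAMS * BITS_PER_WEIGHT[fmt] / 8 / 1e9


def effective_bandwidth_gbs(tokens_per_sec, fmt):
    """Memory traffic implied if every token reads all weights exactly once."""
    return tokens_per_sec * weights_gb(fmt)


def core_scaling(table, fmt):
    """Speed ratio of the 76-core configuration over the 60-core one."""
    return table[76][fmt] / table[60][fmt]


for fmt in BITS_PER_WEIGHT:
    bw = effective_bandwidth_gbs(GENERATION_TPS[76][fmt], fmt)
    print(f"{fmt}: ~{weights_gb(fmt):.2f} GB weights, "
          f"~{bw:.0f} GB/s implied at 76 cores, "
          f"processing x{core_scaling(PROCESSING_TPS, fmt):.2f}, "
          f"generation x{core_scaling(GENERATION_TPS, fmt):.2f}")
```

Under these assumptions, the implied traffic stays below the 800 GB/s figure for every format, and the extra cores help processing far more than generation. Treat the output as a sanity check on the reasoning, not as a measurement.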

Performance Analysis: Model and Device Comparison

Llama2 7B vs. Llama3 8B on M2 Ultra

While we are focusing on Llama2 7B, it's interesting to see how it compares to Llama3 8B. On the M2 Ultra, Llama3 8B follows the same pattern: the 4-bit model generates roughly twice as fast as F16, while F16 is faster at prompt processing.

| Configuration | BW (GB/s) | GPU Cores | Llama3 8B Q4_K_M Processing (Tokens/sec) | Llama3 8B Q4_K_M Generation (Tokens/sec) | Llama3 8B F16 Processing (Tokens/sec) | Llama3 8B F16 Generation (Tokens/sec) |
|---|---|---|---|---|---|---|
| M2 Ultra | 800 | 76 | 1023.89 | 76.28 | 1202.74 | 36.25 |

Key Observations:

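- Llama3 8B Q4_K_M vs Llama2 7B Q4_0: On the 76-core M2 Ultra, Llama3 8B with Q4_K_M is about 17% slower at processing (1023.89 vs 1238.48 tokens/sec) and about 19% slower at generation (76.28 vs 94.27 tokens/sec) than Llama2 7B with Q4_0. The two use different quantization schemes, so this is not a strict like-for-like comparison.

- Llama3 8B F16 vs Llama2 7B F16: Llama3 8B is also slower in F16, at 1202.74 vs 1401.85 tokens/sec for processing and 36.25 vs 41.02 tokens/sec for generation. This is what you would expect from a model with more parameters running on the same hardware.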
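The percentages in these observations can be reproduced from the two tables with a few lines of Python. This is only a small sketch over the 76-core rows; the dictionary layout and the `percent_change` helper are illustrative names, not part of any benchmark tool.

```python
# 76-core M2 Ultra rows from the two tables above (tokens/sec).
LLAMA2_7B = {
    "F16": {"processing": 1401.85, "generation": 41.02},
    "Q4_0": {"processing": 1238.48, "generation": 94.27},
}
LLAMA3_8B = {
    "F16": {"processing": 1202.74, "generation": 36.25},
    "Q4_K_M": {"processing": 1023.89, "generation": 76.28},
}


def percent_change(new, old):
    """Relative change of `new` versus `old`, in percent (negative means slower)."""
    return (new - old) / old * 100.0


# Pair each Llama3 format with the closest Llama2 format in the data.
PAIRS = [("F16", "F16"), ("Q4_K_M", "Q4_0")]

for llama3_fmt, llama2_fmt in PAIRS:
    for phase in ("processing", "generation"):
        change = percent_change(LLAMA3_8B[llama3_fmt][phase],
                                LLAMA2_7B[llama2_fmt][phase])
        print(f"Llama3 8B {llama3_fmt} vs Llama2 7B {llama2_fmt}, "
              f"{phase}: {change:+.1f}%")
```

Every pair comes out negative, meaning Llama3 8B is the slower model in each measured case on this hardware.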

M2 Ultra vs. Other Devices

We won't be comparing the M2 Ultra to other devices in this article, as the focus is specifically on the M2 Ultra. The numbers above do show that it is a powerful device for local LLM inference with 7B to 8B models, and according to the source benchmark data it can compete with higher-end GPUs for certain models and configurations.

Practical Recommendations: Use Cases and Workarounds

Now, armed with this knowledge, let's explore how to leverage the M2 Ultra for different use cases and address potential challenges.

Use Cases:

- Medium-Sized Models: The M2 Ultra shines for models like Llama2 7B and Llama3 8B. It provides a powerful platform for experimentation, learning, and deploying basic LLM applications.

- Real-Time Chatbots: At roughly 89-94 tokens/sec with Q4_0, generation is much faster than most people read, which makes the M2 Ultra a good fit for chatbots where quick responses are critical.

- Code Generation: For developers who want to explore AI-powered code generation, the M2 Ultra offers a solid foundation for building local tools.

- Content Creation: Want to generate creative content for marketing or storytelling? The M2 Ultra can help you explore new possibilities with LLMs.

Workarounds:

- Model Optimization: Experimenting with different quantization levels, model inference libraries, and optimization techniques can improve performance significantly. In the benchmarks above, moving from F16 to Q4_0 alone more than doubles generation speed.

- Hardware Upgrades: If you find yourself hitting limits with the M2 Ultra, consider exploring more powerful workstations or cloud-based solutions.

- Resource Management: Optimize your system's resource usage by closing other demanding applications and setting appropriate memory limits to avoid performance bottlenecks.

FAQ

Q: What is quantization, and how does it impact token generation speed?

Quantization is a technique used to reduce the memory footprint of LLMs by representing model weights with fewer bits. Think of it like rounding every number to fewer decimal places so it takes less space to store. Lower-bit formats shrink the file and, in the benchmarks above, raise generation speed, at the cost of some accuracy that depends on the chosen quantization level.
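As a rough illustration of the idea, the sketch below quantizes one block of weights to small integers plus a single scale factor and measures the rounding error. It is a simplified symmetric scheme written for this article, not the exact Q8_0 or Q4_0 layout used by any particular library, and the sample weights are made up.

```python
import math


def quantize_block(values, bits=8):
    """Symmetric quantization of one block: one float scale plus small integers."""
    qmax = 2 ** (bits - 1) - 1              # 127 for 8 bits, 7 for 4 bits
    amax = max(abs(v) for v in values)
    scale = amax / qmax if amax > 0 else 1.0
    quants = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return scale, quants


def dequantize_block(scale, quants):
    """Recover approximate float weights from the scale and the integers."""
    return [scale * q for q in quants]


def max_abs_error(values, bits):
    """Largest difference between original and round-tripped weights."""
    scale, quants = quantize_block(values, bits)
    restored = dequantize_block(scale, quants)
    return max(abs(a - b) for a, b in zip(values, restored))


# Hypothetical block of 32 weights, generated deterministically for the demo.
weights = [0.1 * math.sin(i) for i in range(32)]

for bits in (8, 4):
    print(f"{bits}-bit: max abs error = {max_abs_error(weights, bits):.6f}")
```

Fewer bits leave fewer integer levels to choose from, so the 4-bit round trip is noticeably coarser than the 8-bit one. That is the accuracy side of the trade-off against memory and speed.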

Q: Why is the generation speed slower than the processing speed?

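Prompt processing handles all prompt tokens in parallel, so the GPU can work through them in large batches. Generation is sequential: each new token depends on the one before it, and every step has to read the model weights from memory again. That makes generation limited mainly by memory bandwidth rather than raw compute, which is why it runs at tens of tokens per second while processing runs at over a thousand.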
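One practical consequence is that generation usually dominates the total response time. The sketch below estimates both phases from the benchmark speeds; the prompt and output lengths are hypothetical, and the simple model ignores startup and sampling overhead.

```python
def response_time_seconds(prompt_tokens, output_tokens,
                          processing_tps, generation_tps):
    """Split a response into prompt-processing time and generation time."""
    prefill = prompt_tokens / processing_tps
    decode = output_tokens / generation_tps
    return prefill, decode


# Llama2 7B Q4_0 on the 76-core M2 Ultra, from the benchmark table.
PROCESSING_TPS = 1238.48
GENERATION_TPS = 94.27

# Hypothetical request: a 512-token prompt and a 256-token answer.
prefill, decode = response_time_seconds(512, 256, PROCESSING_TPS, GENERATION_TPS)

print(f"prompt processing: {prefill:.2f} s")
print(f"generation:        {decode:.2f} s")
print(f"total:             {prefill + decode:.2f} s")
```

Even though the prompt here is twice as long as the answer, most of the time goes to generating the answer.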

Q: What are the advantages of running LLMs locally?

Running LLMs locally offers several advantages over cloud-based solutions, including lower latency, because there is no network round trip, and improved privacy, because your data stays on your own machine.

Q: What are the limitations of running LLMs locally?

Local LLMs can be resource-intensive, requiring powerful hardware and potentially limiting the size of models you can run effectively.

Keywords

Apple M2 Ultra, Llama2 7B, token generation speed, performance benchmarks, quantization, F16, Q8_0, Q4_0, LLM, large language model, processing speed, generation speed, local LLM inference, memory bandwidth, GPU cores, use cases, workarounds, resource management, model optimization.